AI agents are rapidly moving from experimental pilots into core business workflows. As a consequence, many organizations are discovering that the hardest part is not building them, but keeping them reliable once they operate in production.

Early prototypes can be created quickly, often by small teams experimenting with prompts and orchestration frameworks. However, when these systems begin interacting with real customers or financial data, weaknesses in their architecture become visible. What looked like a working solution in a demo can turn into a fragile production system that is difficult to scale, audit, or secure.

Gartner projects that over 40% of AI agent projects will be canceled by the end of 2027. The root cause is often not the technology itself but the way the system was designed. Many organizations select development partners based on their ability to build impressive demos or rapid prototypes. In practice, those skills do not necessarily translate into the ability to design reliable production infrastructure.

When this happens, technical debt accumulates quickly. AI agent systems can become difficult to monitor, expensive to operate, and risky to deploy in regulated environments. Because organizations remain responsible for the decisions and outputs produced by their AI systems, these architectural weaknesses often translate directly into operational and legal risk.

Therefore, choosing the right AI development partner becomes less about who can build an agent fastest and more about who can design systems that remain stable and governable as they scale.

What Technical Debt Means in AI Agent Systems

In the context of autonomous AI, technical debt is not limited to messy code. It can accumulate across several layers of the system, often long before teams realize the risk.

Prompt-and-Policy Debt

Many AI agent frameworks rely on high-level abstractions that hide important system behavior. Prompts or tool rules may be distributed across orchestration layers, configuration files, and framework defaults.

This creates brittle system logic in which critical behaviors are not explicitly defined or documented. Teams often discover the problem only after launch, when small changes to prompts or workflows produce unexpected outcomes. By that point, they are often forced into expensive rewrites toward simpler, explicit implementations within weeks of launch.

Evaluation Debt

Large language models (LLMs) are probabilistic systems. The same prompt can produce different outputs depending on context or model updates.

Without a structured evaluation framework, such as golden datasets, adversarial test cases, and tool-call contract testing, organizations cannot reliably detect performance regressions. Each model update, prompt change, or knowledge base modification introduces the risk of silent degradation.
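As an illustration of tool-call contract testing, here is a minimal sketch in Python. The `refund` tool, its argument names, and the `contract_violations` helper are hypothetical; a real system would more likely validate against the schema format its orchestration framework already uses.

```python
# Hypothetical contract for a "refund" tool: required argument names and types.
REFUND_CONTRACT = {"order_id": str, "amount_cents": int}


def contract_violations(tool_call: dict, contract: dict) -> list[str]:
    """Return human-readable violations; an empty list means the call conforms."""
    args = tool_call.get("arguments", {})
    problems = []
    for name, expected_type in contract.items():
        if name not in args:
            problems.append(f"missing argument: {name}")
        elif not isinstance(args[name], expected_type):
            problems.append(f"wrong type for {name}: expected {expected_type.__name__}")
    for name in args:
        if name not in contract:
            problems.append(f"unexpected argument: {name}")
    return problems


# Two calls an agent might propose: one conforming, one that drifted from the contract.
good_call = {"tool": "refund", "arguments": {"order_id": "A-17", "amount_cents": 1250}}
bad_call = {"tool": "refund", "arguments": {"order_id": "A-17", "amount": "12.50"}}

print(contract_violations(good_call, REFUND_CONTRACT))
print(contract_violations(bad_call, REFUND_CONTRACT))
```

Running a check like this against recorded agent outputs after every prompt or model change is one way to surface a silent regression before it reaches a real tool.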

Over time, organizations accumulate evaluation debt as systems continue operating, but no one can verify whether quality is improving or slowly deteriorating.

Security and Compliance Debt

AI agents often interact with external tools and internal databases. Failing to architect proper logging, data retention policies, and boundary controls transforms an agent into a significant legal liability.

Security researchers have already demonstrated that agentic architectures can enable indirect prompt injection and unauthorized access to tools. In some cases, malicious inputs embedded in external content can trigger unintended actions or data exposure. Vulnerabilities such as CVE-2025-32711 illustrate how untrusted inputs can be leveraged to extract sensitive information from enterprise AI assistants.

Without proper safeguards, these architectural weaknesses can quickly evolve from engineering problems into compliance and legal risks.

For organizations building agents that operate inside critical business workflows, the question is no longer whether to build custom systems, but whether the development partner understands how to design them for long-term reliability and control.

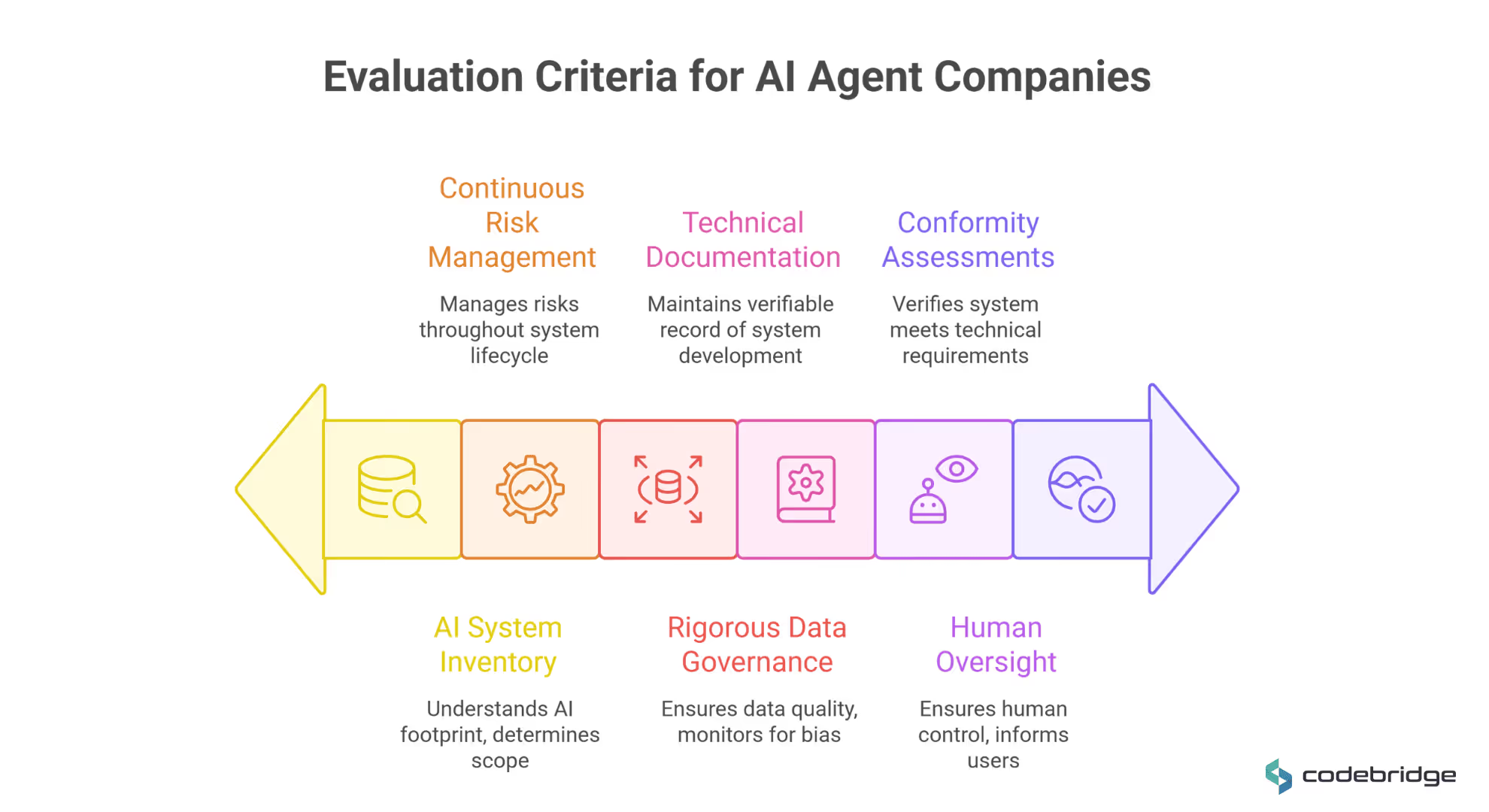

How to Evaluate AI Agent Development Companies

When choosing a development partner for an AI agent system, organizations should not treat the selection as a typical software vendor evaluation. Many vendors can build impressive demonstrations. However, far fewer can design systems that remain reliable and maintainable once they interact with real users and enterprise infrastructure.

Because AI agents operate across multiple tools and workflows and rely on probabilistic models, early architectural decisions have long-term consequences.

The following criteria define the profile of a high-maturity development partner capable of building scalable, debt-free agentic infrastructure.

1. Domain Expertise Over General AI Skill

Strong AI engineering skills alone are rarely sufficient. Effective AI agents must operate within the operational realities of a specific industry.

A developer may build a functional diagnostic assistant, but if they lack HealthTech experience, they may fail to implement the IEC 62304 software lifecycle requirements or HIPAA-mandated data de-identification necessary for clinical deployment.

When this happens, the consequences extend beyond a technical defect. Hospitals and healthcare providers may be forced to suspend the system’s use immediately. Regulators can require formal investigations into whether protected health information was exposed or processed improperly, and organizations may face significant financial penalties or legal liability if patient data protections were violated.

The Domain Maturity Checklist:

To evaluate if a development company can deliver an agent that is production-ready, executives should verify the following:

- Vertical-Specific Case Studies: Does the partner have a deep history in your sector (e.g., FinTech, HealthTech, or LegalTech) with measurable outcomes such as ROI or turnaround time (TAT)?

- Regulatory Literacy: Can they describe how their architecture proactively integrates frameworks such as the EU AI Act, GDPR, HIPAA, or SOX rather than treating them as post-hoc "paperwork"?

- Failure Mode Identification: Can they identify domain-specific risks, such as RAG poisoning in legal datasets, biased outcomes in automated hiring, or confident hallucinations in clinical environments?

- Workflow Integration: Do they understand the plumbing of your industry, such as integrating agents with existing enterprise systems like PACS/VNA in healthcare or specific CRMs and ERPs in sales and logistics?

Choosing a partner who values this architectural discipline ensures that your AI deployment acts as a sustainable competitive asset rather than an unmanaged liability.

2. Proven Outcomes vs. Theoretical Prototypes

AI vendors often showcase conversational demos or proof-of-concept interfaces. While these demonstrations can be visually impressive, they rarely reflect the complexity of real production environments.

Companies should look at case studies that highlight measurable business outcomes, such as ROI, turnaround time (TAT) reductions, or labor savings, rather than relying on abstract promises of innovation.

In the context of AI agents, success is not measured by the ability to generate text, but by the system’s ability to execute tasks reliably within real business processes.

To evaluate real-world outcomes, businesses can take the following actions:

- Long-Term Vitality: Ask if the cited solutions are still running in production and generating value months after the initial launch.

- The Post-Mortem Inquiry: Request a breakdown of what didn't work the first time. A partner who claims a flawless first deployment likely lacks the experience to handle the non-deterministic nature of AI.

- Quantifiable ROI Metrics: Demand specific data on efficiency gains (e.g., hours saved, reduced turnaround time) or revenue lift (e.g., increased qualified meetings).

- Liability Stress-Testing: Ask the vendor to walk through how their existing designs would have prevented high-profile liability scenarios, such as the Air Canada chatbot misrepresentation or the New York City small-business bot inaccuracies.

- Operational Integration: Ensure the outcome was a system embedded into an existing workflow (e.g., CRM or PACS integration) rather than a standalone experiment that created a new data silo.

Practical Examples of Proven Outcomes:

- HealthTech (RadFlow AI): The company engineered a workspace that integrated computer vision directly into existing PACS. Because the team understood the cognitive load and interruption science specific to radiologists, they built a "Human-in-the-Loop" platform that reduced CT reading time by 38% while adhering to FDA Software as a Medical Device (SaMD) Class II pathways.

- HR & Recruitment (RecruitAI): For an enterprise with 1,000+ employees, the challenge was automating technical validation. The partner built a system grounded in the client’s internal hiring standards via RAG, which saved 200–300 engineering hours monthly by detecting AI hallucination patterns in applicant submissions.

3. Architecture Built for Change

Technical debt often originates in the choice of orchestration frameworks. High-quality partners avoid "black box" platforms that force business processes into rigid constraints. Instead, they use modern multi-agent orchestration layers like LangGraph, AutoGen, or CrewAI so that components can be updated without rewriting the entire system.

The architecture must separate what the agent is allowed to do from the underlying models and tools to ensure the system remains portable and easy to update as model costs and capabilities shift.

Partners that design modular architectures also ensure that organizations are not permanently tied to a single model provider or orchestration framework. This portability becomes critical as new models emerge and operational costs shift over time.

The Architectural Rigor Checklist:

- Does the partner use multi-step reasoning frameworks to ensure the agent can plan and self-correct?

- Is the system containerized to support scalable, cloud-native, or on-premise deployment?

- Is there a clear abstraction layer that allows switching between LLM providers (e.g., OpenAI to Anthropic) without rewriting the core logic?
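The abstraction layer in the last checklist item can be sketched as follows. This is a minimal illustration, not a real vendor integration: `ChatModel`, the two stub providers, and `summarize_ticket` are hypothetical names, and the stubs return canned strings where a real adapter would call a provider SDK.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only interface the core logic is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class StubProviderA:
    """Stand-in for an adapter around one vendor's SDK (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class StubProviderB:
    """Stand-in for an adapter around a second vendor's SDK (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


def summarize_ticket(model: ChatModel, ticket_text: str) -> str:
    """Core business logic: knows nothing about which vendor is behind `model`."""
    return model.complete(f"Summarize this support ticket: {ticket_text}")


# Switching providers is a configuration change, not a rewrite.
PROVIDERS = {"a": StubProviderA, "b": StubProviderB}


def build_model(name: str) -> ChatModel:
    return PROVIDERS[name]()


print(summarize_ticket(build_model("a"), "Login fails after password reset"))
print(summarize_ticket(build_model("b"), "Login fails after password reset"))
```

A useful question for a vendor is where the equivalent of `build_model` lives in their design, and how many files would change if the provider behind it were swapped.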

4. Security and Compliance as a First-Class Concern

When AI agents interact with enterprise systems, they expand the organization’s potential attack surface. A flawed agent design can convert a simple text vulnerability into unauthorized tool access or data leakage across the entire enterprise.

That’s why high-maturity development partners must move beyond generic security slogans and implement a security-by-design posture that proactively mitigates these novel risks.

Practical Examples of Security-First Implementation

- Preventing Chained Vulnerabilities: In a multi-agent system, a security-aware partner designs strict authorization boundaries so that a lower-privileged agent cannot induce a higher-privileged agent to perform sensitive actions. The attack these boundaries prevent is known as second-order prompt injection.

- Data Sovereignty for Regulated Industries: Mature partners assist executives in navigating complex deployment decisions, such as choosing between public APIs, VPCs, or on-premise implementations to protect intellectual property and ensure that proprietary data is never used to train public models.
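One way to express the authorization boundary from the first example is to authorize every request against the least-privileged agent it has passed through, rather than the agent that finally executes it. The sketch below assumes a simple three-level role model; the role names, tool names, and `call_chain` field are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical privilege levels and the minimum level each tool requires.
PRIVILEGE = {"reader": 1, "operator": 2, "admin": 3}
TOOL_MIN_PRIVILEGE = {"search_docs": 1, "update_record": 2, "delete_account": 3}


@dataclass(frozen=True)
class Request:
    tool: str
    # Roles of every agent the request passed through, originator first.
    call_chain: tuple[str, ...]


def is_authorized(request: Request) -> bool:
    """Authorize against the weakest role in the chain, not the last one."""
    effective = min(PRIVILEGE[role] for role in request.call_chain)
    return effective >= TOOL_MIN_PRIVILEGE[request.tool]


# A reader agent asks an admin agent to delete an account: denied,
# because the request inherits the reader's privilege level.
print(is_authorized(Request("delete_account", ("reader", "admin"))))
# The same action originating from the admin agent itself: allowed.
print(is_authorized(Request("delete_account", ("admin",))))
```

The design choice here is that privilege can only narrow as a request is delegated, so a compromised low-privilege agent gains nothing by routing through a more powerful one.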

To evaluate a partner's security maturity, companies should verify the following capabilities:

- Framework Adherence: Can the partner demonstrate strict compliance with global and industry-specific standards such as SOC 2, HIPAA, GDPR, and the emerging EU AI Act?

- Threat Modeling for LLMs: Does their process include specific mitigations for LLM-specific vulnerabilities, such as indirect prompt injection and insecure output handling?

- Bounded Autonomy: Can they provide a written "bounded autonomy policy" that explicitly defines what the agent is strictly prohibited from doing under any circumstances?

- Immutable Audit Trails: Will the system generate detailed logs of every action, tool call, and decision that are stored securely and designed not to leak PII?
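The last two checklist items can be made concrete with a small sketch: a deny list that is checked before any action runs, and an audit log in which each entry carries a hash of the previous one. The action names and the `AuditLog` class are hypothetical. Hash chaining makes tampering detectable rather than impossible, so a production system would still need write-protected storage. Only action names are logged here, not arguments, to avoid capturing PII.

```python
import hashlib
import json

# Hypothetical bounded autonomy policy: actions the agent may never take.
PROHIBITED_ACTIONS = frozenset({"delete_database", "wire_transfer", "change_permissions"})


class AuditLog:
    """Append-only log where each entry includes the hash of the previous entry."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, action: str, allowed: bool) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "genesis"
        record = {"action": action, "allowed": allowed, "prev": prev_hash}
        digest = hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()
        record["hash"] = digest
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = "genesis"
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            expected = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def attempt(action: str, log: AuditLog) -> bool:
    """Check the policy first, and record the decision whether or not it was allowed."""
    allowed = action not in PROHIBITED_ACTIONS
    log.append(action, allowed)
    return allowed


log = AuditLog()
print(attempt("send_email", log))      # permitted by the policy
print(attempt("wire_transfer", log))   # blocked by the policy
print(log.verify())                    # chain is intact
```

Denied attempts are logged as well as permitted ones, since repeated blocked actions are often the first visible sign of a prompt injection attempt.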

5. A Partner Mindset, Not a Project Mindset

In traditional software development, deployment is often viewed as the finish line. But for autonomous AI agents, deployment is only the starting line. AI agent projects are uniquely risky because they are non-deterministic. This means that the same prompt can return different outputs, and performance can degrade silently over time.

A development company with a project mindset delivers a fixed scope and exits, leaving the client to manage a system that will inevitably face performance decay as data sources, user behaviors, and model capabilities evolve.

Conversely, a partner mindset acknowledges that AI is a continuous lifecycle requiring ongoing refinement, re-tuning, and scaling.

Without a long-term plan, organizations risk degraded performance every time a model version changes.

Effective maintenance typically costs between 15% and 30% of the initial development investment annually. This budget covers model retraining (often needed every 3–6 months), security patches, and adapting to shifting regulatory requirements like the EU AI Act.

Practical Examples of the Partner Mindset in Execution:

- Handling Model Drift: A sales operations agent that effectively qualifies leads today may lose its edge in six months as market slang or competitor tactics change. A partner mindset involves setting up continuous feedback loops where production failures are promoted to versioned test datasets with a single click, ensuring the system learns from its mistakes.

- Infrastructure Optimization: As an agent moves from 50 internal users to 5,000 end-users, token costs can skyrocket. A long-term partner actively manages this Total Cost of Ownership (TCO) by implementing caching layers, token caps, and routing routine tasks to smaller, cost-effective models while reserving premium models for complex reasoning.

- Strategic Adaptability: When a major model provider (like OpenAI or Anthropic) releases a significant update, a partner-led architecture allows for "swap the model" drills. This ensures the agent remains portable and is not locked into a single provider’s roadmap or pricing structure.
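The cost controls described under Infrastructure Optimization (caching, token caps, and routing routine work to smaller models) can be sketched in a few lines. Everything named here is an assumption for illustration: the model labels, the cap of 2,000 tokens, the list of routine task types, and the whitespace-based token estimate, which a real system would replace with the provider's tokenizer.

```python
MAX_PROMPT_TOKENS = 2000  # hypothetical per-request cap
ROUTINE_TASKS = {"classify", "extract", "summarize"}  # hypothetical task taxonomy

_cache: dict[tuple[str, str], str] = {}


def estimate_tokens(text: str) -> int:
    """Crude whitespace estimate; a real system would use the provider's tokenizer."""
    return len(text.split())


def choose_model(task_type: str) -> str:
    """Route routine tasks to a small model and everything else to a premium one."""
    return "small-model" if task_type in ROUTINE_TASKS else "premium-model"


def handle(task_type: str, prompt: str, call_model) -> str:
    """Apply the token cap, then serve from cache or call the routed model once."""
    if estimate_tokens(prompt) > MAX_PROMPT_TOKENS:
        raise ValueError("prompt exceeds token cap")
    key = (task_type, prompt)
    if key not in _cache:
        _cache[key] = call_model(choose_model(task_type), prompt)
    return _cache[key]


# Usage with a fake model call that records which model was billed.
billed = []


def fake_call(model: str, prompt: str) -> str:
    billed.append(model)
    return f"{model} answered"


handle("classify", "Is this ticket urgent?", fake_call)
handle("classify", "Is this ticket urgent?", fake_call)  # served from cache
handle("plan", "Draft a migration plan", fake_call)
print(billed)
```

Because `call_model` is passed in, the same routing logic works with any provider, which keeps cost controls separate from vendor choice.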

The Partner Mindset Checklist:

- Explicit Lifecycle Plan: Do they have a structured approach for model re-tuning and data refresh as business rules evolve?

- Incident Response: Can they answer: “What is the protocol at 3:00 AM when a tool times out and critical business users are blocked?”

- Performance Benchmarking: Is there a process for time-travel session replays to pinpoint where a reasoning path diverged from the intended goal?

- Regression Suite Ownership: Will they deliver a repeatable test harness (golden sets, adversarial tests) so you can validate future model updates without their intervention?

6. Evaluation and Reliability Frameworks

Because large language models produce probabilistic outputs, manual review of responses is not sufficient to ensure reliability. Production-grade systems rely on structured evaluation pipelines that test system behavior across different scenarios.

These frameworks measure factors such as factual accuracy, task completion rates, and consistency across different inputs. In higher-risk environments, human oversight mechanisms are often integrated into the system.

The Quantitative Evaluation Stack

Decision-makers should look for partners who use semantic scoring metrics to quantify performance across three primary dimensions:

- Retrieval Quality: This includes Context Precision (verifying if retrieved documents are relevant) and Context Recall (ensuring the agent retrieved all information needed to answer correctly).

- Generation Integrity: This measures Faithfulness (ensuring the response is grounded in provided data without hallucination) and Answer Relevancy (verifying the response actually addresses the user's intent).

- Agentic Execution: This tracks Task Completion (whether the agent successfully achieved the goal) and Step Efficiency (ensuring the agent didn't take circuitous paths or enter infinite loops).
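To make these metrics less abstract, here is a simplified, set-based sketch of three of them. It assumes each test case has human-labeled relevant document IDs and a known minimal step count, which is an assumption of this example. Evaluation frameworks that use semantic scoring typically judge relevance with a model rather than exact ID matching, so treat this as the arithmetic behind the idea rather than a production scorer.

```python
def context_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Share of retrieved documents that are actually relevant."""
    if not retrieved:
        return 0.0
    return sum(doc in relevant for doc in retrieved) / len(retrieved)


def context_recall(retrieved: list[str], relevant: set[str]) -> float:
    """Share of the relevant documents that the retriever found."""
    if not relevant:
        return 1.0
    return len(set(retrieved) & relevant) / len(relevant)


def step_efficiency(steps_taken: int, minimal_steps: int) -> float:
    """1.0 when the agent used the minimal path; lower as it wanders or loops."""
    if steps_taken <= 0:
        return 0.0
    return min(1.0, minimal_steps / steps_taken)


# One labeled test case: 4 documents retrieved, 3 documents actually needed.
retrieved = ["d1", "d2", "d3", "d4"]
relevant = {"d1", "d3", "d5"}

print(context_precision(retrieved, relevant))  # 2 of 4 retrieved are relevant
print(context_recall(retrieved, relevant))     # 2 of 3 relevant were found
print(step_efficiency(steps_taken=8, minimal_steps=4))
```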

Human-in-the-Loop (HITL) as a Reliability Requirement

For high-stakes enterprise applications, full agent autonomy is often a liability rather than an asset. A production-ready partner will design explicit Human-in-the-Loop (HITL) patterns to manage risk and ensure that AI acts as an augmentation, not an unmanaged replacement.

Executives should expect their partner to define clear autonomy boundaries and escalation protocols. Common patterns include:

- Human-in-the-Loop (HITL): The agent pauses at predefined checkpoints to request human approval before taking sensitive actions, such as processing a payment or deleting a file.

- Human-on-the-Loop (HOTL): The agent operates autonomously, but a human monitors a supervisor dashboard and intervenes asynchronously for exceptions or refinements.

- Confidence-Based Routing: The system automatically executes tasks only when the model’s confidence score exceeds a high threshold (e.g., 90%).
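A minimal sketch of how the first and third patterns can combine: sensitive actions always pause for approval, and everything else executes only above a confidence threshold. The action names and the 0.90 threshold mirror the examples above. How the confidence score is produced is left open, since models do not reliably report calibrated confidence on their own; that part is an assumption of this example.

```python
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    ASK_HUMAN = "ask_human"


# Hypothetical policy values taken from the examples in the text.
SENSITIVE_ACTIONS = {"process_payment", "delete_file"}
CONFIDENCE_THRESHOLD = 0.90


def route(action: str, confidence: float) -> Decision:
    """Decide whether the agent may act on its own or must escalate."""
    if action in SENSITIVE_ACTIONS:
        # Human-in-the-loop checkpoint: approval is required regardless of confidence.
        return Decision.ASK_HUMAN
    if confidence > CONFIDENCE_THRESHOLD:
        return Decision.EXECUTE
    # Low confidence: escalate rather than guess.
    return Decision.ASK_HUMAN


print(route("tag_ticket", 0.97))
print(route("tag_ticket", 0.60))
print(route("process_payment", 0.99))
```

The order of the checks matters: placing the sensitive-action check first means a highly confident model still cannot bypass human approval for payments or deletions.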

A partner who understands the execution realities of production AI will be able to provide the following verification artifacts before you sign a contract:

- Golden Test Sets: Does the partner have a curated dataset of representative inputs and "gold standard" outputs to measure performance across model updates?

- Adversarial Testing: Does the partner's evaluation plan include "red teaming" for prompt injections and edge cases designed to break the system?

- Regression Gates: Is there an automated pipeline that blocks a new prompt or model version from being deployed if its quality score drops below a set threshold?

- Traceability Logs: Will the system record not just the final output, but the "internal monologue" — the reasoning steps, tool calls, and retrieved context that led to the decision?
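A regression gate built on a golden test set can be as small as the sketch below. The golden examples, the 0.9 threshold, and exact-match scoring are all simplifying assumptions; real evaluation suites usually score with semantic or rubric-based comparisons, but the gating logic is the same.

```python
# Hypothetical golden set: representative inputs paired with expected outputs.
GOLDEN_SET = [
    ("2+2", "4"),
    ("capital of France", "Paris"),
    ("3*3", "9"),
]
QUALITY_THRESHOLD = 0.9  # hypothetical minimum score required to deploy


def quality_score(predict, golden_set) -> float:
    """Fraction of golden examples the candidate answers exactly right."""
    correct = sum(predict(question).strip() == expected for question, expected in golden_set)
    return correct / len(golden_set)


def passes_gate(predict, golden_set=GOLDEN_SET, threshold=QUALITY_THRESHOLD) -> bool:
    """True if the candidate prompt or model version is allowed to ship."""
    return quality_score(predict, golden_set) >= threshold


# Two stand-in candidates: one that matches the golden answers, one that regressed.
answers = dict(GOLDEN_SET)


def good_candidate(question: str) -> str:
    return answers[question]


def regressed_candidate(question: str) -> str:
    return "4"  # answers every question the same way


print(passes_gate(good_candidate))
print(passes_gate(regressed_candidate))
```

In a deployment pipeline, the result of `passes_gate` would typically decide the exit code of a CI step, so a failing score blocks the release automatically instead of relying on someone to read a report.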

The Strategic Advantage of Choosing the Right Partner

Transitioning to agentic AI is a fundamental business change. With this transformation, a high-maturity partner provides two primary strategic advantages: the protection of capital investment and the optimization of the system's total cost of ownership.

Protecting Your Investment

The market is currently saturated with agent washing, where vendors sell prototypes built on simple prompts rather than rigorous systems engineering. Partnering with an engineering-focused firm prevents budgets from being wasted on generic, off-the-shelf wrappers that lack the depth to handle enterprise complexity.

A sophisticated partner ensures that your AI deployment acts as a durable business asset by:

- Building for Scale: Treating AI agents as production-grade enterprise infrastructure rather than experimental features.

- Ensuring IP Ownership: Clarifying that the client owns the custom code, prompts, policies, and evaluation assets, allowing for vendor independence.

- Mitigating Legal Risk: Designing for "bounded autonomy" to prevent liability scenarios where an agent provides wrong advice or performs unauthorized actions.

Prototype vs Production AI Systems

Optimizing Total Cost of Ownership (TCO)

The initial cost of building an AI agent is often only a small part of the total investment. Without architects who understand the operational realities of production AI, organizations frequently encounter escalating costs after deployment.

A capable partner anticipates these hidden cost drivers, from infrastructure usage to monitoring and model updates, and designs systems that remain efficient over time.

Key TCO Optimization Factors Include:

- Token and API Management: Enterprise usage can easily burn millions of tokens per month; inefficient prompts or a lack of memory caching can push API fees well beyond budget. A mature partner implements caching layers and token caps to control this spend.

- Cloud Infrastructure: Hosting, databases, and vector stores scale with usage; a partner utilizing cloud-native architectures and reserved instances can reduce build and operational spend.

- Reducing Silent Degradation: By delivering a repeatable test harness (golden sets and regression gates), a partner prevents the expensive outages that occur when models or retrieval indexes are updated without proper validation.
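A back-of-the-envelope calculation shows why caching matters for token spend. The request volume, tokens per request, and price per million tokens below are illustrative assumptions, not quotes from any provider, and the model treats cache hits as free, which ignores the cost of running the cache itself.

```python
def monthly_token_cost(
    requests_per_month: int,
    tokens_per_request: int,
    price_per_million_tokens: float,
    cache_hit_rate: float = 0.0,
) -> float:
    """Estimated monthly API spend, treating cached responses as costing nothing."""
    billable_requests = requests_per_month * (1 - cache_hit_rate)
    billable_tokens = billable_requests * tokens_per_request
    return billable_tokens / 1_000_000 * price_per_million_tokens


# Illustrative inputs: 500,000 requests, 3,000 tokens each, 5.00 per million tokens.
without_cache = monthly_token_cost(500_000, 3_000, 5.0)
with_cache = monthly_token_cost(500_000, 3_000, 5.0, cache_hit_rate=0.4)

print(f"without cache: {without_cache:,.0f}")
print(f"with 40% cache hits: {with_cache:,.0f}")
```

Under these assumed inputs, a 40% cache hit rate cuts the estimated bill from roughly 7,500 to roughly 4,500 per month. Asking a vendor to walk through the same arithmetic with your own expected volumes is a quick test of whether they have planned for operating costs.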

Conclusion

The emergence of AI agents requires a shift in how organizations think about system oversight. Traditional infrastructure monitoring is no longer enough. Now, teams must also monitor how agents reason, make decisions, and interact with enterprise systems.

Avoiding technical debt in these environments depends largely on architectural discipline. Organizations need development partners who prioritize clear system design and strong data governance, rather than rapid prototypes or short-term experimentation.

Ultimately, selecting a development partner for AI agents is less about outsourcing a project and more about choosing how the system will be engineered and maintained over time. Organizations that approach this decision with architectural rigor significantly reduce the risk of accumulating technical debt as their AI capabilities expand.
