Artificial intelligence is already shaping how organizations operate and invest. In 2025, 88% of organizations reported using AI in at least one business function, and 92% of companies said they expect to increase their AI investments over the next three years.

Yet in regulated industries, such as healthcare, financial services, and education, the path from pilot to production is rarely straightforward. Many organizations successfully demonstrate AI in controlled environments but struggle to move those systems into real operations. Projects often stall, costs increase, and leadership begins to question the initiative. The real obstacles usually appear in engineering design, governance structures, and regulatory readiness.

Building AI systems for regulated environments requires a different mindset from building AI products in less constrained sectors. Compliance cannot be added after the system is built. It has to shape the system from the beginning. Architecture, data pipelines, model selection, monitoring, and human oversight must all support auditability and regulatory review. When these considerations are treated as an afterthought, projects often fail long before reaching production.

Addressing these challenges requires treating AI System Engineering as a distinct discipline. It is separate from AI research, data science, or even traditional software engineering. While the AI Researcher focuses on improving models and the Data Scientist on extracting insights from data, the AI Systems Engineer focuses on scalable model deployment and orchestration — how models operate reliably inside real organizations.

In regulated industries, this distinction directly affects whether AI systems can reach production. This article outlines how decision-makers can design, deploy, and maintain AI systems that remain auditable, explainable, and resilient in production.

The Regulatory Landscape: What’s Changed and Why It Matters Now

The regulatory environment around AI is evolving quickly. What began as voluntary guidance is now becoming enforceable law. Regulators are no longer satisfied with compliance at the moment a system launches. Increasingly, they expect organizations to demonstrate that AI systems remain safe, controlled, and accountable throughout their entire lifecycle.

In healthcare and life sciences, oversight is becoming more structured. The U.S. Food and Drug Administration (FDA) is expanding its framework for Software as a Medical Device (SaMD) to address AI systems that evolve after deployment. A key concept is the Predetermined Change Control Plan (PCCP), which allows approved systems to adapt over time — provided those changes are documented and controlled in advance. At the same time, HIPAA continues to govern how patient data can be used in AI systems, making data handling and access controls a central engineering concern.

In financial services, regulators are applying established model-governance standards directly to AI. The most influential framework remains SR 11-7 on Model Risk Management, used by U.S. banking regulators to supervise models used in credit scoring, fraud detection, and risk analysis. Under this framework, organizations must validate models, monitor their behavior over time, and maintain clear documentation explaining how decisions are produced. In addition, lending systems must comply with the Equal Credit Opportunity Act (ECOA), which requires that automated credit decisions remain explainable and free from discriminatory outcomes.

For education and EdTech platforms, regulation focuses primarily on data protection and accountability. In the United States, FERPA (Family Educational Rights and Privacy Act) governs how student data can be collected, stored, and shared. As AI systems begin generating recommendations about student performance or educational pathways, institutions must ensure that automated outputs do not compromise student privacy or institutional responsibility.

Across all sectors, the EU AI Act is becoming a global reference point for AI governance. The Act entered into force in August 2024, with its obligations phasing in over the following years, and it introduces a risk-based framework that classifies AI systems by their potential impact. Systems used in areas such as medical diagnostics, credit scoring, or educational evaluation fall into the high-risk category and must meet strict requirements for data governance, documentation, transparency, and human oversight. For the most serious violations, non-compliance can result in penalties of up to €35 million or 7% of global annual turnover.

These regulatory developments show that AI systems are increasingly treated like critical infrastructure, expected to operate with accountability and continuous oversight.

Why Traditional Approaches Fail in AI System Engineering

Most enterprises attempt to solve these systems engineering challenges with either pure data science thinking or traditional software development practices. Experience across regulated industries shows that neither approach alone is sufficient.

1. The Data Science Gap in Generative AI Engineering

Most machine learning teams measure success through performance metrics like accuracy, F1 scores, or AUC. However, in a regulated industry, a model that is highly accurate but cannot explain its reasoning or demonstrate fairness is a liability.

A 2025 McKinsey survey found that even among organizations actively deploying generative AI, most had not implemented basic risk management practices for common failure modes like bias or inaccuracy. The Stanford AI Index points to the same imbalance: investment in AI capabilities is growing rapidly, but governance and oversight systems are not keeping pace.

2. Deterministic Software Thinking vs LLM Engineering

Traditional software engineering assumes predictable systems. Given the same input, a program should produce the same output every time. But AI systems work differently. They are probabilistic. Their behavior depends on data distributions, model training, and changes in the environment over time. A model that works well today may behave differently months later because the underlying data has shifted.

Standard software practices — CI/CD pipelines and QA reviews — were not designed to detect these slow shifts. Research by Google’s D. Sculley and colleagues highlights that in mature machine learning systems, the model itself is often a small part of the total system. Most complexity lives in the surrounding infrastructure, such as data pipelines, feature stores, monitoring systems, and feedback loops. When those components are poorly managed, technical debt accumulates quickly.

3. The Organizational Gap: Compliance as an Afterthought

Another common failure point is organizational. In many companies, compliance and legal teams become involved only at the end of the development process, just before launch.

The biggest barriers to AI adoption are often organizational rather than technical. In regulated industries, late involvement from compliance teams often means the system has to be redesigned to meet regulatory requirements, which can delay projects by months or halt them entirely.

4. The Vendor Gap: The Shared Responsibility Blind Spot

The rise of third-party AI APIs has created a dangerous assumption: that by using a reputable AI vendor, organizations can transfer most of the regulatory responsibility.

In practice, that assumption is incorrect. The EU AI Act makes a clear distinction between providers (those who build AI systems) and deployers (those who use them). Companies deploying AI remain responsible for risk management and human oversight, even when the model itself comes from a vendor.

Security researchers are raising similar concerns. For instance, the OWASP Top 10 for LLM Applications identifies supply-chain vulnerabilities as one of the major risks in AI systems. If organizations rely on external models without proper auditing or safeguards, they introduce risks that service-level agreements alone cannot eliminate.

These gaps explain why many enterprise AI projects stall after promising early results. Building reliable AI in regulated industries requires something different — a systems engineering approach designed specifically for probabilistic systems operating under regulatory scrutiny.

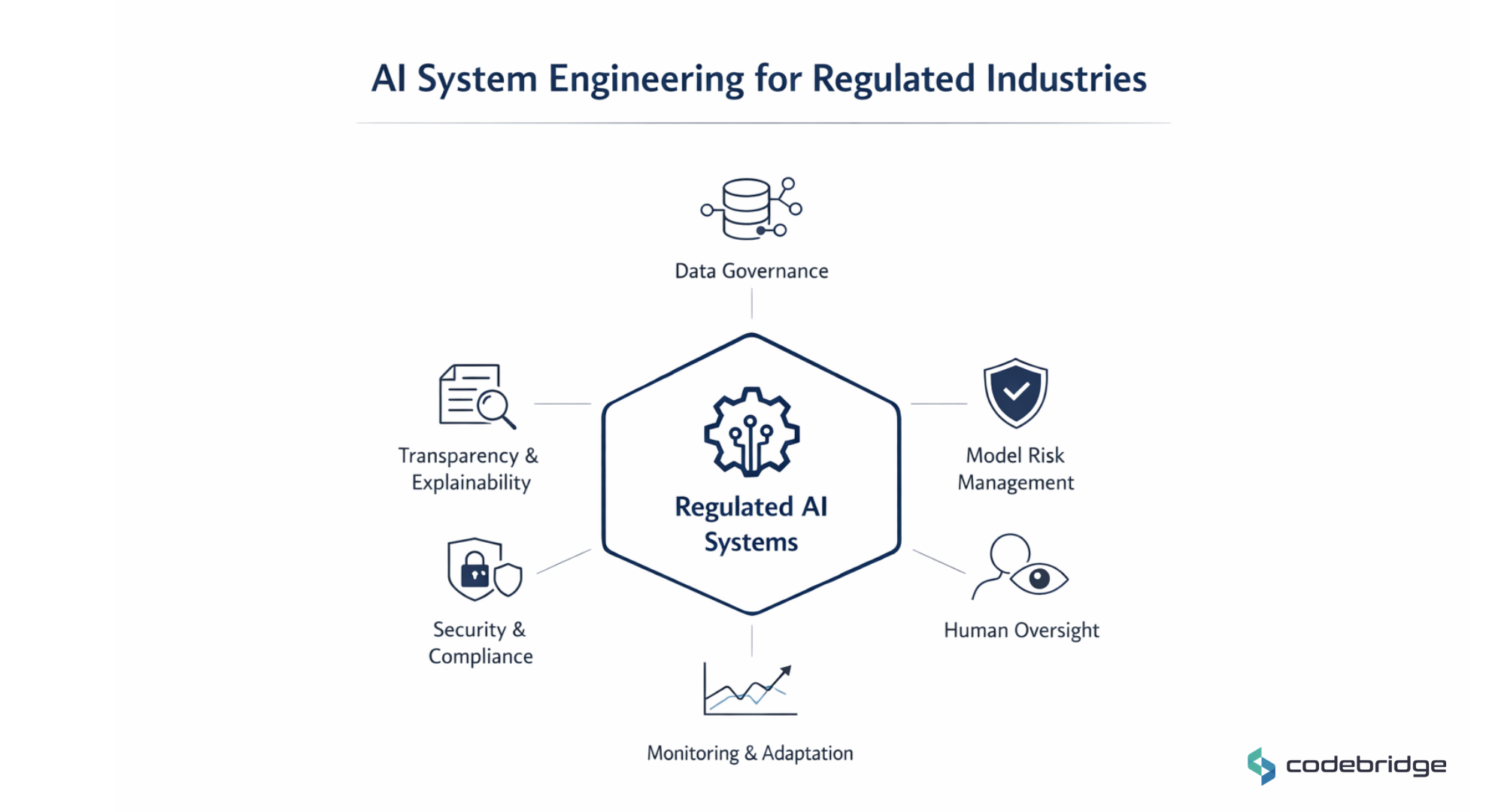

The Core Pillars of AI System Engineering for Regulated Industries

Deploying AI in regulated environments requires a system designed for accountability and long-term reliability. The organizations that succeed treat AI not as an isolated model, but as an engineered system governed by clear operational controls.

Each of the following six pillars is grounded in authoritative regulatory expectations and peer-reviewed research. Together, they form a practical blueprint for building AI systems that can withstand regulatory scrutiny.

Pillar 1: Data Governance and Provenance

In regulated industries, every decision produced by an AI system must be traceable back to the data that informed it. Regulators increasingly expect organizations to demonstrate how training data was sourced, how it was transformed, and how it ultimately influenced the model’s inputs.

This requires clear data ownership, documented preprocessing steps, versioned datasets, and metadata that records lineage across the entire pipeline. The goal is not to prove that a single raw record caused a specific output, but to ensure that the full chain, from source data to model features, can be reconstructed and audited when needed.
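As a rough illustration of what reconstructable lineage can look like, the sketch below records a content hash before and after each preprocessing step, so an auditor can check that the chain from source data to model features is unbroken. The class names, the step names, and the `loans_2024.csv` source label are hypothetical, and a production system would more likely rely on a dedicated data versioning or catalog tool.

```python
import hashlib
import json
from dataclasses import dataclass, field


def fingerprint(rows: list) -> str:
    """Content hash of a dataset: identical rows in identical order give the same hash."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class LineageStep:
    name: str          # hypothetical step label chosen by the pipeline author
    input_hash: str    # fingerprint of the data entering the step
    output_hash: str   # fingerprint of the data leaving the step


@dataclass
class LineageLog:
    source: str                                  # where the raw data came from
    steps: list = field(default_factory=list)

    def apply(self, name, transform, rows):
        """Run a transformation and record what went in and what came out."""
        out = transform(rows)
        self.steps.append(LineageStep(name, fingerprint(rows), fingerprint(out)))
        return out

    def is_unbroken(self) -> bool:
        """True if every step consumed exactly what the previous step produced."""
        return all(a.output_hash == b.input_hash
                   for a, b in zip(self.steps, self.steps[1:]))


# Illustrative data only.
raw = [{"id": 1, "income": 52000}, {"id": 2, "income": None}]
log = LineageLog(source="loans_2024.csv")
clean = log.apply("drop_missing_income",
                  lambda rs: [r for r in rs if r["income"] is not None], raw)
features = log.apply("scale_income",
                     lambda rs: [{"id": r["id"], "income_k": r["income"] / 1000} for r in rs],
                     clean)
print(log.is_unbroken())
```

Hashing whole datasets is only practical for small examples; the point is that each transformation leaves an auditable record rather than an undocumented side effect.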

For organizations handling sensitive information such as patient records or financial data, strong governance also ensures that privacy obligations and consent requirements are respected throughout the lifecycle of the system.

Sources: NIST AI Risk Management Framework (AI RMF 1.0); EU AI Act: Article 10

Pillar 2: AI Risk Management and Model Governance

AI systems must be governed as operational risk systems, not experimental tools. In mature environments, especially banking and insurance, this discipline is formalized as model risk management (MRM).

Under an MRM framework, models must undergo structured validation before deployment. This includes:

- Benchmarking against challenger models

- Testing under extreme scenarios

- Measuring uncertainty

- Documenting known limitations

An independent review is also often required to ensure that model assumptions and training data do not introduce unacceptable risk.
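Two of the validation steps listed above, benchmarking against a challenger and measuring uncertainty, can be combined in a small sketch. It assumes both models have already produced predictions on the same labeled holdout set, and it uses a simple bootstrap to put an interval around the accuracy gap. The function names, the data, and the choice of accuracy as the metric are illustrative assumptions, not a prescribed MRM procedure.

```python
import random


def accuracy(preds, labels):
    """Share of predictions that match the labels."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def bootstrap_accuracy_gap(champion, challenger, labels, n_resamples=1000, seed=0):
    """Approximate 95% bootstrap interval for accuracy(challenger) - accuracy(champion)."""
    rng = random.Random(seed)          # fixed seed so the validation run is repeatable
    n = len(labels)
    gaps = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]   # resample cases with replacement
        champ = sum(champion[i] == labels[i] for i in idx) / n
        chall = sum(challenger[i] == labels[i] for i in idx) / n
        gaps.append(chall - champ)
    gaps.sort()
    return gaps[int(0.025 * n_resamples)], gaps[int(0.975 * n_resamples) - 1]


# Illustrative holdout set: the champion is right everywhere,
# the challenger is wrong on the first 20 cases.
labels = [1, 0] * 50
champion = list(labels)
challenger = [1 - y if i < 20 else y for i, y in enumerate(labels)]

low, high = bootstrap_accuracy_gap(champion, challenger, labels)
print(accuracy(champion, labels), accuracy(challenger, labels), (low, high))
```

An interval that sits entirely below zero suggests the challenger is reliably worse on this holdout set; an interval that straddles zero means the data cannot separate the two models, which is itself a limitation worth documenting.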

The main shift is that the model is treated as a regulated component of the business infrastructure, subject to ongoing oversight rather than a one-time development deliverable.

Sources: Hidden Technical Debt in Machine Learning Systems, Google Research (Sculley et al.); NIST AI Risk Management Framework (Risk evaluation and system validation)

Pillar 3: Explainability and Transparency in AI Systems

Regulated AI systems must be understandable to the people affected by them. This does not always require fully interpretable models, but it does require meaningful transparency around how the system operates and why a decision was made.

Different stakeholders need different types of explanations. A regulator may need documentation about model logic, evaluation metrics, and training data. A domain expert, such as a clinician or credit analyst, needs explanations that allow them to assess whether a recommendation is reasonable in context.

Equally important is contestability. Individuals and institutions must have a way to question, review, and potentially override automated outcomes. Designing these mechanisms early, through interfaces, documentation, and workflow controls, helps ensure that automated decisions remain accountable.

Sources: EU AI Act: Article 86; NIST AI Risk Management Framework (Transparency, explainability, and interpretability principles)

Pillar 4: Human Oversight and Operational Control

Regulatory frameworks consistently emphasize that AI systems should not operate without meaningful human oversight. The purpose of human involvement is not simply to approve every output, but to ensure that humans retain the ability to understand and ultimately control automated decisions.

Effective oversight can take several forms. In high-risk settings, a human may review recommendations before they are executed. In other cases, oversight occurs through escalation thresholds, anomaly alerts, or supervisory review of system behavior over time.
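The oversight patterns described above can be expressed as an explicit routing rule. The sketch below is a minimal example under assumed conventions: the `Decision` fields, the route names, and the 0.90 confidence threshold are all hypothetical, and real thresholds would come from validation evidence and risk policy rather than a default argument.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    case_id: str
    recommendation: str
    confidence: float   # model score in [0, 1]
    high_risk: bool     # set by policy, e.g. decisions affecting care or credit


def route(decision: Decision, auto_threshold: float = 0.90) -> str:
    """Decide how much human involvement a model output needs."""
    if decision.high_risk:
        # High-risk outputs are always reviewed before they are executed.
        return "human_review"
    if decision.confidence < auto_threshold:
        # Low-confidence outputs are escalated instead of being applied silently.
        return "escalate"
    # Everything else proceeds, but stays visible to supervisory review.
    return "auto_with_audit_log"


print(route(Decision("c-1", "approve", 0.97, high_risk=True)))
print(route(Decision("c-2", "approve", 0.62, high_risk=False)))
print(route(Decision("c-3", "approve", 0.97, high_risk=False)))
```

Keeping the rule in one small, testable function makes the oversight policy itself something that can be versioned, reviewed, and shown to an auditor.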

The essential requirement is that the system’s design allows responsible professionals to intervene when needed. AI systems should support human judgment and not replace it.

Sources: EU AI Act — Article 14 (Human oversight requirements for high-risk AI systems); FDA Clinical Decision Support Software Guidance; NIST AI Risk Management Framework (Human-AI interaction and oversight mechanisms)

Pillar 5: Continuous Monitoring and Change Management

Unlike traditional software, AI systems change over time. Shifts in user behavior, market conditions, or data distributions can gradually alter how a model performs.

For this reason, compliance cannot be treated as a one-time certification at launch. Production systems must continuously monitor performance, detect data drift, and track changes in model behavior.

When thresholds are exceeded, such as declines in accuracy, fairness, or calibration, organizations must have processes for investigation and revalidation. Some sectors are already formalizing these lifecycle controls, recognizing that safe AI deployment requires ongoing supervision rather than static approval.
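One common way to detect data drift is the Population Stability Index (PSI), which compares the distribution of a feature in production against its distribution at validation time. The sketch below is a plain-Python version under simplifying assumptions: equal-width bins taken from the baseline, a small floor to avoid division by zero, and a 0.25 alert threshold that is a widely used rule of thumb rather than a regulatory requirement.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a production sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0     # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)   # clamp values outside the baseline range
            counts[idx] += 1
        eps = 1e-6                              # floor so empty bins do not divide by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


ALERT_THRESHOLD = 0.25   # assumed rule-of-thumb cutoff; set per policy in practice

baseline = [i / 100 for i in range(100)]          # feature values at validation time
shifted = [0.5 + i / 200 for i in range(100)]     # production values, shifted upward

for name, sample in [("unchanged", baseline), ("shifted", shifted)]:
    score = psi(baseline, sample)
    status = "revalidate" if score > ALERT_THRESHOLD else "ok"
    print(name, round(score, 3), status)
```

A score above the threshold would not prove the model is wrong; it is a trigger for the investigation and revalidation process described above.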

Sources: FDA Artificial Intelligence / Machine Learning Software as a Medical Device (SaMD) framework; NIST AI Risk Management Framework (Ongoing monitoring and lifecycle management)

Pillar 6: Security, Privacy, and Third-Party Risk

Modern AI systems rarely operate in isolation. They rely on external data sources, third-party models, cloud infrastructure, and API integrations. Each dependency introduces potential security and compliance risks.

Therefore, organizations must treat AI systems as part of a broader technology supply chain. Access controls, secure data handling, and dependency monitoring are essential components of responsible deployment.

Equally important is understanding the division of responsibility between AI providers and AI deployers. Even when organizations use third-party models or platforms, they remain responsible for how those systems are used within their own decision processes.

Sources: NIST AI Risk Management Framework (Secure and resilient AI systems); OWASP Top 10 for Large Language Model Applications

Organizational Design and the AI Operating Model: Who Owns This?

Technology alone cannot solve regulatory risk. Managing it also requires a structural shift in leadership and organizational design.

One of the most consequential decisions a CEO can make is defining ownership of AI system engineering. In many organizations, responsibility is fragmented across data science teams, software engineering, and compliance functions. When ownership is unclear, monitoring and documentation often fall between teams.

Effective organizations resolve this early by assigning accountability at the leadership level, whether under the CTO, Chief Data Officer, or Chief Risk Officer. What matters most is that responsibility is explicit and operational, not informal.

Many organizations are moving toward cross-functional AI governance functions that include engineering, legal, compliance, and business leadership. These are not committees that meet quarterly, but operating functions with actual authority and accountability.

Talent is another constraint. The skill set required to engineer regulated AI systems sits at the intersection of machine learning and regulatory compliance. Professionals with this hybrid background are still scarce. Recent labor market analyses by Levels.fyi show that roles requiring AI expertise command a clear pay premium. Compensation data from Dice indicates that ML and AI engineers earn roughly 8% more than general software engineers, while broader studies report salary premiums of roughly 18–28% for professionals working with AI technologies. For this reason, organizations face a strategic choice: develop these capabilities internally, recruit experienced talent at a premium, or partner with specialized firms that already operate in regulated environments.

However, the most difficult change is cultural. In many companies, compliance is treated as a final checkpoint before launch. In regulated AI systems, that model no longer works. Regulatory constraints must shape architecture and operational controls from the beginning.

The Competitive Advantage of Getting This Right

Organizations that approach AI system engineering with discipline often move more efficiently in regulated environments. Strong engineering practices tend to build credibility with regulators, which can simplify review processes and reduce the risk of costly remediation after deployment.

In healthcare, well-designed lifecycle controls, such as those anticipated in the FDA’s evolving framework for adaptive AI systems, can help organizations manage updates and improvements more predictably. This makes it easier to maintain regulatory alignment as systems evolve.

In financial services, mature model governance allows institutions to deploy AI more broadly while maintaining appropriate risk controls.

In sectors such as EdTech and LegalTech, where public trust in AI remains fragile, transparency and responsible system design can become meaningful differentiators.

There is also a timing advantage. Regulatory frameworks for AI are still developing in many jurisdictions. Organizations that establish strong engineering practices early are often better prepared to adapt as rules evolve. Rather than revisiting core architecture each time requirements change, they can adjust governance and controls within an already structured system.

Companies that treat AI as a governed system tend to move beyond pilot projects more reliably, while others remain constrained by uncertainty around risk, compliance, and operational stability.

Conclusion

AI System Engineering for regulated industries is a distinct discipline that requires integrated thinking across technology, governance, and organizational design. Enterprises should view compliance not as a burden to be managed, but as an engineering constraint to be solved, and one that can yield an early competitive advantage.

Organizations must now decide if they will continue to treat AI as an experimental research project or as a core artifact requiring the same rigor as any other mission-critical infrastructure. As you evaluate your own AI roadmap, consider whether your team has the muscle to execute on these core pillars.
