A Tier-1 diagnostic imaging network operating 12 radiology centers across three states faced a critical inflection point: scan volumes were growing at 22% year-over-year, yet radiologist headcount remained flat. The result was accelerating burnout, rising turnaround times (TAT exceeding contractual SLAs by 15%), and measurable degradation in detection accuracy during late-shift reads.

Codebridge was engaged to engineer a HIPAA-compliant, cloud-native, AI-augmented diagnostic workspace that would bring computer vision directly into the clinical workflow without disrupting the existing PACS infrastructure or requiring radiologists to learn new tools. The mandate was clear: augment human expertise, never replace it.

Over a 24-week engagement, an 8-person Codebridge team delivered a production-grade "Human-in-the-Loop" platform that reduced average CT reading time from 15.2 to 9.4 minutes (a 38% efficiency gain), maintained 96% nodule detection sensitivity for sub-4mm lesions, and achieved sub-second image rendering even on low-bandwidth satellite connections used by the client's rural teleradiology sites.

The solution passed an independent clinical validation study (n=2,400 scans, double-blind design), is architected in alignment with FDA Software as a Medical Device (SaMD) Class II regulatory pathways, and has been operating in production for over 9 months without reported critical system failures.

The client is a privately held diagnostic imaging network generating nine-figure annual revenue. They serve as a contracted radiology provider for multiple hospital systems and a large network of outpatient imaging centers across several states. Their operation processes over 500 chest CT scans weekly, with seasonal spikes exceeding 700 scans during peak respiratory periods.

The diagnostic imaging market is undergoing a structural shift. Referring physician expectations for turnaround have compressed from 48 hours to same-day reporting for routine studies. Simultaneously, national workforce analyses highlight a growing radiologist shortage driven by increasing imaging volumes and physician attrition. For the client, expanding headcount alone was not a sustainable solution — they required a scalable technology multiplier.

The client defined four non-negotiable success criteria:

During a focused discovery phase, our clinical engineering team worked alongside radiologists across multiple sites to understand the operational, technical, and regulatory friction embedded within their CT workflow. What emerged was not a single bottleneck, but a layered system problem — one that could not be resolved by deploying another standalone AI algorithm.

Radiologists were required to operate across multiple disconnected systems simultaneously: the primary PACS viewer for image interpretation, a separate AI interface accessed outside the core viewer, and a voice-dictation reporting system.

This fragmented environment introduced consistent context-switching overhead — often adding several minutes per study — while forcing clinicians to manually reconcile AI findings with the primary imaging interface.

Time-motion analysis revealed that roughly one-third of total reading time was consumed by non-interpretive tasks: navigating between systems, re-orienting spatial context after window switches, manually transferring measurements into structured reports, and verifying external AI annotations.

The cost was not only temporal but cognitive. Research in interruption science shows that even brief context shifts degrade diagnostic attention. In high-volume environments, this compounding cognitive load contributes directly to fatigue, variability in interpretation, and burnout.

As one clinical leader summarized during discovery:

“We didn’t need another black-box algorithm. We needed a workspace that supports how radiologists actually think and work.”

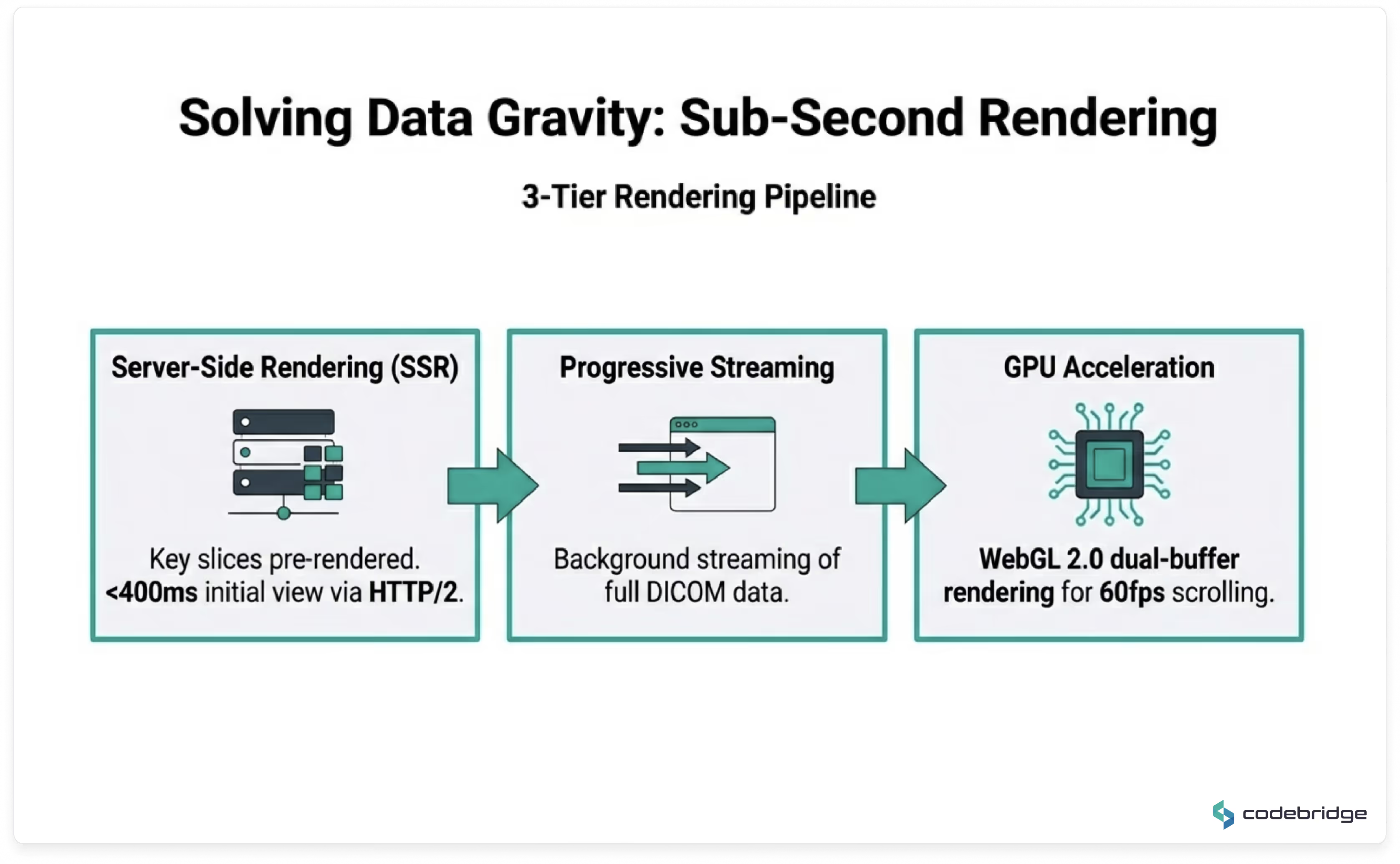

High-resolution chest CT studies routinely generate hundreds of DICOM instances per case, with full datasets often exceeding several hundred megabytes.

The client’s legacy remote access infrastructure introduced significant latency during peak usage periods. Initial study load times frequently exceeded acceptable thresholds, and scroll-through performance degraded under network congestion — particularly for rural teleradiology sites operating on low-bandwidth satellite connections.

During seasonal respiratory peaks, these constraints translated into growing backlogs, delayed turnaround times, and increased reliance on after-hours coverage.

This was not simply a network problem; it was an architectural one. Any AI overlay had to process large volumetric datasets while preserving sub-second interaction inside the radiologist’s primary workspace.

The client had previously piloted multiple commercial AI solutions. While technically functional, these tools generated elevated false-positive volumes, particularly in cases involving post-surgical changes, granulomatous disease, and motion artifacts.

Each false positive required manual verification and documentation — effectively adding work rather than removing it.

More concerning was the downstream effect on clinician behavior. Over time, a majority of radiologists reported developing the habit of dismissing AI findings without review. In such scenarios, AI ceases to function as a productivity enhancer and instead becomes a liability exposure layer.

An AI system that is routinely ignored delivers negative operational value.

Beyond workflow and performance constraints, the solution needed to operate within a strict regulatory framework.

This included:

The mandate was clear: any performance gains must be achieved without compromising compliance posture or introducing regulatory exposure.

Embedded clinical workflow analysis, infrastructure audit, regulatory gap assessment, and formal architecture definition aligned with future FDA submission pathways.

Development of the core diagnostic viewer with DICOMweb integration, secure SSO (SAML), cloud infrastructure provisioning, and IEC 62304-compliant development processes.

Model training and optimization on large-scale CT datasets, deployment via high-performance inference pipelines, reduction of false positives, and seamless embedding into the radiologist’s primary workflow.

Controlled multi-site deployment with real-world feedback loops, UX refinements, smart triage integration, and shadow-mode validation to benchmark performance against the existing workflow.

Independent clinical validation study, load and security testing, staged 12-site deployment, clinician training, and preparation of documentation for FDA 510(k) pre-submission alignment.

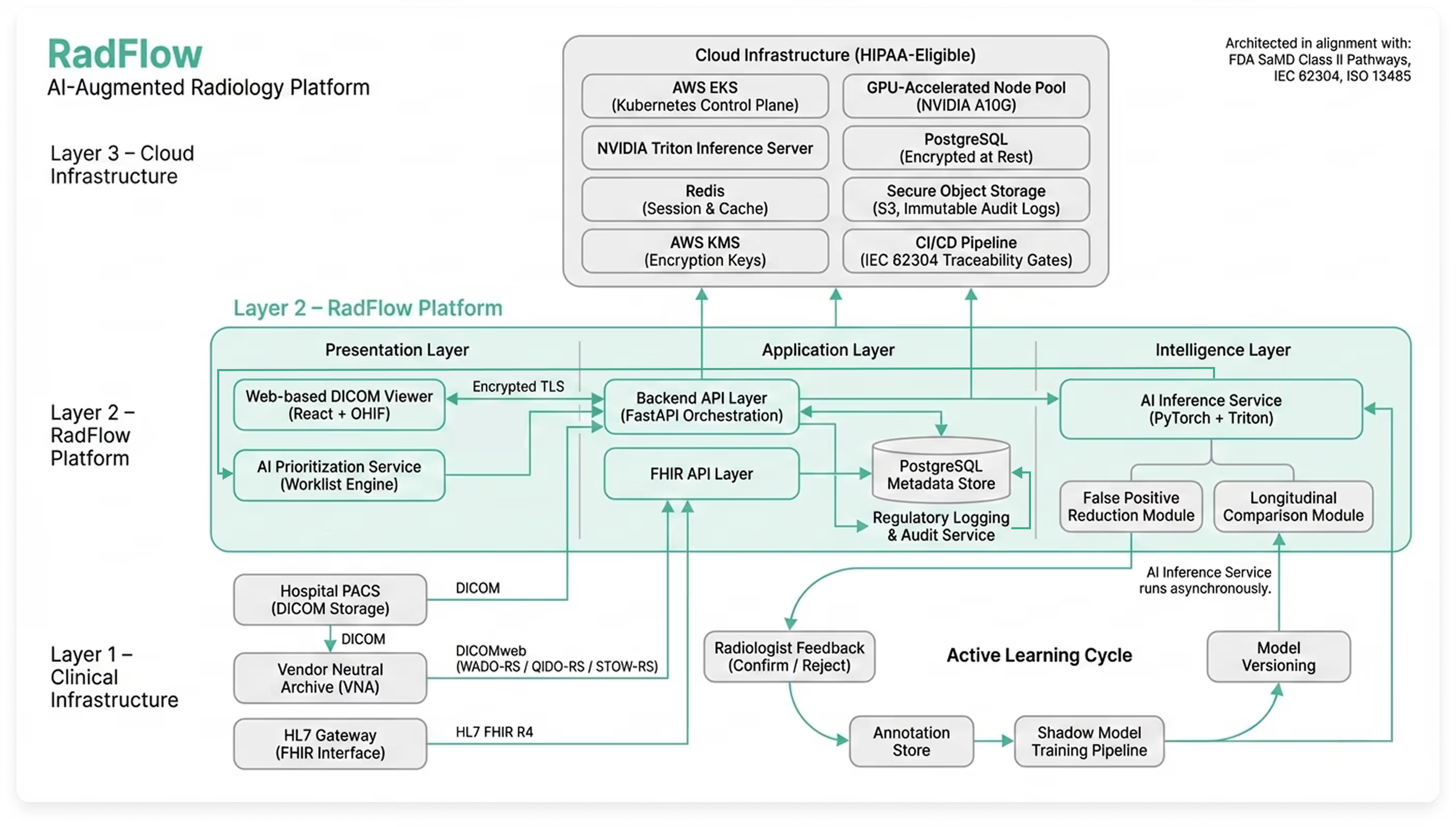

Rather than building a standalone AI model disconnected from clinical reality, Codebridge engineered a cloud-native AI-augmented diagnostic workspace designed to operate alongside existing PACS infrastructure while introducing triage, explainability, and governance capabilities.

The platform functions as an independent web-based imaging environment, fully synchronized with the client’s Vendor Neutral Archive (VNA) and reporting systems. Radiologists continue using their existing PACS worklist, but can open any study inside the AI workspace with one click through secure SAML authentication.

The result is not a standalone algorithm but a complete diagnostic layer that enhances interpretation, prioritization, and oversight without disrupting established workflows.

The platform consists of five integrated modules reflected directly in the production UI:

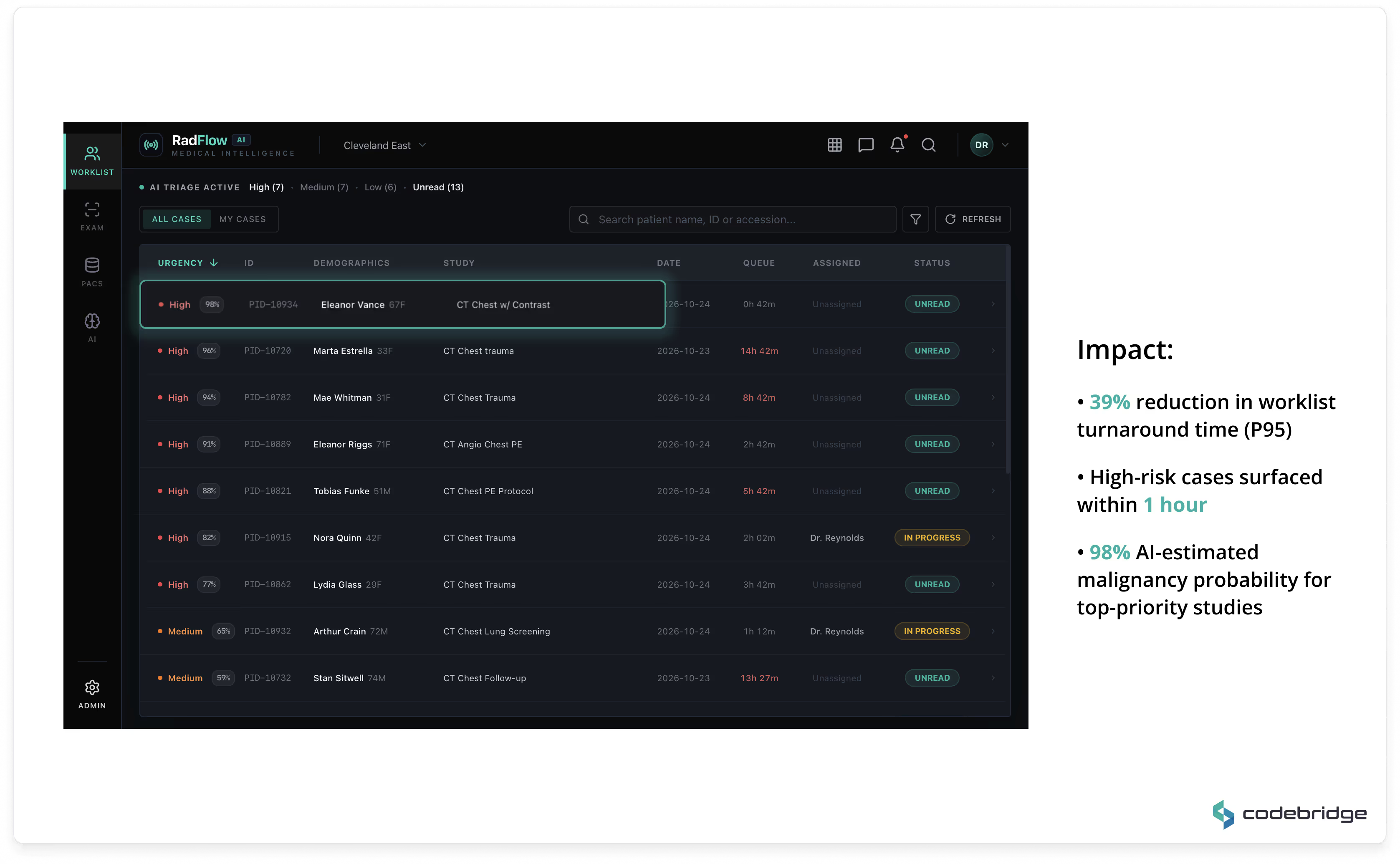

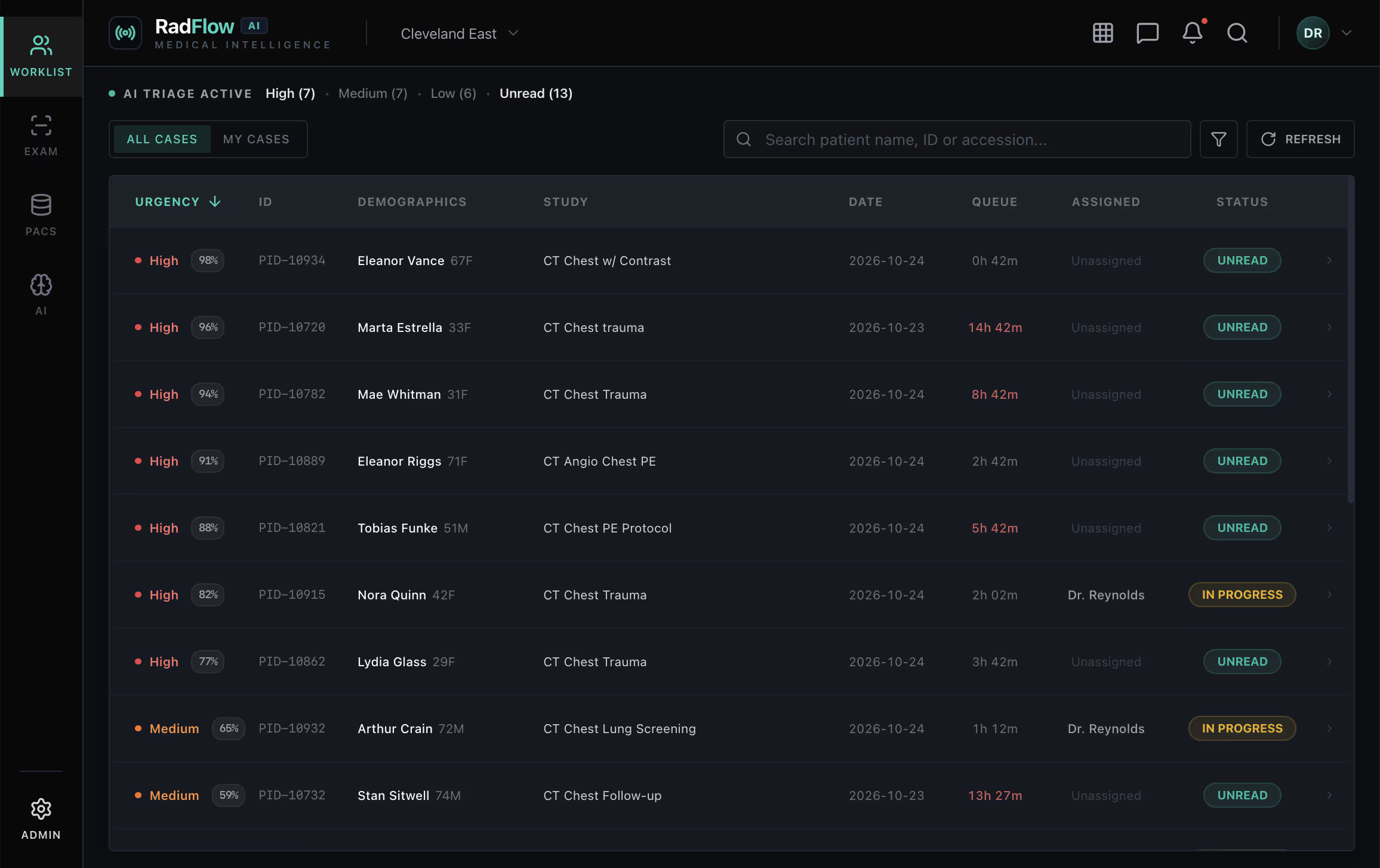

A real-time prioritized worklist ranks studies by AI-estimated malignancy probability and urgency score. High-risk cases are automatically promoted, enabling earlier review during peak scan volumes.
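The ranking idea can be illustrated with a minimal sketch. How the production system combines the two scores is not described here, so the ordering below (malignancy probability first, urgency score as a tie-breaker, then waiting time) is an assumption, and the `Study` fields and sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    accession: str                 # hypothetical identifier
    malignancy_probability: float  # AI-estimated, in [0, 1]
    urgency_score: float           # assumed to be normalized to [0, 1]
    minutes_waiting: int


def prioritize(worklist: list[Study]) -> list[Study]:
    """Return studies ordered by descending probability, urgency, then wait."""
    return sorted(
        worklist,
        key=lambda s: (
            -s.malignancy_probability,
            -s.urgency_score,
            -s.minutes_waiting,
        ),
    )


worklist = [
    Study("A-100", 0.12, 0.30, 95),
    Study("A-101", 0.91, 0.80, 10),
    Study("A-102", 0.91, 0.95, 5),
    Study("A-103", 0.47, 0.50, 40),
]
ranked = prioritize(worklist)
print([s.accession for s in ranked])
```

A weighted blend of the two scores would be an equally plausible design; the lexicographic ordering is used here only because it is easy to reason about and audit.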

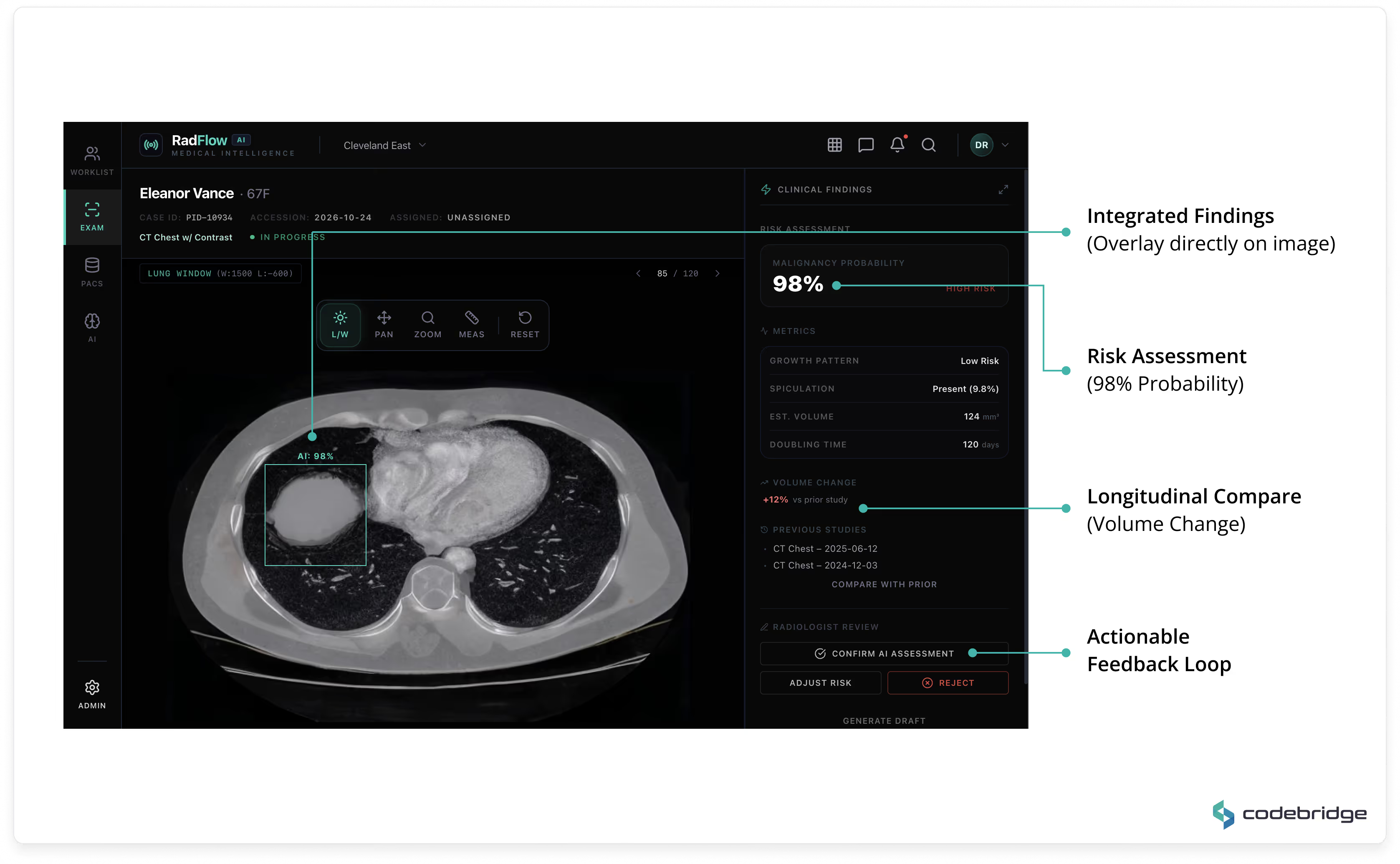

A GPU-accelerated web viewer (built on OHIF and Cornerstone.js) supports axial, MPR, and volumetric rendering directly in the browser. AI detections are displayed as structured overlays with:

All AI findings remain toggleable and fully under radiologist control.

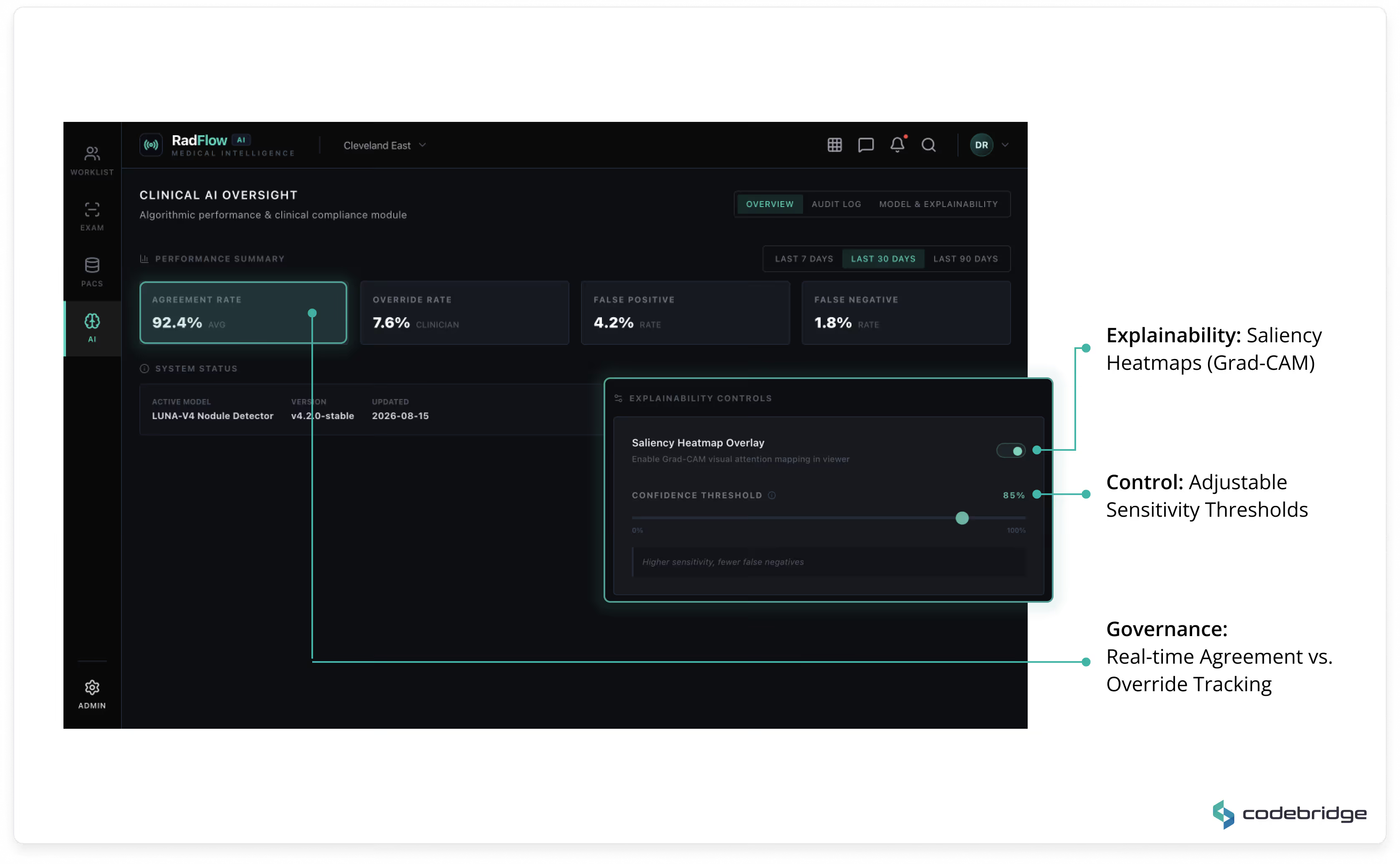

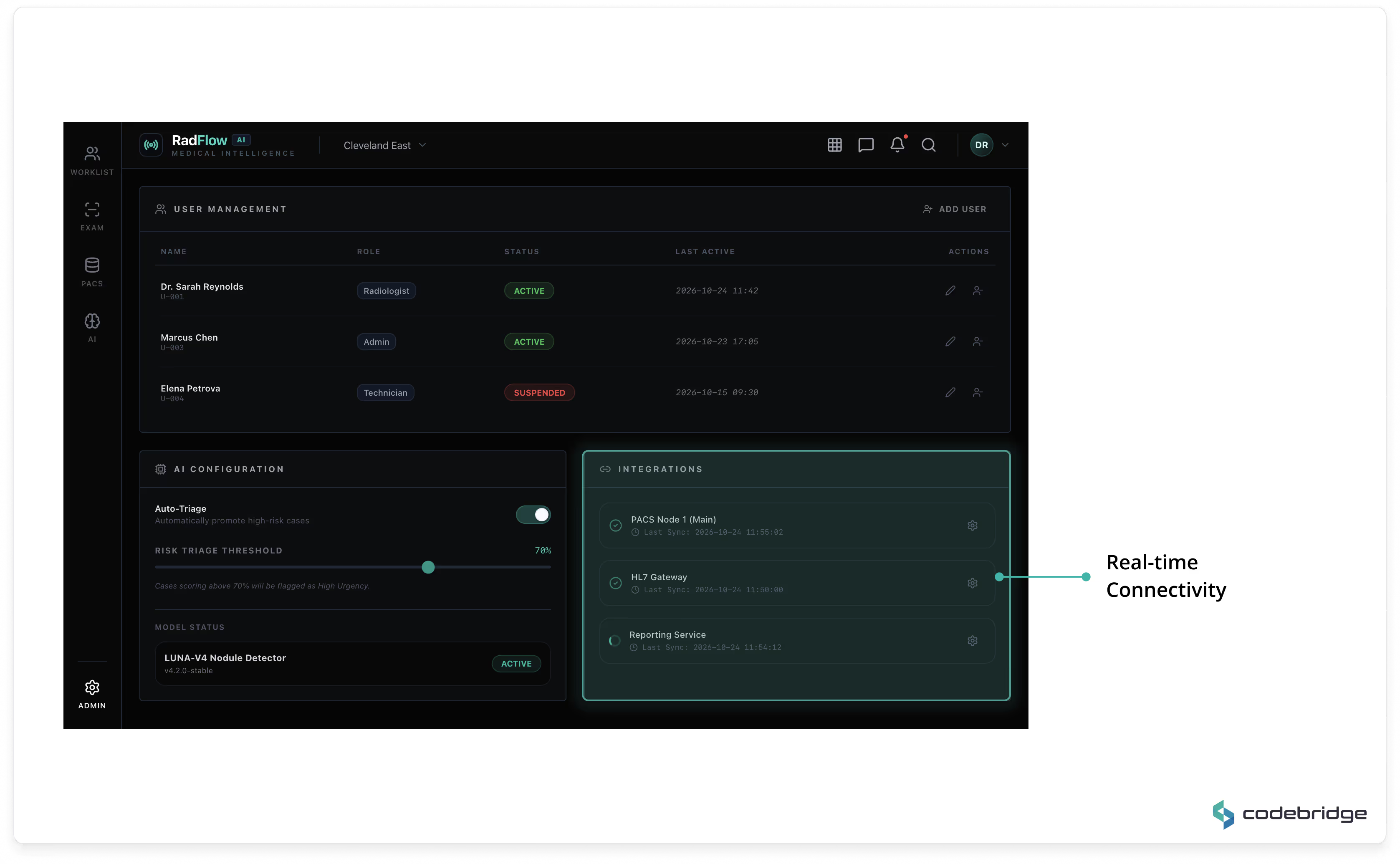

A governance dashboard tracks:

This module ensures transparent performance monitoring and regulatory traceability.

Every AI-assisted decision is logged with:

All changes are retained in immutable audit storage aligned with regulatory expectations.
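One common way to make an audit trail tamper-evident is to chain each entry to the hash of the previous one. The sketch below illustrates that general technique only; it is not a description of the platform's actual audit storage, and the payload fields (`study_uid`, `action`, `user`) are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def append(self, payload: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "payload": payload,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, payload),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            expected = _digest(prev_hash, entry["payload"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"study_uid": "1.2.3", "action": "finding_accepted", "user": "rad-01"})
log.append({"study_uid": "1.2.3", "action": "report_signed", "user": "rad-01"})
print(log.verify())
```

Editing any stored payload afterwards causes `verify()` to return `False`, which is the property an append-only audit store is meant to guarantee.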

Includes user management, role-based access control (RBAC), AI configuration settings, and PACS/VNA integration monitoring.

The viewer is fully browser-based and requires no local installation.

Key characteristics:

Average time to initial render: < 400ms on optimized networks.

Sub-second navigation once the full dataset is loaded.

The system supports thin-slice chest CT studies (300–500MB) without perceptible UI blocking.

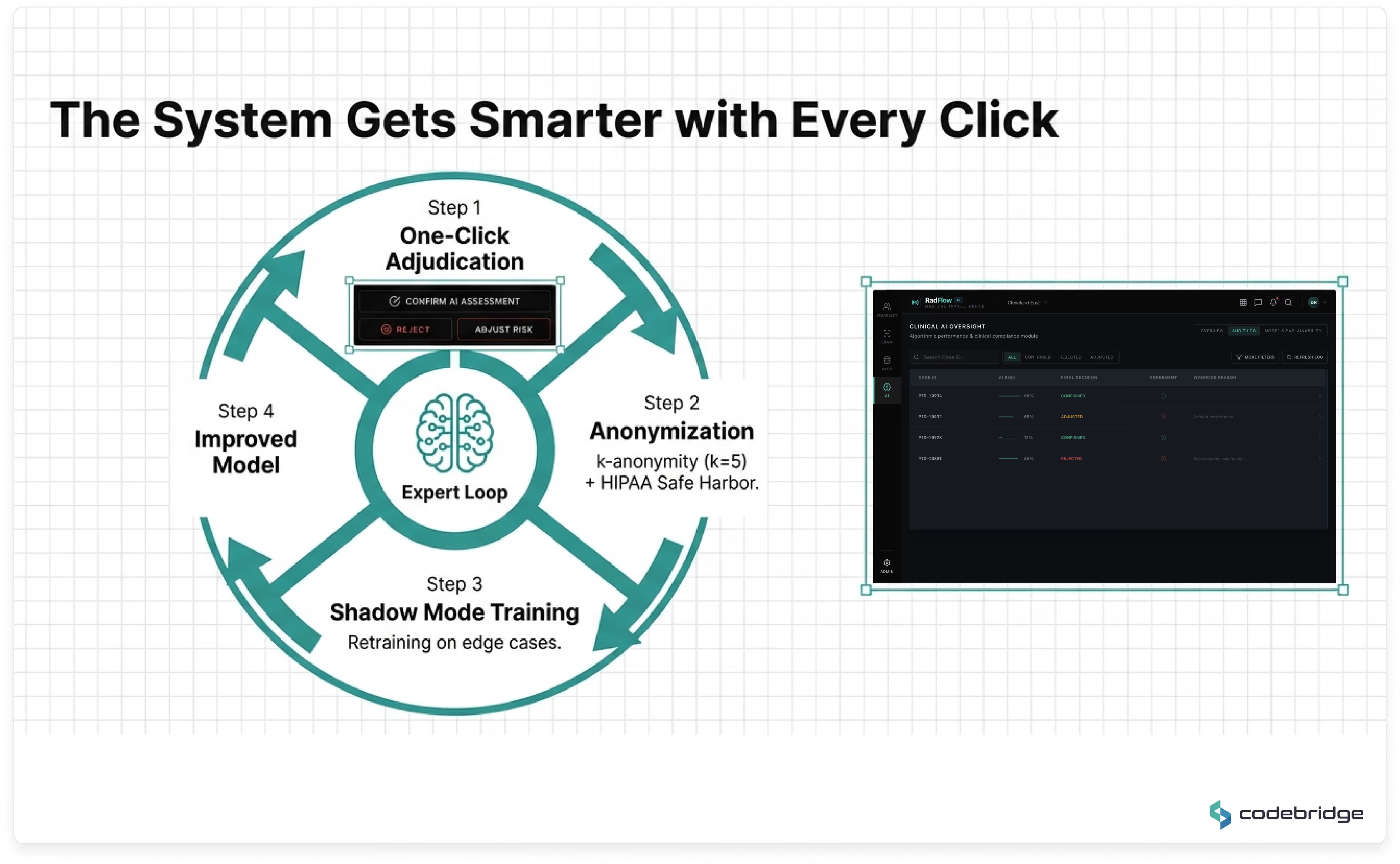

AI inference runs asynchronously upon study ingestion into the VNA.

By the time a radiologist opens a case, AI analysis is already available.
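The decoupling described above can be sketched with a queue and background workers. This is an illustration under stated assumptions: `run_inference` is a stand-in for the real GPU model call, the study UIDs are made up, and the production ingestion trigger is not shown.

```python
import asyncio


async def run_inference(study_uid: str) -> dict:
    """Hypothetical stand-in for the GPU inference call."""
    await asyncio.sleep(0.01)
    return {"study_uid": study_uid, "findings": []}


async def inference_worker(queue: asyncio.Queue, results: dict) -> None:
    """Pull ingested studies off the queue and store their AI results."""
    while True:
        study_uid = await queue.get()
        try:
            results[study_uid] = await run_inference(study_uid)
        finally:
            queue.task_done()


async def main() -> dict:
    queue: asyncio.Queue = asyncio.Queue()
    results: dict = {}
    workers = [
        asyncio.create_task(inference_worker(queue, results)) for _ in range(2)
    ]
    # Each put() models a "study ingested into the archive" event.
    for uid in ("1.2.3.1", "1.2.3.2", "1.2.3.3"):
        queue.put_nowait(uid)
    await queue.join()  # wait until every queued study has been processed
    for worker in workers:
        worker.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results


results = asyncio.run(main())
print(sorted(results))
```

Because ingestion only enqueues work, the viewer never waits on the model: it simply reads whatever result is already stored for the study being opened.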

Model Design

Initial false positive rate reduced from 4.1 to 0.8 per scan.

After 9 months of active learning: reduced further to 0.4.

Sensitivity benchmark maintained at 96%.

The system is deployed in a HIPAA-eligible cloud environment.

Core components:

Average end-to-end inference latency: ~47 seconds per CT study.

Auto-scaling ensures cost-efficient GPU provisioning during peak seasonal demand.

The platform was architected in alignment with FDA Software as a Medical Device (SaMD) Class II regulatory pathways.

The development lifecycle incorporates:

The UI includes visible placeholders for FDA clearance status, ensuring readiness for post-validation regulatory marking.

Every technology choice was driven by three criteria: clinical-grade reliability, regulatory traceability, and long-term maintainability without vendor lock-in.

Codebridge deployed a cross-functional team of 8 engineers with deep domain expertise in medical imaging, regulatory compliance, and high-performance web applications.

All metrics below were validated through a formal 60-day post-deployment performance review conducted by the client’s Clinical AI Governance Board, supplemented by findings from an independent double-blind clinical validation study.

From 15.2 minutes to 9.4 minutes per study, validated across 4,800+ CT cases.

Validated in a double-blind study (n=2,400), exceeding the predefined 93% acceptance threshold.

Reduced from 4.1 (previous vendor solution) to 0.4 through a dedicated false-positive reduction network and 9 months of active learning.

70% improvement over legacy VPN-based viewers; fully functional over satellite connections (12 Mbps).

Productivity gains equivalent to approximately 3 FTE radiologists, plus a significant reduction in after-hours coverage costs at rural sites.
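The headline percentages follow directly from the figures reported above. As a quick check, using the reading times (15.2 and 9.4 minutes) and the false positives per scan (4.1 at baseline, 0.8 at launch, 0.4 after active learning):

$$
\frac{15.2 - 9.4}{15.2} = \frac{5.8}{15.2} \approx 0.38,
\qquad
\frac{4.1 - 0.8}{4.1} = \frac{3.3}{4.1} \approx 0.80,
\qquad
\frac{4.1 - 0.4}{4.1} = \frac{3.7}{4.1} \approx 0.90
$$

That is, a 38% reduction in reading time, and a false-positive reduction of roughly 80% at launch that reaches roughly 90% after nine months of active learning.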

The Smart Triage feature automatically ranks studies by AI-estimated malignancy probability, enabling radiologists to prioritize high-risk cases during peak imaging volumes.

Within the first six months, 23 high-probability malignancy cases were reviewed within one hour of acquisition (compared to a prior average of 4.5 hours). According to the client’s Chief Medical Officer, the accelerated triage pathway contributed to earlier-stage detections during the evaluation period.

The most significant qualitative outcome was the restoration of radiologist trust in AI assistance.

The Radiologist Trust Score — an internal survey metric measuring the percentage of radiologists who routinely review AI findings — increased from 27% at baseline to 89% at six months.

This shift was not achieved through policy mandates or training initiatives, but through engineering discipline:

• 90% reduction in false positives

• Seamless in-viewport integration

• Full explainability and clinician control

“For the first time, the AI actually makes me faster instead of slower. I stopped ignoring it around week two of the pilot.” — Senior Radiologist, Site 3

Under the engagement terms, the client retains full IP ownership of:

The client’s board has identified this asset as a strategic differentiator in upcoming hospital contract renewals and as a foundational component of their planned FDA regulatory submission pathway.

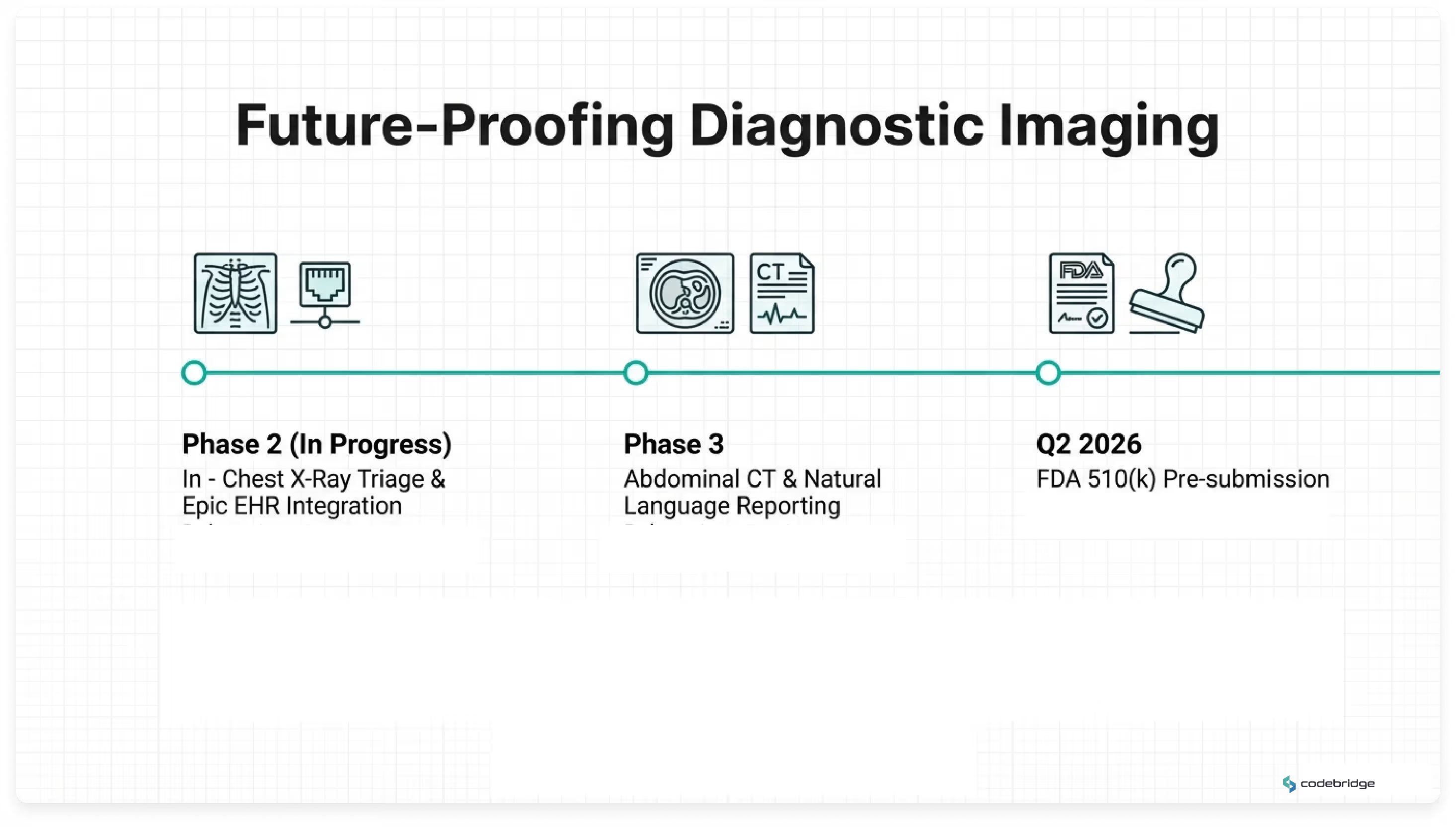

The platform was architected as a scalable foundation for expanding AI-assisted radiology capabilities across modalities and clinical domains. The client and Codebridge have aligned on a phased strategic roadmap for continued evolution.

The accumulated IEC 62304 documentation, independent clinical validation data, and regulatory design history file establish readiness for an FDA 510(k) pre-submission pathway. A pre-submission engagement is targeted for Q2 2026, subject to regulatory review timelines.

Note: Due to NDA restrictions, the client name and specific institutional identifiers are anonymized. All performance metrics and validation results are based on real production data.

High-resolution chest CT studies may contain 600+ thin-slice images. Diagnostic cine-mode scrolling requires rendering 30–60 frames per second without perceptible latency — a threshold traditionally achievable only on native PACS workstations.

To meet this requirement in a zero-install browser environment, we implemented a dual-buffer WebGL rendering architecture. While the current frame is displayed from one GPU texture buffer, subsequent frames are decoded in parallel using WebWorkers and preloaded into a secondary GPU buffer. On scroll, buffers swap instantly.

This approach enables sustained 60fps cine-mode performance, even for 300–500MB CT datasets, delivering workstation-grade responsiveness within a secure web environment.
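At 60 frames per second the budget is about 1000 / 60 ≈ 16.7 ms per frame, which is why decoding has to happen ahead of the scroll position rather than on demand. The sketch below shows the front/back buffer idea in Python for readability; the production viewer uses WebGL textures and WebWorkers, so the thread pool, `decode_frame`, and the class name here are stand-ins, not the actual implementation.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Optional


def decode_frame(index: int) -> bytes:
    """Hypothetical stand-in for decoding one CT slice into pixel data."""
    return f"pixels-{index}".encode()


class DualBufferScroller:
    """Shows the front buffer while the next frame decodes into the back buffer."""

    def __init__(self, frame_count: int) -> None:
        self.frame_count = frame_count
        self._pool = ThreadPoolExecutor(max_workers=2)
        self.index = 0
        self.front: bytes = decode_frame(0)
        self._back: Optional[Future] = None
        self._back_index: Optional[int] = None
        self._prefetch(1)

    def _prefetch(self, index: int) -> None:
        """Start decoding the anticipated next frame in the background."""
        if 0 <= index < self.frame_count:
            self._back_index = index
            self._back = self._pool.submit(decode_frame, index)
        else:
            self._back_index, self._back = None, None

    def scroll(self, step: int = 1) -> bytes:
        target = self.index + step
        if not 0 <= target < self.frame_count:
            return self.front  # clamp at the ends of the stack
        if self._back is not None and self._back_index == target:
            self.front = self._back.result()   # swap: normally already decoded
        else:
            self.front = decode_frame(target)  # miss: decode synchronously
        self.index = target
        self._prefetch(target + step)          # keep going in the same direction
        return self.front

    def close(self) -> None:
        self._pool.shutdown()


scroller = DualBufferScroller(frame_count=5)
frames = [scroller.front] + [scroller.scroll() for _ in range(4)]
print(frames)
scroller.close()
```

The prefetch follows the current scroll direction, so a direction change costs one synchronous decode before the buffers fall back into step.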

False positives were the primary barrier to clinical adoption in prior AI pilots. Rather than relying solely on generic model tuning, we engineered a dedicated false-positive reduction pipeline.

In regions with elevated prevalence of benign granulomatous disease, calcified nodules represented a dominant source of misclassification. We developed a post-processing classifier trained on a curated dataset of confirmed benign cases from the client’s anonymized archive. This classifier operates as a secondary filter layered atop the primary detection network.

The result was a 90% reduction in false positives while preserving 96% sensitivity for sub-4mm nodules — restoring clinician trust without sacrificing diagnostic rigor.
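The layering of a secondary filter on top of the primary detector can be sketched as a two-stage pass over candidate findings. The thresholds, field names, and sample scores below are illustrative assumptions, not the values used in production.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    nodule_id: str
    detection_score: float     # confidence from the primary detection network
    benign_probability: float  # output of the secondary benign classifier


def filter_candidates(
    candidates: list[Candidate],
    detection_threshold: float = 0.5,  # hypothetical operating point
    benign_threshold: float = 0.9,     # hypothetical operating point
) -> list[Candidate]:
    """Keep detections that pass stage 1 and are not confidently benign in stage 2."""
    kept = []
    for c in candidates:
        if c.detection_score < detection_threshold:
            continue  # stage 1: primary detector is not confident enough
        if c.benign_probability >= benign_threshold:
            continue  # stage 2: suppressed as likely benign (e.g. calcified granuloma)
        kept.append(c)
    return kept


candidates = [
    Candidate("n1", detection_score=0.92, benign_probability=0.05),
    Candidate("n2", detection_score=0.88, benign_probability=0.97),
    Candidate("n3", detection_score=0.30, benign_probability=0.10),
    Candidate("n4", detection_score=0.61, benign_probability=0.40),
]
surfaced = filter_candidates(candidates)
print([c.nodule_id for c in surfaced])
```

Keeping the benign threshold high is the conservative choice in this kind of design: the secondary stage suppresses only candidates it is very confident about, which limits the risk of trading sensitivity for a lower false-positive count.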

Continuous model improvement requires careful separation of clinical operations and research-grade data processing.

We implemented a multi-layer compliance architecture:

An independent third-party compliance audit validated that the architecture satisfies HIPAA and internal governance requirements while enabling structured active learning.

Previous AI pilots demonstrated that context switching between separate interfaces increased cognitive load and reduced adoption. Radiologists were required to reconcile findings across multiple systems, creating workflow friction and liability ambiguity.

The decision was therefore architectural, not algorithmic.

By embedding AI directly into a unified diagnostic workspace — with overlay controls, explainability, audit tracking, and triage integration — the system enhances interpretation without altering established reading patterns.

This design choice was instrumental in increasing the Radiologist Trust Score from 27% to 89% within six months.

The development lifecycle was aligned with IEC 62304 and ISO 13485 processes from project inception.

Each model version is associated with:

This structure supports a future FDA 510(k) pathway by maintaining a complete design history file and verifiable model lineage.

No.

The architecture is vendor-neutral by design and interoperates using open standards:

This ensures compatibility with enterprise PACS ecosystems while avoiding proprietary lock-in.

The AI inference layer runs on GPU-accelerated nodes within a HIPAA-eligible cloud environment, orchestrated via Kubernetes with auto-scaling policies.

During seasonal respiratory peaks, GPU resources scale dynamically based on queue depth and workload demand. Average inference latency remains ~47 seconds per CT study, without degradation of viewer responsiveness.

This separation of rendering and inference ensures UI performance is not impacted by backend model execution.
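Queue-depth scaling can be reasoned about with a small sizing function. The sketch assumes each GPU worker processes one study at a time at the ~47 seconds quoted above; the drain target and the worker limits are hypothetical, and this is not the platform's actual Kubernetes configuration.

```python
import math


def desired_gpu_workers(
    queue_depth: int,
    seconds_per_study: float = 47.0,      # average latency quoted in the text
    target_drain_seconds: float = 600.0,  # hypothetical: clear backlog in 10 min
    min_workers: int = 1,                 # hypothetical floor
    max_workers: int = 16,                # hypothetical ceiling
) -> int:
    """Workers needed to drain the queue within the target, clamped to limits."""
    needed = math.ceil(queue_depth * seconds_per_study / target_drain_seconds)
    return max(min_workers, min(max_workers, needed))


for depth in (0, 40, 1000):
    print(depth, desired_gpu_workers(depth))
```

For example, a backlog of 40 studies represents 40 × 47 = 1,880 seconds of GPU work, so draining it within 600 seconds needs 4 workers; an empty queue falls back to the floor and an extreme backlog is capped at the ceiling.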

“This initiative was not about automation — it was about capacity expansion without compromising diagnostic integrity. The platform allowed us to increase throughput while strengthening compliance posture and long-term regulatory readiness.”