Agentic AI systems can process thousands of decisions per hour. They can also hallucinate, misclassify, and produce outputs that violate regulatory requirements. In a recent study on legal hallucinations, researchers found that ChatGPT generated incorrect legal information 58% of the time when asked verifiable questions about federal court cases. Llama 2 reached 88%. In finance, healthcare, and law, each one of those failures is a potential liability event, not a UX problem.

Dropping a human reviewer at the end of an automated pipeline does not solve this. It slows the system down and breeds rubber-stamping: reviewers who approve outputs they no longer examine closely enough to catch errors. You get the worst of both worlds: reduced speed with no real safety gain.

To build regulated AI systems that scale, you need compliance-by-design. That means mapping specific human oversight controls (approvals, overrides, audits) to the risk profile of each task, so human judgment lands where it changes outcomes, not where it creates bottlenecks.

Defining the Governance Spectrum: HITL vs. HOTL vs. HOOTL

The compliance-by-design approach we described above depends on a governance model that matches the risk. Three models define how tightly you couple human judgment to machine execution.

- Human-in-the-Loop (HITL) requires a human to approve an action before the system executes it. A loan officer signs off on a credit decision. A physician confirms a treatment recommendation. The system cannot proceed without that approval. Businesses use HITL for high-risk, irreversible actions where a wrong output triggers regulatory exposure or patient harm.

- Human-on-the-Loop (HOTL) lets the system act on its own while a human monitors outputs and intervenes when something looks wrong. Fraud detection teams work this way: the model flags and routes transactions, and an analyst investigates the exceptions. HOTL works for high-volume tasks where approving each decision individually would collapse throughput.

- Human-out-of-the-Loop (HOOTL) removes the human from the execution path entirely. The system operates autonomously. This is appropriate only for low-risk, well-tested processes where an error costs you a retry, not a regulatory finding.

Most production systems combine all three within a single workflow. A claims processing pipeline might use HOOTL for document ingestion, HOTL for risk scoring, and HITL for final payout authorization. The governance mode shifts based on what's at stake at each step.

Knowing these modes exist is not enough. You need a method for deciding which one applies where. That requires mapping governance to risk tiers.

The Trust Protocol: Mapping Controls to Risk Classes

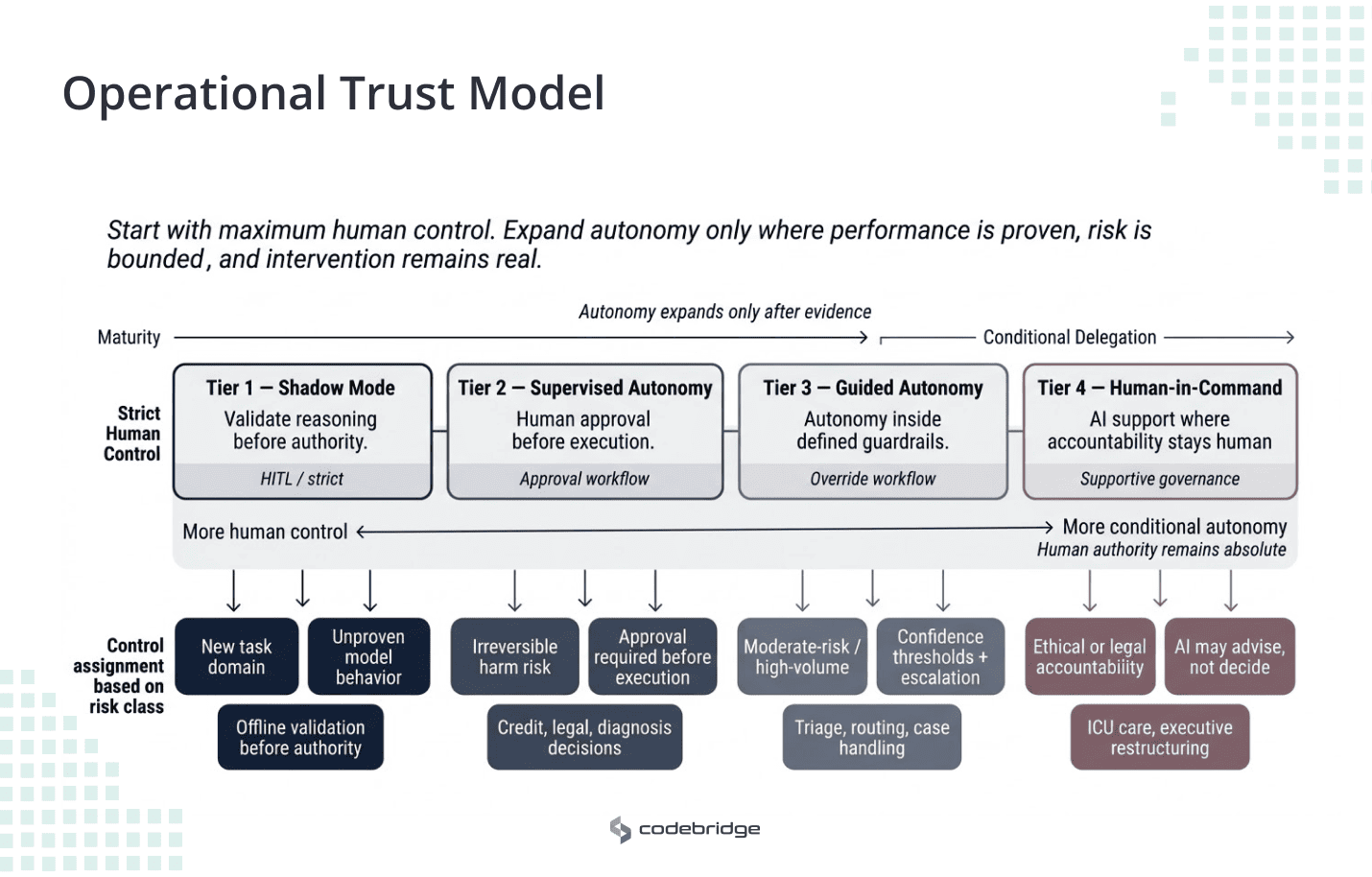

The next step is deciding which governance mode belongs at each point in the workflow. The principle is simple: start with strict human control, then expand autonomy only when performance data shows the system can be trusted.

The four tiers below describe when each model should be used, based on risk.

Tier 1: Shadow Mode.

The AI observes live data and drafts recommendations, but a human makes every decision and executes every action. The system produces outputs; none of them reach the real world without a person acting on them. This is how you validate an agent's reasoning against business context before giving it any operational authority. It maps to HITL at its most conservative. Use it during initial deployment and whenever you introduce a model to a new task domain.

Tier 2: Supervised Autonomy

Here, the AI stages an action, but execution is paused until a human provides explicit approval. This is appropriate for high-risk scenarios such as final medical diagnoses, credit loan approvals, or legal contract execution. The technical control here is the Approval Workflow, where the AI handles large-scale data analysis and risk scoring while the human acts as the final gatekeeper.

Tier 3: Guided Autonomy

The AI acts autonomously within strict, predefined guardrails, escalating to a human only when confidence scores drop below a set threshold (e.g., <90%). This is ideal for moderate-risk, high-volume tasks like routing complex support tickets or triaging patient symptoms. The control mechanism shifts from approval to Override Controls, where humans function as exception handlers.

Tier 4: Human-in-Command

For very high-risk, life-sustaining, or high-liability decisions—such as ICU interventions or major financial restructuring—the architecture must remain strictly supportive. The AI presents suggestions rather than directives, ensuring that physician or executive authority over ethical complexity and patient values remains absolute.

Tier assignment criteria

Architecting the Controls: Approvals, Overrides, and Audits

From a technical perspective, HITL must be treated as a first-class architectural component rather than a bolt-on to an existing workflow. This involves integrating specific controls into the system’s core logic. Once the task is classified by risk, the next design decision is the control mechanism attached to it.

Approval Controls (The Gatekeepers)

Approval controls belong to Tier 2 (supervised execution) and Tier 4 (advisory only) tasks. The system stages an output. Execution pauses until a qualified reviewer approves, modifies, or rejects it.

The harder problem is not adding an approval gate, but keeping it effective under real operating volume. If your approval queue backs up, reviewers start batch-approving to clear it. You have recreated the rubber-stamping problem from the introduction.

To prevent this, you need capacity planning for reviewer throughput, SLA-based routing that matches task complexity to reviewer expertise, and timeout logic that escalates stalled approvals rather than auto-approving them.

Structure the reviewer interface around constrained decision types: approve/reject, select from staged options, or confirm specific risk factors. Free-text review fields feel thorough but produce inconsistent data that neither downstream systems nor learning pipelines can use.

Override Controls (The Safety Nets)

Override controls serve Tier 3 (guided autonomy), where the system executes within guardrails and escalates exceptions. The reviewer's job is to catch what the model missed and reverse it.

An override mechanism fails if the system makes overriding harder than approving. When the AI's recommendation appears as a pre-selected default and reversing it requires three additional screens, reviewers follow the path of least resistance. Your override rate drops, but not because the model improved. Design the interface so that confirming and overriding require equal effort.

The reviewer needs enough context to form an independent judgment: the AI's confidence score, the factors that drove the recommendation, and any flags the system raised. Without this, the reviewer is evaluating a conclusion with no access to the reasoning.

Track override rates as an operational signal. A sustained increase suggests model degradation or a shift in input distribution. A rate near zero on high-volume tasks suggests reviewers are not engaging. Both patterns require investigation.

Audit Controls (The System of Record)

Audit controls span every tier. They are the evidentiary layer that proves your governance operated as designed.

GDPR Article 22 restricts automated decisions that produce legal effects on individuals. The EU AI Act (Article 14) requires that high-risk AI systems include human oversight capable of preventing or minimizing risks. If a regulator or court examines your system, they will not ask whether you had a policy. They will ask for records showing that a specific human reviewed a specific output at a specific time, and had the authority and information to intervene.

Your audit log needs to capture, at minimum: the input data the model received, the model version and configuration, the output produced, the confidence score, the reviewer's identity and decision, the timestamp, and the override rationale when applicable. Log the reasoning chain, not just the final action. A record that says "approved" without showing what was approved and on what basis will not satisfy a regulatory audit.

How the controls connect

Every override should feed into your model improvement pipeline. If reviewers consistently override the same class of output, that pattern tells your ML team where the model's blind spots are. Every approval decision, aggregated over time, provides calibration data: are reviewers modifying staged outputs frequently (suggesting the model needs retraining) or approving them unchanged (suggesting the task may be ready for promotion to Tier 3)? Audit data closes the loop by making these patterns visible and traceable.

The controls are architecturally distinct, but operationally, they form a single feedback cycle. Treat approvals, overrides, and audits as one connected operating loop. The data from each should improve the others.

When Oversight Becomes Performative

You can build every control and still fail. Approval workflows, override mechanisms, and audit logs: none of them protect you if the humans operating them stop exercising independent judgment. This is the failure mode that regulators are already prosecuting.

The SCHUFA precedent

A German credit scoring company, SCHUFA, used an automated system to generate creditworthiness scores. Human intermediaries reviewed the scores before they reached consumers. On paper, the system had human oversight. In practice, the reviewers passed scores through without influencing the determination. An EU court ruled that this constituted "solely automated decision-making" under GDPR Article 22, which prohibits such decisions without specific legal justifications. The human review existed in the architecture, but it did not exist in operation. SCHUFA lost its legal protection because the oversight had become performative.

This is not an edge case. Any system where approval rates approach 100% and review times drop below the threshold needed for genuine assessment is exhibiting the same pattern. The difference between SCHUFA and your system is that SCHUFA was found out in court.

How bias degrades each control type

Automation bias attacks the controls from Section 3 in predictable ways. Approval controls degrade when reviewers start confirming staged outputs without evaluating the underlying factors. Override controls degrade when the system makes the AI's recommendation the default and overriding requires disproportionate effort; override rates collapse, but not because accuracy improved. Audit controls degrade when every record shows "approved" with no modifications, making the logs indistinguishable from a system with no human review at all. That last pattern is exactly what cost SCHUFA its legal defense.

Detection before prevention

You cannot fix what you do not measure. Before investing in training programs or interface redesigns, instrument your oversight layer. Track approval rates over time. Measure median review duration per task type. Monitor override frequency as a percentage of total reviews. Flag reviewers whose agreement rate stays above 97% across a meaningful sample of cases.

These signals give you an early warning. If a reviewer approves 200 Tier 2 loan decisions in a shift with a median review time of 8 seconds, that reviewer is not reading the risk factors. You now have evidence that the review process is degrading, rather than a vague sense that people are checking less carefully.

Designing for engagement

Detection tells you the problem exists. Interface design determines whether it recurs. Three practices reduce automation bias at the point of review.

First, require the reviewer to assess inputs before revealing the AI's conclusion. If the interface shows the recommendation first, the reviewer anchors to it and evaluates backward from the answer. Reversing the sequence forces independent assessment.

Second, match reviewer capacity to volume. When a team responsible for Tier 2 approvals is understaffed, review times compress and approval rates rise. This is a staffing and capacity planning problem, not a training problem. No amount of calibration training overcomes a reviewer who has 45 seconds per case.

Third, rotate reviewers across task types. Familiarity with a narrow output pattern accelerates the slide into automatic approval. Rotation disrupts that pattern and maintains cognitive engagement.

HITL in production: AI-augmented radiology across 12 sites

In one diagnostic imaging deployment across 12 centers, the network processed more than 500 chest CT scans a week and had already tried commercial AI tools that clinicians largely ignored. False positive rates were high enough that AI findings added work instead of removing it. A majority of clinicians reported dismissing AI outputs without review. The oversight had become performative.

The Codebridge team that built the replacement platform structured it around the tier model from this article. AI inference produces nodule detection overlays with malignancy probability, volumetric measurements, and prior-study comparisons.

All findings appear as toggleable overlays inside the radiologist's existing viewer. The radiologist drives every interpretation. For the smart triage queue, which ranks studies by urgency, the system operates at Tier 3: routing autonomously, escalating ambiguous cases for human review.

The control layer maps to Section 3. No AI annotation reaches a final report without radiologist confirmation. Every dismissed or modified finding is logged with the clinician's rationale. Audit records capture model version, confidence score, clinician identity, and override status on every case, aligned with IEC 62304 traceability and a planned FDA 510(k) pathway. A governance dashboard tracks agreement rates, override rates, and false positive trends across all sites, using the detection signals described in Section 4.

Results after nine months:

- Average CT reading time dropped from 15.2 to 9.4 minutes, a 38% reduction.

- Nodule detection sensitivity held at 96% for sub-4mm lesions across 2,400 validated scans.

- False positives fell from 4.1 to 0.4 per scan.

- The radiologist trust score, measuring the percentage of clinicians who routinely review AI findings, rose from 27% to 89%.

The trust recovery matters most. The previous tools failed because the deployment architecture ignored governance. When HITL controls gave clinicians genuine authority over AI outputs and made that authority frictionless to exercise, engagement returned. The governance layer did not slow the system down. It made the system trustworthy enough to use.

Conclusion: What to do with this?

GPT-4 hallucinates on 58% of verifiable legal questions. SCHUFA lost its legal protection because its human reviewers approved scores without examining them. These are not outlier failures. They are the predictable result of deploying AI in regulated environments without a governance architecture designed for the risk.

This article laid out a specific framework for avoiding both failure modes. Classify each task by risk tier. Assign the control mechanism that tier requires: approvals for high-risk execution, overrides for guided autonomy, and audits across everything. Instrument your oversight layer so you can detect when reviewers stop engaging before a regulator detects it for you.

Start with your highest-liability workflow. Map each step to a tier. Identify where a human decision currently exists and assess whether that decision is genuine or performative. If your approval rates are near 100% and review times are measured in seconds, your governance is architectural fiction. Fix that workflow first. Then expand the framework outward.

The organizations that scale AI in regulated domains will do it by earning trust incrementally, promoting tasks from tighter to looser oversight as performance data justifies the move, and pulling them back when it doesn't. Governance is not a constraint on your system's capability. It is the mechanism that allows the capability to expand.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript