AI models now generate full features, workflows, and services that ship to production. If your engineering team uses Copilot, Cursor, or an agentic coding assistant, a growing share of your codebase was written by a model, reviewed (to varying degrees) by a human, and deployed through your existing pipeline.

That pipeline was designed for human-written code. The review process, the CI checks, and the approval gates all assume a developer who understands the trade-offs behind their design decisions. AI-generated code carries no such assumption. It optimizes for functional correctness on the happy path, and it does so at a volume that outpaces most teams' ability to verify it.

A large-scale evaluation across state-of-the-art models found that at least 62% of generated programs were vulnerable to common weaknesses (CWEs). Google Cloud's DORA research adds a useful frame: AI amplifies whatever engineering discipline already exists. Strong teams get faster. Weak fundamentals get worse, faster.

This makes AI-generated code a governance problem. Your SDLC needs to account for code that is produced at machine speed, carries no authorial intent, and defaults to plausible over secure. This article lays out a control framework for doing that: policy, specifications, sandboxing, review tiers, and CI enforcement.

Why AI-Generated Code Breaks Traditional Code Review

Code review has always relied on three implicit assumptions: the author had intent behind their design choices, they understood the domain, and they could explain the trade-offs they made. AI-generated code satisfies none of these. The model has no awareness of your system's invariants, no memory of past architectural decisions, and no accountability for its output. When a reviewer opens a PR, they're evaluating output that looks like a senior engineer wrote it but carries none of the reasoning that would normally back it up.

The Shift in Review Economics

Your team can now generate code at a pace that your review process was never designed to absorb. A single developer using an agentic assistant can open more PRs in a day than a team used to produce in a sprint. The constraint is "how fast can a qualified reviewer verify that this code is correct, secure, and maintainable?"

In practice, this means your most experienced engineers become the bottleneck. PR queues back up. Review depth drops as volume increases. Teams start pattern-matching on surface quality ("the code looks clean, the tests pass") instead of reasoning about behavior, edge cases, and failure modes. Generation speed creates the illusion of throughput while verification quality degrades.

Review Fatigue as a Security Vulnerability

The danger is not only the presence of vulnerable code but the erosion of the review process itself. When code is cheap to produce, the perceived cost of reviewing it drops, too.

Developers "vibe code" entire features, scaffolding applications from model output with minimal manual inspection. Reviewers, facing a growing queue of polished-looking diffs, start skimming. Approvals become shallow, and the rubber-stamp replaces the review.

This is where the security exposure compounds. The code that gets waved through isn't obviously broken. It passes linting. It has tests. It handles the happy path. But it may also contain hardcoded credentials, unparameterized queries, or dependencies that don't exist yet (a risk we'll cover in the next section).

Accountability Shifts to Controls

You can no longer assume that the person who opened the PR fully understood the code in it. That doesn't eliminate the need for human ownership. Every AI-generated change still needs a named owner who can explain its logic and maintain it over time. But ownership alone isn't a sufficient safeguard when the owner didn't write the code and may not have deeply reviewed it.

The practical answer is risk-tiered review. Low-risk changes (test generation, boilerplate, documentation, internal tooling) can follow an expedited path with strong automated checks. High-risk changes (authentication flows, payment logic, infrastructure configuration, anything touching regulated data) require named human reviewers with domain expertise and explicit security sign-off.

OWASP's guidance is clear, saying your team is responsible for all committed code, regardless of whether a human or a model wrote it. The review tier determines how you discharge that responsibility.

Security Risks in AI-Generated Code: What Fails in Practice

AI-generated code fails differently from human-written code. When a developer introduces a vulnerability, it's usually traceable to a reasoning error, a knowledge gap, or a shortcut under time pressure. When a model introduces one, the code still looks well-structured, passes basic checks, and handles the happy path. The failure is hidden behind plausibility.

Understanding where AI-generated code breaks helps you design controls that target the right risks. Some of these are familiar vulnerabilities at higher volume. Others are categories that didn't exist before models started writing production code.

Insecure Code Patterns Inherited from Training Data

Models learn from public code, and public code is full of known vulnerabilities. AI-generated output frequently reproduces SQL injection patterns (CWE-89), cross-site scripting (CWE-79), and hardcoded credentials (CWE-798) because those patterns appear throughout the training data in code that otherwise functions correctly. The model isn't choosing an insecure approach. It's reproducing what statistically follows from the prompt, and insecure patterns are well-represented in the corpus.

This makes AI-generated vulnerabilities harder to spot than human-introduced ones. A developer who hardcodes a credential is usually cutting a corner they know about. A model that hardcodes a credential produces code that looks identical to a deliberate, considered implementation. The diff looks clean. The review has to go deeper.

Stale Dependencies and the AI Model Temporal Gap

Models have a training cutoff. When they suggest a library version, they suggest one that was current and safe at training time. If that version has since been flagged with a critical CVE, the model has no way to know. Your CI pipeline pulls in a dependency that was a reasonable choice six months ago and a known vulnerability today. This "temporal gap" compounds with each month between model training and production use.

Dependency Hallucinations and Slopsquatting in AI Coding

This is one of the more dangerous risks specific to AI-generated code. Models sometimes suggest packages that don't exist. A model might recommend fastapi-security-helper as an import. The package has never been published. But the hallucination is consistent: multiple users, across multiple sessions, get the same suggestion.

Attackers have learned to exploit this. They monitor common hallucinated package names and register them on public registries like npm or PyPI, with malicious payloads inside. When your developer accepts the model's suggestion and your build pulls the dependency, you've installed attacker-controlled code into your environment through your own CI pipeline. No exploit required. The model provided the supply chain entry point.

Prompt Injection Risks in Agentic AI Coding Workflows

As AI assistants gain the ability to read files, browse repositories, and execute commands, a new class of attack opens up. Traditional security separates instructions from data. LLMs don't. They process a code comment, a README, and a user prompt through the same context window with no privilege boundary between them.

In an indirect prompt injection, an attacker embeds instructions in a file the agent will read: a README, a docstring, a configuration comment. The agent treats the embedded instructions as context and acts on them. It might exfiltrate environment variables, modify configuration files, or push changes the attacker designed. The agent follows the injected instructions because it has no mechanism to distinguish them from legitimate ones.

Shadow AI: Data Exposure Risks in AI-Assisted Development

Separate from agent-level risk, your developers are making daily decisions about what context they feed into AI tools. When someone pastes proprietary source code, internal API schemas, or customer data into a public model's chat interface, that input may be used in future training. Your internal logic, naming conventions, and system architecture become part of a public model's knowledge. This is an operational data-governance gap that most teams haven't addressed with a clear policy.

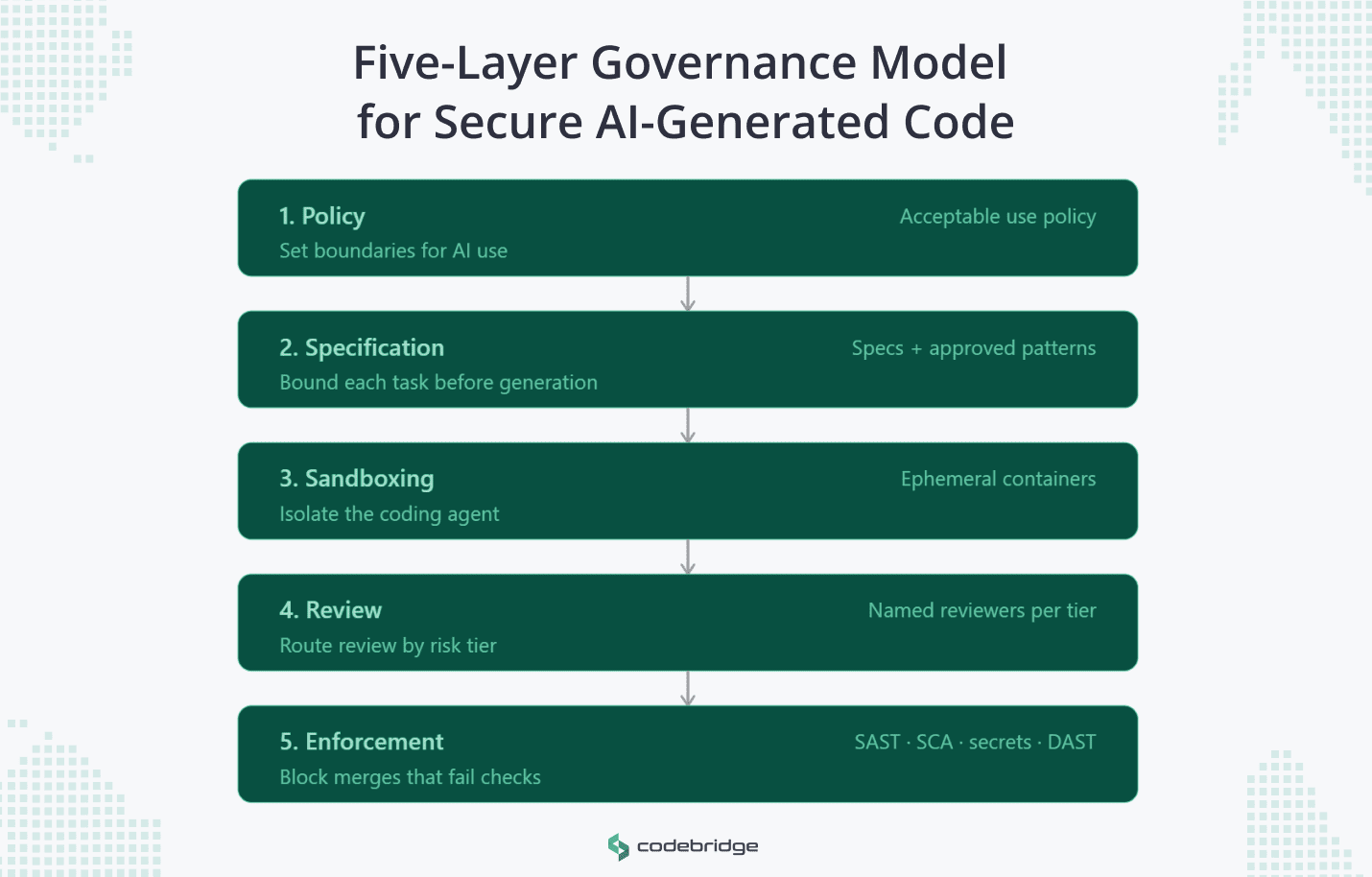

A Five-Layer Governance Model for Secure AI-Generated Code

Securing AI-generated code requires governance at five layers: policy, specification, sandboxing, review, and enforcement. Each layer solves a different problem, and skipping any one of them creates a gap that the others can't compensate for.

5.1 The Policy Layer: Define where AI may and may not be used

Before your team writes a single AI-assisted line, you need an Acceptable Use Policy (AUP) that draws clear boundaries around where AI generation is permitted, where it's restricted, and where it's prohibited.

A useful AI-use policy answers three questions:

- Which tasks are open for AI generation with standard review? Test scaffolding, documentation, boilerplate, and internal tooling typically fall here.

- Which domains require additional controls? API integrations, data transformations, and business logic that touch external systems are common candidates.

- Where is AI generation off-limits entirely? Cryptographic implementations, regulatory compliance logic, deployment automation, and anything involving secrets management should be restricted zones where human authorship is required.

The policy also needs to address data boundaries: what context developers can and cannot feed into AI tools. Proprietary source code, customer data, internal schemas, and cloud credentials should have explicit handling rules. Without this, your shadow AI exposure (covered in the previous section) remains an unmanaged risk.

5.2 The Specification Layer: Bound the work before generation

The single highest-leverage control you can apply to AI-generated code is giving the model a well-defined specification before it writes anything. When requirements are vague and architecture is unstated, the model improvises. In a production system, improvisation creates risk.

A useful spec for AI-assisted work includes the task scope and architectural constraints (which components are involved, which technologies are approved, how they interact), security requirements and approved patterns (parameterized queries, secrets loaded from environment variables, authentication via your existing identity provider), and acceptance criteria with risk classification (what "done" looks like, and how sensitive the change is).

This is what GitHub's spec-driven development model formalizes: the spec becomes the shared source of truth that constrains both the model and the reviewer. Teams that embed non-negotiable security principles (drawn from CWE/MITRE) directly into the specification layer have reported a 73% reduction in security defects compared to unconstrained generation.

5.3 The Sandboxing Layer: Isolate generation and execution

Your AI coding agent should never run with the same access as your developers. The specification bounds what the model is asked to do. The sandbox bounds what it's able to do.

In practice, this means running AI agents in ephemeral containers with no persistent state, restricted network access (limited to internal registries and approved endpoints), read-only access to the repository by default with write access scoped to a specific branch or directory, no access to production credentials, secrets stores, or deployment pipelines, and resource limits (CPU, memory, execution time) that prevent runaway processes.

This layer is your containment boundary. If an agent is compromised through prompt injection or suggests a malicious dependency, the sandbox limits the blast radius. Without it, a single compromised generation step can reach your build pipeline, your secrets, or your infrastructure.

5.4 The Review Layer: Make review risk-based, not uniform

Applying the same review process to every AI-generated change will either slow your team to a crawl or erode review quality through volume fatigue. The answer is risk-tiered review.

Low-risk changes (test generation, documentation updates, boilerplate, internal tooling) follow an expedited path. Automated checks handle the bulk of verification. A lightweight human review confirms the output is reasonable. These changes should flow quickly because holding them to the same standard as security-critical code wastes reviewer attention.

The risk classification should be defined in your policy (layer 5.1) and applied consistently. If a team has to decide the review tier on a case-by-case basis for each PR, the system depends on individual judgment under time pressure, which is exactly the failure mode you're trying to eliminate.

5.5 The Enforcement Layer: CI gates make governance real

Policy, specifications, review tiers: none of these matters if a developer can bypass them by merging directly. The enforcement layer makes governance mechanical. If a check doesn't pass, the code doesn't merge. No exceptions, no overrides without an auditable escalation path.

For AI-generated code, four categories of automated checks are essential.

- Static Application Security Testing (SAST): Scanned during editing and in the PR workflow to catch logic flaws as they are generated.

- Software Composition Analysis (SCA): Essential for mapping transitive dependencies and detecting "phantom" packages introduced through hallucinations.

- Secrets Detection: ML-enhanced scanning that analyzes the mathematical entropy of strings to differentiate between real keys and benign placeholders.

- Dynamic Application Security Testing (DAST): Vetting the application's runtime behavior by simulating real-world attacks to ensure it matches the intended security posture.

These four checks should be mandatory for all AI-assisted code paths and non-bypassable without a documented exception approved by a named security owner. The CI gate is where your governance framework either holds or fails.

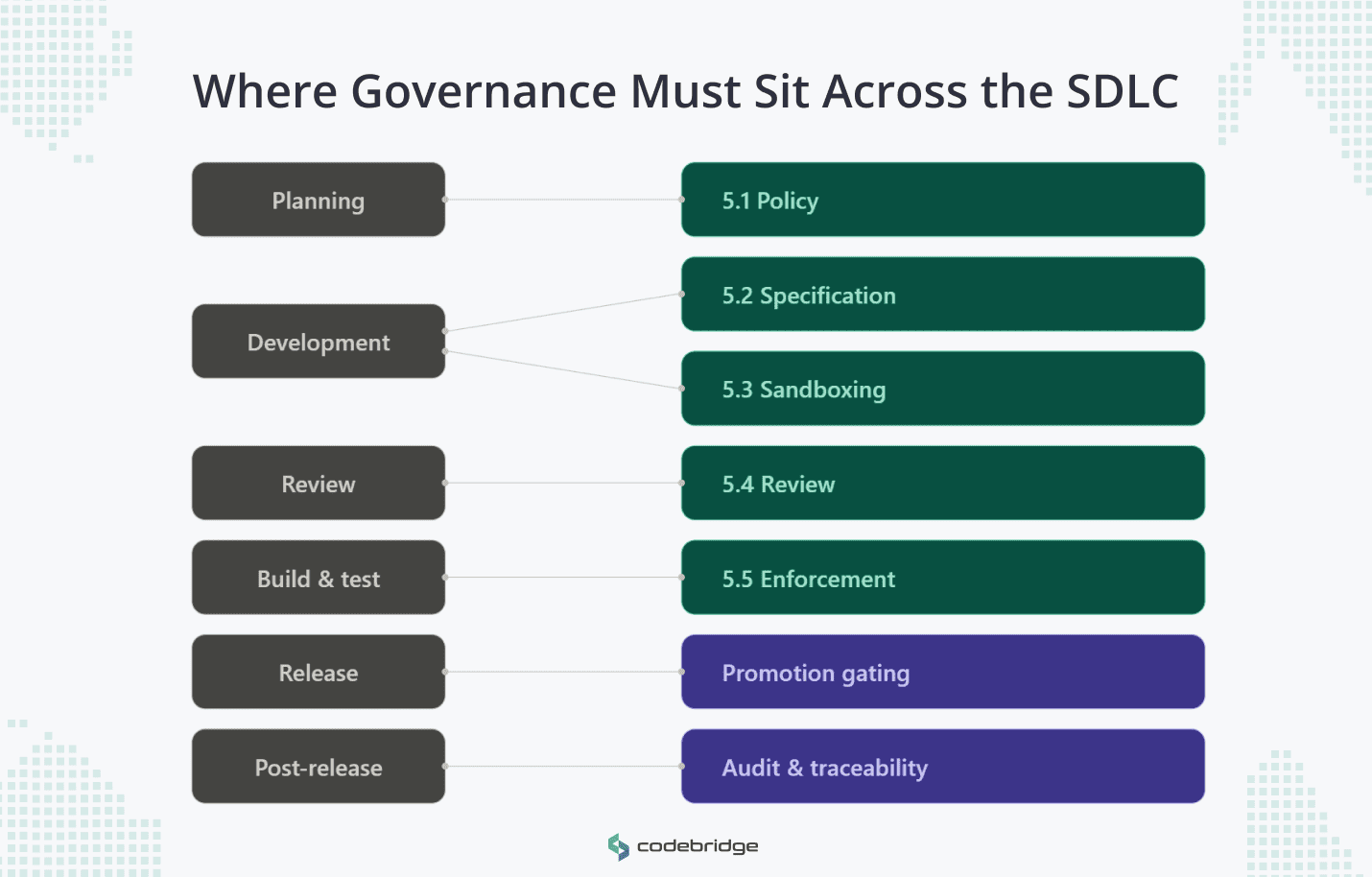

AI Code Governance Across the SDLC: Phase-by-Phase Controls

The five control layers map onto specific SDLC phases. This aligns with the structure NIST's Secure Software Development Framework (SSDF) recommends: governance at every stage, not bolted on at the end.

- Planning: Policy Layer (5.1). Define where AI generation is permitted, restricted, or prohibited. Classify change types by risk tier.

- Development: Specification Layer (5.2) + Sandboxing Layer (5.3). Bound each task with an explicit spec. Isolate the agent's execution environment.

- Review: Review Layer (5.4). Route PRs by risk tier. Low-risk changes follow the expedited path. High-risk changes require named reviewers with domain expertise and security sign-off.

- Build and Test: Enforcement Layer (5.5). SAST, SCA, secrets detection, and DAST gate the merge. No passing checks, no merge.

- Release: Promotion gating. High-risk changes require explicit promotion approval from a named owner at each stage (staging, production). Lower-risk tiers can promote automatically with passing gates.

- Post-Release: Audit and traceability. Log which model generated the code, what spec it was generated against, who reviewed it, what checks it passed, and when it was promoted. For agentic workflows, store the full agent-server interaction (prompts, tool calls, outputs) in an immutable log. These records are your forensic path during incidents and your documentation for SOC 2, ISO 27001, or any compliance framework requiring code-change traceability.

The first four phases are covered by the governance model. Release and post-release extend it into territory most teams haven't formalized yet for AI-assisted code.

Executive Checklist for Scaling Secure AI-Assisted Development

If you're expanding AI-assisted development beyond a few early adopters, these seven questions will tell you whether your governance is ready.

If you can answer yes to all seven, you have a governance framework. If not, the gaps tell you where to start.

AI-generated code will keep getting better, faster, and more autonomous. The teams that benefit from that trajectory are the ones whose specifications, review processes, and CI gates are already designed for machine-speed output. Build the governance now, while the volume is still manageable. Retrofitting it later, under pressure, is how controls get compromised.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript