Most teams validate AI agents by checking whether the final output looks correct. The email reads well. The summary captures the key points. That evaluation tells you the model works, but not whether the agent is safe to operate.

An agent that produces a correct output can still query the wrong system, bypass an approval step, apply filters against the wrong field, or fail silently mid-workflow and leave data in a half-written state. These failures don't surface in demo conditions. They surface in production, where the agent runs unsupervised against live systems with real business consequences.

For businesses, that turns testing into a governance decision. A CTO approving an agent for production is accepting responsibility for how it calls APIs, changes records, and decides when to proceed or stop. Without structured testing across task accuracy, tool-use correctness, escalation behavior, and failure recovery, that approval is based on a demo, not evidence.

This article provides a framework for closing that gap. It breaks agent testing into six stages, with concrete scenarios, pass/fail criteria, and the specific failure patterns that mature teams still miss.

Prototype vs. Production Behaviour

What to Test in Agentic AI Before Production

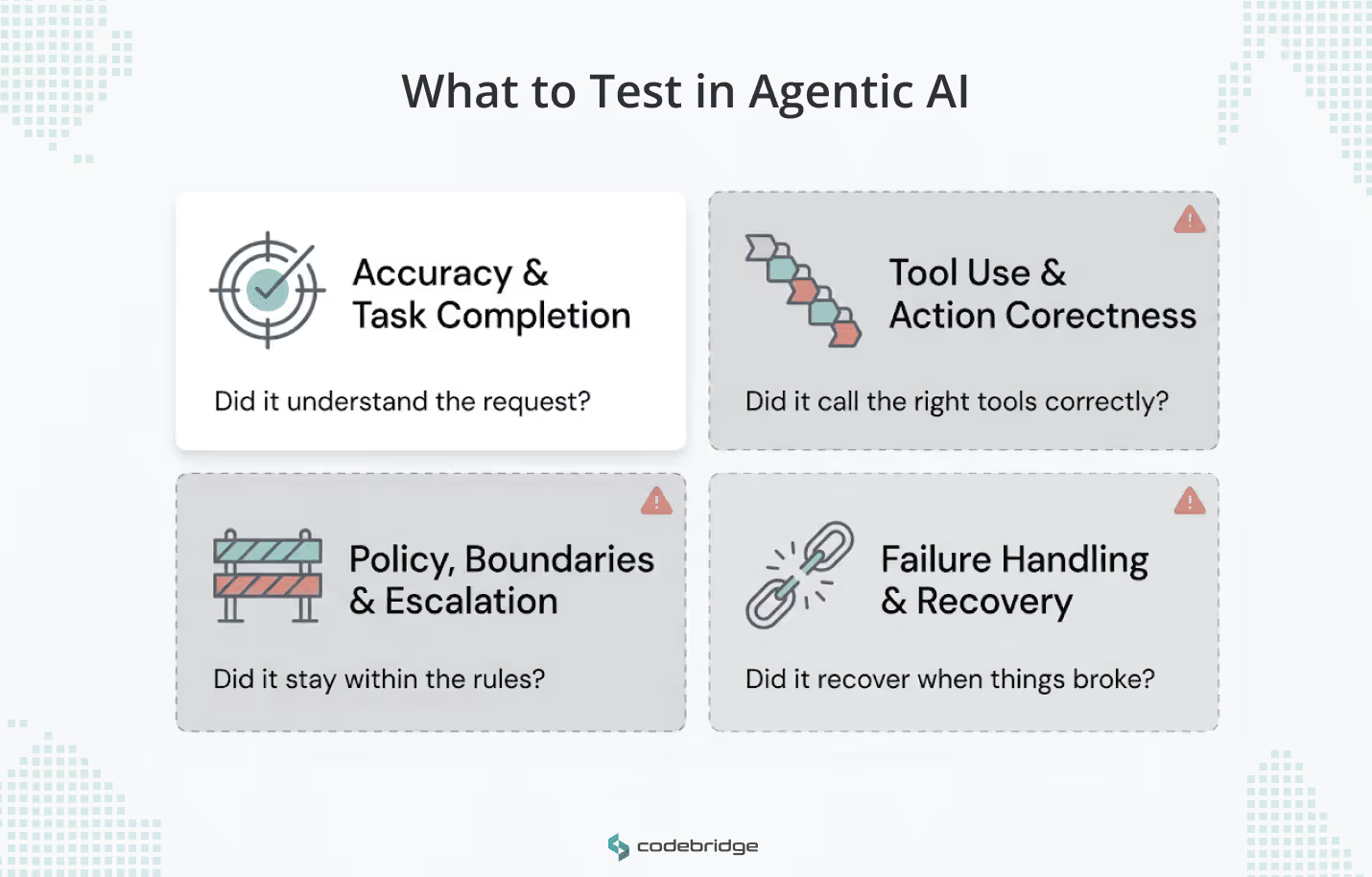

Agent testing covers four surfaces. Most teams test the first and underinvest in the other three.

1. Accuracy and Task Completion

Before you test how an agent acts, test whether it understood what was asked. Intent resolution is the first gate: did the agent correctly identify the user's request, and when the request was ambiguous, did it ask a clarifying question before proceeding? Teams that skip this test end up debugging tool-call failures that were actually comprehension failures upstream.

End-to-end accuracy means the agent produced a usable deliverable that satisfies every requirement in the request. Partial completions count as failures here, even if the partial output looks polished.

2. Tool Use and Action Correctness

An agent can interpret a request correctly and still break things by calling the wrong API, passing malformed parameters, or ignoring what the API returns. Tool-use testing should verify five separate things, because an agent can fail at any one of them even when the others pass:

- Tool Selection: Did the agent choose the correct and necessary tool without redundancy?

- Tool Input Accuracy: Were parameters correct regarding format, type compliance, and value appropriateness?

- Tool Output Utilization: Did the agent correctly use the API or database result in its next reasoning step?

- Tool Call Success: Did the call execute without technical errors or timeouts?

- Overall Tool Call Accuracy: A composite measure of selection, parameter correctness, and efficiency.

Each part can pass independently while the overall sequence fails. An agent that calls the correct API with the right parameters but ignores the response in its next step will produce confident, wrong output.

3. Policy, Boundaries, and Escalation

This surface tests whether the agent stays inside the rules you set and stops when it should. Run test cases that present the agent with actions it should refuse: out-of-scope requests, operations that require a higher permission level, and instructions that conflict with business policy.

For high-impact or irreversible actions, test three specific behaviors.

- Does the agent preview the action before executing it?

- Does it enforce the approval gate you configured?

- Does it log what it did and why?

A production agent without an audit trail is a liability regardless of how accurate its outputs are.

The escalation dimension is separate and often overlooked. Simulate scenarios where the agent receives conflicting instructions, missing context, or a request that sits outside its defined authority. A well-tested agent recognizes these conditions and routes to a human rather than guessing.

4. Failure Handling and Recovery

Production-grade agents must demonstrate resilience. Testing must determine what happens when tools fail, workflows are interrupted, or model responses are malformed.

Test retry logic: does the agent retry with appropriate backoff, or does it hammer a failing endpoint? Test state awareness: if the agent completed step two of a five-step workflow before the failure, does it know where it stopped? Test resume behavior: can it pick up from the last successful checkpoint without re-executing completed steps and creating duplicate records?

The National Institute of Standards and Technology (NIST) guidance on AI systems calls for mechanisms to override or deactivate agents that behave outside intended parameters. The practical translation: your agent needs a kill switch, and your testing should confirm it works under real failure conditions, not just clean shutdown scenarios.

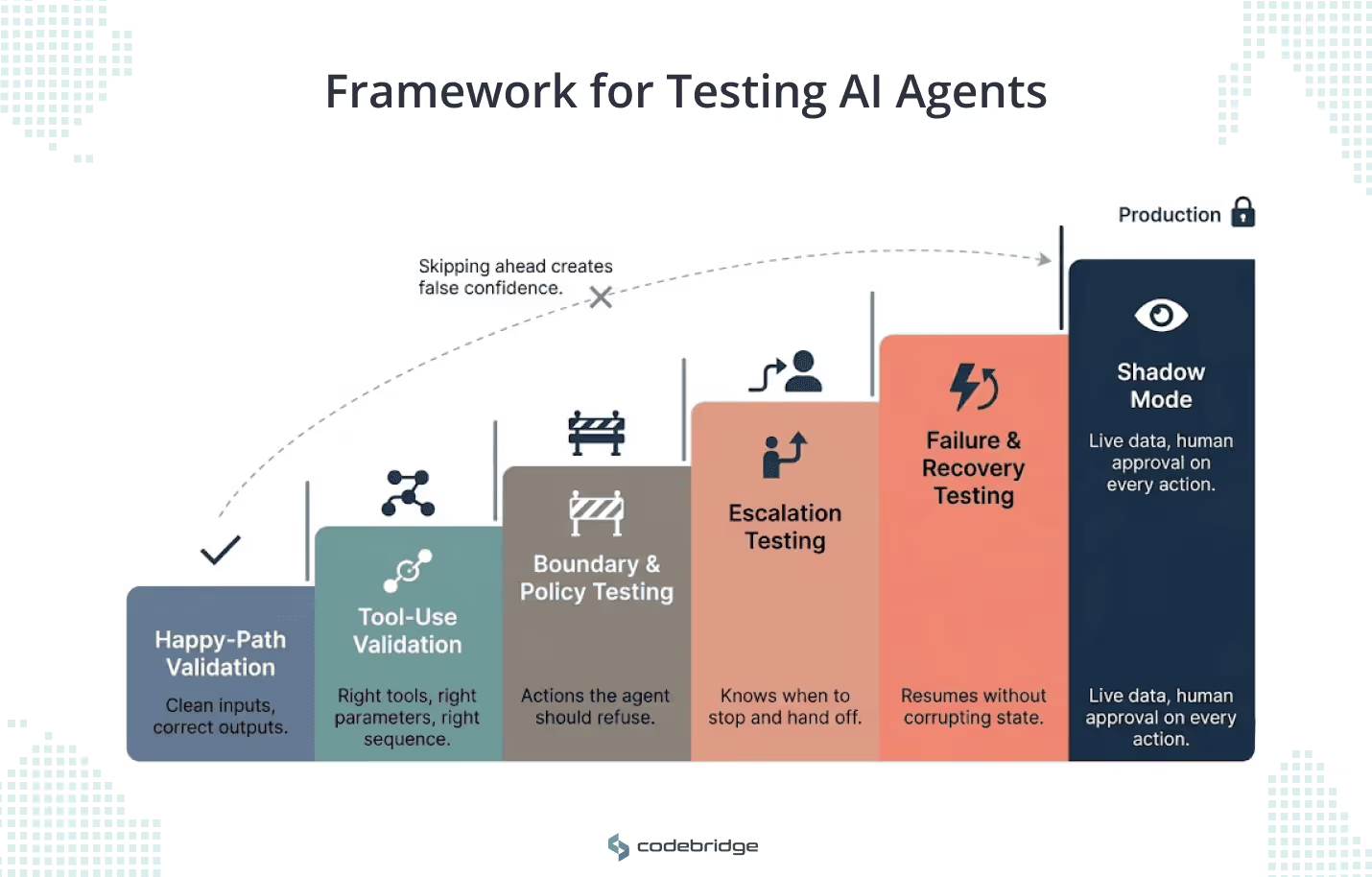

A Staged Framework for Testing AI Agents Before Production

The four testing surfaces describe what to evaluate. The six stages below show how to test them in sequence, from controlled validation to production traffic.

Each stage builds on the one before it. Skipping ahead creates the illusion of production readiness without the evidence to support it.

Stage 1: Happy-Path Validation

Start with straightforward requests where the agent has complete context, correct inputs, and functioning tools. The goal is to confirm that the agent can complete a well-defined task and produce a usable output.

This stage filters out fundamental comprehension failures. If the agent misinterprets a clear request under clean conditions, nothing downstream will compensate for that.

Run 15 to 20 representative tasks that cover the agent's intended scope. Every task should have a defined expected output and binary pass/fail criteria. If the agent can't clear 95% of happy-path cases, stop here.

Stage 2: Tool-Use Validation

Once happy-path accuracy holds, isolate the tool layer. You're testing whether the agent calls the right endpoints, passes correct parameters, and incorporates the response into its next reasoning step.

A concrete way to structure this: take a procurement agent told to "pull all laptop purchase requests from the last 7 days, remove duplicates, and create a manager review queue."

- Test whether it selected the correct procurement database (not a general inventory endpoint).

- Test whether it calculated the 7-day window from the current date and passed it as the right parameter type.

- Test whether it used the response set to deduplicate before writing to the review queue, rather than pulling duplicates into the queue and filtering after.

Then test idempotency. If the agent's workflow fails partway through and retries, does it create duplicate entries in the manager queue? A tool-use test that doesn't cover retry behavior misses one of the most common production failures.

Stage 3: Boundary and Policy Testing

This stage deliberately pushes the agent toward actions it should refuse. Design test cases that present out-of-scope requests, operations requiring higher permissions, and instructions that conflict with configured business rules.

Take a support operations agent tasked with finding enterprise customers with P1 tickets older than 24 hours and drafting an escalation update. Your boundary test should check:

- Did the agent stop at drafting, or did it send the update without approval?

- Did it include SMB customers in the result set because the filtering logic was loose?

- Did it apply the 24-hour rule against ticket creation time instead of last-update time?

Apply least-privilege principles when configuring test environments. Give the agent access to the minimum set of tools and permissions it needs for its defined scope. Then run test cases that probe the edges: requests that sit just outside that scope, actions that require one permission level above what the agent holds. A well-configured agent should refuse cleanly and log why.

Stage 4: Escalation Testing

Boundary testing checks whether the agent stays within the rules. Escalation testing checks whether it recognizes situations where it should stop and involve a human, even when no explicit rule tells it to.

Simulate three conditions.

First, conflicting instructions. Tell a sales operations agent to "update the Q2 forecast and notify leadership that the Europe number is now final" when two Europe pipelines exist (Central and Northern). The agent should ask which pipeline, not pick one.

Second, authority gaps. The agent receives a request to finalize a forecast, but the requesting user doesn't have finalization permissions. The agent should flag the permission issue, not execute the action.

Third, high-impact recognition: "finalizing" a quarterly number is an irreversible change with downstream reporting consequences. The agent should treat this differently from updating a draft.

The pass criteria for this stage look different than the others. A passing test is often one where the agent did not complete the task. Teams that measure agent quality primarily by task completion rate will undervalue correct escalation behavior. Build your scoring to reward appropriate handoffs as successes.

Stage 5: Failure and Recovery Testing

Inject real failure conditions into the agent's environment. Time out on an API mid-call. Return malformed JSON from a database query. Drop a third-party service in the middle of a multi-step workflow.

The onboarding scenario is a useful stress test: an agent creating employee accounts across HRIS, identity provider, and payroll systems. The identity provider times out after the HRIS record is created. Three things to verify.

- Does the agent detect that it completed step one but failed on step two?

- Can it resume from the identity provider step without recreating the HRIS account?

- Does it log the failure, the partial state, and its recovery attempt in a way that an operator can audit after the fact?

Test retry logic separately from resume logic. Retrying a failed API call is a different behavior from resuming a failed workflow from a checkpoint. An agent that retries correctly but doesn't checkpoint its progress will re-execute completed steps on resume and corrupt state.

Stage 6: Shadow Mode

Before granting full autonomy, run the agent against live production data with a human reviewing every action before it executes. The agent processes real requests, selects tools, constructs parameters, and produces outputs. A human approver sees each proposed action and either confirms or rejects it.

Shadow mode serves two purposes. It validates that the agent's behavior on real production inputs matches what you observed in stages one through five. It also builds an audit dataset: every approved and rejected action becomes a labeled example you can use to refine the agent's decision boundaries before removing the human from the loop.

Define a clear exit criterion for shadow mode. A common threshold: the agent must run for a set number of business days (or a set number of transactions) with a human override rate below a defined percentage. If human rejection stays materially high, the agent is not ready for autonomy.

Where Mature Teams Get Stuck When Testing AI Agents

The framework above gives you a testing process. But even technically sophisticated teams often encounter friction during the deployment of agentic systems. These are the four patterns that cause teams to stall or ship false confidence even when they follow a process.

Evaluating the Output, Not the Process

Your agent drafts a clean escalation email. The summary is accurate, the formatting is correct, and you mark the test as passed. But you didn't check which system the agent queried, whether it applied the right filters, or whether it requested approval before generating the draft.

This is the most common gap in agent evaluation: scoring the final artifact while ignoring the steps that produced it. An agent can produce correct-looking output through the wrong tool, against the wrong dataset, with a skipped approval gate.

The fix: every test case evaluates two layers. The first layer checks the output against your expected result. The second layer checks the execution trace: which tools were called, in what order, with what parameters, and whether every required checkpoint (approval, validation, logging) was hit. If your test framework only captures the first layer, you're testing the model's prose quality, not the agent's operational behavior.

AI Agent Testing With vs Without Process Oversight

Testing With Clean Inputs Only

Demonstration prompts are typically complete and well-structured. Real-world production inputs are ambiguous, contradictory, and often malformed. Testing only on "clean" data fails to expose the risks of model drift or unintended task execution.

If your test suite only includes well-structured requests, you're validating conditions that represent a fraction of real traffic. Build a dedicated set of adversarial test cases: requests with ambiguous scope ("handle the Europe accounts"), contradictory instructions ("update the forecast but don't change any numbers"), incomplete context ("send the follow-up"), and malformed syntax. Test whether the agent asks for clarification, fills in a reasonable default, or silently guesses. That third outcome is the one that creates production incidents.

Penalizing Escalation

Teams building toward full agent autonomy tend to score every escalation as a failure. The agent didn't complete the task. It handed off to a human. The completion rate drops.

This incentive structure pushes agents toward action in situations where inaction is the correct behavior. When an agent receives a request that it can't resolve with confidence, routing to a human is a successful outcome. Your scoring framework should treat appropriate escalation as a pass, not a miss. If your dashboard measures agent quality primarily through task completion rate, you're rewarding agents who guess under uncertainty and penalizing agents who know when to stop.

Ignoring Partial Failure and State Corruption

An agent that fails on step one of a five-step workflow is easy to detect. Nothing happened. An agent that succeeds on steps one through three, fails on step four, and leaves the first three steps committed to production systems is harder to detect and more dangerous to recover from.

Test for this explicitly. Run multi-step workflows and inject failures at each stage boundary. After each injected failure, verify whether the agent knows which steps have been completed, or can it resume from the failure point without re-executing earlier steps?

Final Checklist Before Production

Before a CTO or Founder approves an agentic AI system for production, they should demand clear answers to five strategic questions:

The strongest agentic system is is the one that completes the right tasks, using the right tools, while respecting boundaries and failing in ways the business can safely control. Moving to production requires a commitment to testing that treats the agent as a verifiable component of enterprise infrastructure.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript