AI has become a core product decision for EdTech companies. Founders and CTOs now face a specific question: which AI capabilities improve learning outcomes and operational efficiency at a sustainable cost, and which ones add complexity without improving the product?

The distinction matters because the evidence is starting to split. UNESCO and the U.S. Department of Education both treat generative AI as a capability that demands governance — UNESCO calls for immediate policy action, and the Department of Education warns about bias, privacy exposure, and over-reliance on automation.

This signals that shipping AI features without pedagogical structure and data controls creates regulatory and quality-of-outcome risk that compounds over time.

This piece breaks down where AI adds measurable value in EdTech, what risks you need to govern before deployment, and a phased rollout sequence built around operational readiness.

Why AI in EdTech Is a Product Strategy Decision

Most EdTech companies are shipping AI features under competitive pressure. Investors expect it. Sales teams need it on the feature list. But the gap between "we have AI" and "our AI improves outcomes without creating new liabilities" is where product strategy lives, and most teams haven't closed that gap yet.

Start with what the evidence shows about learning quality. OECD research on GenAI in education surfaced a pattern that should shape how you scope every AI feature: students using GenAI with structured pedagogical guidance showed real learning gains, while students using the same tools without that structure produced better-looking work but learned less. The outputs improved; the understanding didn't. If you're selling to school districts or universities, that finding is a renewal risk. Institutions are starting to ask whether AI features help students learn or help students skip learning. You need an answer backed by your product design, not your marketing copy.

Then look at the cost structure. AI features carry inference costs, model management overhead, and integration complexity that traditional software doesn't. A naive implementation, wrapping an LLM API call around an existing workflow, might demo well, but it introduces per-interaction costs that scale with usage rather than with revenue. If you haven't modeled how inference costs behave at 10x your current user base, you're building a margin problem into your product.
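A back-of-the-envelope model makes this concrete. The Python sketch below is illustrative only: every input (users, interactions per user, tokens per interaction, a blended price per million tokens, per-seat revenue) is a hypothetical placeholder rather than a benchmark, and real provider pricing usually differs for input and output tokens.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class UsageAssumptions:
    # Hypothetical placeholders: replace with your own telemetry and provider pricing.
    users: int
    interactions_per_user_per_month: float
    tokens_per_interaction: float
    price_per_million_tokens: float      # blended input/output price, USD
    revenue_per_user_per_month: float    # e.g. a per-seat license, USD


def monthly_inference_cost(a: UsageAssumptions) -> float:
    """Total token spend per month in USD."""
    tokens = a.users * a.interactions_per_user_per_month * a.tokens_per_interaction
    return tokens / 1_000_000 * a.price_per_million_tokens


def inference_share_of_revenue(a: UsageAssumptions) -> float:
    """Fraction of monthly revenue consumed by inference."""
    return monthly_inference_cost(a) / (a.users * a.revenue_per_user_per_month)


def scaled(a: UsageAssumptions, user_multiplier: float,
           engagement_multiplier: float) -> UsageAssumptions:
    """Grow the user base and per-user engagement; per-seat revenue stays flat."""
    return replace(
        a,
        users=round(a.users * user_multiplier),
        interactions_per_user_per_month=a.interactions_per_user_per_month * engagement_multiplier,
    )


if __name__ == "__main__":
    today = UsageAssumptions(10_000, 40, 2_000, 2.0, 5.0)
    future = scaled(today, user_multiplier=10, engagement_multiplier=3)
    for label, a in (("today", today), ("10x users, 3x engagement", future)):
        print(f"{label}: ${monthly_inference_cost(a):,.0f}/month, "
              f"{inference_share_of_revenue(a):.1%} of revenue")
```

With these made-up inputs, monthly inference spend grows from about $1,600 to $48,000 while its share of revenue triples. Adding users alone would scale cost and per-seat revenue together; it is heavier use per student, with no matching revenue, that erodes the margin.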

Finally, consider regulation. FERPA governs how student data flows through any system touching U.S. education. State-level laws like California's SOPIPA add restrictions on how student PII can be used, shared, and stored. The EU AI Act classifies AI systems used for admissions, evaluating learning outcomes, and monitoring students during tests as high-risk, which triggers conformity assessments and documentation requirements before you can deploy them in European markets.

These aren't future concerns. Procurement teams at large districts and universities are already including AI governance questions in their RFPs. If your architecture can't answer those questions cleanly — where data goes, how models use it, what audit trail exists — you lose deals before the product conversation starts.

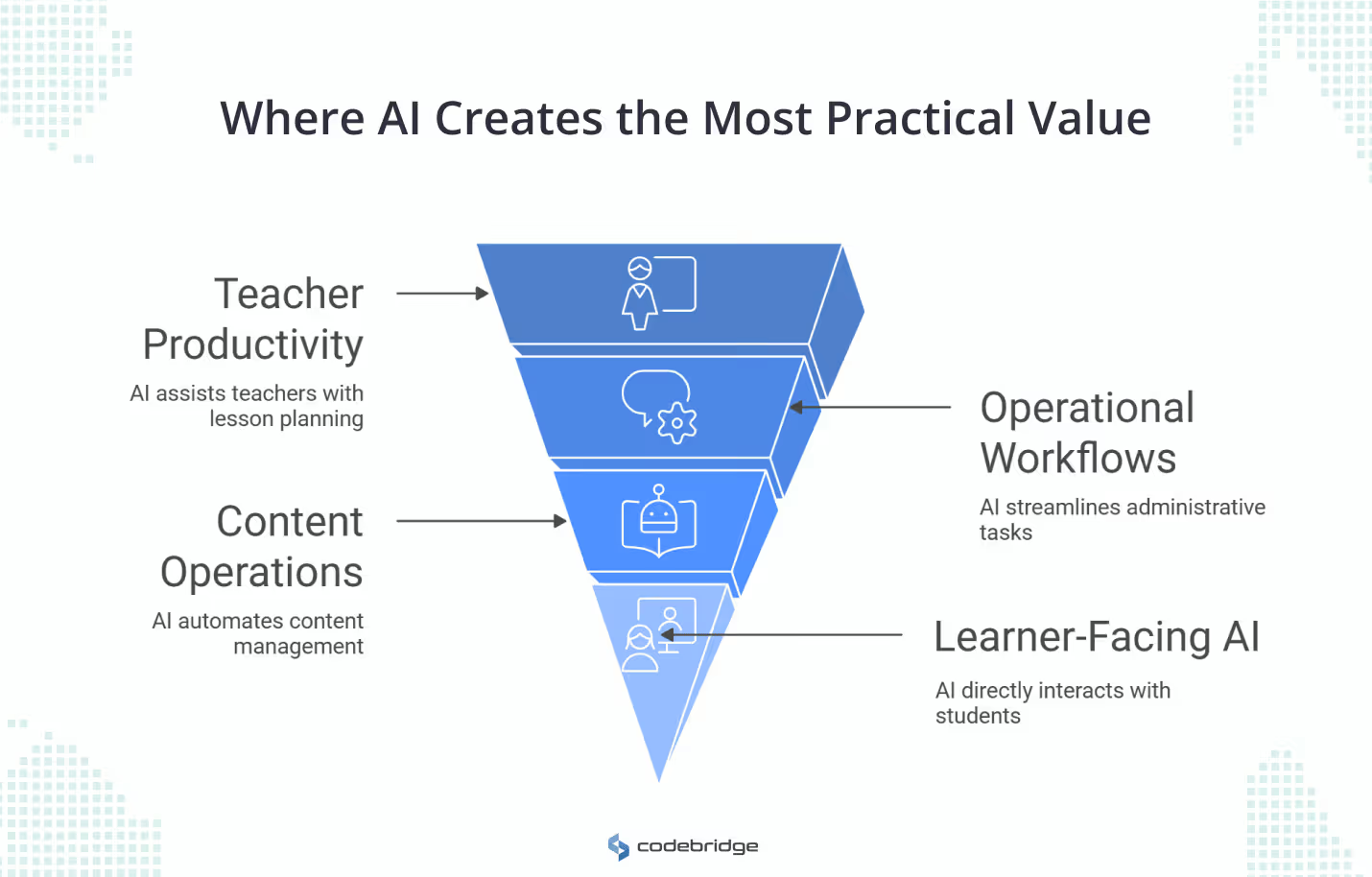

Where AI in EdTech Creates the Most Practical Value

AI use cases in EdTech vary widely in deployment risk and how directly they touch student outcomes. Organizing them by those dimensions helps you decide what to build first and what to defer.

Teacher and Instructor Productivity

This is the lowest-risk starting point. When AI generates lesson plans, rubrics, or quiz variations, a teacher reviews the output before it reaches any student. The failure mode is a bad draft that gets discarded, not a bad learning experience that goes undetected.

Adoption is already moving fast here. RAND data shows teachers' use of AI for lesson planning doubled between 2024 and 2025. Tools like MagicSchool AI report saving teachers 7+ hours per week on rubric creation, quiz generation, and progress summaries.

Content differentiation is where the leverage compounds: a teacher working with a mixed-ability classroom can generate three versions of the same assignment at different reading levels in minutes, instead of writing each one from scratch. That addresses learner variability, a problem that one-size-fits-all materials never solved at the level of the individual teacher.

For product teams, this category is attractive because the value metric is straightforward: time saved per instructor per week, measured against license cost.
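The arithmetic is simple enough to sketch. In the Python below, the hourly cost, license price, and 36-week school year are hypothetical inputs; the hours saved should be a figure you have measured in your own deployment rather than a vendor-reported number.

```python
def annual_value_per_instructor(hours_saved_per_week: float,
                                loaded_hourly_cost: float,
                                working_weeks: int = 36) -> float:
    """Dollar value of instructor time freed up over a school year."""
    return hours_saved_per_week * loaded_hourly_cost * working_weeks


def annual_roi(hours_saved_per_week: float, loaded_hourly_cost: float,
               license_cost_per_seat: float, working_weeks: int = 36) -> float:
    """(value - cost) / cost; 0.0 means the license exactly pays for itself."""
    value = annual_value_per_instructor(hours_saved_per_week, loaded_hourly_cost, working_weeks)
    return (value - license_cost_per_seat) / license_cost_per_seat


def break_even_hours_per_week(loaded_hourly_cost: float, license_cost_per_seat: float,
                              working_weeks: int = 36) -> float:
    """Weekly time savings needed for the license to pay for itself."""
    return license_cost_per_seat / (loaded_hourly_cost * working_weeks)


if __name__ == "__main__":
    # Hypothetical: 2 measured hours/week, $40/hour loaded cost, $300/seat/year license.
    print(f"ROI: {annual_roi(2, 40, 300):.1f}x")
    print(f"Break-even: {break_even_hours_per_week(40, 300) * 60:.1f} minutes/week")
```

With those placeholder inputs, the license pays for itself once it saves an instructor about 12.5 minutes a week; a break-even figure like this can be easier to validate in a pilot than a headline ROI multiple.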

Operational Workflows

Enrollment, onboarding, and student retention workflows produce clear ROI because you can measure them with conversion rates and retention numbers rather than longitudinal learning studies.

A chatbot handling admissions FAQs around the clock reduces "summer melt" (students who accept admission but never enroll) and cuts seasonal staffing costs. Engagement analytics that flag at-risk students based on login frequency, assignment completion, and grade trends give advisors a prioritized intervention list instead of a gut check.

These are well-scoped problems with defined inputs and outputs, which makes them easier to build, test, and maintain than anything touching pedagogy directly.
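For the at-risk flagging described above, a transparent rule-based score is a reasonable first version before any trained model. The Python sketch below is hypothetical throughout: the field names, weights, and thresholds are placeholders to calibrate against your own historical retention data.

```python
from dataclasses import dataclass


@dataclass
class StudentSignals:
    student_id: str
    logins_last_14_days: int
    completion_rate: float   # assignments submitted / assigned, 0.0 to 1.0
    grade_trend: float       # change in average grade, points per week (negative = declining)


# Hypothetical cutoffs; calibrate before relying on them.
LOW_LOGIN_CUTOFF = 3
FLAG_THRESHOLD = 0.5


def risk_score(s: StudentSignals) -> float:
    """Combine three signals into a 0-1 score; higher means more at risk."""
    score = 0.0
    # Infrequent logins contribute a fixed 0.4.
    if s.logins_last_14_days < LOW_LOGIN_CUTOFF:
        score += 0.4
    # Missing work contributes up to 0.4.
    score += 0.4 * (1.0 - min(max(s.completion_rate, 0.0), 1.0))
    # A declining grade trend contributes up to 0.2.
    if s.grade_trend < 0:
        score += min(0.2, 0.05 * -s.grade_trend)
    return round(score, 3)


def intervention_list(students: list[StudentSignals],
                      threshold: float = FLAG_THRESHOLD) -> list[tuple[str, float]]:
    """Students at or above the threshold, highest risk first (ties broken by ID)."""
    scored = [(s.student_id, risk_score(s)) for s in students]
    flagged = [item for item in scored if item[1] >= threshold]
    return sorted(flagged, key=lambda item: (-item[1], item[0]))
```

Because every point in the score traces back to a named signal, an advisor can see why a student is on the list, and you can later swap in a trained model while keeping the same output format.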

Content and Curriculum Operations

For EdTech companies managing large content libraries, AI-driven backend operations affect margin directly. Automated curriculum tagging and metadata generation reduce the content ops headcount required to maintain searchable, standards-aligned libraries.
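Automated tagging is safer when the model can only propose tags and your code decides which ones are valid. A minimal Python sketch, assuming the model is asked to return a JSON array of tag codes; the taxonomy here is a made-up placeholder, not a real standards framework.

```python
import json

# Hypothetical taxonomy; in practice, load the standards framework your customers align to.
ALLOWED_TAGS = {"SCI.PHYS.FORCES", "SCI.PHYS.ENERGY", "ENG.READ.INFERENCE"}


def validate_tags(raw_model_output: str, allowed: set[str]) -> tuple[list[str], list[str]]:
    """Split model-proposed tags into (accepted, rejected).

    Accepted tags are normalized and de-duplicated in order; anything rejected,
    including unparseable output, should go to a human review queue.
    """
    try:
        proposed = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return [], [raw_model_output]
    if not isinstance(proposed, list):
        return [], [raw_model_output]

    accepted: list[str] = []
    rejected: list[str] = []
    for tag in proposed:
        normalized = str(tag).strip().upper()
        if normalized in allowed:
            if normalized not in accepted:
                accepted.append(normalized)
        else:
            rejected.append(normalized)
    return accepted, rejected
```

Keeping validation in code means a model update can change which tags are proposed, but not which tags can enter your library.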

LLM-based localization lets you bring course content into new language markets faster and at lower cost than traditional translation pipelines, though you still need human review for cultural and pedagogical accuracy.

The business case here is speed to market and content team efficiency, both measurable against existing operational baselines.

Learner-Facing AI

This is the highest-value and highest-risk category. AI that interacts with students directly can accelerate learning, but it can also undermine it if the product design is wrong (a dynamic the next section covers in detail).

The strongest evidence comes from intelligent tutoring systems. A 2025 randomized controlled trial published in Scientific Reports tested an AI tutor engineered with specific design constraints: it managed cognitive load by sequencing problems progressively, prompted students to explain their reasoning before moving forward, and used language designed to reinforce a growth mindset. That tutor doubled learning gains compared to in-class active learning. The result wasn't about the AI model; it was about the pedagogical scaffolding built around the model.

Formative feedback is the second high-value application. Giving students immediate, specific feedback on writing or problem-solving has strong evidence behind it as a learning accelerator, but it has always been bottlenecked by instructor time. AI can close that feedback loop within minutes of submission instead of days. The design constraint is the same as with tutoring: the feedback needs to guide the student toward a corrected understanding, not hand them the answer.

The ordering here is deliberate. Teacher tools and operational workflows let you ship, learn, and build governance muscle before you take on the complexity of learner-facing AI, where the cost of getting the design wrong shows up in student outcomes rather than in a dashboard.

What AI in EdTech Looks Like in Production: The TutorAI Build

Codebridge built TutorAI for a European EdTech startup that wanted to replace expensive human tutoring with AI-driven 3D avatar tutors. The early prototype exposed two execution problems that most learner-facing AI projects hit: cost and latency.

SaaS-based streaming avatars cost $32.33 per tutoring hour, which broke the unit economics at scale. Response delays of 3-5 seconds broke the conversational flow that effective tutoring depends on.

The architecture solved both. Codebridge replaced the SaaS avatar dependency with a custom WebGL rendering pipeline, reducing the per-hour cost from $32.33 to $1.15, a 96% reduction. A hybrid model strategy split the workload between GPT-5 mini for lesson generation (optimizing for cost) and OpenAI Realtime-mini for voice interaction (optimizing for latency), achieving speech-start latency under one second. To keep the AI within tutoring boundaries, the team built a retrieval-augmented generation layer that anchored every response to the active subject curriculum for the Science and English tracks.

TutorAI worked because the team treated it as an infrastructure and unit-economics problem from day one, not as a chatbot wrapper. The model selection, rendering pipeline, and pedagogical grounding were architectural decisions made before a single feature shipped, which is the pattern that separates sustainable learner-facing AI from demo-ready prototypes that can't scale.
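The curriculum anchoring described in this build follows a common retrieval-augmented generation pattern: retrieve only from the active subject, and decline when nothing relevant comes back. The Python sketch below is not the TutorAI implementation; it swaps embeddings for a simple bag-of-words cosine similarity so it runs without dependencies, and the `min_score` cutoff and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class CurriculumChunk:
    subject: str   # e.g. "science" or "english"
    text: str


def _bag_of_words(text: str) -> Counter:
    return Counter(re.findall(r"[a-z']+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[CurriculumChunk], active_subject: str,
             k: int = 2, min_score: float = 0.2) -> list[CurriculumChunk]:
    """Top-k chunks from the active subject only, dropping weak matches."""
    q = _bag_of_words(query)
    scored = [(_cosine(q, _bag_of_words(c.text)), c) for c in chunks if c.subject == active_subject]
    strong = [pair for pair in scored if pair[0] >= min_score]
    strong.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in strong[:k]]


def build_grounded_prompt(query: str, chunks: list[CurriculumChunk],
                          active_subject: str) -> Optional[str]:
    """Return a prompt constrained to retrieved excerpts, or None if the query is out of scope."""
    context = retrieve(query, chunks, active_subject)
    if not context:
        return None  # caller deflects instead of letting the model improvise
    excerpts = "\n".join(f"- {c.text}" for c in context)
    return ("Use only the curriculum excerpts below. If they do not cover the question, say so "
            "and guide the student back to the current lesson.\n"
            f"Excerpts:\n{excerpts}\n\nStudent question: {query}")
```

Returning `None` instead of a prompt gives the calling code an explicit point to deflect an out-of-scope question, rather than relying on the model to notice that the excerpts don't cover it.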

Why Product Design Determines AI in Education Outcomes

Two studies published in 2025 frame the design problem with unusual clarity, because they tested nearly opposite approaches to the same question: what happens when students use AI to learn?

The first, published in the Proceedings of the National Academy of Sciences, studied high school math students who had open access to generative AI, with no hint system and no limits on what the AI would produce. During practice, those students performed well. When the AI was removed, they underperformed students who had never used it. The AI had done the cognitive work for them. They produced correct answers without building the underlying skill, and the gap showed up the moment the tool was gone.

The second, a randomized controlled trial published in Scientific Reports, tested an AI tutor built with deliberate constraints. The tutor sequenced problems to manage cognitive load, required students to articulate their reasoning before advancing, and used language patterns designed to reinforce a growth mindset. Students using that tutor doubled their learning gains compared to in-class active learning. Same underlying technology. Opposite outcome. The difference was the product design layer between the model and the student.

For product teams, the takeaway is that the AI model is a component. The learning outcome is a function of what you build around that component: how you constrain its outputs, what you require from the student before the AI responds, how you sequence difficulty, and where you insert instructor oversight.

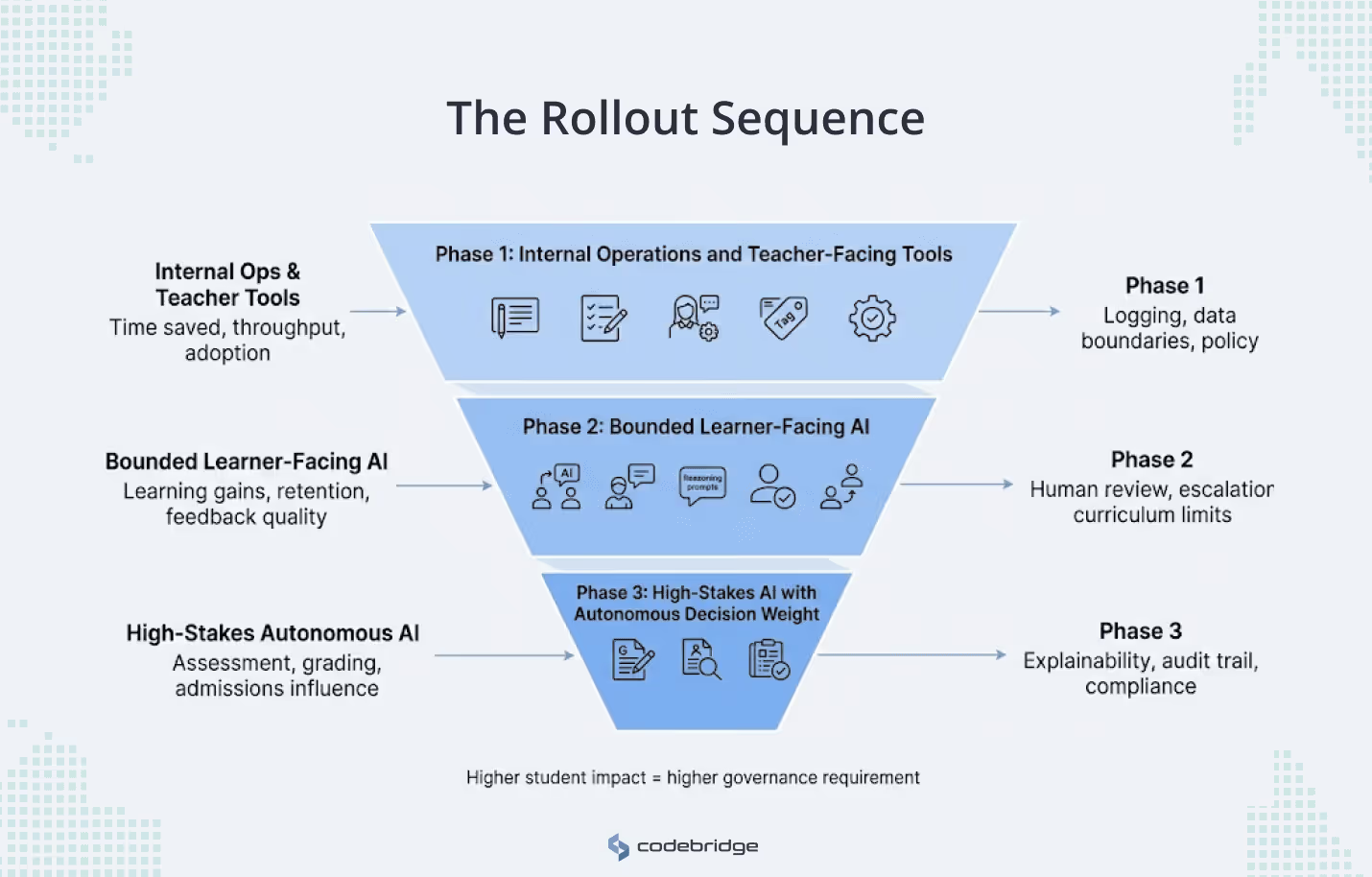

AI in EdTech Rollout Sequence That Manages Risk at Each Stage

The sequence below is organized by how close AI gets to student learning outcomes and how much governance infrastructure each phase requires. Each phase builds the organizational capability needed for the next one.

Phase 1: Internal operations and teacher-facing tools

Start where the failure mode is lowest: workflows where a human reviews every AI output before it reaches a student or affects a decision.

What to build. Lesson plan generation, rubric drafting, quiz and assessment creation, progress summary automation, and internal content tagging. These tools take time-intensive, repetitive work off teachers and content teams.

What to measure. Time saved per user per week. Content production throughput (items tagged, quizzes generated, lesson plans created) compared to your pre-AI baseline. User satisfaction and adoption rate among teachers. These are operational metrics with clean baselines — you'll know within one quarter whether the tool is delivering value.

What governance to put in place. This phase is where you build the governance muscle you'll need later.

- Establish your AI usage policy: which models you use, where data flows, what audit trail exists.

- Implement logging for every AI-generated output, even in internal tools — you need this infrastructure before you expose AI to students.

- Define your data boundaries: student PII should never reach a third-party model without explicit architectural controls (anonymization, data residency, contractual guarantees from your model provider).
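One way to make the logging requirement concrete is a structured, append-only record per AI output. The Python sketch below is an assumption about what an auditable record might contain (feature, model version, actor role, reviewer, content hashes); the field names are hypothetical, and whether raw prompts and outputs are stored alongside the hashes is a decision for your data policy.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone
from typing import Optional


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(*, feature: str, model: str, prompt: str, output: str,
                 actor_role: str, reviewed_by: Optional[str] = None) -> dict:
    """Build one audit entry for an AI-generated output."""
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "feature": feature,          # e.g. "rubric_draft"
        "model": model,              # exact model and version string used for this call
        "actor_role": actor_role,    # who triggered it: "teacher", "content_ops", ...
        "reviewed_by": reviewed_by,  # filled in when a human approves or edits the output
        # Hashes let an auditor verify that separately stored content hasn't changed.
        "prompt_sha256": _sha256(prompt),
        "output_sha256": _sha256(output),
    }


def to_jsonl_line(record: dict) -> str:
    """Serialize one record as a JSON Lines entry."""
    return json.dumps(record, sort_keys=True) + "\n"


def append_to_log(path: str, record: dict) -> None:
    # Opened in append mode: this function never rewrites existing entries.
    with open(path, "a", encoding="utf-8") as log:
        log.write(to_jsonl_line(record))
```

Capturing the record at generation time, rather than reconstructing it later, is what lets the same log support audits once AI output reaches students.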

If you're serving U.S. education markets, your FERPA compliance posture needs to be validated here, not retrofitted in Phase 2.

What can go wrong. Teachers over-rely on generated content without reviewing it, which degrades curriculum quality gradually and invisibly. Content teams treat AI output as final rather than as a draft, and errors propagate into your content library. Your logging and audit infrastructure is built as an afterthought and can't scale when Phase 2 requires it.

When to move on.

- You've deployed at least two teacher-facing or internal tools in production.

- Your logging captures every AI interaction with enough metadata to audit.

- Your data governance policy is documented, reviewed by legal, and enforceable at the architecture level.

- Teachers or content staff are actively using the tools, and you have at least one quarter of usage data showing measurable efficiency gains.

Phase 2: Bounded learner-facing AI

This phase puts AI in front of students, which changes the risk profile from "wasted staff time" to "degraded learning outcomes." Every feature in this phase needs pedagogical design constraints built into the product architecture, not bolted on as policy.

What to build.

- Guided tutoring with structured problem sequences, formative feedback on writing and problem-solving, and scaffolded practice generation.

- The key design constraints from the evidence covered earlier in this article apply directly here.

Your tutoring features should sequence problems to manage cognitive load, require students to explain their reasoning before the AI advances them, and limit the AI's output scope to hints and guiding questions rather than complete answers.
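Those three constraints can be enforced in application code rather than left to the prompt alone. The Python sketch below is a hypothetical illustration, not a validated tutoring design: it orders problems by a difficulty field, withholds feedback until the student explains their reasoning, and replaces any model draft that contains the reference answer with a scripted hint. The substring check for answer leakage is deliberately crude and will flag some harmless replies; a false positive only costs the student a hint.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    prompt: str
    answer: str        # reference answer; never sent to the student verbatim
    difficulty: int    # 1 = easiest
    hints: list[str]   # guiding questions, least to most specific (at least one)


@dataclass
class TutorSession:
    problems: list[Problem]
    index: int = 0
    hints_used: int = 0

    def __post_init__(self) -> None:
        # Sequence problems from easiest to hardest to manage cognitive load.
        self.problems = sorted(self.problems, key=lambda p: p.difficulty)

    @property
    def finished(self) -> bool:
        return self.index >= len(self.problems)

    @property
    def current(self) -> Problem:
        return self.problems[self.index]


MIN_EXPLANATION_WORDS = 8  # hypothetical threshold; tune with instructors


def _normalize(text: str) -> str:
    return " ".join(text.lower().split())


def leaks_answer(draft: str, answer: str) -> bool:
    """Crude guard: true if the draft contains the reference answer anywhere."""
    return _normalize(answer) in _normalize(draft)


def tutor_turn(session: TutorSession, student_answer: str,
               explanation: str, model_draft: str) -> str:
    """Decide what the student sees next, given the model's draft reply."""
    if session.finished:
        return "This problem set is complete."
    # 1. Require the student's reasoning before any feedback.
    if len(explanation.split()) < MIN_EXPLANATION_WORDS:
        return "Before I check your answer, explain how you got it."
    # 2. Advance only on a correct answer with an explanation on record.
    if _normalize(student_answer) == _normalize(session.current.answer):
        session.index += 1
        session.hints_used = 0
        if session.finished:
            return "Correct. You've finished this set."
        return f"Correct. Next: {session.current.prompt}"
    # 3. Never pass through a draft that gives the answer away; use a scripted hint instead.
    if leaks_answer(model_draft, session.current.answer):
        hints = session.current.hints
        hint = hints[min(session.hints_used, len(hints) - 1)]
        session.hints_used += 1
        return hint
    return model_draft
```

Keeping these checks outside the prompt means they still hold if a model update changes how the prompt is interpreted.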

What to measure. This is where engagement metrics stop being sufficient. You need outcome metrics: pre/post assessment gains for students using AI features versus a control group, skill retention measured after the AI is removed, and feedback quality ratings from instructors reviewing AI-generated feedback. Time-to-feedback (how fast students receive a response after submission) is a valid operational metric, but only alongside the outcome data. If usage goes up and assessment performance doesn't, the feature is a crutch.
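The core comparison is the gain in the AI group minus the gain in the control group, computed once on assessments taken with the tool and again on a later assessment taken without it. A minimal Python sketch with made-up scores; the `min_effect` cutoff is a hypothetical placeholder, and a real evaluation would also need proper randomization and a significance test, which this sketch omits.

```python
from statistics import mean


def mean_gain(pre: list[float], post: list[float]) -> float:
    """Average post-minus-pre change for the same students."""
    if not pre or len(pre) != len(post):
        raise ValueError("pre and post must be non-empty and paired by student")
    return mean(after - before for before, after in zip(pre, post))


def gain_vs_control(treat_pre: list[float], treat_post: list[float],
                    ctrl_pre: list[float], ctrl_post: list[float]) -> float:
    """Extra gain for students using the AI feature, in assessment points."""
    return mean_gain(treat_pre, treat_post) - mean_gain(ctrl_pre, ctrl_post)


def looks_like_crutch(usage_growth: float, gain_with_tool: float,
                      gain_after_removal: float, min_effect: float = 2.0) -> bool:
    """Usage is rising, but the learning advantage is small during use or after removal."""
    return usage_growth > 0 and min(gain_with_tool, gain_after_removal) < min_effect
```

Checking the after-removal gain separately is what catches the dependency pattern: a feature can look strong while students have it and still fail this test.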

What governance to put in place. Human-in-the-loop oversight for this phase means defining the loop concretely.

- Instructors can review and override any AI-generated feedback before or after delivery.

- AI-generated content in high-stakes practice (exam prep, graded assignments) goes through a sampling-based QA process where a percentage of outputs are reviewed by a qualified educator on a recurring cycle.

- You need an escalation path for low-confidence outputs: if the model's uncertainty is high or the student's input falls outside the expected scope, the system routes to a human rather than generating a response.

- Your prompt architecture should enforce content scope limits: the AI operates within a defined curriculum boundary and refuses or deflects out-of-scope queries rather than improvising.
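Taken together, these rules amount to a routing decision for every output. The Python sketch below shows one way to encode them; the confidence score, scope flag, threshold, and sample rate are hypothetical inputs, and how you estimate confidence (a classifier, a retrieval score, or something else) is a separate design choice. Out-of-scope input is deflected here; routing it to a human instead is an equally valid reading of the policy.

```python
import hashlib
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    DELIVER = "deliver"
    DELIVER_AND_QA = "deliver_and_queue_for_educator_review"
    ESCALATE = "hold_for_human"
    DEFLECT = "deflect_out_of_scope"


@dataclass
class DraftOutput:
    output_id: str
    confidence: float   # 0.0 to 1.0, from whatever estimator you trust
    in_scope: bool      # result of your curriculum-boundary check
    high_stakes: bool   # exam prep or graded work


CONFIDENCE_FLOOR = 0.7   # hypothetical
QA_SAMPLE_RATE = 0.10    # hypothetical: review roughly 10% of high-stakes outputs


def sampled_for_qa(output_id: str, rate: float) -> bool:
    """Deterministic sampling: the same ID always gets the same decision."""
    bucket = int(hashlib.sha256(output_id.encode("utf-8")).hexdigest()[:8], 16) / 2**32
    return bucket < rate


def route(draft: DraftOutput) -> Route:
    if not draft.in_scope:
        return Route.DEFLECT
    if draft.confidence < CONFIDENCE_FLOOR:
        return Route.ESCALATE
    if draft.high_stakes and sampled_for_qa(draft.output_id, QA_SAMPLE_RATE):
        return Route.DELIVER_AND_QA
    return Route.DELIVER
```

Hash-based sampling keeps QA selection reproducible: an auditor can recompute which outputs should have been reviewed.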

What can go wrong. Students become dependent on AI scaffolding and underperform when it's removed, which is the exact pattern the PNAS study documented. Your pedagogical constraints are too loose, and the AI drifts toward giving answers instead of guiding reasoning (this happens gradually as prompt behavior shifts across model versions).

When to move on. You have at least two quarters of outcome data showing learning gains (not just engagement) for students using AI features. Your QA process has a documented error rate, and that rate is stable or declining. Your escalation and override systems are functioning in production and being used by instructors. You've survived at least one model version update without breaking your pedagogical constraints. This is a critical operational test, because model behavior changes are the most common source of silent regression in AI-driven products.
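A practical way to run that model-update test is a fixed golden set of student inputs, replayed against the current and the candidate model version before you switch. The Python sketch below is hypothetical: `generate` stands in for whatever function calls your pinned model, and the only check shown is whether replies contain text the tutor must never say, such as the final answer. You would likely add rubric-based grading of the replies as well.

```python
from typing import Callable, TypedDict


class GoldenCase(TypedDict):
    input: str             # a representative student message
    forbidden: list[str]   # text the tutor must never produce for this input


def run_golden_set(generate: Callable[[str], str], cases: list[GoldenCase]) -> dict:
    """Replay every case and record replies that contain forbidden text."""
    if not cases:
        raise ValueError("golden set is empty")
    failures = []
    for case in cases:
        reply = generate(case["input"]).lower()
        leaked = [f for f in case["forbidden"] if f.lower() in reply]
        if leaked:
            failures.append({"input": case["input"], "leaked": leaked})
    return {"total": len(cases), "failures": failures,
            "failure_rate": len(failures) / len(cases)}


def safe_to_promote(current: dict, candidate: dict, tolerance: float = 0.0) -> bool:
    """Block the upgrade if the candidate fails more often than the version in production."""
    return candidate["failure_rate"] <= current["failure_rate"] + tolerance
```

Keeping the golden set under version control next to your prompts lets you show, rather than assert, that a model update preserved your constraints.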

Phase 3: High-stakes AI with autonomous decision weight

This phase involves AI making or heavily influencing decisions that affect student outcomes, academic records, or institutional access: automated grading without instructor review, admissions scoring, predictive retention interventions, and academic integrity adjudication.

When to consider this phase. Most EdTech companies should not be here yet. This phase requires mature governance infrastructure, validated outcome data from Phase 2, and regulatory readiness for the markets you serve.

If you're selling into the EU, the AI Act classifies AI used for admissions, evaluating learning outcomes, and monitoring students during tests as high-risk, and requires conformity assessments, transparency documentation, and human oversight mechanisms. In the U.S., automated admissions or grading decisions can face scrutiny under existing anti-discrimination and due process frameworks. Your legal and compliance teams need to review every autonomous AI feature against applicable regulations before it ships.

What governance to put in place. Explainability is the hard requirement. For any AI-driven decision that affects a student's grade, progression, or access, you need to be able to produce an audit trail that explains why the system reached that conclusion in terms a non-technical reviewer can understand.
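One way to make that audit trail readable is to score against explicit rubric criteria, record the evidence behind each score, and generate the explanation from that record rather than asking the model to justify itself afterward. The Python sketch below is illustrative; the field names and rubric structure are assumptions, and a record like this supports, but does not by itself satisfy, any regulatory requirement.

```python
from dataclasses import dataclass


@dataclass
class CriterionResult:
    criterion: str
    points_awarded: float
    points_possible: float
    evidence: str   # the passage or step in the student's work the score relies on

    def __post_init__(self) -> None:
        if not 0 <= self.points_awarded <= self.points_possible:
            raise ValueError(f"{self.criterion}: points out of range")


@dataclass
class DecisionRecord:
    student_ref: str      # pseudonymous reference, not a name
    model_version: str    # pinned version that produced the scores
    criteria: list[CriterionResult]

    @property
    def score(self) -> float:
        return sum(c.points_awarded for c in self.criteria)

    @property
    def possible(self) -> float:
        return sum(c.points_possible for c in self.criteria)

    def explanation(self) -> str:
        """Plain-language summary a non-technical reviewer can check line by line."""
        lines = [f"Score {self.score:g}/{self.possible:g} (model {self.model_version})."]
        for c in self.criteria:
            lines.append(f"- {c.criterion}: {c.points_awarded:g}/{c.points_possible:g}, "
                         f'based on: "{c.evidence}"')
        return "\n".join(lines)
```

Because the explanation is built from stored criterion scores, an appeal can be answered by pointing to specific evidence, and a reviewer can verify each line independently.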

What can go wrong.

- Bias in model outputs creates equity violations that generate legal and reputational exposure.

- Grading inconsistency erodes trust with institutions, students, and parents.

- Model updates change decision boundaries in ways your monitoring doesn't catch, and you discover the drift only when a customer reports it.

- Opacity in decision logic makes it impossible to respond to appeals or regulatory inquiries.

These failure modes are why this phase requires the most governance infrastructure and the longest validation period before deployment.

The honest assessment. Phase 3 is where the highest long-term value sits: automated, defensible assessment at scale would transform EdTech unit economics. But it's also where the highest reputational and regulatory risk concentrates.

Conclusion

AI changes what EdTech products can do. It doesn't change what makes them work. The companies that get durable value from AI will be the ones that know which bottleneck each feature solves, what governance it requires, and when to keep a human in the loop.

Start with teacher tools and operations. Build your logging, data boundaries, and QA infrastructure on those low-risk workflows. Move to learner-facing AI only when your architecture can enforce the pedagogical constraints that separate learning gains from dependency.

Treat every AI feature as a product architecture decision, because that's what your institutional buyers, your regulators, and your outcome data will hold you to.
