You have a budget for AI agents, a board expecting results within two quarters, and an engineering team that will maintain whatever you ship. The question is where to start: with a general-purpose agent that works across your whole organization, or with a narrow agent built for one workflow?

Most founders frame this as a trade-off between breadth and depth. In practice, the decision turns on something harder to see during a demo: governance cost. How much organizational effort will you spend securing, monitoring, permissioning, and explaining this system before it pays for itself?

This article breaks down the operational and governance differences between horizontal and vertical agents so you can match the right model to your team's capacity, your data maturity, and your timeline for results.

Horizontal AI Agents Solve the Wrong Problem First

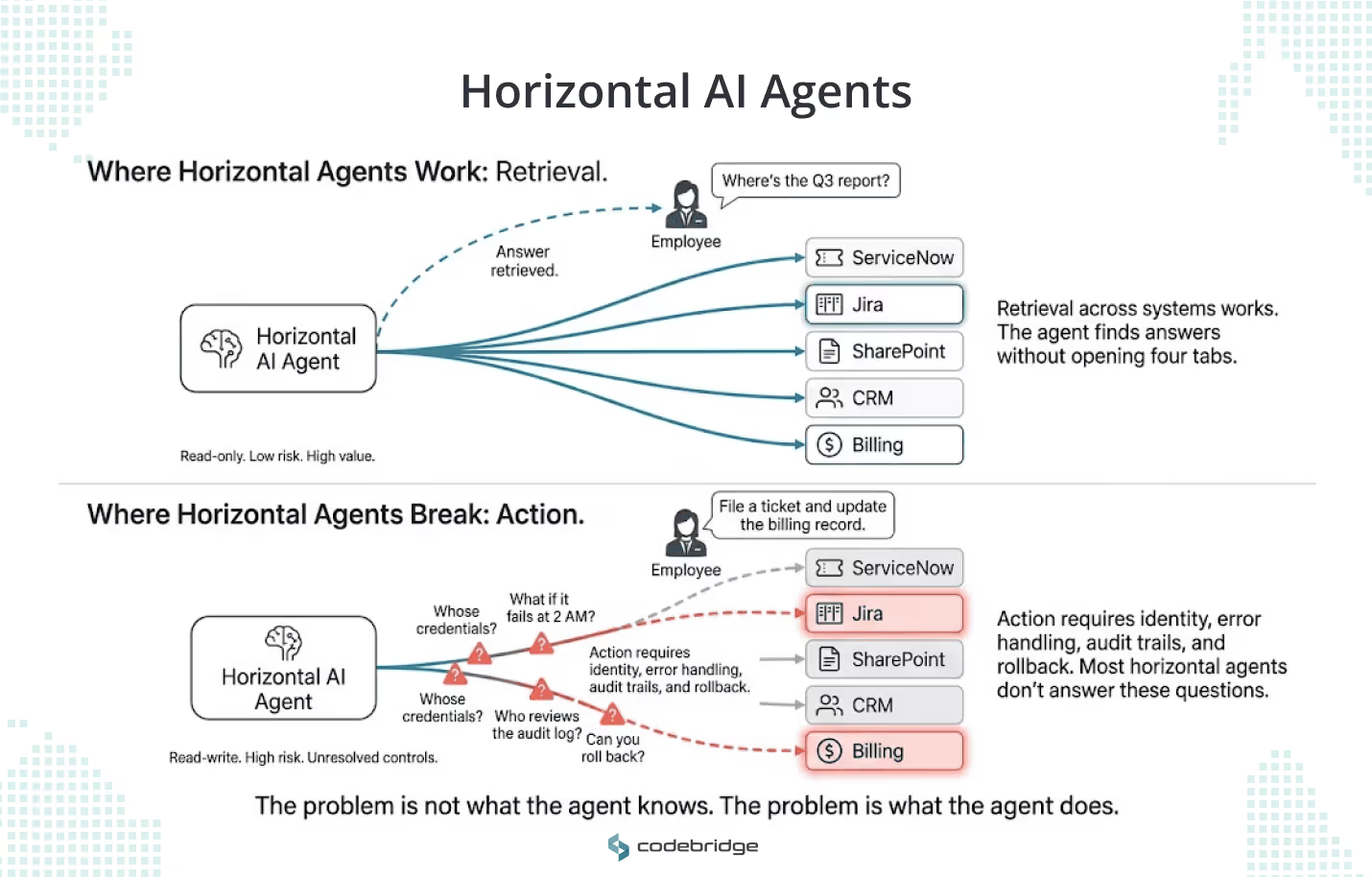

Horizontal agents are general-purpose systems designed to operate across multiple functions, teams, and industries rather than being tailored to a single use case. These systems typically rely on large foundation models trained on diverse datasets, which lets them adapt to scenarios ranging from answering questions to analyzing data across an entire organization.

Companies often prioritize horizontal models because of their broad internal applicability and the narrative of a single platform that can be reused across departments.

Google built Gemini Enterprise on this model: permissions-aware search across ServiceNow, Jira, and SharePoint, with a multimodal interface layered on top. For a 200-person company running six or seven SaaS tools, that pitch sounds like a direct fix for the daily friction of context-switching.

And for retrieval, it works. Your team asks a question, the agent finds the answer across systems, and nobody has to open four tabs. The problem starts when you ask the agent to do something: file a ticket, update a record, or send a notification. The moment an agent takes action inside a production system, you need to answer a different set of questions. Whose credentials does it use? What happens when it makes a mistake in your billing system at 2 AM? Who reviews the audit log?

If your product handles patient data, financial records, or regulated workflows, those questions land on your desk before the agent writes its first Jira ticket.

Why Horizontal AI Agents Become Harder to Govern at Scale

Every system your agent touches adds a layer of access control, data sensitivity review, and failure-mode analysis. Microsoft's enterprise agent framework treats each agent as a digital worker: assigned identity, scoped permissions, full audit trail. That guidance exists because Microsoft watched early adopters deploy broad agents without those controls and spend months cleaning up the results.

For a Series A SaaS company with 40 engineers, this overhead compounds fast. Your team needs to map permissions across every connected system. You need escalation paths for each integration. You need someone to own the monitoring. And you need all of that before the agent handles its first production request.

The cost shows up in three places that founders tend to underestimate.

- Data quality: a horizontal agent that searches across your knowledge base, Slack history, and CRM will surface whatever inconsistencies exist in those systems. If your API documentation contradicts your onboarding guides, the agent will confidently serve both answers to different people.

- ROI attribution: when an agent helps everyone a little, you cannot tie that improvement to a revenue line or a cost reduction your CFO will sign off on.

- Team load: someone on your engineering team will own each integration, and that someone has other priorities.

OWASP and NIST both emphasize least-privilege scoping as a baseline for trustworthy AI systems. In practice, least-privilege design for a horizontal agent means writing and maintaining permission logic for every system it touches. For a scale-up with limited DevOps capacity, that is a real constraint on what you can ship next quarter.
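To make the least-privilege idea concrete, here is a minimal sketch of a default-deny permission check for an agent treated as a "digital worker" with its own identity, scoped permissions, and audit trail. It is illustrative only: `AgentIdentity`, `AuditLog`, `authorize`, and the system and action names are hypothetical and do not come from any vendor framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """A hypothetical 'digital worker' identity with explicitly granted scopes."""
    agent_id: str
    owner: str  # the human accountable for this agent
    # Allowed (system, action) pairs. Anything not listed is denied.
    scopes: frozenset = frozenset()


@dataclass
class AuditLog:
    """In-memory audit trail; a real deployment would use durable storage."""
    entries: list = field(default_factory=list)

    def record(self, agent_id, system, action, allowed):
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "system": system,
            "action": action,
            "allowed": allowed,
        })


def authorize(agent, system, action, log):
    """Default-deny check: allow only explicitly granted (system, action) pairs."""
    allowed = (system, action) in agent.scopes
    log.record(agent.agent_id, system, action, allowed)
    return allowed


# Hypothetical agent scoped to a single ticketing workflow.
claims_agent = AgentIdentity(
    agent_id="claims-triage-01",
    owner="claims-ops-lead",
    scopes=frozenset({("ticketing", "read"), ("ticketing", "create")}),
)
log = AuditLog()

print(authorize(claims_agent, "ticketing", "create", log))  # granted scope
print(authorize(claims_agent, "billing", "update", log))    # never granted, so denied
print(len(log.entries))  # both attempts are recorded, including the denial
```

Each new system a horizontal agent connects to adds more `(system, action)` pairs that someone has to review and keep current, which is where the maintenance cost described above comes from.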

Why Vertical AI Agents Often Deliver Enterprise ROI Faster

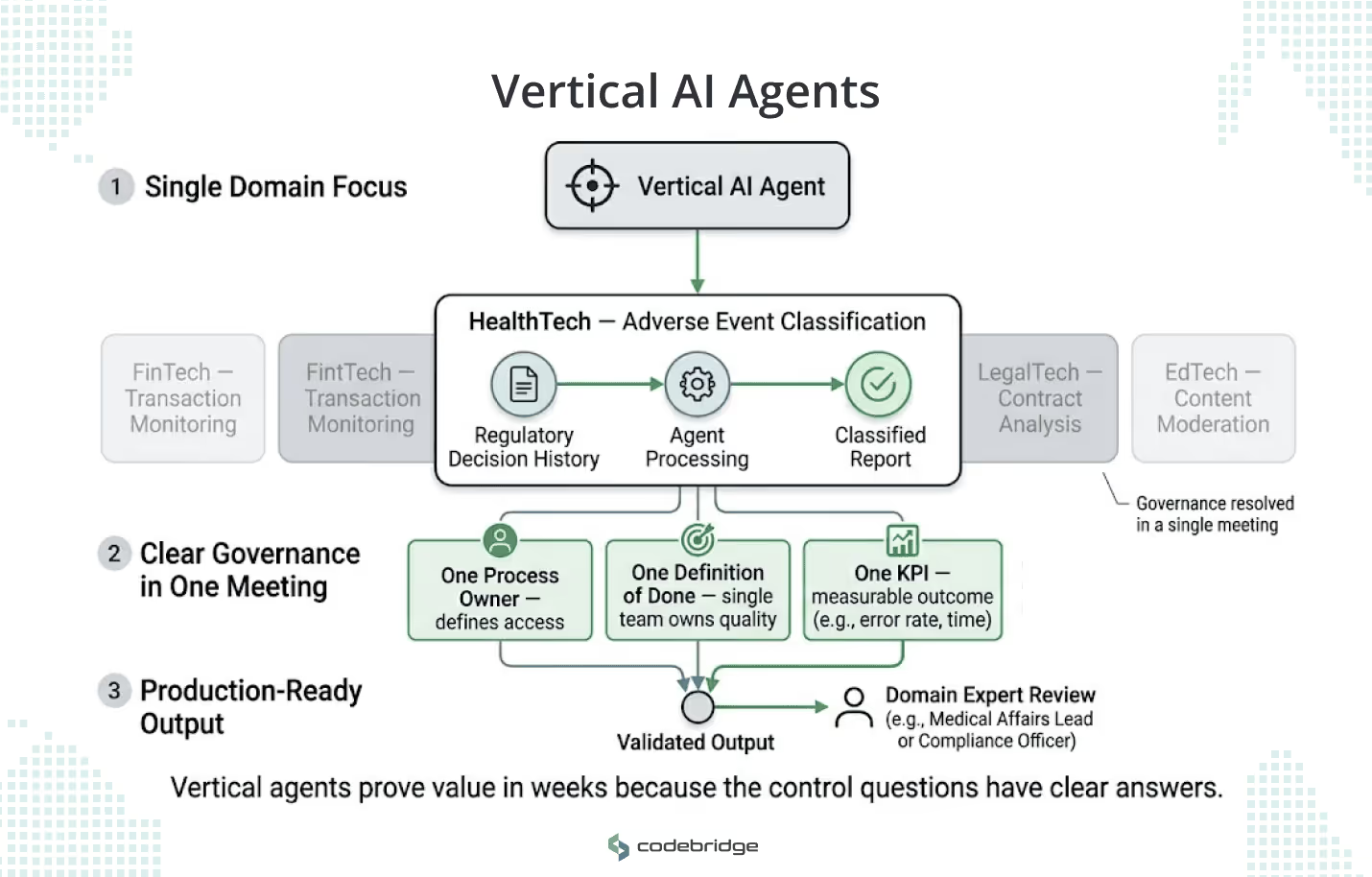

Vertical AI agents are designed to operate deeply within a specific industry, function, or use case. By focusing on a single domain, such as supply chain optimization, claims handling, or financial risk analysis, these agents can deliver more accurate insights and predictions within that domain than horizontal alternatives.

These agents reach production faster because you can answer the governance questions in a single meeting. One process owner decides what the agent can access. One team defines what "done" looks like. One KPI (claim processing time, error rate, review throughput) tells you whether it worked.

AWS found that the most successful early agent deployments "collapse handoffs" in a specific workflow, removing the hours or days of back-and-forth between a request and a resolution. That pattern maps to how most growing tech companies actually operate: you have a bottleneck in a specific process, and a person or small team owns that process end-to-end.

Consider what this looks like inside a HealthTech company processing clinical trial data. A vertical agent built for adverse event report classification can train on your regulatory team's decision history, operate within your existing compliance framework, and produce outputs your medical affairs lead can validate against a known standard. Compare that to deploying a general-purpose agent across your entire R&D operation and asking five different team leads to each define what "correct" means for their workflows.

The same logic holds for FinTech transaction monitoring, LegalTech contract analysis, and EdTech content moderation. Wherever you have a repeatable process with clear inputs, defined exceptions, and a measurable outcome, a vertical agent can prove value in weeks. A horizontal agent covering the same ground will still be in its permissioning phase.

The choice between horizontal and vertical AI agents is an architectural and budget-allocation decision, not merely a tech category selection. While horizontal agents appear attractive for their broad utility, enterprise value is realized only when an agent improves a workflow with acceptable risk, clear ownership, and measurable output.

The following table serves as a strategic decision framework for CEOs and CTOs to evaluate these models based on governance-adjusted ROI and operational reality.

Strategic Comparison: Vertical AI Agents vs. Horizontal AI Agents

Execution Reality: Why Vertical Agents Usually Win First

Founders and CTOs often find that horizontal agents win executive demos because they appear broadly useful, but vertical agents win buying decisions because they are easier to secure and measure.

- The Governance Tax: As soon as an agent moves from simple information retrieval to taking action, horizontal models face massive governance requirements. Organizations must define whose permissions the agent uses across dozens of systems and how to audit failures that span multiple departments.

- Workflow Clarity: AWS and Anthropic emphasize that value appears only when work is defined in "painful detail." Horizontal agents struggle with this because their "done state" is often vague. Vertical agents, by contrast, address one specific piece of work with a clear start, end, and purpose.

- Measurability: It is difficult to prove a business case for a system that helps "everyone a little." A vertical agent designed for invoice exception processing or contract review has a direct link to labor cost reduction and queue wait times.
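The measurability point can be sketched as a simple before-and-after comparison on one KPI. The example below is a rough illustration with invented numbers: `percent_change` is a hypothetical helper, and the sample resolution times are not real data. It compares medians so that a few unusually slow cases do not dominate the result.

```python
from statistics import median


def percent_change(baseline, pilot):
    """Relative change of the pilot median against the baseline median, in percent."""
    base = median(baseline)
    if base == 0:
        raise ValueError("baseline median is zero; percent change is undefined")
    return (median(pilot) - base) / base * 100


# Invented sample data: hours to resolve an invoice exception.
baseline_hours = [30, 26, 41, 35, 28, 33]  # before the agent
pilot_hours = [19, 22, 17, 25, 21, 20]     # during the pilot

change = percent_change(baseline_hours, pilot_hours)
print(f"Median resolution time changed by {change:.1f}%")
```

A vertical agent makes this comparison possible because one workflow produces one series of numbers with one owner. A horizontal agent that helps many teams slightly gives you no comparable baseline.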

Strategic Recommendation

For technology-driven companies, the most resilient path is to start vertical and expand horizontally later.

- When to go Vertical: Use when precision matters, such as in highly regulated environments (finance, healthcare) or for workflows with high-stakes decision-making where you need tight control over "least-privilege" permissions.

- When to go Horizontal: Use when the primary need is permissions-aware retrieval or knowledge discovery, the organization already has clean data and strong identity controls, and the goal is broad productivity uplift rather than autonomous action.

Vertical agents define the first successful operating model for real enterprise AI, while horizontal agents eventually define the long-term interface for how employees interact with those systems.

Enterprise AI Strategy: Start with Vertical Agents, Expand Horizontally Later

If you are a founder or CTO planning your first agent deployment, here is a sequence that reduces risk at each step.

Phase 1: Pick one workflow. Find a process with a clear start, clear end, and defined tools. Write a job description for the agent as if you were hiring a contractor for this role. If you cannot write that job description in a single page, the scope is too broad.

Phase 2: Design governance before code. Define permissions, escalation triggers, and observability requirements before your team writes the first line of agent logic. This step feels slow. It saves you from rebuilding the system when your security review catches what you skipped.

Phase 3: Prove the outcome. Run the agent with one owner and a specific KPI. Measure for four to six weeks. If the numbers move, you have a business case. If they do not, you have a cheap lesson.

Phase 4: Replicate the pattern. Take the governance templates, integration patterns, and monitoring setup from Phase 3 and apply them to an adjacent workflow. Each deployment should cost less engineering time than the last.

Phase 5: Evaluate a horizontal layer. Once you have three or four vertical agents running in production with stable governance, assess whether a cross-system retrieval layer would reduce friction for your broader team. At this point, you have the operational foundation to support it.
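As one way to picture Phases 1 and 2, the sketch below writes the agent's "job description" and escalation triggers down as data before any agent logic exists. Everything in it is an assumption made for illustration: `GovernanceSpec`, `decide`, the tool names, and the thresholds are hypothetical, and a real spec would also cover observability requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernanceSpec:
    """Hypothetical one-workflow governance spec, agreed before agent code exists."""
    workflow: str
    owner: str
    kpi: str
    allowed_tools: frozenset
    max_amount: float      # escalate proposals above this value
    min_confidence: float  # escalate proposals below this confidence


def decide(spec, tool, amount, confidence):
    """Return 'act', 'escalate', or 'refuse' for a proposed agent action."""
    if tool not in spec.allowed_tools:
        return "refuse"    # outside the agent's job description
    if amount > spec.max_amount or confidence < spec.min_confidence:
        return "escalate"  # hand off to the workflow owner
    return "act"


spec = GovernanceSpec(
    workflow="invoice-exception-processing",
    owner="ap-team-lead",
    kpi="median hours to resolve an exception",
    allowed_tools=frozenset({"erp.read_invoice", "erp.flag_exception"}),
    max_amount=5_000.0,
    min_confidence=0.85,
)

print(decide(spec, "erp.flag_exception", 1_200.0, 0.93))  # within limits
print(decide(spec, "erp.flag_exception", 9_800.0, 0.97))  # amount over threshold
print(decide(spec, "erp.issue_refund", 50.0, 0.99))       # tool not in scope
```

Because the spec is plain data, it can be reused as the template that Phase 4 carries to the next workflow, with only the tools, thresholds, owner, and KPI changed.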

Conclusion

Horizontal agents may become the default interface between your team and your digital systems over the next few years. The companies building toward that future on a stable foundation will get there. The companies that skip the foundation work and deploy broad agents into ungoverned environments will spend their engineering budget on incident response and permission remediation instead of product development.

Your first agent deployment sets the pattern for every deployment that follows. Start with a workflow your team understands, build the governance to match, prove the value, and expand from that base. The technical capability of the model matters less than whether your organization can operate it with confidence at the current stage of your data maturity and team capacity.

For engineering leaders at SaaS, HealthTech, FinTech, and LegalTech companies building products used by thousands of users, the calculus is straightforward. You already manage complex integrations, compliance requirements, and architectural trade-offs. Apply that same discipline to your agent strategy. The rigor you bring to system design is the same rigor that makes an agent deployment succeed or fail in production.
