Agentic AI does not remove data engineering complexity. It surfaces weak data foundations faster, and with fewer guardrails than a human operator would.

For founders and CTOs responsible for production environments, the gap between a promising agent demo and a reliable production deployment comes down to one question: does the host system provide trusted metadata, fresh operational context, reliable execution paths, and enforceable governance? If any of those are missing, the agent inherits the gaps and acts on them at machine speed.

An AI agent’s value is directly constrained by the environment it operates in. Databricks has built its agentic strategy around unified semantics, lineage, and open governance. Snowflake frames its “Agentric Enterprise” as a coordination layer that requires trusted enterprise data and a robust control plane. Both treat the data layer, not the model, as the prerequisite.

This article examines agents that monitor pipelines, reason over quality signals, and trigger actions within governed workflows. It is wrtitten for technical leadership evaluating where and how to introduce agentic capabilities into existing data infrastructure.

What Agentic AI in Data Engineering Looks Like

Technical leaders need a clear boundary between agentic AI, standard workflow automation, and co-pilot assistants. A co-pilot helps an engineer write code or fix bugs under direct human oversight. An agentic system observes the environment, reasons over metadata, and decides on actions within defined boundaries, without waiting for a human prompt at each step.

In data engineering, that distinction matters because the agent’s scope of action determines the blast radius when something goes wrong. An agentic system built on an LLM operates as a cognitive controller for pipeline tasks: monitoring, root cause analysis, schema reconciliation, and remediation. Confluent’s “streaming agents” illustrate this pattern, using built-in observability and safe recovery paths to bridge data processing and real-time reasoning.

Three practical applications clarify what this looks like in production:

- Requirements validation. The agent cross-references new data requests against existing infrastructure and compliance policies, identifying feasibility issues before development starts. This replaces a manual review cycle that typically takes days.

- Design optimization. The agent analyzes legacy schemas and metadata to infer transformation logic and simulate resource allocation under peak load. Engineers review the output rather than building the analysis from scratch.

- Automated remediation. The agent monitors service logs, performs root cause analysis on failures, and executes bounded self-healing actions: scaling infrastructure, retrying jobs with adjusted parameters, or routing incidents to the right team. The key constraint is that each action has an explicit rollback path.

Each of these works only when the agent has access to shared, machine-readable context. Without that, you get a text generator with pipeline permissions.

Why Weak Data Foundations Break Agentic AI in Production

A weak data environment does not produce incorrect agent outputs. It produces confident agent actions based on wrong state. Your team then spends more time diagnosing the agent’s decisions than they would have spent doing the work manually.

Five failure modes show up consistently in production:

Stale context. An agent tasked with detecting drift or routing incidents gets fed batch context that is hours old. The agent’s reasoning logic may be correct, but it acts on a system state that no longer exists. In an incident response workflow, that delay turns a containable issue into a cascading one.

Broken or missing lineage. Without a living record of how data moves and transforms, the agent cannot assess the blast radius of a schema change. It also cannot trace a metric shift back to its root cause. Your team ends up debugging the agent’s action instead of the original data issue.

Inconsistent semantics. When different teams define “revenue” or “active users” differently across fragmented tools, the agent reasons over conflicting definitions. These produce silent correctness bugs: reports that look right, pass automated checks, and mislead decision-makers.

Pipeline unreliability. Upstream lag and unstable jobs feed bad signals to the agent. The agent treats those signals as truth and may trigger unnecessary remediation loops or incorrect escalations. Each false action adds noise to your on-call rotation and erodes trust in the system.

Weak runtime governance. Many organizations have policy documents but lack runtime controls. Nothing prevents the agent from reading sensitive columns, triggering expensive queries, or bypassing security boundaries. The policy exists in a wiki. The agent operates in a runtime.

Each of these failures shares a root cause: the agent operates on whatever state the environment provides, at whatever speed the system allows. If that state is stale, fragmented, or ungoverned, the agent scales those problems across every workflow it touches.

Five Data Engineering Foundations for Safe Agentic AI

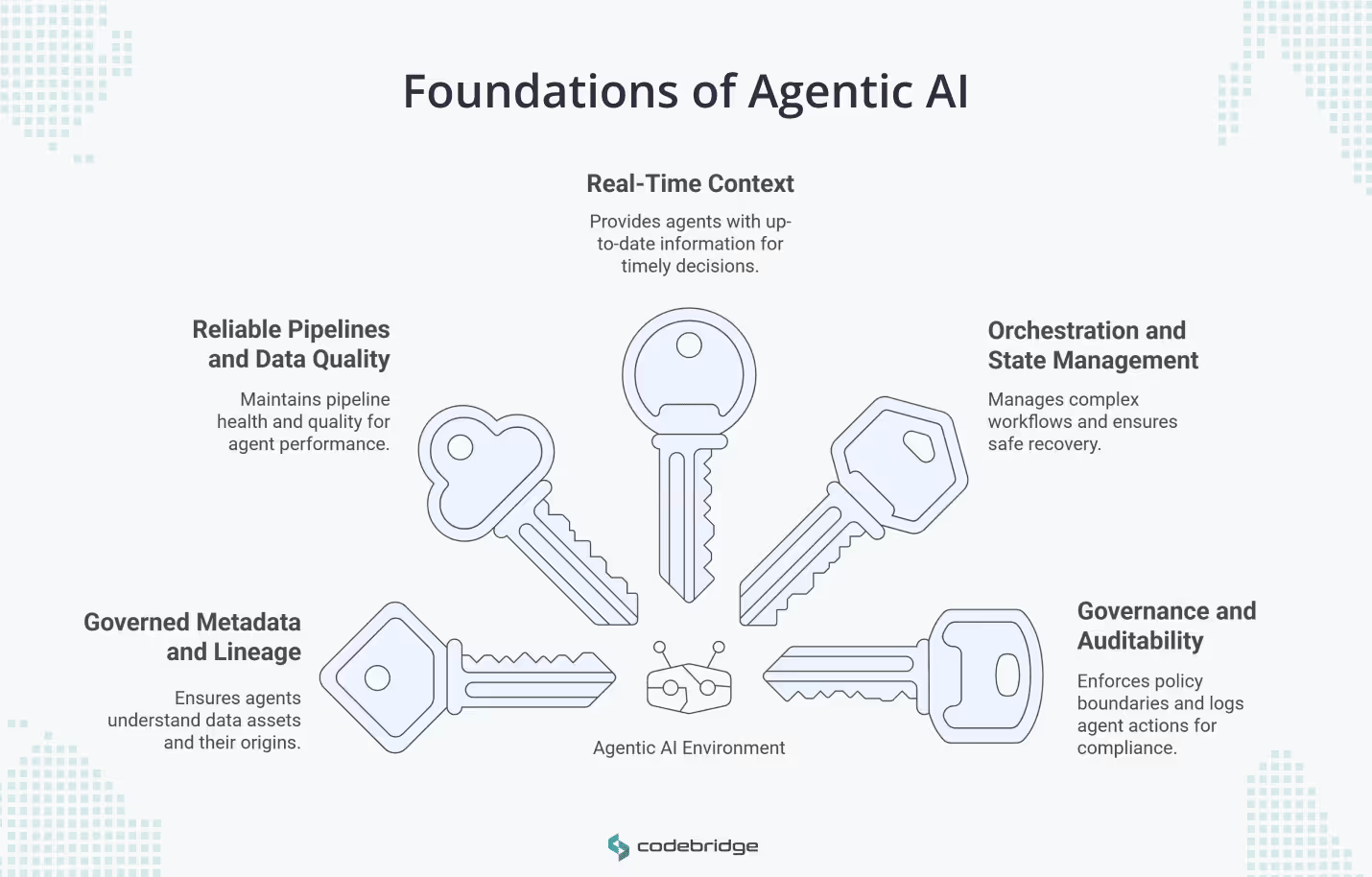

A CTO evaluating agentic AI should focus engineering investment on the host environment first. The model’s sophistication matters far less than the quality of the context and controls around it. Five foundations form the minimum viable prerequisite for safe, autonomous data engineering.

1. Governed Metadata and Lineage

Your agent needs to know what assets exist, where they came from, who owns them, and what depends on them. Raw data access is not enough. Metadata turns raw tables into usable context.

Snowflake treats lineage tracking as a practical requirement for troubleshooting and AI governance, linking model provenance back to specific training snapshots and feature pipelines. For organizations operating under frameworks like the EU AI Act, this lineage record is also a compliance requirement: you need to demonstrate reproducibility from model output back to source data.

2. Reliable Pipelines and Data Quality Controls

Pipeline health and quality signals must be exposed as machine-readable telemetry. If your team monitors pipeline status through dashboards that no one checks consistently, an agent will face the same blind spots.

Databricks’ System Tables illustrate the right pattern: exposing job timelines, execution behavior, and lineage as queryable assets. An automated system can centrally monitor jobs and identify failures without relying on tribal knowledge. The standard your environment needs to meet is that any pipeline failure is visible to the agent within seconds, with enough context to determine severity and scope.

3. Real-Time or Near-Real-Time Context

Ask this question: how fresh is the context your agent acts on? If an agent observes a traffic burst or schema drift, the value of its response depends on whether it sees the current state or a snapshot from two hours ago.

Operational agents that trigger decisions or route incidents cannot function on stale data. Confluent’s architecture addresses this through managed context engines with role-based access control, delivering fresh event streams to agents that respond to network malfunctions or payment failures within seconds. The gap between batch context and streaming context is where most agent-driven incidents originate.

4. Orchestration, State, and Safe Recovery

Production agents participate in multi-step workflows. That means they need state tracking, retries, and checkpointing. Without these, a failed agent action in step three of a five-step workflow leaves your pipeline in an indeterminate state that requires manual recovery.

Two resilience patterns matter here. Circuit breakers prevent cascade failures by stopping the agent from retrying a downstream system that is already degraded. The Saga pattern manages distributed transactions by ensuring each step has a compensating action that can undo it. Snowflake’s “control plane” concept reinforces this: coordinated execution that determines whether an action should occur and defines the recovery path if it fails.

When you build AI agents with Codebridge for data engineering workflows, circuit breaker logic and saga-pattern recovery are part of the architecture spec. They defined before the first pipeline integration, not after the first incident.

5. Governance, Policy, and Auditability

If an agent can affect business reporting or trigger downstream jobs, it needs explicit policy boundaries and tamper-proof decision logs. You need to answer two questions at any point in time: what did the agent do, and was it authorized to do it?

The NIST AI Risk Management Framework supports this by treating governance as a continual requirement across the AI lifecycle, tied to organizational risk controls. Snowflake places policy guardrails and authorized action at the core of its agentic architecture. For your implementation, governance should be enforced at runtime, not documented in a separate system and reviewed after the fact.

Case Study: How Uber Built Agentic-Ready Data Infrastructure

Uber manages over 120,000 production workflows used by 3,000 users. Their experience illustrates what a large-scale data environment needs before agentic capabilities become viable.

Uber built the Unified Data Quality (UDQ) platform to monitor and detect quality issues across 2,000 critical datasets. The system catches 90% of incidents proactively, using centralized metadata as a source of truth to auto-generate tests. That reduced manual onboarding effort and enforced consistent quality standards across teams.

They then introduced WorkflowGuard to govern their daily workflow volume. This layer enforces standards on retention periods, resource pool access, and schedule intervals. One governance policy alone reduced legacy workflows by 66% and improved the execution success rate from 69.28% to 85.22%, generating $200,000 in amortized annual compute savings.

Uber did not start with an agent. They started with metadata standards, quality infrastructure, and workflow controls. Those investments created the environment that an agentic system could operate in safely. The sequence matters: the hard part of agentic data engineering is providing the agent with trustworthy context and bounded execution, not giving a system the ability to act.

Where Mature Data Engineering Teams Get Stuck with Agentic AI

Most organizations that struggle with agentic AI do not lack tools. They lack a coherent operating model that exposes trusted context to an automated layer. The tools exist, but they do not interoperate in ways an agent can consume.

Five friction points show up repeatedly:

Incomplete metadata. Documentation exists, but teams maintain it inconsistently. Automated systems cannot ingest it because it sits in formats designed for human readers: Confluence pages, Notion docs, tribal knowledge in Slack threads.

Surface-level lineage. Lineage tracking is available but lags behind the actual platform state. The lineage graph shows what the system looked like last week, not what it looks like now.

Fragmented observability. Infrastructure signals live in Datadog. Data quality signals live in custom dashboards. Pipeline orchestration status lives in Airflow. No agent can reason across the full workflow when signals are trapped in separate tools.

Static governance. Policy exists in PDFs and wikis. Nothing enforces it at runtime. The agent can read the policy document but has no mechanism to check whether a specific action complies.

Localized context. Real-time data is available for specific ingestion points, but the downstream workflow relies on stale batch processing. The agent sees current state at the source and stale state at the destination, creating mismatches that produce wrong actions.

These barriers keep teams locked into co-pilot-level AI assistance. The agent inherits the fragmentation of the underlying environment, and no amount of model sophistication compensates for it.

Agentic AI Readiness Assessment for CTOs and Technical Leaders

Before investing in an agent platform, run your data environment against these diagnostic questions. Each one identifies a specific capability gap that will constrain agent performance in production.

Any “no” answer in this list represents a constraint that will limit agent reliability in production. Address these gaps before evaluating agent platforms.

Conclusion

Agentic AI in data engineering is an architectural and organizational challenge. The model’s intelligence is a secondary concern. What matters is whether your data layer gives the agent trustworthy state to reason over and bounded mechanisms to act within.

For most enterprises, the path to agentic capability starts with the discipline of data engineering itself: building metadata standards, reliable pipelines, and governance guardrails. Autonomy follows from a reliable data layer. It does not replace the need for one.

The organizations that will deploy agentic AI successfully are the ones investing in environment quality now. The agent is the last piece, not the first.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript