57% of organizations have an AI agent in production. 21% have a mature way to govern one. McKinsey reports 71% of organizations use generative AI, and more than 80% see no EBIT impact from it. These three numbers describe the same gap. Agents are easy to pilot and hard to operate, and most agent projects stall inside that gap.

Building an agent is a systems engineering problem. What determines whether it works in production is the scaffolding around the model, such as control flow, tool permissions, and the handoff to humans. A chatbot takes an input and returns a string. An agent holds a goal across multiple steps, calls tools, and can take actions no one signed off on.

Now, the agent can retry a failed tool call until it exhausts its token budget, pass a plausible but wrong intermediate step through to the next step without anyone noticing, or follow instructions planted in content it was asked to read. Standard CI/CD, code review, and uptime monitoring don't catch most of this.

What follows is what we require before signing off on an agent for production. Eight principles, in the order they're usually decided: workflow scope, success metrics, tool surface, control flow, observability, human oversight, security, and rollout.

Principle 1: Start With a Constrained Workflow When You Build AI Agents for Business

The most successful first deployments of AI agents are not broad "digital employees" but tightly scoped systems designed for high-volume, rules-bounded workflows. Organizations that see real ROI often cluster their agents around structured work such as customer support queries, invoice reconciliation, or internal knowledge retrieval.

Consider a well-documented reference case. An OpenAI-based customer service assistant deployed at a major fintech handled 2.3 million conversations in its first month, roughly the workload of 700 full-time agents, and dropped average resolution from 11 minutes to under 2.

The architectural reason it worked was workflow design: a known set of query types, structured customer data, existing resolution protocols, and a defined handoff to a human for anything the agent couldn't resolve. The model was a component. The workflow was the system.

A "Good First Candidate" for an agent is a process with clear success criteria and well-structured domain data. Conversely, tasks that are emotionally sensitive, ambiguous, or highly consequential should remain hybrid, where agents handle routine steps but hand off to humans for final decisions.

Principle 2: Define the Business Metric Before the AI Agent Architecture

An agent earns its place through a measurable change in how work gets done. Resolution time drops. Manual touches per case drop. First-contact resolution rises. Cost per handled task falls below the cost of the human touch it replaces. If the team can't name the target number, the team isn't ready to pick an architecture.

The hidden constraint is step‑level reliability. In many real deployments, even single‑digit to low double‑digit error rates per agent step are common, and those errors compound across a workflow. For illustration, if each step succeeds 90% of the time, a five‑step workflow only succeeds about 59% of the time — roughly 41% of runs fail somewhere along the way.

Closing that gap costs compute, latency, and engineering time: retries and fallbacks, validation gates and evaluation suites, and human review on the tail of the distribution. Budget that mitigation explicitly.

Four metrics cover most cases. Each needs a defined threshold before the build starts, not after.

- Resolution time. End-to-end latency from task arrival to task closure, measured against the human-in-the-loop baseline. Target the point where the agent is faster and the accuracy is within tolerance.

- Exception rate. Percentage of tasks that escalate to a human. Set the rate that the workflow can sustain given the current support team capacity, and track the mix of escalation reasons.

- Step-level accuracy. Reliability per sub-task, measured in production traces, not on an offline test set. For multi-step workflows, the per-step floor is usually >99%; anything lower compounds into unacceptable workflow failure.

- Cost per task. Fully loaded token spend plus infrastructure plus escalation cost, compared to the cost of the human touch it replaces. If the economics only work at model prices that haven't shipped yet, the design isn't ready.

Ownership matters as much as the metrics. In practice, the product lead sets the operating target, engineering owns step-level accuracy and cost per task, and the operations team that runs the workflow owns the exception rate. No single role can own all four without the accountability collapsing in one direction, usually toward cost, because it's the easiest to measure. Assign the four explicitly in the project brief.

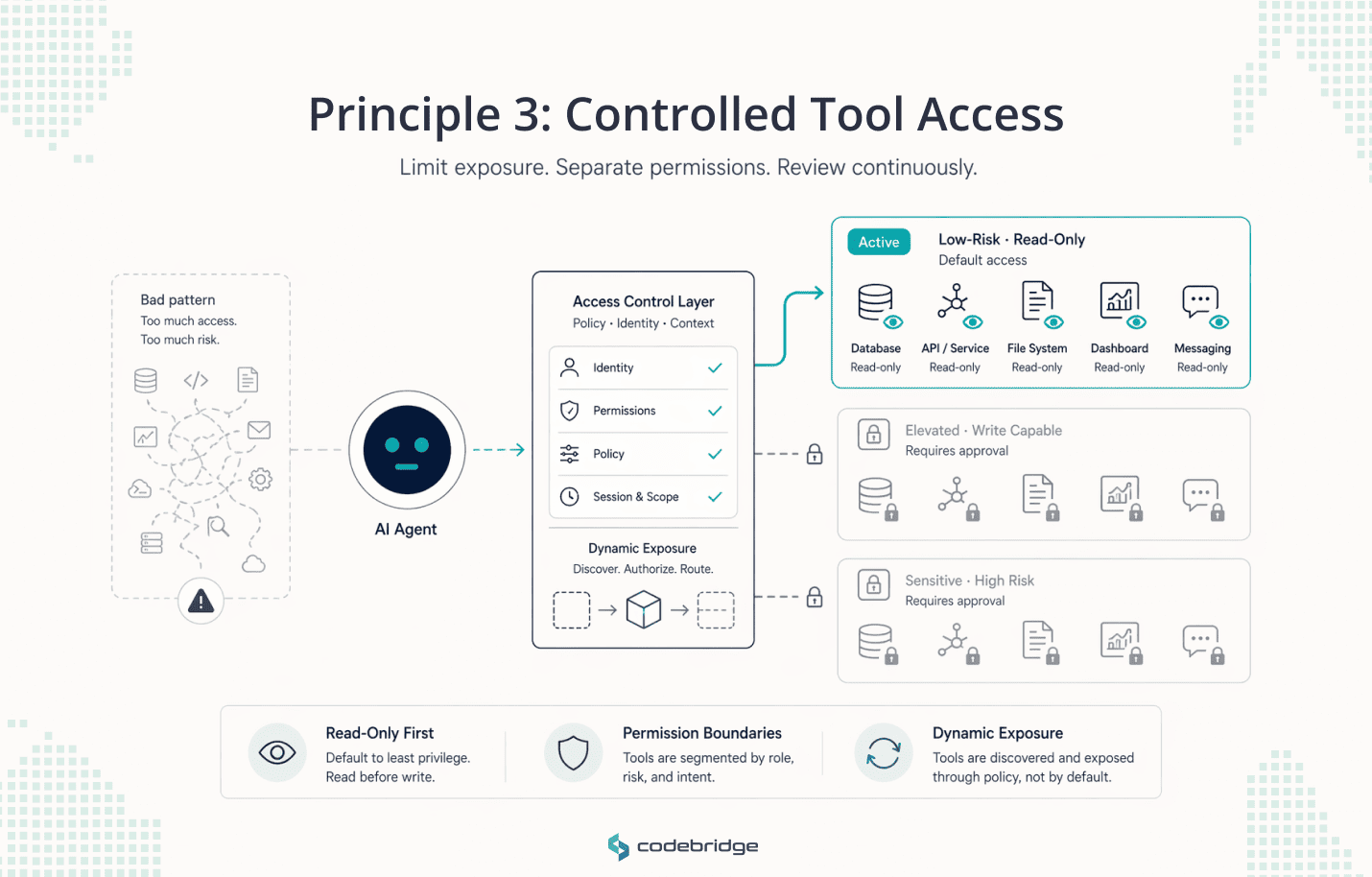

Principle 3: Tool Access and Permissions Are Core to AI Agent Architecture

The fastest path to an unreliable or unsafe agent is exposing too many tools with too much permission. Every external capability granted to an agent, whether an API call, a database query, or a file system modification, expands the attack surface and increases the risk of tool sprawl.

Published benchmarks and our own delivery experience suggest keeping the number of tools exposed at any given turn small, ideally fewer than 20, to maintain accuracy. Overloading a single agent with too many tools leads to role overload, where context drifts, and priorities blur.

Three design rules keep the tool surface governable:

- Read-Only First: Agents should initially be deployed in read-only roles, with state-changing capabilities added only after the reasoning logic is proven.

- Permission Boundaries: Tool access should be segregated by role, workflow, and risk class.

- Dynamic Exposure: Using protocols like Model Context Protocol (MCP) to allow agents to discover and interact with tools through standardized, stateful interfaces rather than hardcoded prompts.

The failure mode to design against is not the agent calling the wrong tool once. It's the agent calling a tool the team forgot it had access to, six months after the reasoning behind the permission was lost. Tool inventories age badly. Review them on the same cadence as IAM policies.

Principle 4: Enterprise AI Agents Need Explicit Control Flow, Not Agent Magic

The model cannot be the control plane. Left to decide its own loop, an agent will retry a failed tool call until the token budget runs out, invent an intermediate step to justify continuing, or exit a workflow one action short of the goal because its context window is filled with earlier reasoning. The model is good at picking the next step inside a constrained set of options. It is not good at deciding when the workflow is finished.

The control flow – what counts as a valid action, how many steps are allowed, what happens when a step fails, when the agent hands back to the caller – sits in code outside the model. Four patterns cover most production designs.

- Contracts over Free-form Action: Use typed JSON schemas and strict input/output contracts to ensure the model cannot "wander" outside of its intended function.

- Explicit Step Limits: Implement hard budget ceilings and iteration counts (e.g., maximum 10 turns) to prevent infinite loops.

- Plan-and-Execute vs. ReAct: While ReAct (Reasoning and Acting) is highly adaptable, it is token-expensive and sequential. Plan-and-Execute patterns, which generate a full plan upfront, provide more predictable costs and faster execution for structured tasks.

- Retry and Fallback Logic: Differentiate between transient network errors (retry with backoff) and logic errors (escalate to a human).

The test for whether the control flow is adequate: if the model were replaced tomorrow with a different vendor, would the workflow still run? If the answer depends on prompt tuning, the control logic is inside the model and hasn't been externalized yet.

Principle 5: AI Agent Observability Is a Prerequisite for Production Trust

You do not have a production system if you can only see the final answer; you must be able to inspect every step, tool call, and handoff. Agent quality is inherently unstable, shifting with changes in prompts, models, and data context.

89% of organizations running agents have some form of tracing. Most of those implementations capture the top-level input and output and call it done. That catches almost none of the interesting failures. The failures that matter are the ones where the agent produced a plausible final answer on top of a wrong intermediate step – the retrieval returned a stale document, the tool was called with a malformed argument that the next step silently accepted, or the plan diverged from the user's actual request two turns ago. These are only visible at the step level.

Leadership should demand visibility into:

- Trace-Level Reasoning: The ability to replay historical data to see exactly why an agent made a specific decision.

- Latency Breakdowns: Understanding the "physics of latency" between the prefill phase (input processing) and the decode phase (sequential generation).

- Regression Testing: Automated evaluations that use "LLM-as-judge" to ensure that small prompt updates do not break existing functionality.

Principle 6: Human-in-the-Loop AI Agents Belong in the Operating Model

.png)

Human approval in an agent workflow is a capacity allocation decision. The team decides which actions the agent executes unsupervised, which actions require a human signature before they commit, and which actions the human monitors after the fact. The question isn't whether to include humans, every serious deployment does, but where the approval boundary sits and how the humans stay effective at enforcing it.

Three risk categories usually carry approval gates: actions with direct financial consequences, actions visible to the end customer, and actions that create legal or regulatory exposure. A refund over a threshold. An outbound email to a named account. A data deletion. A contract modification. These are bounded, enumerable, and belong in the change-management list that the team reviews before launch, not in the agent's prompt.

Two primary patterns define this oversight:

- Human-in-the-Loop (HITL): A human must approve specific tool calls before the agent proceeds, maximizing control for sensitive operations like code deployment or database deletions.

- Human-on-the-Loop (HOTL): Agents operate with greater autonomy within "permission scopes," and humans intervene only when risk thresholds are exceeded, or confidence scores drop below a certain level.

A common pitfall is approval fatigue, where users blindly confirm warnings, effectively negating the safeguard. Strategic HITL design targets specific, high-risk decision points rather than every step, ensuring the human remains a high-level supervisor rather than a bottleneck.

Principle 7: AI Agent Security and AI Agent Governance Must Be Designed In From Day One

When an agent can process untrusted content and take external action, security becomes a systems-level problem rather than just a model-layer one. Agents are uniquely vulnerable to indirect prompt injection, where malicious instructions are embedded in a webpage or email the agent is tasked to summarize, causing it to hijack the user's session or exfiltrate data.

Meta's "Agents Rule of Two" provides a practical framework for this risk. To avoid high-impact consequences, an agent should simultaneously satisfy no more than two of the following three properties in a single session:

- [A] Process untrustworthy inputs (e.g., inbound emails, web search).

- [B] Access sensitive systems or private data.

- [C] Change state or communicate externally (e.g., send emails, move money).

If a workflow requires all three, it must not be permitted to operate autonomously and requires mandatory human-in-the-loop validation.

Principle 8: Enterprise AI Agents Should Be Rolled Out Like Serious Software

Agent deployment must follow the same rigor as any production-critical software update, including staged rollouts, versioning, and rollback readiness. Non-deterministic systems are prone to regressions that are difficult to detect through traditional CI/CD assertions.

Critical deployment strategies include:

- Immutable Deployments: Every deployment is a versioned snapshot of code, prompts, tool definitions, and model configuration that never changes once live.

- Execution Pinning: Long-running tasks (which can take weeks when waiting for human approval) must continue on the version they started with. If a code update occurs while an agent is mid-workflow, the new logic may fail to interpret the existing history, leading to silent corruption.

- Shadow-Mode Validation: Running a new version in parallel with the old one to measure behavioral divergence before full release.

- Explicit Kill Criteria: Pre-defined thresholds for latency, cost, or error rates that, if met, trigger an immediate halt to the rollout.

The readiness check: if the team pushes a new version right now, can it explain what happens to the 47 agent runs currently in flight? If the answer is "they'll be fine, probably," the deployment model isn't done.

A Practical Checklist for AI Agents in Production

Eight gates, one per principle. Each one should return a clear yes before launch, not a qualified one.

Conclusion

The production question for an agent program isn't how capable the model is. It's whether the team can answer four things without hedging: what the agent is allowed to do, what it's forbidden from doing, how every step is monitored, and where control passes to a human.

Four answers, written down, owned by named people. The eight principles in this piece describe what has to be built behind those answers, scoped workflows, defined operating metrics, governable tool surfaces, explicit control flow, step-level observability, risk-weighted oversight, security designed in rather than retrofitted, and deployment that handles in-flight runs.

Teams that treat these as engineering requirements ship agents that hold up under real load. Teams that treat them as documentation ship pilots that demo well and stall in production. The competitive advantage over the next two years is operational, not algorithmic.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript