OpenClaw is an architecturally ambitious platform, designed to serve as a single long-lived gateway for AI agents across diverse surfaces, including WhatsApp, Telegram, Slack, and Discord. By connecting these messaging layers to local nodes and execution tools, it offers founders and CTOs a powerful path toward autonomous workflows. However, the security profile of OpenClaw is frequently misunderstood by the teams deploying it into high-stakes environments.

OpenClaw's own documentation is explicit: the gateway treats authenticated callers as trusted operators, and a single instance is not a hostile-tenant security boundary. When CTOs plug this personal-tier design into shared team inboxes or customer-facing workflows without redesigning isolation and governance, they inherit a trust model that doesn't match the job the system is doing.

This article covers the operational failure modes that follow from that mismatch, plus a meta-failure that amplifies them: treating self-hosting as a substitute for governance.

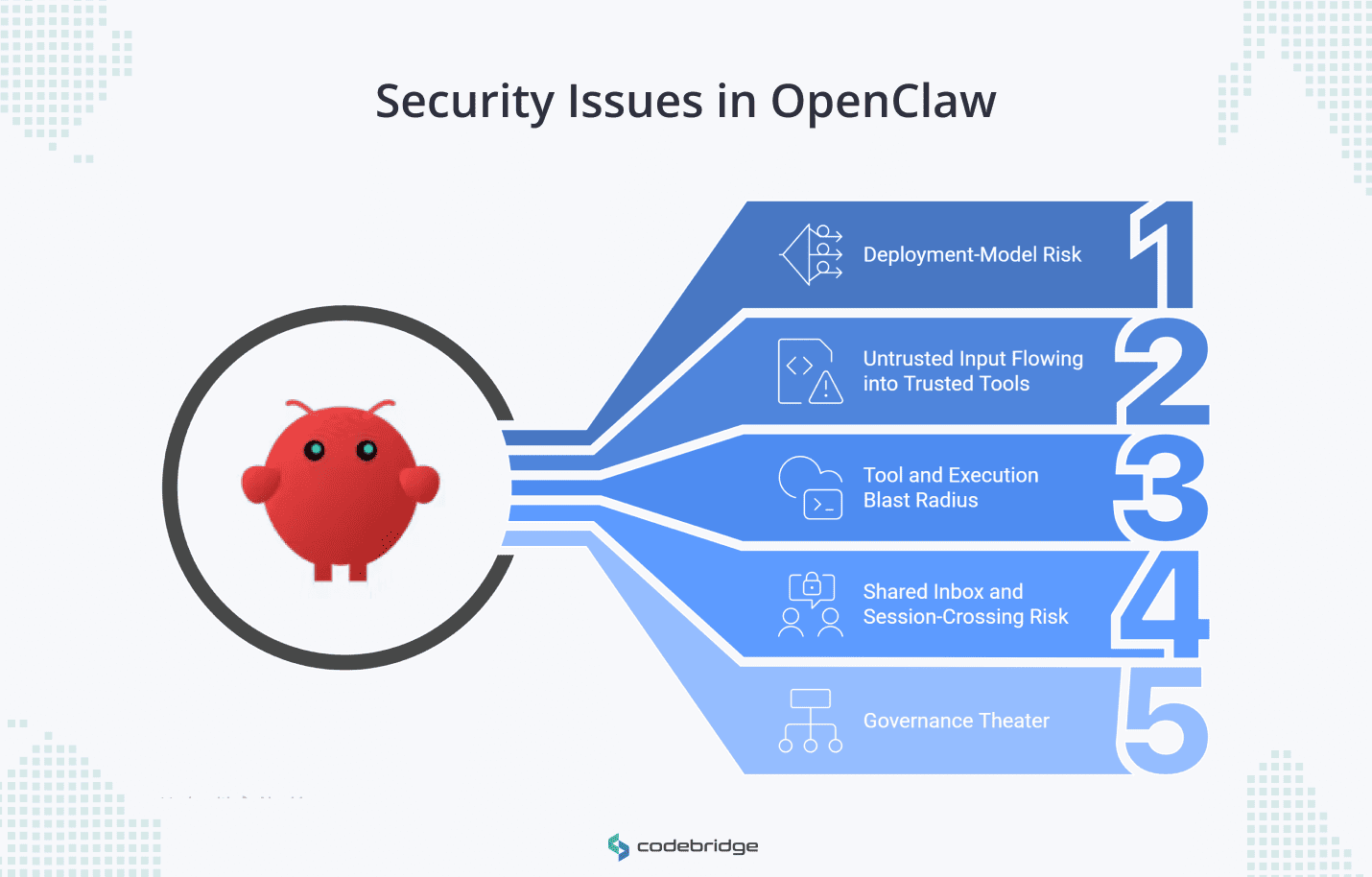

What Security Issues in OpenClaw Really Look Like

To evaluate whether OpenClaw is safe for a specific use case, businesses must look past named vulnerabilities to five distinct categories of operational failure.

1. Deployment-Model Risk

OpenClaw's documentation is explicit: a single gateway is not a multi-tenant security boundary, and running one for mutually untrusted or adversarial operators isn't supported. On a shared instance, one operator can see another's session history and tool calls, and depending on configuration, their credentials. A team that deploys this as a production boundary has a shared workstation, not a segmented service.

2. Untrusted Input Flowing into Trusted Tools

Any bytes reaching the agent from a messaging channel, a web search, an email, or an attachment are attacker-controlled until proven otherwise. This is prompt injection, and the mechanism is mundane. A page the agent is asked to summarize contains instructions to email the contents of another document to an outside address, and the model follows them. OpenClaw treats inbound content as untrusted by design. Most teams don't configure the surrounding tools to enforce that boundary in practice.
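One way to enforce that boundary in the surrounding tools is to track whether untrusted content has entered the current turn and to gate side-effecting tools on that flag. The sketch below is illustrative only: the `Turn` class, the tool names, and the gating rule are assumptions made for this example, not OpenClaw APIs.

```python
from dataclasses import dataclass, field

# Hypothetical tool names for illustration; not OpenClaw identifiers.
SIDE_EFFECT_TOOLS = {"send_email", "exec", "write_file"}


@dataclass
class Turn:
    """Context for one agent turn, tracking which untrusted sources were read."""

    tainted_sources: list[str] = field(default_factory=list)

    def ingest(self, source: str, trusted: bool) -> None:
        # Record every source that the operator did not produce.
        if not trusted:
            self.tainted_sources.append(source)

    def may_call(self, tool: str) -> bool:
        # Read-only tools stay available. Side-effecting tools are refused
        # (or, in a real system, routed to human approval) once any
        # untrusted content is in context.
        return tool not in SIDE_EFFECT_TOOLS or not self.tainted_sources


turn = Turn()
turn.ingest("web:https://example.com/page", trusted=False)
print(turn.may_call("summarize"))   # read-only tool
print(turn.may_call("send_email"))  # side-effecting tool
```

The design choice here is that the decision does not depend on what the model says it intends to do. It depends only on what the turn has read, which is the part an attacker cannot talk their way around.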

3. Tool and Execution Blast Radius

Teams give OpenClaw agents shell access, node commands, and filesystem reach because the workflows demand it. The platform ships exec approvals and allowlists as guardrails, and they work for their intended purpose: stopping an operator from firing a destructive command by accident. They are not designed to contain a hostile user driving the same agent.

GHSA-48wf-g7cp-gr3m illustrates the gap. An exec-guard bypass via env -S let the policy analyzer see a different command than the runtime executed. Allowlists built on static analysis of shell semantics have a recurring history of this class of mismatch. Treating them as a security boundary for untrusted input means accepting that bug category as a live risk.
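The general shape of this mismatch can be reproduced in a few lines. The sketch below is not OpenClaw's policy analyzer and does not reproduce the advisory; the allowlist contents and both check functions are hypothetical. It only shows how a check that inspects the first token approves a command whose real payload is hidden behind env -S.

```python
import shlex

# Hypothetical allowlist for illustration only.
ALLOWED = {"env", "ls", "echo"}


def naive_check(command: str) -> bool:
    """Approve when the first token is allowlisted (the flawed approach)."""
    argv = shlex.split(command)
    return bool(argv) and argv[0] in ALLOWED


def strict_check(command: str) -> bool:
    """Unwrap `env -S STRING` layers, then fail closed on any other env use."""
    argv = shlex.split(command)
    # env -S re-splits its argument into a new command line at runtime.
    # shlex only approximates env's own splitting rules, which is itself
    # a reminder of how easily analyzer and runtime can disagree.
    while len(argv) >= 3 and argv[0] == "env" and argv[1] == "-S":
        argv = shlex.split(argv[2]) + argv[3:]
    if not argv or argv[0] == "env":
        return False  # unrecognized wrapper form: refuse rather than guess
    return argv[0] in ALLOWED


cmd = 'env -S "rm -rf /tmp/x"'
print(naive_check(cmd))   # the analyzer sees "env"
print(strict_check(cmd))  # the runtime would execute "rm"
```

Even the stricter version only handles one wrapper form. Every additional wrapper, flag spelling, or shell feature is another place where the analyzer's view and the runtime's behavior can diverge.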

4. Shared Inbox and Session-Crossing Risk

OpenClaw routes incoming messages to sessions. In a shared Slack channel or group chat, the default routing pins multiple users to a single "main" session, which means one user's question can surface context from another user's earlier conversation, including document contents and tool results. The fix is one config line: session.dmScope: 'per-channel-peer'. Most teams don't set it because the default produces no error. There's no alert, just leakage.
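The effect of that setting can be sketched as a session-key function. This is a simplified model, not OpenClaw's routing code: the `session_key` function, the key format, and the use of "main" as a scope value are assumptions for illustration. Only the 'per-channel-peer' value comes from the text above.

```python
def session_key(dm_scope: str, channel: str, peer: str) -> str:
    """Hypothetical session-key derivation for two routing modes."""
    if dm_scope == "main":
        # Every sender lands in the same session and shares its context.
        return "main"
    if dm_scope == "per-channel-peer":
        # Each (channel, sender) pair gets its own isolated session.
        return f"{channel}:{peer}"
    raise ValueError(f"unknown scope: {dm_scope}")


for scope in ("main", "per-channel-peer"):
    alice = session_key(scope, "slack", "alice")
    bob = session_key(scope, "slack", "bob")
    print(scope, "shared" if alice == bob else "isolated")
```

Under the shared key, nothing fails. Both users simply read and write the same history, which is why the leak produces no error.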

5. Governance Theater

The preceding four risks are technical. The fifth is organizational and sits above them: treating "we self-host" as a meaningful security statement. Self-hosting determines where the bytes live. It doesn't map risks, measure controls, or establish ownership of incident response when an agent executes a command it shouldn't have. Teams that skip this layer end up with infrastructure they operate and agent behavior they can't explain, which is the condition that produces the worst postmortems.

Where OpenClaw Security Risk Starts to Rise

Three variables drive OpenClaw risk in practice: the number of operators sharing the instance, the agent's capability surface, and the sensitivity of the data passing through it. Each raises required control levels independently. Scaling any one of them while leaving the others untreated produces a deployment that runs cleanly right up until the first incident.

- Gateway sharing. The moment two independent operators touch the same instance, you need per-operator isolation or a separate gateway per team.

- Local infrastructure access. When the agent can read local files, capture a screen, or run system.run on a node, treat every tool as a privilege to design, not a convenience to enable.

- Public or semi-public channels. Any participant in a Slack room or group chat becomes part of the attack surface. One of them only needs the agent to read something.

- Regulated data. Once customer PII, financial credentials, or NDA-bound material enter the flow, the question isn't whether you need governance but whether the governance you have is reviewable.

How to Reduce OpenClaw Risk Before It Reaches Production

These risks are real, and it is far better to address them before they turn into incidents. At Codebridge, we recommend starting with the controls below to reduce OpenClaw risk and build a stronger security baseline.

- Separate trust boundaries. One gateway per user group at a minimum. One VPS or OS user per group when the data sensitivity demands it. Don't share a gateway across teams with different authority levels.

- Strip tool permissions by default. Start every workflow on a minimal profile and add capabilities only when the use case demands them. Set tools.fs.workspaceOnly: true to contain the filesystem reach to a specific directory.

- Treat messaging as hostile input. Enable session.dmScope: 'per-channel-peer' on any shared surface. Sandbox any agent that reads web pages, emails, or attachments the team didn't produce.

- Keep credentials out of prompts. Use environment variables or an encrypted secret provider. A secret pasted into a prompt is now part of the transcript, the logs, and potentially the context of whatever model processed it.

- Establish reviewable governance. Map the agent's capabilities, track how often approvals fire, and assign ownership for incidents. The NIST AI RMF (Govern, Map, Measure, Manage) is a reasonable scaffold if you don't already have one.
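Two of the controls above name concrete settings, which makes them easy to check automatically before a deployment goes live. The sketch below assumes the gateway configuration has already been loaded into a nested Python dict. The loading step, the `audit` helper, and the nested layout implied by the dotted names are assumptions for this example. The setting that selects a minimal tool profile is not named in this article, so it is left out rather than guessed.

```python
from typing import Any

# Settings and values named in the recommendations above.
EXPECTED: dict[str, Any] = {
    "session.dmScope": "per-channel-peer",
    "tools.fs.workspaceOnly": True,
}


def get_path(config: dict[str, Any], dotted: str) -> Any:
    """Walk a nested dict by dotted path; return None if any part is missing."""
    node: Any = config
    for part in dotted.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node


def audit(config: dict[str, Any]) -> list[str]:
    """Return one finding per setting that is missing or has another value."""
    findings = []
    for path, want in EXPECTED.items():
        got = get_path(config, path)
        if got != want:
            findings.append(f"{path}: expected {want!r}, found {got!r}")
    return findings


# Hypothetical config with one control set and one left at its default.
config = {"session": {"dmScope": "per-channel-peer"}, "tools": {"fs": {}}}
for finding in audit(config):
    print(finding)
```

Running a check like this in CI turns a silent default into a visible failure, which is the property the shared-session problem lacks.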

OpenClaw Production Hardening Checklist

Self-Hosted vs. Managed: Where OpenClaw GDN Fits

Most of the list above is repeatable infrastructure work rather than product work. OpenClaw GDN handles the gateway, isolation, credential boundary, and audit layers at the platform level, which leaves the workflow design to your team. Teams that would rather not rebuild that stack can provision an isolated GDN instance at Openclaw.gdn.

GDN provisions a dedicated VM per customer with firewall protection and what its architecture calls zero-access: after provisioning, GDN removes its own SSH path to the instance, so API keys and runtime data stay on the customer's VM and nowhere else. That closes a class of operator-insider risk most self-hosted deployments leave implicit.

Managed hosting reduces infrastructure risk. It does not reduce workflow risk. A team on GDN still decides which tools the agent can reach, how it handles untrusted input, and who approves exec calls. Those decisions are the product, and they stay with the team that owns the product.

Do You Need an OpenClaw Security Review Yet?

The maturity of your security posture should match the complexity of your deployment.

Conclusion

OpenClaw isn't insecure. Its documentation is clear about the trust model it was built for: one operator, one gateway, personal scope. The security problem is structural and starts when a team deploys that model into shared inboxes, customer-facing flows, or workflows with shell access and regulated data in them, without rebuilding the isolation and governance those contexts require.

The work to close that gap is known. Separate trust boundaries. Strip tool permissions. Treat inbound content as hostile. Keep credentials out of prompts. Maintain governance you can review. The question for most teams isn't whether to do this work, but whether to do it themselves.

If you're running an OpenClaw deployment against real workloads and want a second set of eyes on the architecture, book a call with a secure integration specialist. Thirty minutes is usually enough to tell whether the current deployment needs hardening, replatforming, or just configuration changes.
