Most companies adopted AI tools faster than they updated their security architecture to account for them. Engineering teams run copilots, product teams connect LLM APIs to internal data, and business users spin up AI assistants through low-code tools, often without IT's awareness or approval.

The result is a distributed AI footprint that security teams cannot fully inventory, classify, or govern. Recent research found that 99.4% of CISOs reported SaaS or AI security incidents in 2025, yet only 6% of organizations have an advanced AI security strategy in place.

This would be a manageable problem if AI components behaved like traditional software. They don't. An internal summarization agent can, through misconfigured permissions, query financial databases it was never intended to access. A customer-facing copilot can leak context from one user session into another.

For technical leadership, this is a systems hygiene problem. You need a control layer that provides continuous visibility across your AI footprint, and most organizations do not have one yet.

What AI Security Posture Management Is

AI Security Posture Management (AI-SPM) gives security teams continuous visibility into where AI systems run, what data they access, how they're configured, and whether they comply with the policies the organization has set. It treats every AI component as part of the attack surface.

The clearest way to understand the scope: traditional Cloud Security Posture Management (CSPM) checks whether a server is patched. AI-SPM checks whether the model running on that server is vulnerable to prompt injection, or whether it can reach customer PII through an over-permissioned retrieval pipeline.

That distinction matters because the categories in this space overlap enough to confuse procurement and planning.

AI-SPM vs. DSPM for AI vs. AI Governance

Most organizations need all three, but AI-SPM is the layer that closes the gap between stated policy and observed reality.

How AI-SPM Works in Practice

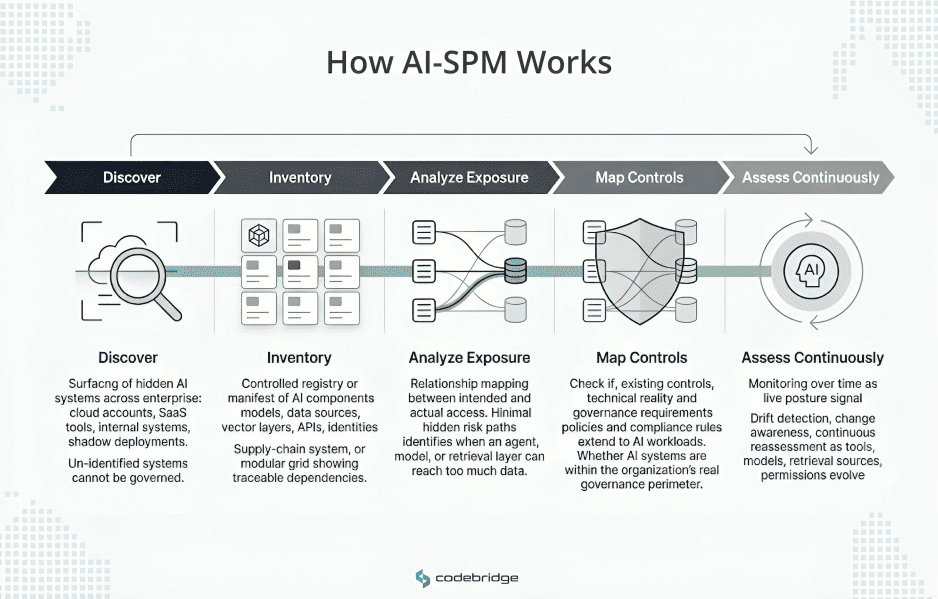

AI-SPM operates as a continuous process across five layers. Each layer solves a specific visibility or control problem that becomes a liability if left unaddressed.

Discovery

You cannot manage AI workloads you have not found. Discovery scans your infrastructure (cloud accounts, SaaS platforms, internal applications, and on-premises systems) and identifies every AI component running in the environment, including those deployed without IT's knowledge.

This is harder than it sounds. Business users build AI workflows through low-code tools and "vibe coding." Engineering teams spin up model endpoints in dev accounts that never get registered. SaaS vendors embed AI features that activate by default. A discovery process that only scans approved infrastructure will miss a significant fraction of actual AI usage.
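The gap between what a scan finds and what is registered can be sketched as a simple set difference. This is a minimal illustration, not a discovery tool: the `DiscoveredWorkload` type, the `find_unregistered` helper, and all workload names are hypothetical, and a real scan would populate the list from cloud, SaaS, and on-premises sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscoveredWorkload:
    """One AI component found by a scan (hypothetical schema)."""
    identifier: str  # e.g. an endpoint name or SaaS feature name
    source: str      # where the scan found it: "cloud", "saas", "on_prem"


def find_unregistered(discovered, registered_ids):
    """Return workloads found by scanning that are absent from the approved registry."""
    registered = set(registered_ids)
    return [w for w in discovered if w.identifier not in registered]


# Illustrative data only: one approved workload, two that nobody registered.
discovered = [
    DiscoveredWorkload("summarizer-prod", "cloud"),
    DiscoveredWorkload("dev-llm-endpoint-7", "cloud"),
    DiscoveredWorkload("crm-ai-assistant", "saas"),
]
registered_ids = ["summarizer-prod"]

shadow = find_unregistered(discovered, registered_ids)
print([w.identifier for w in shadow])
```

The comparison is only as good as the scan that feeds it: if `discovered` is built from approved infrastructure alone, the difference will always look empty.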

Inventory (AI Bill of Materials)

Once you've found the workloads, you need to catalog them. An AI Bill of Materials (AI-BOM) records the components behind each AI system: which model it uses, what data sources it connects to, which vector stores or retrieval layers are involved, what third-party APIs it calls, and which identities have access.

Think of this as the supply chain manifest for an AI system. Without it, you cannot answer basic security questions:

- "What happens to our exposure if this model provider has a breach?"

- "Which systems are affected if we revoke access to this data source?"
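As a rough sketch, an AI-BOM entry can be modeled as a record with one field per component type listed above, which makes both questions simple lookups. The `AIBomEntry` schema, the helper functions, and every system, model, and provider name here are assumptions for illustration, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class AIBomEntry:
    """One AI system and the components behind it (hypothetical schema)."""
    system: str
    model: str
    model_provider: str
    data_sources: set = field(default_factory=set)
    retrieval_layers: set = field(default_factory=set)
    third_party_apis: set = field(default_factory=set)
    identities: set = field(default_factory=set)


def affected_by_provider(bom, provider):
    """Systems exposed if the given model provider has a breach."""
    return sorted(e.system for e in bom if e.model_provider == provider)


def affected_by_data_source(bom, source):
    """Systems affected if access to the given data source is revoked."""
    return sorted(e.system for e in bom if source in e.data_sources)


# Illustrative inventory with two systems sharing one data source.
bom = [
    AIBomEntry("support-copilot", "model-a", "provider-x",
               data_sources={"tickets-db", "kb-docs"},
               retrieval_layers={"kb-vector-store"},
               identities={"svc-support"}),
    AIBomEntry("doc-summarizer", "model-b", "provider-y",
               data_sources={"kb-docs"},
               identities={"svc-summarizer"}),
]

print(affected_by_provider(bom, "provider-x"))
print(affected_by_data_source(bom, "kb-docs"))
```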

Exposure analysis

This layer maps the actual data access paths of your AI systems and compares them to what's intended. The gap between those two things is where breaches start.

A common finding: an AI agent built for internal text summarization inherits broad service account permissions and can query databases containing customer PII, financial records, or source code. The team that built the agent never intended this access. The permissions were inherited from the infrastructure defaults, and no one reviewed them against the agent's actual scope.

Exposure analysis identifies these misalignments before an attacker or a misconfigured prompt does.
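In its simplest form, the comparison is a set difference between what each system can reach and what it was meant to reach. The sketch below assumes both maps already exist (in practice, the "actual" side would come from resolving service account permissions); `exposure_gaps` and the resource names are hypothetical.

```python
def exposure_gaps(intended, actual):
    """Map each system to the resources it can reach but was never meant to."""
    gaps = {}
    for system, reachable in actual.items():
        # A system with no declared scope is treated as intending no access.
        excess = set(reachable) - set(intended.get(system, ()))
        if excess:
            gaps[system] = sorted(excess)
    return gaps


# Illustrative data: a summarization agent that inherited broad permissions.
intended = {"doc-summarizer": {"kb-docs"}}
actual = {"doc-summarizer": {"kb-docs", "customer-pii-db", "finance-db"}}

exposure = exposure_gaps(intended, actual)
print(exposure)
```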

Policy coverage and control mapping

Security teams need to verify that existing controls extend to AI workloads. Many organizations have mature access controls, logging, and review processes for human users and traditional services, but AI agents, model endpoints, and retrieval pipelines sit outside those controls entirely.

This layer maps your technical controls against the regulatory and compliance frameworks you operate under (the EU AI Act, the NIST AI RMF, and your internal security policies) and identifies coverage gaps. The question it answers: "Do our controls actually apply to the AI layer, or do they only cover the infrastructure underneath it?"
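A coverage check can be sketched as comparing the controls each workload actually has against a required baseline. The control names in `REQUIRED_CONTROLS` are placeholders for whatever your internal policy defines; they are not taken from the EU AI Act or the NIST AI RMF, and the workloads are illustrative.

```python
# Hypothetical baseline: the controls an internal policy requires for every workload.
REQUIRED_CONTROLS = frozenset({"access_review", "audit_logging", "incident_response"})


def coverage_gaps(workload_controls, required=REQUIRED_CONTROLS):
    """Map each workload to the required controls it is missing."""
    gaps = {}
    for workload, controls in workload_controls.items():
        missing = required - set(controls)
        if missing:
            gaps[workload] = sorted(missing)
    return gaps


# Illustrative data: a traditional service is covered, the AI workloads are not.
workload_controls = {
    "hr-portal": {"access_review", "audit_logging", "incident_response"},
    "support-copilot": {"audit_logging"},
    "retrieval-pipeline": set(),
}

control_gaps = coverage_gaps(workload_controls)
print(control_gaps)
```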

Continuous posture assessment

AI systems are non-deterministic. Their behavior changes as models are updated, new tools are connected, retrieval sources are modified, and permissions drift. A system that was secure at deployment can become exposed through routine changes that no one flags.

Continuous assessment treats posture as a signal, not a snapshot. It detects configuration drift, newly introduced data access paths, and changes in the threat landscape as they happen, rather than surfacing them in the next quarterly audit.
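One way to picture drift detection is as a diff between two posture snapshots, each mapping a system to the resources it can reach. This is a sketch under that assumption; `diff_posture`, the finding labels, and the snapshot contents are hypothetical, and a real assessment would also track configuration and model changes.

```python
def diff_posture(previous, current):
    """Compare two snapshots (system -> set of reachable resources) and list what changed."""
    findings = []
    for system in sorted(current):
        if system not in previous:
            # A system that did not exist in the last snapshot.
            findings.append((system, "new_system", sorted(current[system])))
            continue
        added = current[system] - previous[system]
        if added:
            # An existing system gained access it did not have before.
            findings.append((system, "new_access_path", sorted(added)))
    return findings


# Illustrative snapshots taken at two points in time.
previous = {"doc-summarizer": {"kb-docs"}}
current = {
    "doc-summarizer": {"kb-docs", "finance-db"},
    "sales-agent": {"crm"},
}

findings = diff_posture(previous, current)
for finding in findings:
    print(finding)
```

Running the diff on every change event, rather than on a schedule, is what turns the snapshot comparison into a continuous signal.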

Where posture breaks first: four recurring exposure zones

Security failures in AI deployments tend to cluster in four areas. These are not independent categories. They compound: untracked AI usage leads to uncontrolled data exposure, which persists because policies haven't been extended to cover AI workloads, and all of it scales with the attack surface that agentic workflows introduce.

Unknown AI usage (shadow AI)

Employees adopt AI tools faster than most organizations can evaluate, approve, and provision them. Over 80% of Fortune 500 companies now have active AI agents, many built by business users with low-code platforms and no security review. When teams build and deploy AI tools outside sanctioned channels, those tools inherit no monitoring, no access controls, and no incident response coverage.

The challenge isn't that people are acting maliciously. They're solving real problems with available tools. But every untracked AI system is a blind spot in your security posture.

Sensitive data exposure

AI systems consume data aggressively. Training pipelines pull from internal repositories. Retrieval-augmented generation indexes documents into vector stores. Copilots process the content of whatever files users open in their workflow.

The architectural problem: once sensitive data enters a model's training set or a shared vector database, you cannot selectively retrieve or delete it. The data is embedded in weights or chunked into vectors that don't preserve record-level boundaries. PII, trade secrets, or regulated data that makes it into these systems becomes a persistent exposure.

Incomplete policy coverage

Most organizations have access controls, audit logging, and review processes built around human users and traditional service accounts. AI agents, model endpoints, and retrieval pipelines are a different class of identity. They operate continuously, make autonomous decisions, and interact with backend systems through API calls that bypass the user-facing controls.

NIST's AI security guidance highlights that traditional vulnerability scanners miss AI-specific artifacts. If your security policy defines access rules for human users and standard services but has no provisions for non-human AI identities, your policy coverage has a structural gap.

The agentic attack surface

Autonomous agents introduce a qualitatively different risk profile. When an agent can read external inputs, invoke tools, and trigger actions in other systems, it becomes a target for indirect prompt injection, also called a cross-prompt injection attack (XPIA).

The attack pattern: an agent processes an external document, email, or webpage that contains hidden instructions embedded in the content. The agent interprets these instructions as part of its task and executes them. The user who triggered the workflow sees none of this.

This is an inherent property of systems that process untrusted input and have tool-calling permissions. Every external data source that an agent can read is a potential injection vector. Every tool an agent can invoke is a potential escalation path. The attack surface grows multiplicatively with the agent's capabilities.
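The multiplicative growth can be made concrete by enumerating every pairing of an untrusted source with an invocable tool. This is a simplified worst-case model that assumes any source can influence any tool call; the source and tool names are illustrative.

```python
from itertools import product


def injection_paths(untrusted_sources, tools):
    """Enumerate every (source, tool) pair: an injected instruction arriving
    via the source could, in the worst case, drive the tool."""
    return list(product(untrusted_sources, tools))


# Illustrative agent: three readable sources, four callable tools.
sources = ["inbound_email", "web_page", "shared_document"]
tools = ["send_email", "query_database", "create_ticket", "update_record"]

paths = injection_paths(sources, tools)
print(len(paths))

# Granting one more tool adds a path for every existing source.
paths_after = injection_paths(sources, tools + ["delete_file"])
print(len(paths_after))
```

Under this model, removing a single tool or source removes a whole row or column of paths, which is why scoping an agent's capabilities tends to reduce exposure more than hardening any one path.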

Do you actually need AI-SPM?

A useful starting test: Can you list every AI system in your environment and state what data each one can access? If you cannot, you have an unmanaged posture problem regardless of scale.

For a more structured assessment, these five questions help determine how urgently you need a formal AI-SPM program:

If your AI usage is limited to a single isolated pilot with no access to sensitive data and no agentic autonomy, formal AI-SPM may be premature. In practice, that state rarely lasts. AI adoption tends to expand quickly once an initial use case proves value, and the posture gap widens with each new deployment.

What AI-SPM does not replace

AI-SPM is a visibility and prioritization layer. It tells you what's running, what's exposed, and where your controls fall short. It does not fix those problems by itself. Four capabilities sit outside its scope and remain essential.

Secure system design. Posture management detects misconfigurations and policy gaps after deployment. It does not prevent them. If your AI systems are built without threat modeling, input validation, and scoped permissions from the start, AI-SPM will surface a long list of findings — but you'll be remediating problems that better design would have prevented.

Least-privilege architecture. Every AI agent, model endpoint, and retrieval pipeline needs access controls scoped to its actual function. AI-SPM can identify over-privileged identities, but it cannot enforce least-privilege on your behalf. Your teams need to build and maintain those constraints.

Human-in-the-loop controls. Autonomous AI systems making high-stakes decisions — financial transactions, data deletions, access grants — need explicit human confirmation gates. AI-SPM can tell you which agents have the ability to take these actions, but the decision to require human approval is an architectural and policy choice.

Logging and observability. AI-SPM depends on data from your logging, tracing, and monitoring infrastructure. If your AI workloads don't generate audit logs, or if those logs aren't collected and retained, posture management has no signal to operate on.

Conclusion

AI-SPM does not solve every AI security problem. It solves the first one: knowing what you have, what it can access, and whether your controls cover it. Without that foundation, every other security investment (red teaming, model evaluation, policy development) is operating on incomplete information.

For technical leadership, the practical question is straightforward. You need to determine whether your organization can account for its AI footprint today, and if it cannot, what tooling and processes you need to close that gap before the next deployment widens it.
