Teams building AI features for B2B SaaS reach the same architectural decision point early in development. The model performs well in a notebook. It produces reasonable answers against test data. Then the project slows down on a more consequential question: should the feature retrieve context at query time, fine-tune the model on domain data, or rely on deterministic logic in code?

The wrong choice usually fails in familiar ways. A RAG pipeline used for a feature that really required consistent formatting can hallucinate on edge cases and drive up token costs. A fine-tuned model applied to data that changes weekly can lose accuracy quickly. Workflow logic used where natural language understanding is actually required can turn into a brittle rules engine that demands constant manual updates as the business evolves.

These failures often show up between pilot and production. In most cases, the root cause is the same: the team committed to an architecture before defining requirements for freshness, consistency, latency, and auditability. The better approach is to evaluate those requirements first, then match the architecture to the feature rather than the other way around.

This article explains where RAG, fine-tuning, and workflow orchestration each fit, where each one breaks down, and how to select the right approach for a specific B2B SaaS feature. The objective is to give product and engineering leaders a practical decision framework before integration work begins.

When RAG Is the Right Architectural Choice

RAG is the right fit when a feature must answer questions using data that cannot be frozen. That includes tenant-specific configurations, internal document libraries, pricing rules that change daily, and compliance records updated on unpredictable schedules. When the underlying knowledge moves faster than a model can be retrained or redeployed, retrieval is the approach that keeps pace.

At a practical level, RAG works in a simple way. Enterprise content is converted into vector embeddings and stored in a searchable index. When a user query arrives, the system retrieves the most relevant chunks and passes them to the LLM as context alongside the query. The model’s weights remain fixed. The knowledge stays external and replaceable. That separation is what makes RAG operationally flexible: the team updates the index, not the model.

Keeping knowledge outside the model is also one of RAG’s biggest advantages in regulated environments. Because each response can be traced back to the source documents selected by the retriever, teams can create an auditable link between the system’s answer and the information it used. For B2B products operating under GDPR, HIPAA, or the EU AI Act, that matters in practical terms. If a tenant requests data deletion, the records can be removed from the index. The model itself never absorbed that data, so there is no ambiguity about whether it still influences outputs. By contrast, when customer data has been blended into model weights through fine-tuning, verified removal becomes a governance problem.

RAG does, however, come with tradeoffs that need to be understood up front.

The first is retrieval quality. A RAG system is only as good as the chunks it retrieves. If chunking splits important context incorrectly, or if the embedding model does not represent domain-specific terminology well, the retriever may surface the wrong information. The LLM can then produce an answer that looks grounded and confident while still being wrong. IIn production, this kind of error is hard to catch because the answer still looks credible. Diagnosing it requires tracing the retrieval layer rather than the model layer, which means teams need observability and tooling that many do not build until after the first incident.

The second tradeoff is latency. Retrieval adds roughly 50 to 300 milliseconds of overhead per query. For internal knowledge assistants or document Q&A features, that may be negligible. For experiences that are closer to real-time and operating at scale, that overhead needs to be evaluated against the product requirement.

The third is cost predictability. RAG increases per-query token costs because retrieved context is injected into every prompt. The more content the retriever pulls in, the larger the prompt becomes and the more expensive the request is. Unlike a fine-tuned model, where prompt size can stay small and consistent, RAG costs rise with retrieval volume. In multi-tenant products, where query complexity can vary significantly by customer, that makes budgeting less predictable.

These tradeoffs are manageable, but they are still real operational commitments. Teams should account for them before locking in the architecture.

When Fine-Tuning Is the Better Fit

Fine-tuning is most useful when the issue is not what the model knows, but how it behaves. Many teams initially reach for fine-tuning because they assume they need to teach the model their domain knowledge. In this context, that is the wrong reason. In B2B SaaS, fine-tuning is the better choice when the requirement is structurally consistent output across large numbers of edge cases that prompt engineering alone cannot reliably handle. That can include structured JSON for downstream services, predictable response lengths for UI consistency, required domain terminology, or stable reasoning patterns that support user trust over time.

The appeal is practical: lower latency, smaller prompts, and more predictable per-query cost. Without a retrieval step, inference latency is lower. Without injected context, prompts remain small and consistent. That makes per-query cost easier to model, which is especially important when pricing AI features in a multi-tenant product. With RAG, cost varies with retrieval volume. With fine-tuning, costs can be modeled more directly against query volume.

The heavier burden appears before and after deployment.

Fine-tuning requires data curation, which means assembling a high-quality labeled dataset through either months of manual effort or a structured pipeline the team maintains over time. It also requires evaluation infrastructure. Teams need to prove that the tuned model actually outperforms the base model with strong prompt design. Without that comparison, it is easy to invest in ML overhead only to discover that better prompting would have delivered most of the benefit.

There is also retraining risk. Each retraining cycle can narrow the model too far, improving performance on the target task while weakening performance on adjacent queries that users still expect it to handle. That degradation compounds over time and requires dedicated benchmarks to detect.

Governance is another major consideration. When a customer’s data has been included in the training set, that customer’s patterns are embedded in the model’s weights. If that customer later invokes GDPR erasure rights, it is difficult to prove that their data no longer affects outputs. In practice, the remedy is retraining from scratch on a cleaned dataset. In a multi-tenant product, that is not a cleanup task after the fact. It is a compliance process that has to be designed before training begins.

Fine-tuning is therefore the right choice when behavioral consistency is non-negotiable and the underlying knowledge remains stable. The real question is whether that level of infrastructure and governance commitment is justified, or whether a well-designed RAG system with strong prompt engineering gets close enough.

Where Workflow Logic and Orchestration Matter Most

Many AI features in B2B SaaS become fragile because teams push model calls into places where deterministic code would work better. Workflow logic and orchestration exist to define that boundary. They determine when the LLM is called, what data it can access, what is allowed to happen with the output, and what the system does when the result cannot be used safely.

Many teams route too much through the model simply because the feature is labeled as AI, even when deterministic logic would be cheaper and more reliable. Tasks such as classification against a known taxonomy, routing based on structured metadata, or applying established business rules are often better handled with deterministic logic. In those cases, code is faster, cheaper, and easier to debug than an LLM call.

The key is to separate tasks that truly need language understanding from tasks that just need predictable code.

Orchestration becomes indispensable when teams need to wrap the LLM in controls they can enforce, test, and audit. That includes validating and sanitizing inputs before inference, verifying outputs against business rules before they reach the user, defining fallback behavior for low-confidence or unusable model responses, limiting how many model calls a single user action can trigger, and ensuring the model only receives data the requesting user is authorized to access. These are not controls that prompt instructions alone can enforce reliably.

Deterministic orchestration does have costs. It requires upfront specification, which can slow early development. Complex rule systems can become their own maintenance burden as the product changes. And workflow logic cannot handle ambiguous inputs or open-ended reasoning on its own, which is exactly where the LLM still belongs. The goal is not to remove model calls entirely. It is to make sure every model call is deliberate, scoped, and surrounded by logic the team controls.

In many production architectures, the orchestration layer ends up carrying more operational weight than either the retrieval system or the fine-tuned model. It is the layer where teams enforce the rules that make an AI feature safe to ship.

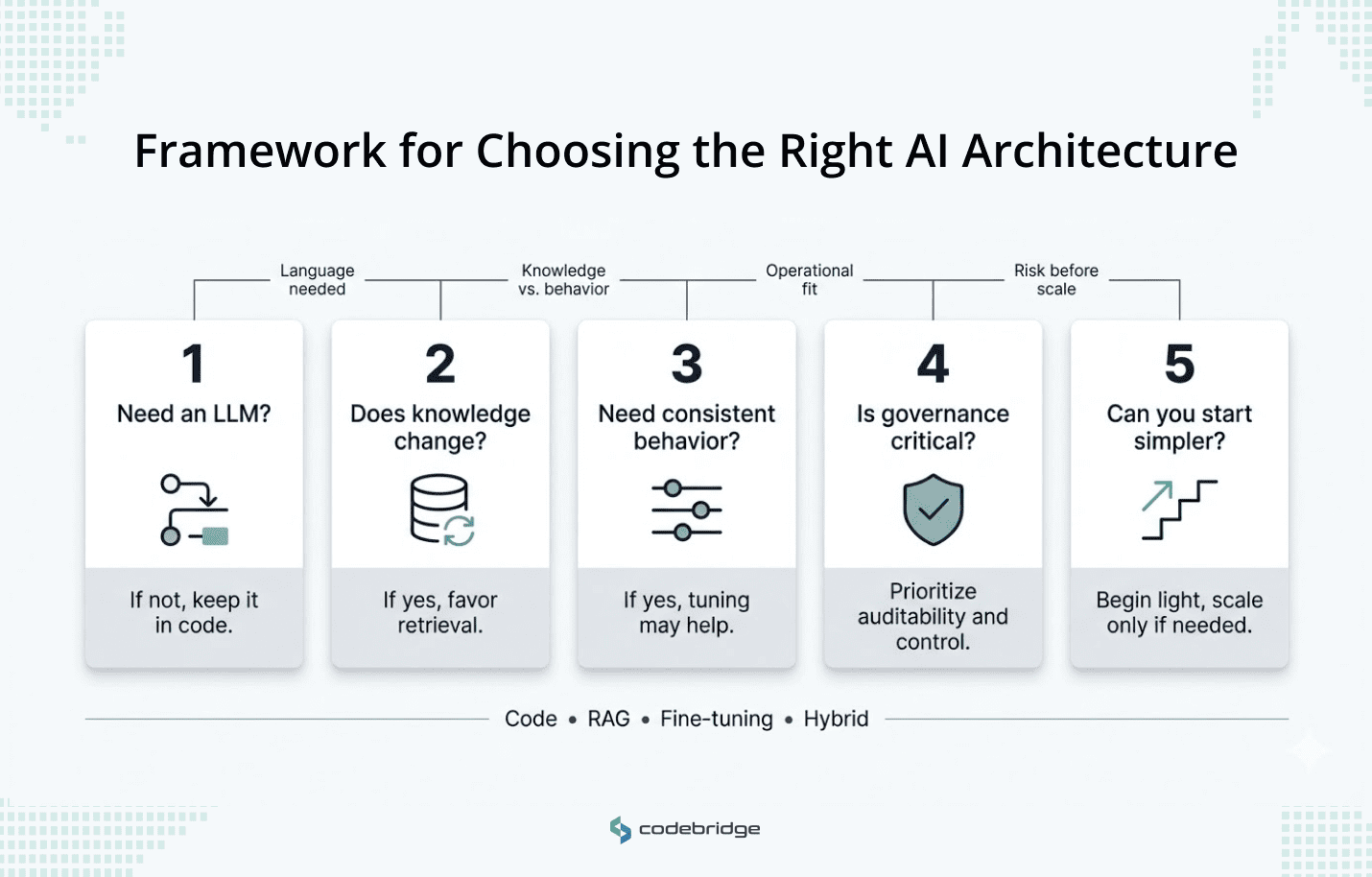

A Practical Decision Framework

These approaches solve different problems. In practice, most production features do not rely on only one. The architectural question is usually more specific: which parts of the feature need retrieval, which parts require behavioral consistency, and which parts should stay in deterministic code?

A practical framework starts with five questions. Not every question will apply equally to every feature, but together they create the discipline that prevents teams from locking into an architecture too early.

1. Does this task need an LLM at all?

This is often the first question teams should ask and the one they skip most often. Routing based on structured metadata, classification against a known taxonomy, and the application of codified business rules belong in the orchestration layer. Every unnecessary LLM call introduces cost, latency, and another failure mode to debug. The starting point is to define the line between what requires natural language understanding and what should remain in code.

2. How often does the knowledge behind this feature change?

If the knowledge changes daily or varies by tenant, retrieval is the stronger fit. If the knowledge is stable across longer periods and the requirement is tied to conventions, formatting rules, or reasoning patterns, fine-tuning becomes more viable. Many features sit between those extremes: stable behavioral requirements combined with dynamic tenant-specific knowledge. In those cases, a hybrid architecture can make sense, with fine-tuning or structured prompting handling behavior and RAG handling knowledge.

3. What does the team need to build and maintain?

Each option creates a different long-term operating burden. RAG requires an embedding pipeline, chunking strategy, retrieval evaluation, and index maintenance. Fine-tuning requires labeled datasets, benchmarks, retraining cycles, and ML expertise. Workflow orchestration requires upfront specification and ongoing rule maintenance. The right decision is not only about technical fit. It is also about what the team can operate reliably at its current stage.

4. What is the regulatory and governance exposure?

If the product operates under GDPR, HIPAA, or customer-specific data handling requirements, the architecture has to support deletion and auditability by design. RAG provides the cleanest posture because tenant data stays in the index and can be removed directly. Fine-tuning on tenant data creates governance debt that grows over time. Workflow logic contributes deterministic audit trails that compliance teams can use in front of regulators. These constraints need to be mapped before any integration work begins.

5. Can the team start simpler and graduate later?

Some features that appear to require RAG or fine-tuning can launch initially with strong prompting wrapped in deterministic orchestration. Starting with a simpler implementation lets the team learn from real usage before committing to heavier infrastructure. In many cases, the responsible progression is prompt engineering first, then RAG, then fine-tuning only where the data and requirements justify it.

How the Framework Works in Practice

Recent Codebridge’s case study shows how these architectural choices can operate together in one system. In an AI-assisted recruitment platform for a US-based enterprise processing 1,500 to 3,000 engineering applications per month, the solution combined all three approaches rather than forcing one method to do everything.

The orchestration layer carried the greatest operational weight. A central orchestrator built on LangGraph coordinated five specialized agents across sourcing, screening, assessment, interview analysis, and onboarding. Confidence-based routing allowed autonomous decisions only when confidence exceeded 90%. Borderline cases were escalated automatically to human recruiters, and final-stage candidates were never rejected by the system. That control logic lived in deterministic code, not in prompts.

RAG grounded the agents in the company’s actual hiring standards. Technical requirements by role, annotated examples of strong and weak candidate responses, and internal evaluation criteria were stored in a retrieval index. Each agent queried that index at inference time, which prevented the system from inventing evaluation criteria that did not exist in the company’s process. When hiring standards changed, the team updated the index rather than retraining the model.

Behavioral consistency was handled through structured output requirements. Assessment agents generated personalized test assignments in a predictable format, while interview agents produced structured debrief reports with consistent sections such as technical depth, speech pattern analysis, and red flags, all rendered in a recruiter dashboard. The formatting and reasoning patterns stayed stable across thousands of candidates. That is the kind of problem fine-tuning is meant to solve, though in this case the team achieved it through structured prompting and orchestration.

The system reduced full-cycle hiring time from 24 days to 10–12. Manual engineering review workload fell by 60%. Candidate response time moved from more than 24 hours to under 2 minutes. The system reached break-even within its first year.

Conclusion

The point is not to pick a better approach. It is that each one should handle the part of the system that it is actually suited for. Retrieval handles dynamic knowledge. Orchestration handles control, routing, and governance. The team handled behavioral consistency by enforcing structured output requirements.

The five-question framework is most useful before the team writes integration code. It gives product and engineering leaders a way to map each component of a feature to the approach that fits its actual requirements, rather than the one that simply feels most like building AI.

Teams usually run into trouble when they commit to an architecture before understanding what each layer of the feature demands. The teams that ship reliable AI features in production treat retrieval, behavioral control, and deterministic logic as separate design decisions, then use orchestration to hold them together.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript