For the past two years, companies have largely been implementing enterprise AI as a layer that generates content — text, code, summaries, and recommendations. In most products, these capabilities are at the interface level, responding to user prompts and returning outputs for human review. In this pattern, the core application architecture and workflow orchestration remain unchanged.

However, as AI adoption matures, technical leaders are facing a more consequential design choice. Some AI systems continue to function as assistive tools, while others are being designed to plan tasks, interact with internal services, and execute decisions across workflows. The key distinction lies in architectural responsibility rather than feature scope.

For SaaS businesses, scale-ups, and regulated environments, this distinction directly affects system design. It influences infrastructure complexity, observability requirements, compliance exposure, and operational risk.

This guide clarifies the difference between Generative AI and Agentic AI — and explains why choosing between them is one of the most consequential technical decisions businesses will make this year.

Defining the Paradigms: Generation vs. Execution

To make informed architectural decisions, one must first isolate the core functionality of each paradigm.

What is Generative AI?

Generative AI refers to the use of probabilistic models, most commonly large language models, to produce text, code, or other artifacts in response to prompts. The interaction pattern is reactive and request-driven: a user provides input, and the system returns an output. Responsibility for interpreting, validating, and acting on that output remains external to the model.

The market for these content engines remains substantial, with end-user spending on GenAI models projected to reach $14.2 billion in 2025, alongside $1.1 billion for specialized, domain-specific models.

Common enterprise applications include knowledge work acceleration, such as drafting marketing copy, summarizing legal documents, or providing code completion for developers. However, Generative AI does not own the workflow. It functions as an augmentation layer where final actions and responsibility remain with the human user.

What is Agentic AI?

By contrast, Agentic AI systems are designed to pursue goals rather than generate isolated outputs. These systems can plan tasks, maintain intermediate state, invoke tools or APIs, and execute multi-step workflows across software boundaries. They prioritize decision-making over content creation and do not require continuous oversight to operate in complex environments.

Statistical projections suggest that Agentic AI will drive approximately 30% of enterprise application software revenue by 2035, surpassing $450 billion, up from just 2% in 2025.

Codebridge’s RecruitAI platform illustrates Agentic AI in practice: it is a multi-agent system that autonomously screens candidates, coordinates assessments, analyzes interviews, and moves applicants through hiring workflows across integrated tools. Rather than generating isolated summaries, the agents plan and execute multi-step processes while keeping final decisions under human oversight.

Reframing the Discussion: A Strategic Architecture Decision

The distinction between Gen AI and Agentic AI is primarily architectural, not a comparison of underlying models. The same foundation model can power both approaches, but the surrounding system design determines whether AI produces isolated artifacts or assumes operational responsibility within a workflow. For technical leadership, the choice between augmentation and execution directly shapes long-term system design, cost structure, and governance requirements.

From Content Flow to Control Flow in Agentic Workflows

The most significant mental shift for companies is the transition from content flow to control flow.

Generative AI relies on content flow where a user provides a prompt, the model processes it through a stateless inference pipeline, and it produces a static artifact, such as a drafted email, a code snippet, or a text summary. In this case, the model remains dormant until triggered, and the human user retains the responsibility for verifying and implementing the output.

Agentic AI operates on control flow. Rather than returning a single output, it coordinates data and actions across multiple systems to complete a goal. In this model, the architecture shifts from a reactive assistant to a proactive digital actor that monitors its environment, identifies sub-tasks, and iterates through execution loops until a goal is met or a safe stopping point is reached.
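The contrast can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: `generate`, `run_agent`, `demo_planner`, and the `demo_tools` mapping are hypothetical stand-ins for a model call, an execution loop, a planner, and real integrations.

```python
from typing import Callable, Dict, List, Optional, Tuple


def generate(prompt: str) -> str:
    """Content flow: one stateless call, one static artifact."""
    return f"DRAFT: {prompt}"  # stand-in for a model call


# A step is (tool_name, argument); None means the planner considers the goal met.
Step = Optional[Tuple[str, str]]


def run_agent(
    goal: str,
    plan_next_step: Callable[[str, List[str]], Step],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> List[str]:
    """Control flow: loop until the planner signals completion or the step budget runs out."""
    history: List[str] = []
    for _ in range(max_steps):
        step = plan_next_step(goal, history)
        if step is None:  # goal met: stop iterating
            break
        tool_name, arg = step
        result = tools[tool_name](arg)
        history.append(f"{tool_name}({arg}) -> {result}")
    return history


def demo_planner(goal: str, history: List[str]) -> Step:
    """Hypothetical scripted planner: look the ticket up, then update it."""
    script = [("lookup", "ticket-42"), ("update", "ticket-42")]
    return script[len(history)] if len(history) < len(script) else None


demo_tools = {
    "lookup": lambda ref: f"found {ref}",
    "update": lambda ref: f"closed {ref}",
}

trace = run_agent("close ticket", demo_planner, demo_tools)
```

The `max_steps` budget plays the role of the safe stopping point: the loop ends either because the planner reports that the goal is met or because the budget is exhausted.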

Agentic AI Architecture vs. Generative Systems Design

Stateless inference pipelines typically characterize the architecture of Generative AI. Integration occurs primarily at the interface or data layer, often utilizing Retrieval-Augmented Generation (RAG) to inject relevant document context into a prompt to reduce hallucinations. While this approach is effective for accelerating knowledge work, the design is fundamentally reactive and does not independently own a workflow.

Conversely, Agentic AI architecture requires a layered execution design that mimics executive reasoning. A robust enterprise-grade system typically includes:

- Reasoning Engines: A central LLM (or ensemble of models) that acts as the cognitive engine, interpreting context and decomposing high-level objectives into actionable plans.

- Execution Modules: Layers that interact with external tools, APIs, and software through programmatic functions to perform tasks like updating a CRM or restarting a service.

- Orchestration Frameworks: Software that manages the complexity of multi-agent systems, handling task sequencing, resource allocation, and failure recovery.

Persistent Memory in AI Agents: The Bridge to Reliable Autonomy

Generative AI systems largely require relevant context to be supplied with each prompt. However, Agentic systems are stateful by design. To execute multi-step objectives reliably, they must retain task context, track prior decisions, and incorporate environmental feedback as execution unfolds.

This requires deliberate memory architecture. Short-term memory functions as the agent’s working context, storing intermediate tool outputs, current sub-goals, and execution state within an active session. Long-term memory persists durable information such as user data, organizational policies, historical outcomes, and system constraints that must remain accessible across sessions.

Without structured memory layers, autonomy degrades into repeated inference, but with them, the system can reason across time and operate within defined boundaries.
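One way to picture the two layers is sketched below. It assumes a JSON file as the durable store purely for illustration; the class names, the `approval_limit_usd` policy key, and the file name are hypothetical and not taken from any specific framework.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict


class LongTermMemory:
    """Durable facts that must survive across sessions (here: a JSON file)."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def load(self) -> Dict[str, Any]:
        if self.path.exists():
            return json.loads(self.path.read_text())
        return {}

    def remember(self, key: str, value: Any) -> None:
        data = self.load()
        data[key] = value
        self.path.write_text(json.dumps(data))


class Session:
    """Short-term working context: discarded when the session ends."""

    def __init__(self, long_term: LongTermMemory) -> None:
        self.long_term = long_term
        self.working: Dict[str, Any] = {}  # sub-goals, tool outputs, execution state

    def recall(self, key: str) -> Any:
        # Prefer the active session's context, then fall back to durable memory.
        if key in self.working:
            return self.working[key]
        return self.long_term.load().get(key)


store = LongTermMemory(Path(tempfile.mkdtemp()) / "agent_memory.json")

first = Session(store)
first.working["current_subgoal"] = "verify invoice total"
store.remember("approval_limit_usd", 500)  # illustrative organizational policy

second = Session(store)  # a new session starts with empty working memory
```

In this sketch the second session no longer sees the first session's sub-goal but can still read the persisted policy, which is the separation the two memory layers are meant to provide.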

LLM Infrastructure Costs and the Hidden Economics of AI Systems

Once AI moves from generation to execution, the cost structure of the product changes. In generative systems, infrastructure scales predictably: each request is an isolated inference call, and cost grows roughly linearly with usage.

In agentic systems, by contrast, intelligence becomes part of the control layer, and the main cost driver shifts from server uptime to cognitive effort. The cost of an outcome now depends on how many reasoning steps, tool invocations, and reflection cycles are required to reach it.

Stateless API Calls vs. Stateful Systems

Statefulness significantly increases architectural complexity. Because agentic systems retain task history and intermediate goals, scaling involves more than adding user capacity. Teams must coordinate large numbers of concurrent processes operating across APIs, databases, and enterprise systems.

That coordination layer introduces what many teams experience as an unreliability tax: the additional compute, latency, monitoring, and engineering safeguards required to contain probabilistic failure.

A chatbot generating an imperfect email is a usability issue; an autonomous agent looping while updating a ledger becomes an operational liability.

LLM Hallucinations, Token Growth, and the Unreliability Tax

One of the most underestimated economic risks in agentic design is quadratic token growth. Multi-turn execution loops often resend accumulated context at each step, meaning a ten-cycle reflection process can consume dozens of times more tokens than a single inference pass.
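The arithmetic behind this growth can be made concrete with a small sketch. It assumes, for illustration only, that every step adds the same number of tokens and that the full accumulated context is resent as input on each step; the 1,000-token figure is an arbitrary example, and output tokens are ignored.

```python
def total_input_tokens(steps: int, tokens_per_step: int) -> int:
    """Input tokens processed when each step resends all context accumulated so far."""
    context = 0
    total = 0
    for _ in range(steps):
        context += tokens_per_step  # new instructions, tool output, or reflection
        total += context            # the whole context is sent again as input
    return total


single_pass = total_input_tokens(steps=1, tokens_per_step=1_000)
ten_cycles = total_input_tokens(steps=10, tokens_per_step=1_000)
ratio = ten_cycles / single_pass
```

Under these assumptions the ten-cycle loop processes 55,000 input tokens against 1,000 for a single pass, a factor of 55, which matches the closed form k·n(n+1)/2 for n steps of k tokens each. Doubling the loop to 20 cycles nearly quadruples the total to 210,000 tokens.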

Research indicates that resolving one complex software issue with an unconstrained agent can cost between $5 and $8 in tokens alone. At scale, these costs compound rapidly.

Reliability introduces a second trade-off: latency. Single-shot LLM performance on complex tasks frequently plateaus around 60% to 70% accuracy. Achieving the 95% reliability required for business-critical workflows typically demands longer reasoning chains, orchestration layers, and iterative validation.

As a result, while a generative response may return in under a second, a multi-agent execution loop can take 10 to 30 seconds to converge on a stable result. Therefore, the architectural question for tech businesses is not whether autonomy is possible, but which workflows justify its economic and latency overhead.
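A rough way to reason about the reliability and latency trade-off is to ask how many validated attempts are needed to move from a single-shot success rate to a target rate. The sketch below is deliberately idealized: it assumes attempts are independent and that a validator always recognizes a correct result, neither of which is stated in this guide or guaranteed in practice.

```python
import math


def attempts_needed(single_shot: float, target: float) -> int:
    """Smallest n with 1 - (1 - single_shot)**n >= target.

    Assumes independent attempts and a validator that always recognizes
    a correct result (both are simplifying assumptions).
    """
    if not 0 < single_shot < 1 or not 0 < target < 1:
        raise ValueError("probabilities must be strictly between 0 and 1")
    return math.ceil(math.log(1 - target) / math.log(1 - single_shot))


def success_after(single_shot: float, attempts: int) -> float:
    """Probability that at least one of the attempts succeeds."""
    return 1 - (1 - single_shot) ** attempts


n = attempts_needed(single_shot=0.65, target=0.95)
```

With a 65% single-shot rate, three validated attempts are needed to clear 95% under these assumptions, so latency and token cost roughly triple before any orchestration overhead is counted. Real attempts are rarely independent and validators are imperfect, so the actual overhead is likely to be higher.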

This trade-off does not imply that agentic systems are inherently inefficient. It means their economics must be engineered deliberately. Autonomy increases computational surface area, but thoughtful architectural constraints can contain cost, latency, and failure risk. The main challenge is to design systems where intelligence scales proportionally with business value.

Strategic Optimization Patterns

Managing Total Cost of Ownership (TCO) requires more than choosing a strong model. It requires controls that determine when advanced reasoning is actually needed. Several design patterns have emerged to align autonomy with economic discipline.

- Routing Patterns: Instead of sending every query to the most capable (and expensive) model, use a lightweight classifier to handle simple tasks with cheap, fast models, escalating only complex reasoning to more powerful agents.

- Prompt Caching: If an agent consistently references the same large knowledge base or set of instructions, use prompt caching to avoid reprocessing that text. This can reduce input costs by approximately 90% and latency by up to 75%.

- Dynamic Turn Limits: Rather than using a hard cap on agent iterations, implement limits based on the probability of success. Recognizing when an agent is unlikely to solve a task and exiting the loop early can cut token costs by up to 24% without impacting overall solve rates.

- Memory Layers: Implement a dedicated memory layer to store successful plans and past interactions. By querying this layer first, an agent can remember a previously solved problem and retrieve the solution in milliseconds rather than re-planning from scratch at high cost.
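As an illustration of the routing pattern from the list above, the sketch below uses a keyword and length heuristic as the lightweight classifier. The tier names, model names, hint words, and thresholds are all hypothetical placeholders; in practice the classifier could itself be a small model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    max_turns: int


# Hypothetical tiers; the model names are placeholders, not real products.
CHEAP = Route(model="small-fast-model", max_turns=1)
CAPABLE = Route(model="large-reasoning-model", max_turns=8)

# Hypothetical signals that a request needs multi-step reasoning or tool use.
ESCALATION_HINTS = ("refund", "reconcile", "migrate", "investigate")


def route(query: str, long_query_words: int = 60) -> Route:
    """Escalate only when a hint matches or the query is long; otherwise use the cheap tier."""
    text = query.lower()
    if any(hint in text for hint in ESCALATION_HINTS):
        return CAPABLE
    if len(text.split()) > long_query_words:
        return CAPABLE
    return CHEAP
```

For example, `route("What are your opening hours?")` returns the cheap tier, while `route("Please reconcile the March ledger")` escalates to the capable tier with a larger turn budget.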

Governance, Risk, and Compliance in Regulated Domains

When an AI system gains the power to act, the security surface area of the enterprise expands. A generative system may produce incorrect text, but an agentic system can modify records, trigger transactions, or propagate errors across integrated platforms. Autonomy increases the scope and potential impact of failures or misuse.

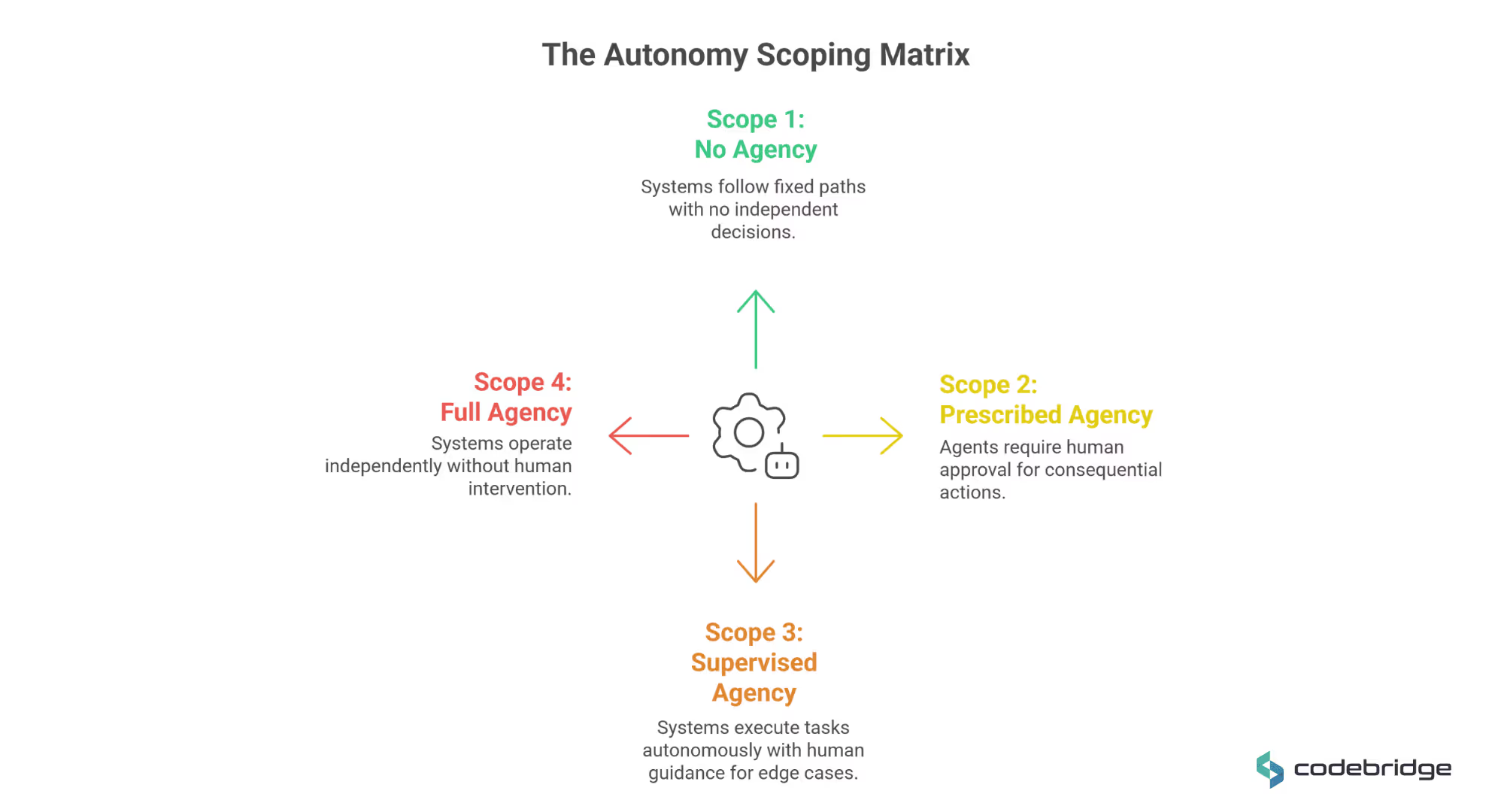

Levels of AI Autonomy: The Autonomy Scoping Matrix

To manage these risks, technical leaders must align their architecture with the organization's specific risk tolerance using a structured scoping matrix. This framework categorizes agentic architectures based on their level of connectivity and independent decision-making:

- Scope 1: No Agency. These systems are essentially read-only and follow human-initiated, fixed execution paths. Security focuses on process integrity and boundary enforcement.

- Scope 2: Prescribed Agency. Agents can modify system states but require strict Human-in-the-Loop (HITL) approval for every consequential action. This scope is common in regulated environments where manual verification is a legal necessity.

- Scope 3: Supervised Agency. Systems execute complex tasks autonomously after a human trigger. They utilize dynamic tool selection and only require human guidance for edge cases or trajectory optimization.

- Scope 4: Full Agency. These are self-initiating systems that orchestrate multi-system workflows without human intervention. They require advanced anomaly detection and automated containment mechanisms to prevent runaway processes.
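The four scopes can be encoded as an explicit policy check that runs before any tool call. The sketch below is one possible reading of the matrix, not a normative implementation; the `authorize` function and its parameters are hypothetical.

```python
from enum import IntEnum


class Scope(IntEnum):
    NO_AGENCY = 1
    PRESCRIBED_AGENCY = 2
    SUPERVISED_AGENCY = 3
    FULL_AGENCY = 4


def authorize(
    scope: Scope,
    changes_state: bool,
    human_approved: bool = False,
    human_triggered: bool = True,
) -> bool:
    """Return True if an action may proceed under the given scope."""
    if not changes_state:
        return True             # read-only actions are allowed at every scope
    if scope is Scope.NO_AGENCY:
        return False            # Scope 1 systems never modify state
    if scope is Scope.PRESCRIBED_AGENCY:
        return human_approved   # every consequential action needs sign-off
    if scope is Scope.SUPERVISED_AGENCY:
        return human_triggered  # autonomous only after a human starts the task
    return True                 # Scope 4: self-initiating, relies on containment
```

Keeping the check in one function means the organization's risk tolerance is expressed in a single auditable place rather than scattered across individual tool integrations.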

EU AI Act, HIPAA, and GDPR: AI Compliance Realities for CTOs

In regulated sectors such as healthcare and finance, the boundary between assistive AI and autonomous system-level execution becomes a compliance requirement. Principles like least privilege must govern tool access and data flows, particularly under regimes such as HIPAA, GDPR, and the EU AI Act. These frameworks demand traceability, data governance controls, and explainability of automated decisions. An autonomous system without auditable lineage is not merely risky; it is non-compliant.

In healthcare, processing PHI requires a Business Associate Agreement (BAA) with cloud providers — even if the data is encrypted or not directly viewable. A BAA ensures that the entire processing chain meets federal safety standards; using tools like web search within an agent can invalidate HIPAA eligibility if those specific endpoints are not covered by the agreement.

Under the EU AI Act, agentic systems must be ready for rigorous governance, risk reporting, and bias monitoring, especially if they touch high-risk scenarios. Autonomous actions without a clear, auditable reasoning chain are unacceptable in these jurisdictions.

Observability and Monitoring as a Mandatory Layer

Given these governance requirements, observability becomes foundational. Traditional Application Performance Monitoring (APM) is insufficient for non-deterministic systems that may appear technically healthy while producing flawed reasoning.

Engineering teams must adopt trace-first monitoring practices, such as OpenTelemetry instrumentation, to capture execution paths, decision points, and tool interactions. Without structured traces, it becomes extremely difficult to reconstruct why an agent acted as it did.
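To show the shape of the data that trace-first monitoring captures, here is a dependency-free sketch. It is a stand-in that mimics span-based tracing with the standard library only and does not use the OpenTelemetry API; `TraceRecorder`, its fields, and the span names are hypothetical.

```python
import time
import uuid
from contextlib import contextmanager
from typing import Any, Dict, Iterator, List


class TraceRecorder:
    """Collects nested spans so an agent run can be reconstructed afterwards."""

    def __init__(self) -> None:
        self.spans: List[Dict[str, Any]] = []
        self._stack: List[str] = []  # ids of the currently open spans

    @contextmanager
    def span(self, name: str, **attributes: Any) -> Iterator[Dict[str, Any]]:
        record: Dict[str, Any] = {
            "id": uuid.uuid4().hex,
            "parent": self._stack[-1] if self._stack else None,
            "name": name,
            "attributes": dict(attributes),
            "status": "ok",
        }
        start = time.perf_counter()
        self._stack.append(record["id"])
        try:
            yield record
        except Exception as exc:
            record["status"] = f"error: {exc}"
            raise
        finally:
            self._stack.pop()
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)  # spans are stored in completion order


tracer = TraceRecorder()
with tracer.span("agent.run", goal="close ticket"):
    with tracer.span("decision", chosen_tool="update_ticket", reason="ticket resolved"):
        pass  # the reasoning step would run here
    with tracer.span("tool.call", tool="update_ticket") as call:
        call["attributes"]["result"] = "closed"
```

Each record carries a parent id, so the decision and the tool call can be tied back to the run that produced them. That linkage is what makes it possible to reconstruct why an agent acted as it did.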

Decision Framework: When to Use Which?

Choosing between a Generative AI interface and a full Agentic AI system becomes a strategic decision because the choice determines how much responsibility the system assumes — and that affects architecture, cost, risk, and governance. Leaders should align their approach with the level of workflow control, reliability, and risk they are willing to accept.

When to Deploy Generative AI

Generative AI is optimal when the objective is information synthesis and human decision support, rather than updating systems, invoking APIs, or performing operational tasks.

- Primary Goal: Content creation, rapid prototyping, or human-in-the-loop decision support. Gen AI excels at knowledge work acceleration, such as drafting email newsletters, summarizing academic papers, or assisting developers with code completion.

- Risk Profile: When risk tolerance is low and human judgment must remain the final authority. If a failure results only in flawed text rather than an unauthorized state change, Gen AI patterns are typically sufficient.

- Time-to-Value: When the focus is on achieving quick productivity gains through individual augmentation. Deploying a copilot is faster and less technically demanding than building an autonomous digital actor.

When to Architect Agentic AI

Agentic AI becomes necessary when a system must independently complete multi-step tasks, such as updating records, triggering transactions, or coordinating actions across multiple tools, without waiting for human approval at every step.

- Primary Goal: Complex, multi-step execution across integrated enterprise tools like ERP, CRM, or ticketing systems. Agentic AI is designed to finish the job by coordinating between multiple platforms to achieve a measurable business result.

- Operational Scale: When speed, 24/7 availability, and autonomous scale are prioritized over creative nuance. Agentic systems are ideal for repetitive, rule-bound processes where human bottlenecks currently constrain growth.

- Adaptability: When the system must respond dynamically to changing environmental conditions or unstructured data that a fixed RPA script cannot handle.

From Copilots to Autonomous AI Agents: A Progressive Autonomy Model

For long-term delivery, we recommend a phased adoption model. The framework allows an organization to build architectural maturity and earn trust in autonomous systems through incremental complexity.

- Phase 1: Augmentation (Generative AI Copilots): Deploy reactive assistants to help human workers analyze data and draft content. In this phase, humans maintain 100% of the decision-making authority and execution responsibility.

- Phase 2: Assisted Automation (Human-in-the-Loop Agents): Introduce agents that can plan and prepare actions, but require explicit human approval (approval gates) before executing any state change. This corresponds to prescribed agency (Scope 2) in the scoping matrix and limits the blast radius of potential errors while automating the data gathering and reasoning steps.

- Phase 3: Bounded Autonomy (Fully Agentic Workflows): Scale to systems that initiate and execute multi-system workflows independently within strictly defined policy, cost, and risk constraints. These systems operate as a digital workforce and require the highest levels of observability and governance to ensure alignment with organizational goals.

Conclusion

The distinction between Gen AI and Agentic AI marks the difference between an application that assists users and an infrastructure that operates the business. While Generative AI unlocked the potential for conversational intelligence, autonomy requires a complete architectural transformation — one centered on state persistence, orchestration engines, and execution authority.

For scale-ups, treating Agentic AI as a simple API integration is a recipe for cascading errors, unmanageable token costs, and compliance failures. Success in the agentic era requires deliberate architectural planning around memory layers, tool orchestration, compliance, and rigorous observability.

Many organizations are beginning to transition toward AI-native infrastructure, and technical leaders who invest in autonomous, governed, and scalable agentic architectures today will build the self-optimizing organizations of tomorrow.
