When the Tunnel Drops, You Blame the Model

A distributed team building AI edge products on NVIDIA Jetson Orin Nano hit a wall that anyone shipping creative-tech work in 2026 will recognize. Their 7B model was generating tokens at roughly 15 tok/s over a remote serial connection, but the connection dropped silently every few minutes. Debugging felt impossible. As one of the engineers put it: "high jitter makes it feel like the model is broken when it is actually the tunnel." They were chasing a ghost. The model was fine. The pipeline between human and machine was the problem.

If you work anywhere near visual systems, AI-assisted production, or design-engineering handoffs, you have lived a version of this story. The tool feels broken. The output looks wrong. You spend three days suspecting the wrong layer.

KEY TAKEAWAYS

The bottleneck is rarely where you think it is. Jitter masquerades as model failure; missing indexes masquerade as framework failure; vague briefs masquerade as agent failure.

Leadership inertia, not employee resistance, is the #1 barrier to scaling AI in 2026. McKinsey's research flips the conventional narrative.

Problem decomposition is the new core skill for anyone collaborating with AI agents, including designers shipping creative pipelines.

Boring monolith stacks can handle 700-1000 RPS on a single VPS. Most teams over-engineer before they ever hit "one VPS scale."

$4.4T in productivity growth is on the table, but only for teams that fix the unglamorous plumbing first.

The Hidden Problem: We're Solving for the Wrong Layer

McKinsey's 2025 Superagency in the Workplace research pegs the long-term productivity opportunity from corporate AI use cases at $4.4 trillion, with up to 45% of employee activities automatable using current technologies. Those numbers get repeated in every keynote of 2026. What gets repeated less often is the part of McKinsey's research that contradicts the standard narrative.

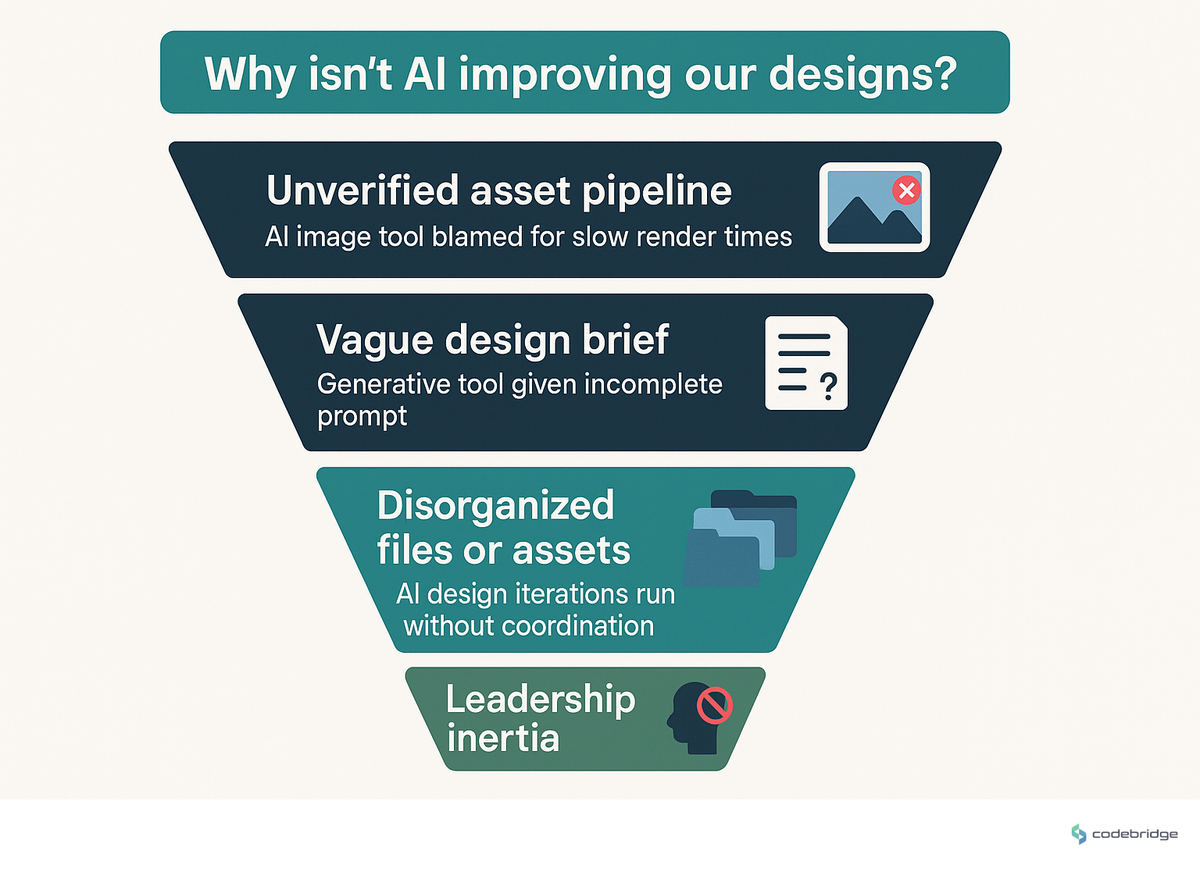

The common belief is that employees resist AI. The reality, per McKinsey, is the opposite: employees are ready; leaders are not steering fast enough. The gap between "we have AI tools" and "our team ships faster because of them" is rarely a tooling gap. It is an orchestration gap. The diagram above maps where teams actually lose velocity.

Real Stories from the Trenches

Consider the Anthropic research project that built a C compiler entirely with AI agents, with no human-written code in the final output. The lead, an adversarial-ML scientist, ran sixteen agents in parallel. The first attempts failed completely: the vague instruction "build a compiler" was unworkable. Only when the work was decomposed into precisely defined subtasks with clear inputs and outputs did the system click into place.

"16 Agents, each handling its own piece, completed the entire compiler... without a single line written by a human." But it only worked once the goal was decomposed. Vague briefs killed every earlier attempt.

imaginex, dev.to, Skills Required for Building AI Agents in 2026

The lesson generalizes to any creative-technology team in 2026: problem decomposition is the new core engineering skill. If subtasks share state, you need a coordinator the moment you exceed three parallel agents; otherwise their outputs contradict each other. Replace "agents" with "junior designers," "render farms," or "AI image variants" and the same rule holds.
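A minimal Python sketch of that rule, assuming each subtask can be described by the shared-state keys it reads and writes. Subtask, Coordinator, and the toy lexer/parser steps are hypothetical names for illustration, not any agent framework's API, and the sketch runs subtasks one at a time in dependency order rather than truly in parallel. What it shows is the contract: every subtask declares explicit inputs and outputs, only one subtask may own each output, and all writes to shared state go through a single coordinator that rejects undeclared keys.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical names for illustration; not an agent framework's API.
@dataclass(frozen=True)
class Subtask:
    name: str
    inputs: tuple[str, ...]       # shared-state keys this subtask reads
    outputs: tuple[str, ...]      # shared-state keys this subtask may write
    run: Callable[[dict], dict]   # stands in for an agent or worker call


@dataclass
class Coordinator:
    """Single owner of shared state; subtasks never write to it directly."""
    state: dict = field(default_factory=dict)
    owners: dict = field(default_factory=dict)  # output key -> owning subtask name

    def register(self, task: Subtask) -> None:
        # Refuse overlapping outputs: two workers writing one key is how outputs contradict.
        for key in task.outputs:
            if key in self.owners:
                raise ValueError(f"{key!r} is already owned by {self.owners[key]!r}")
            self.owners[key] = task.name

    def execute(self, task: Subtask) -> None:
        # Hand the subtask only the inputs it declared, then check what it wrote.
        result = task.run({k: self.state[k] for k in task.inputs})
        undeclared = set(result) - set(task.outputs)
        if undeclared:
            raise RuntimeError(f"{task.name} wrote undeclared keys {sorted(undeclared)}")
        self.state.update(result)

    def run_all(self, tasks: list[Subtask]) -> dict:
        for task in tasks:
            self.register(task)
        pending = list(tasks)
        while pending:
            # Run every subtask whose declared inputs already exist (sequentially here).
            ready = [t for t in pending if all(k in self.state for k in t.inputs)]
            if not ready:
                raise RuntimeError(f"unsatisfiable inputs for {[t.name for t in pending]}")
            for task in ready:
                self.execute(task)
                pending.remove(task)
        return self.state


# Usage: a toy two-step pipeline, listed out of order on purpose.
tasks = [
    Subtask("parser", ("tokens",), ("ast",), lambda s: {"ast": ["expr", *s["tokens"]]}),
    Subtask("lexer", ("source",), ("tokens",), lambda s: {"tokens": s["source"].split()}),
]
result = Coordinator(state={"source": "1 + 2"}).run_all(tasks)
print(result["ast"])  # ['expr', '1', '+', '2']
```

In a real pipeline, run would call an agent or worker; the ownership check is what stops two parallel workers from silently overwriting each other's output.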

A second story comes from a solo developer running a production app on a deliberately boring stack (Postgres + REST + React on a single VPS), and it surfaces a different blind spot. Their post includes a number worth memorizing:

"700-1000 RPS on a single VPS is the reality check most developers need to hear." Boring tech is invisible because it doesn't break. Stack Overflow and Shopify run on monoliths.

the_nortern_dev, dev.to, My 2026 Tech Stack Is Boring As Hell
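To put that bar in perspective, the arithmetic is simple: a day has 86,400 seconds, so sustained RPS times 86,400 gives daily requests. The Python below works it through; the 5-million-requests-per-day app and the 3x peak factor are assumptions chosen for illustration, not figures from the post.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def daily_requests_at(rps: float) -> float:
    """Requests per day if `rps` were sustained around the clock."""
    return rps * SECONDS_PER_DAY


def average_rps(requests_per_day: float) -> float:
    """Average requests per second over a full day."""
    return requests_per_day / SECONDS_PER_DAY


def peak_rps(requests_per_day: float, peak_factor: float = 3.0) -> float:
    """Rough peak estimate; peak_factor is an assumed burst multiplier, not a measurement."""
    return average_rps(requests_per_day) * peak_factor


print(daily_requests_at(700))             # 60480000
print(daily_requests_at(1000))            # 86400000
print(round(average_rps(5_000_000), 1))   # 57.9
print(round(peak_rps(5_000_000), 1))      # 173.6
```

Unless peak traffic runs far above the average, an app needs tens of millions of requests per day before it approaches the single-VPS range the post describes.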

And then there is the ORM trap. A backend team adopted Prisma and tested it in development against 100 rows. Everything was instant. They shipped to production with 10 million rows and watched the app crawl: foreign keys and join columns had no indexes, and the ORM hid the inefficient queries under three layers of fluent syntax. The fix took an afternoon. Finding the cause took two weeks.
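The indexing gap can be caught long before production reveals it. The sketch below uses Python's built-in sqlite3 as a stand-in for the team's database (the story does not name the production engine, and Prisma is not involved here); the customers/orders schema is hypothetical. Like PostgreSQL, SQLite does not automatically index the referencing column of a foreign key, so a lookup by customer_id scans the whole table until an index exists. EXPLAIN QUERY PLAN shows the difference without needing millions of rows.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return the detail column of EXPLAIN QUERY PLAN as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(str(row[3]) for row in rows)  # column 3 holds the readable detail


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE orders (
        id INTEGER PRIMARY KEY,
        customer_id INTEGER REFERENCES customers(id),  -- foreign key, not indexed yet
        total REAL
    );
""")

lookup = "SELECT * FROM orders WHERE customer_id = ?"
before = query_plan(conn, lookup, (42,))
conn.execute("CREATE INDEX idx_orders_customer_id ON orders(customer_id)")
after = query_plan(conn, lookup, (42,))

print(before)  # a full-table SCAN (exact wording varies by SQLite version)
print(after)   # a SEARCH ... USING INDEX idx_orders_customer_id (customer_id=?)
```

The same check works against PostgreSQL with EXPLAIN (or EXPLAIN ANALYZE) on the SQL the ORM actually emits; in a Prisma schema, the index itself is declared with an @@index attribute on the model.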

The Pattern: What Successful Teams Do Differently

Across these three stories (the dropped serial tunnel, the sixteen-agent compiler, the unindexed ORM), one pattern repeats: successful teams instrument the boring layer first. They check the tunnel before they retrain the model. They define the inputs and outputs before they spawn the agents. They add the indexes before they swap the database engine.
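One cheap way to check the tunnel before blaming the model is to timestamp each token as it arrives on the client side and look at the gaps between them. The Python sketch below assumes you already have those arrival times in seconds; gap_report and its 10x-median stall threshold are hypothetical choices, not part of the Jetson team's setup. If the steady-state rate matches what the device achieves locally (roughly 15 tok/s in that story) and the slowdowns appear as a few long gaps, the bottleneck is the link, not the model.

```python
from statistics import median


def gap_report(arrivals: list[float], stall_factor: float = 10.0) -> dict:
    """Summarize inter-token gaps from client-side arrival timestamps (seconds).

    stall_factor is an assumed threshold: any gap longer than
    stall_factor times the median gap is counted as a stall.
    """
    gaps = [later - earlier for earlier, later in zip(arrivals, arrivals[1:])]
    if not gaps:
        raise ValueError("need at least two timestamps")
    typical = median(gaps)
    limit = stall_factor * typical
    stalls = [g for g in gaps if g > limit]
    steady = [g for g in gaps if g <= limit]
    return {
        "median_tok_per_s": 1.0 / typical,              # rate when the link behaves
        "steady_tok_per_s": len(steady) / sum(steady),  # rate excluding stalls
        "stalls": len(stalls),
        "longest_stall_s": max(stalls, default=0.0),
    }


# Usage with synthetic data: 15 tok/s, one 5-second drop, then 15 tok/s again.
first = [i / 15 for i in range(30)]
second = [first[-1] + 5.0 + i / 15 for i in range(30)]
report = gap_report(first + second)
print(report["stalls"], round(report["median_tok_per_s"], 1))  # 1 15.0
```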

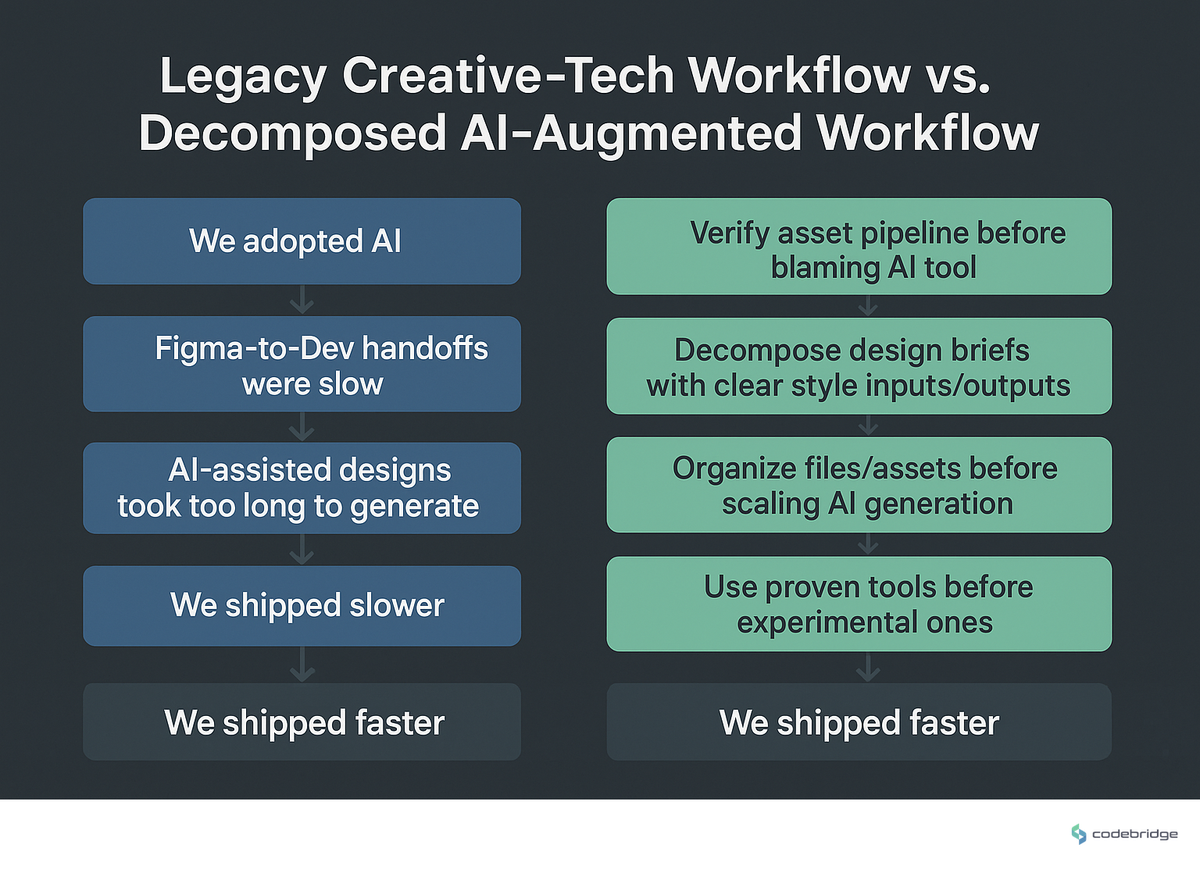

This aligns with what McKinsey calls the operating model: insurers using advanced analytics for autonomous data processing in claims triage and fraud detection didn't get faster by buying smarter algorithms. They got faster by collapsing the handoffs between systems. The visualization above contrasts the two operating modes.

When McKinsey says employees are ready and leaders are the bottleneck, what they mean operationally is this: the work of decomposing tasks into agent-shaped pieces is leadership work, and most leaders are still treating it as a tooling decision.

Actionable Framework: Five Moves That Compound

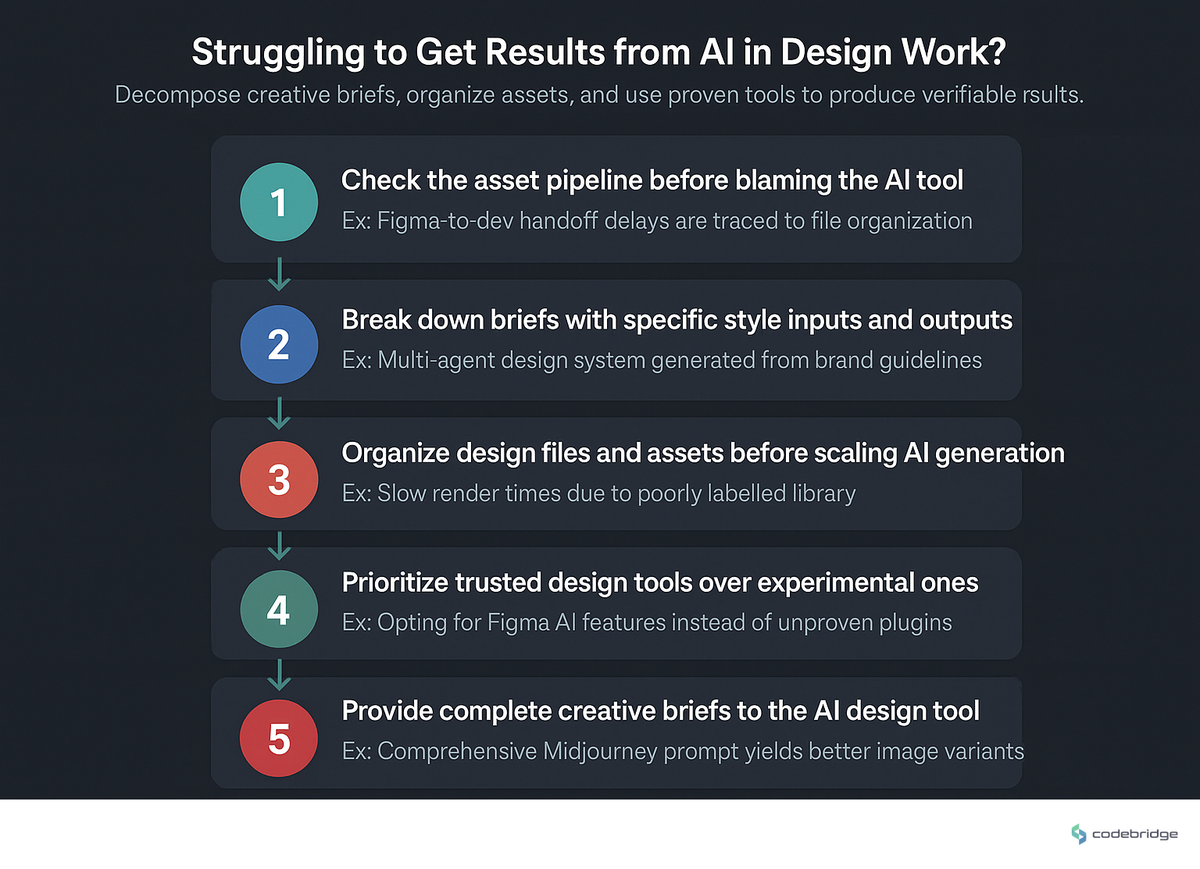

The process flow above ties the five moves together. Here is what to do this quarter, regardless of whether your "agents" are LLMs, render workers, or contractors:

- 1. Verify the tunnel before you blame the model. Wrap fragile connections (serial bridges, render queues, asset pipelines) in supervised services with auto-restart. The Jetson team's fix was a systemd unit with Restart=always; your equivalent might be a watchdog on your Figma-to-CMS sync.

- 2. Decompose every brief into named subtasks with explicit inputs and outputs. If two subtasks share state, route them through one coordinator. Never run more than three parallel AI workers on a shared artifact without one.

- 3. Index your foreign keys, join columns, and frequently filtered fields before you scale. ORMs help prevent SQL injection but can hide query inefficiency. The dev-to-prod gap is almost always an indexing gap.

- 4. Default to boring tech until you have actual evidence you've outgrown it. 700-1000 RPS on one VPS is the bar most teams never reach. If your traffic is below that, distributed architecture is a tax, not a feature.

- 5. Always run agentic tools in Planning mode and give them as much context as possible up front. One developer on Conductor noted that "there's a lot of dead time where nothing is happening, I'm just sitting there watching it code." That dead time is usually the cost of insufficient context up front. Pay it once, save it ten times.
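Move 1 names systemd's Restart=always as the Jetson team's fix, and for anything running as a service that OS-level supervisor (with RestartSec to space out retries) is the natural choice. When a fragile link lives inside a script instead, the same idea can be sketched in-process. In the Python below, supervise and flaky_bridge are hypothetical: supervise calls a function, restarts it with capped exponential backoff whenever it raises, and gives up after max_restarts. Because it stops once the function returns normally, it behaves more like systemd's Restart=on-failure than Restart=always.

```python
import time
from typing import Callable


def supervise(run: Callable[[], None], max_restarts: int = 5,
              base_delay: float = 1.0, max_delay: float = 30.0,
              sleep: Callable[[float], None] = time.sleep) -> int:
    """Call run() until it returns normally, restarting it whenever it raises.

    Delays double after each failure (base_delay, 2x, 4x, ...) up to max_delay.
    Returns how many restarts were needed; re-raises the last error once
    max_restarts is exhausted. `sleep` is injectable so the loop can be tested.
    """
    restarts = 0
    while True:
        try:
            run()
            return restarts
        except Exception:  # not BaseException, so Ctrl-C still stops the supervisor
            if restarts >= max_restarts:
                raise
            sleep(min(base_delay * 2 ** restarts, max_delay))
            restarts += 1


# Usage with a hypothetical bridge that drops twice and then stays up.
state = {"attempts": 0}


def flaky_bridge() -> None:
    state["attempts"] += 1
    if state["attempts"] < 3:
        raise ConnectionError("serial link dropped")


delays: list[float] = []
restarts = supervise(flaky_bridge, sleep=delays.append)
print(restarts, delays)  # 2 [1.0, 2.0]
```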

Closing: The Tunnel Was Always the Issue

The Jetson team didn't need a better model. They needed a more reliable connection. The compiler-building agents didn't need more intelligence. They needed clearer inputs. The ORM team didn't need a faster database. They needed the indexes that were always going to be necessary. The $4.4 trillion productivity opportunity is real. It just isn't where the keynote slides point. It's hiding in the boring plumbing, and the teams that fix the plumbing first are the ones who get to claim it.

Not sure which layer is actually slowing your team down?

Run the diagnostic checklist below against your current pipeline this week.

Diagnostic Checklist: Are You Debugging the Wrong Layer?

Your team has blamed an AI model or tool for output quality at least twice in the last month without verifying upstream connection stability or input formatting.

Your most common AI prompt or brief is more than one sentence long but contains no explicit inputs, outputs, or success criteria.

You run more than three parallel AI agents or workers on a shared artifact with no designated coordinator.

Your production database has tables over 1M rows where foreign keys, frequently filtered columns, or join keys are not indexed.

You moved off a monolith in the last 12 months while serving fewer than 700 RPS on a single VPS.

Your slash commands or automated workflows silently drop attached context (issues, briefs, asset references) without anyone noticing.

Leadership in your org has not personally walked through a full AI-assisted workflow in the last 90 days.
