Many teams building agentic AI are pushed toward multi-agent designs because specialization and coordination sound like a clean way to improve performance. Academic research frequently showcases complex systems where 2 to 5 agents negotiate and vote to solve problems

Some of those designs make it into production, but many become hard to operate once real cost, latency, and debugging pressure show up.

The failure pattern is consistent. A team architects a multi-agent system that performs well in staging, then discovers that coordination overhead, token costs, and opaque failure chains make it unmanageable at scale. By the time the on-call engineer gets paged at 2 AM, nobody can trace which agent introduced the bad output. The system gets rebuilt as something simpler, and the original architecture becomes an expensive lesson in earned complexity.

This article walks through that tradeoff with a specific lens: a compliance document review workflow at a Series B fintech. We compare single-agent and multi-agent approaches across cost, latency, debuggability, and team capacity. The goal is to give CTOs and engineering leaders a practical way to evaluate when multi-agent complexity is justified and when it destroys more value than it creates.

Single-Agent and Multi-Agent Architecture in a Compliance Workflow

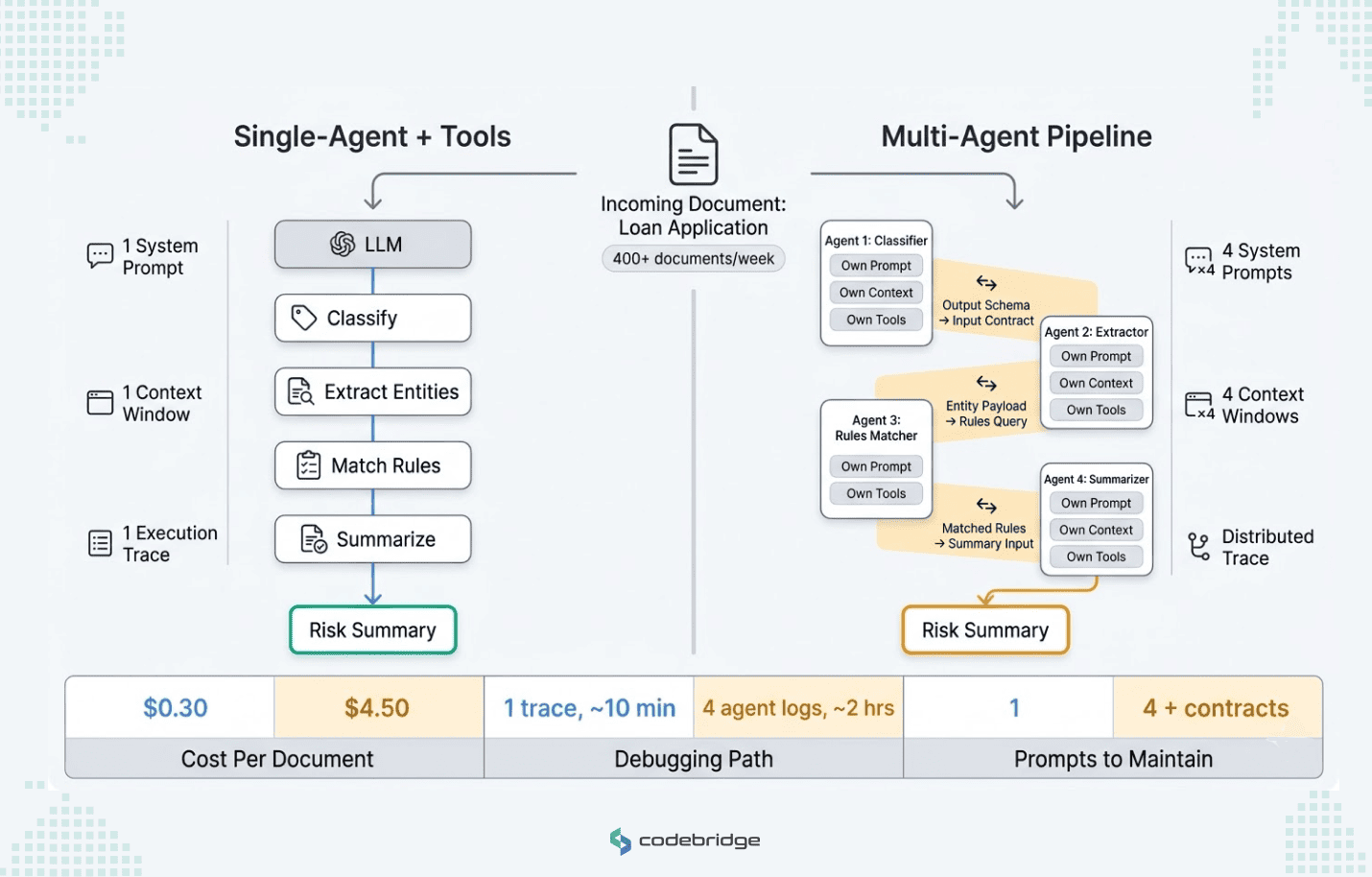

To evaluate these architectural patterns, consider a Series B fintech company with a 15-person engineering team. No dedicated ML engineers. Their compliance team reviews 400+ documents per week, including loan applications, KYC filings, and regulatory disclosures. Each document goes through four steps: classification by type, entity extraction, matching against the relevant regulatory ruleset, and a risk summary for the compliance officer. The CEO wants this automated. The CTO has to choose how.

Single-Agent + Tools (The Monolith)

In this monolithic approach, one LLM handles the entire workflow. It receives a document, classifies it, calls an extraction API, queries a rules database, and writes the summary. One system prompt. One context window. One execution trace.

For the engineering team, this design is easy to reason about. When the output is wrong, an engineer reads the prompt, checks the tool calls, and finds the gap. Deployments touch one service. Monitoring covers one pipeline. The cost per document is predictable because every request follows the same token path.

However, this approach strains as the domain expands. As more document types and regulatory frameworks are added, the system prompt becomes bloated. This often triggers the "lost in the middle" effect, where the model begins to ignore instructions buried in the center of a large context window. Teams typically mitigate this by using Retrieval-Augmented Generation (RAG) to inject only relevant rules or by implementing prompt decomposition to break one large prompt into focused, routed sub-tasks.

Multi-Agent Systems (The Microservices)

If the single-agent approach resembles a monolith, this approach mirrors a microservices architecture by splitting the work across specialized agents: a classifier agent, an extraction agent, a rules-matching agent, and a summarizer agent. Each agent operates with its own specific prompt, context window, and tool access.

CTOs who have lived through a monolith-to-microservices migration will recognize the shape of what follows. You gain modularity. You can update the extraction agent without touching the classifier. You can swap the rules-matching model independently. Each agent's prompt stays small and focused.

You also inherit the operational costs that come with distributed systems. Four agents means you must now maintain multiple prompts and manage a complex coordination protocol and a message-passing layer.

The sharpest risk is contract dependencies. The classifier agent's output schema is the extraction agent's input contract. Change that schema, and the downstream agents break. In a traditional microservices stack, teams manage this with API versioning and integration tests. In an agent pipeline, the output of the classifier agent serves as the "contract" for the downstream extraction and rules agents. A single schema change can cascade across the entire system, requiring a coordinated update of all agent prompts and logic.

Those dependencies matter because they do not just add complexity; they also raise the operating cost of the system.

Multi-Agent AI Cost: The Coordination Tax on Tokens and Engineering Time

Go back to the fintech scenario. The single-agent system processes a compliance document by making four tool calls in sequence: classify, extract, match rules, and summarize. Each document costs roughly $0.30 in API tokens. At 400 documents per week, that's about $6,200 per year. Predictable and budgetable.

Let’s run the same workflow through a four-agent pipeline. Each agent carries its own system prompt, which means each agent re-ingests context independently. Every handoff between agents generates coordination tokens: status checks, output validation, and context passing. Those tokens do not improve the user-facing output. They are coordination overhead that shows up mainly in system cost.

In practice, multi-agent systems consume up to 15x the tokens of their single-agent equivalents. For the fintech example, that turns $6,200 per year into $93,000. A Series B company burning through runway will feel that number in quarterly board conversations.

The accuracy-to-cost ratio is often difficult to justify for standard production workloads. Controlled experiments have shown that while moving from a single-agent to a multi-agent setup can improve truthfulness by approximately 28% in Q&A tasks, it frequently results in a 3.7x increase in API costs.

If your single-agent system already classifies documents at 94% accuracy and the multi-agent version reaches 97%, you're paying 3.7x more to close a 3-point gap. If the gain is only three percentage points, many teams may find that targeted human review is cheaper than absorbing the coordination overhead of a multi-agent design.

Furthermore, the cost that often blindsides teams is engineering time. In our experience, building a multi-agent system typically requires 3 to 5 times the engineering hours of a single-agent equivalent due to the complexities of state management and failure handling.

Agent's Latency in Production: From 18 Seconds to 3

In production, not every request from multi-agent systems completes in a reasonable time.

In the single-agent compliance pipeline, one LLM makes four tool calls in sequence. Each call adds latency, but the total stays predictable because the model holds context across the entire workflow.

In a multi-agent system, each agent makes its own LLM call, and each call starts cold. The classifier agent generates a structured output. The extraction agent ingests that output, reloads its own system prompt and context, and generates its own output. The rules agent and the summarizer agent do the same. Four separate inference calls, each carrying its own prompt overhead, each waiting for the previous agent to finish. In this scenario, the latency compounds.

In one production case, the company implemented a six-agent mesh architecture, where agents debated and collaborated. The system worked and resulted in a P95 latency of 18 seconds and a cost of $8-12 per query. For a workflow that their users expected to complete much faster, those numbers killed adoption before the product team could measure accuracy.

The team rebuilt the system using only two agents and a strict state machine. Instead of letting agents coordinate through unstructured message-passing, the state machine enforced a fixed sequence: Agent A completes step 1 and passes a validated schema to Agent B, which completes steps 2 through 4 without negotiation and competing outputs.

Latency dropped to 3 seconds, and cost fell to $0.40 per query. The difference in accuracy was negligible. The accuracy difference between the six-agent version and the two-agent version was less than 1%.

That is the key tradeoff. The team spent months building a system that was 30x more expensive and 6x slower to produce an accuracy improvement their users could not detect. The rebuild, which took six weeks and two engineers, delivered a system that met SLOs on day one.

Multi-agent systems can be justified in domains where even a 1% accuracy gap carries real regulatory or clinical risk. The problem is that many teams commit to that complexity before measuring whether the gap actually exists.

Debugging Single-Agent vs Multi-Agent Failures

The most significant risk for an on-call engineer is a distributed failure. In a single-agent architecture, failures are linear: an engineer can read the prompt, examine the output, and identify the gap in minutes.

In a multi-agent mesh, failures are decentralized. When a system produces an incorrect answer, finding the source of the error requires tracing a conversation across multiple LLM calls to determine which agent introduced the contradiction. Without a rigid state machine, this process is often intractable under production pressure.

In this kind of architecture, tracing a failure can easily take 45 minutes to two hours.

Two properties make this failure pattern dangerous:

- The output looked right. Each agent validated only against its own context. The final summary looked credible even though it was incorrect. A compliance officer reviewing at volume would have no reason to question it.

- The failure was silent. No agent errored. No schema broke. A confident misclassification cascaded through three downstream agents unchallenged.

The architectural fix is schema validation at every agent boundary. When a handoff fails validation, the pipeline halts and logs exactly where the contract broke. But building and maintaining that validation layer competes with product development for your engineers' time.

Which raises the question this article has been building toward: can your team absorb multi-agent complexity without stalling the roadmap?

Framework: When Multi-Agent Architecture Is Worth the Complexity

This section turns the earlier analysis into a framework you can apply to your own system.

Start with the baseline, not the architecture.

Before you evaluate multi-agent designs, measure what a single-agent system delivers. Most teams skip this step. They scope the multi-agent version first because it maps neatly to the problem's logical decomposition: one agent per subtask. That mapping feels clean, but it front-loads coordination costs before they know whether the simpler version falls short.

The evaluation sequence is:

Start by optimizing the single-agent workflow and measuring accuracy, latency, and unit cost. Then improve retrieval. Then tighten the tool layer. Re-measure after each change.

If the quality gap closes, you've saved months of multi-agent engineering. If it doesn't, you now have a quantified gap: "Our single-agent system classifies documents at 89% accuracy. Our compliance team requires 97%. Prompt engineering and retrieval improvements got us to 93%. The remaining 4% costs us X dollars per month in manual review."

A quantified performance gap is the strongest justification for adding agents. Without it, you're building a multi-agent infrastructure to solve a problem you haven't proven exists.

Evaluate your team's operational ceiling.

Multi-agent systems require specific engineering capabilities that single-agent systems don't. Before you commit to the architecture, check whether your team can staff these functions:

- Contract ownership. Someone maintains data schemas between agents and tests every downstream agent when formats change.

- Distributed debugging. Your on-call engineers need to trace failures across multiple agent logs within your incident SLA.

- Prompt regression testing. Every model update requires testing across all agents. A prompt tweak in one agent can silently break others.

A 15-person team without dedicated ML engineers can run a single-agent system with standard DevOps practices. Running a four-agent pipeline with contract testing, distributed tracing, and prompt regression requires at least two to three engineers spending a meaningful share of their time on agent infrastructure instead of product work. For many Series B teams, that's 15-20% of engineering capacity redirected from the roadmap.

Check domain depth before adding reflection.

One of the most common vendor pitches for multi-agent systems is "self-correcting agents": Agent A generates an answer, Agent B critiques it, Agent A revises. The pitch implies that more agents produce more accurate results through iterative refinement.

"Self-correcting agents" work in domains where the base model has strong coverage. In specialized domains, a reflection loop can reinforce the model’s existing blind spots rather than correct them. A reflection agent can check formatting and internal consistency. It can't verify facts against regulations it hasn't seen.

Before you invest in multi-agent reflection, test whether the base model can answer domain-specific questions accurately when given the right context through retrieval. If retrieval is enough to make the base model reliable, a single-agent design is usually the better choice. If retrieval still does not close the gap, additional agents are unlikely to solve the underlying knowledge problem.

The evaluation applied to the fintech case.

The compliance CTO from Section II would walk through this framework as follows. The single-agent system classifies documents at 93% accuracy after prompt optimization and retrieval improvements. The compliance team requires 97% for audit readiness. The 4% gap costs roughly $4,200 per month in manual review for misclassified documents. A multi-agent system with a dedicated classifier agent could close that gap, but it would increase API costs from $6,200 to an estimated $40,000-$60,000 per year, add 6-8 weeks of build time, require two engineers on ongoing agent infrastructure maintenance, and extend debugging time from minutes to hours.

The CTO's decision: is closing a 4% accuracy gap worth $35,000-$55,000 in additional annual API cost, 15% of engineering capacity redirected to agent maintenance, and a 4x increase in incident resolution time? For some compliance environments, the answer is yes. For most Series B companies burning runway, the answer is not yet.

That "not yet" matters. The framework doesn't say multi-agent systems are wrong. It says they should be the last tool you reach for, after simpler improvements have been measured and exhausted.

Conclusion

Design the system on the assumption that any model output can be wrong. This applies whether you run one agent or ten. The question changes from "how smart is my agent?" to "what happens when this agent produces garbage?" Teams that plan for model failure are more likely to build systems that hold up in production, not just in demos.

The evidence in this article points in one direction. A multi-agent compliance pipeline costs $93,000/year, where a single-agent version costs $6,200. It adds seconds of latency that kill user adoption. It turns a 10-minute debugging session into a two-hour trace across four agent logs. And the accuracy improvement that justifies all of that overhead is often smaller than what better prompts and retrieval can deliver on their own.

Before choosing a multi-agent design, compare the baseline against the alternative in performance, operating cost, engineering effort, and incident response burden. The teams that ship reliable AI systems aren't the ones with the most agents. They're the ones who earned every agent they added.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript