Two years. Full-time. No salary. A solo technical founder posts to Hacker News that they've finally — finally — gotten their AI toolchain, their personal workflow, and a stealth product to intersect at "good enough to ship." And then refuses to say what the product is.

"I'm not going to tell you what it is, because if I did there's too little moat and HN is crawling with great people who could probably replicate it and execute on it faster than me."

Anonymous founder, Hacker News

If you're a CTO in a stealth or pre-stealth startup right now, you read that and feel two things at once. First: that's exactly right. Second: two years is a brutal price to pay for getting the validation loop wrong even once. This playbook is about not paying that price.

KEY TAKEAWAYS

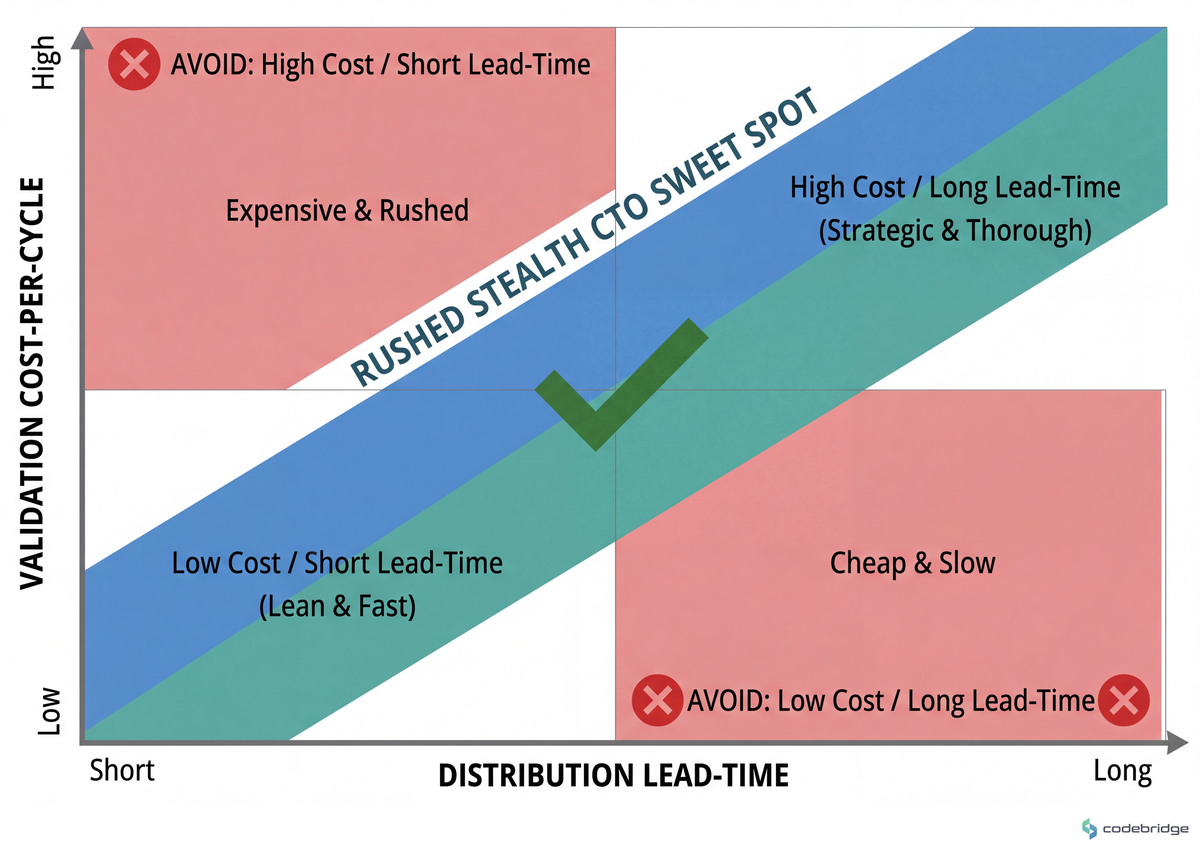

Build speed is no longer the binding constraint. AI-assisted coding has collapsed replication time, which means distribution lead-time and validation cost-per-cycle are now the constraints worth optimizing.

Every wrong assumption costs twice for early-stage CTOs — once in engineering hours, once in the personal opportunity cost of a wrong build that never recovers revenue.

Technical moat in 2026 is increasingly distributional, not architectural. As one founder quoted below puts it, ten other ventures may already be doing the same thing before the first MVP earns traction.

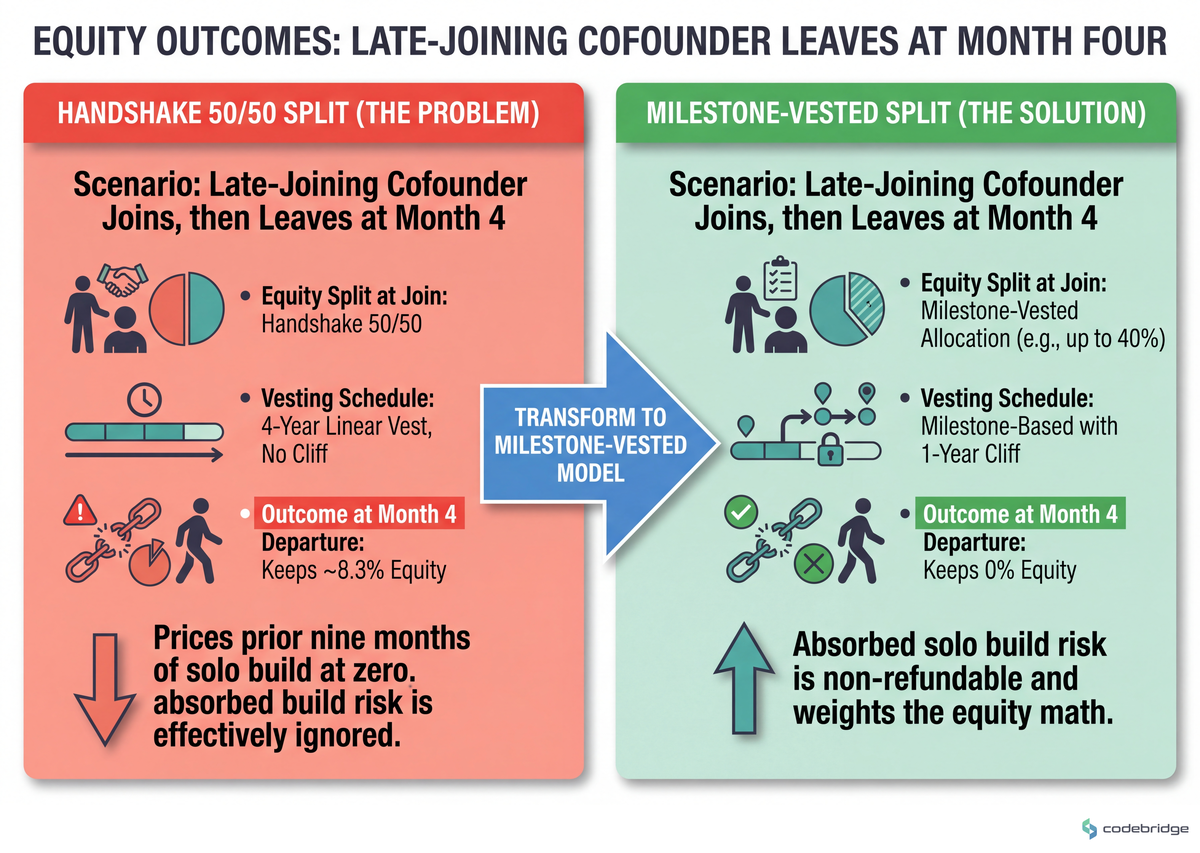

Late-joining cofounder equity should be milestone-vested, not handshake-split. Solo build risk that's already been absorbed is non-refundable and should weight the equity math.

Stealth is a discipline about the idea, not the team. Stay quiet on what you're building; be loud on the capability you're developing around it.

The Hidden Problem: Cycle Cost, Not Cycle Speed

The lean-startup canon was written for an era when shipping was the hard part. Eric Ries put it cleanly:

"The essential lesson is not that everyone should be shipping fifty times per day but that by reducing batch size, we can get through the Build-Measure-Learn feedback loop more quickly than our competitors can."

Eric Ries, on Lean Startup methodology

That logic still holds, but the asymmetry has flipped. When AI-assisted coding compresses build cost, the bottleneck moves to discovery: the cost of being wrong about what to build in the first place. A 2025 founder commenting on this shift summarized the market reality bluntly:

"Before you even manage to build an MVP and get early traction, ten other ventures are already doing the same thing — burning money and making any roadmap to profitability useless."

Anonymous founder, Reddit r/startups

The pattern we want to make visible is depicted below.

From the Field

The pain is not theoretical. Three accounts from the past year capture three distinct flavors of it.

A founder writing from the trenches of defence-tech startup Teletactica described what hardware iteration looks like in active conflict environments. Standalone-unit cycles couldn't keep up with electronic-warfare conditions that shifted week to week:

"Jamming, shifting threats, and high operational tempo required near-constant adaptation, while we still had to build engineering and manufacturing processes that could hold under pressure."

Teletactica engineering, via Vestbee

Their working answer was modularity plus co-location with end users. Not faster shipping — closer feedback. Cycles compressed because the round-trip from "field observation" to "design change" got physically shorter.

A different problem, same root cause. A B2B SaaS founder posted to r/ycombinator after nine months solo:

"I've been building a B2B SaaS for 9 months solo. No salary, self-funded. Product is in production with 3 design partners."

OP, Reddit r/ycombinator

The question they asked was about a potential cofounder requesting a 50/50 equity split. The thread doesn't reveal how they structured it. What it does reveal is the structural blind spot — solo build-risk that has already been absorbed is invisible at the negotiation table unless the equity instrument explicitly prices it.

And one more, because lean engineering for CTOs with limited hours has a personal-economics dimension that gets glossed over:

"Every wrong assumption costs you twice — once in time and once in the guilt about being away from home."

Anonymous founder, Reddit r/founder

The lesson here isn't "work less." It's that the price of a wrong build is non-recoverable for founders without a runway buffer or a partner picking up slack. Validation cost-per-cycle is the metric that survives this constraint. Iteration count doesn't.

The Pattern: Compress the Loop Before You Compress the Build

The teams that come out of stealth with traction in 2026 share a stance that's quieter than "ship fast." They protect three things in order: discovery cost, distribution lead-time, and equity dilution. Build cost — historically the hard one — is now the easy one.

Eric Ries framed this principle nearly two decades ago on his Startup Lessons Learned blog, and it has aged better than most lean writing:

"The CTO's primary job is to make sure the company's technology strategy serves its business strategy. If your business strategy is to create a low-burn, highly iterative lean startup, you'd better be using foundational tools that make that easy rather than hard."

Eric Ries, Startup Lessons Learned

The Playbook: Seven Steps for the First 90 Days

The flow below is what we walk new stealth CTOs through. Each step has a "good looks like" and a "common failure mode" because both are needed.

Step 1 — Tag every assumption with a discovery cost

What to do: List every load-bearing assumption in your roadmap. Tag each with the cheapest method to invalidate it: customer call, paper prototype, landing-page test, or code.

What good looks like: 70%+ of your assumptions resolvable without code. Each tagged with a deadline in days, not sprints.

Common failure mode: Treating "we'll learn it from usage" as a discovery method. Usage is a learning method only if you have users — and you don't yet.
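One way to keep the tagging honest is to hold the assumption list as data and compute the no-code share directly. The sketch below is a minimal illustration, not a prescribed tool: the `Assumption` and `Method` names and the sample roadmap entries are hypothetical, and only the four discovery methods and the 70% target come from the step above.

```python
from dataclasses import dataclass
from enum import Enum


class Method(Enum):
    """The four discovery methods named in Step 1."""
    CUSTOMER_CALL = "customer call"
    PAPER_PROTOTYPE = "paper prototype"
    LANDING_PAGE_TEST = "landing-page test"
    CODE = "code"


@dataclass
class Assumption:
    statement: str
    method: Method      # cheapest method that could invalidate it
    deadline_days: int  # deadline in days, not sprints


def no_code_share(assumptions: list[Assumption]) -> float:
    """Fraction of assumptions that can be resolved without writing code."""
    if not assumptions:
        return 0.0
    no_code = sum(1 for a in assumptions if a.method is not Method.CODE)
    return no_code / len(assumptions)


# Hypothetical roadmap entries, for illustration only.
roadmap = [
    Assumption("Ops leads will pay for weekly reports", Method.CUSTOMER_CALL, 5),
    Assumption("Users understand the three-step setup", Method.PAPER_PROTOTYPE, 7),
    Assumption("The niche community will join a waitlist", Method.LANDING_PAGE_TEST, 10),
    Assumption("Sync stays fast with 10k records", Method.CODE, 14),
]

share = no_code_share(roadmap)
print(f"{share:.0%} resolvable without code (Step 1 target: 70%+)")
```

Sorting the same list by `deadline_days` gives a simple order in which to run the cheap tests first.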

Step 2 — Run 30 customer calls before the second feature

What to do: Before building feature two, complete 30 structured 30-minute calls with target users. Record patterns, not validations.

What good looks like: You can predict the next interview's top three pains within the first five minutes. That's signal saturation.

Common failure mode: Pitch-shaped calls. If you find yourself "showing them the demo," you're not discovering — you're selling, and the data is contaminated.
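Signal saturation can be checked rather than felt. The sketch below is one possible way to do it, under stated assumptions: each interview is recorded as a list of pain labels, the prediction is simply the three most frequent pains so far, and the "hit rate" measure is an illustration of ours, not something the step prescribes. All function names and sample labels are hypothetical.

```python
from collections import Counter


def predicted_top_pains(past_interviews: list[list[str]], k: int = 3) -> list[str]:
    """Return the k pains mentioned in the most past interviews."""
    counts = Counter(
        pain
        for interview in past_interviews
        for pain in dict.fromkeys(interview)  # count each pain once per interview
    )
    return [pain for pain, _ in counts.most_common(k)]


def prediction_hit_rate(predicted: list[str], next_interview: list[str]) -> float:
    """Fraction of predicted pains that the next interview actually raised."""
    if not predicted:
        return 0.0
    raised = set(next_interview)
    return sum(1 for pain in predicted if pain in raised) / len(predicted)


# Hypothetical interview notes, for illustration only.
past = [
    ["manual exports", "slow approvals", "no audit trail"],
    ["manual exports", "slow approvals"],
    ["manual exports", "no audit trail", "pricing confusion"],
    ["slow approvals", "manual exports", "no audit trail"],
]
upcoming = ["manual exports", "no audit trail", "onboarding time"]

prediction = predicted_top_pains(past)
print(prediction, prediction_hit_rate(prediction, upcoming))
```

A hit rate that stays high across several consecutive calls is a rough sign that further calls on the same segment are adding little new information.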

Step 3 — Treat your AI toolchain as a quarter-long investment

What to do: Pick a primary coding agent, a primary spec-writing model, and a primary review model. Lock them for at least one quarter. Build prompt libraries, custom evals, and a workflow that you measure.

What good looks like: A measurable improvement in commits-per-discovery-cycle quarter over quarter. The HN solo founder above describes spending one to two years tuning their setup before output quality crossed the revenue-grade threshold — that's the realistic horizon, not a weekend.

Common failure mode: Tool-hopping every two weeks because a new model dropped. The compounding gain is in your prompts, not the model name.

Step 4 — Build distribution before you're ready to launch on it

What to do: Pick one channel (audience, partnerships, design-partner pipeline, niche community) and start compounding it the same week you write the first line of production code.

What good looks like: By the time the product is testable, you have a list of 50+ named potential users who know your name. Distribution lead-time is the moat AI hasn't eaten.

Common failure mode: "We'll do GTM after we ship." In a market where ten clones appear before your MVP, post-launch distribution is a starting line, not a ramp.

Step 5 — Co-locate or remote-shadow with at least one design partner per build cycle

What to do: Spend a half-day per cycle physically beside (or screen-shared with) one user. Watch them work. Don't talk.

What good looks like: You leave with two product changes you wouldn't have written down if asked in a survey. The Teletactica pattern — modularity plus embedded operators — works at every scale, not just hardware.

Common failure mode: Substituting analytics for shadowing. Analytics tells you what happened. Shadowing tells you what was happening in the user's head when it happened.

Step 6 — Gate cofounder equity on milestones, not handshakes

What to do: If you bring on a cofounder after months of solo build, structure equity with (a) a 12-month cliff, (b) milestone-based vesting tranches, and (c) explicit credit for risk already absorbed (founder shares vested at signing for the solo period).

What good looks like: The split honestly answers the question "what would the equity look like if this person walked at month 4?"

Common failure mode: A 50/50 split with a 4-year linear vest and no cliff, on the theory that "we'll figure it out." You're pricing your prior nine months of solo build at zero. Don't.
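The "walked at month 4" question can be answered with a few lines of arithmetic. The sketch below compares the handshake structure (50%, four-year linear vest, no cliff) with a cliff-plus-milestones structure. Every number other than the 50/50 split, the four-year vest, and the 12-month cliff is a hypothetical placeholder, including the 30% grant and the tranche sizes, and the credit for the solo period is represented only by that smaller grant. It illustrates the mechanics and is not legal or financial advice.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Tranche:
    fraction: float                # share of the grant tied to this milestone
    achieved_month: Optional[int]  # month the milestone was hit; None if not yet


def linear_vested(grant: float, month: int, total_months: int = 48) -> float:
    """Equity vested under a linear schedule with no cliff."""
    return grant * min(month, total_months) / total_months


def milestone_vested(grant: float, month: int, tranches: list[Tranche],
                     cliff_months: int = 12) -> float:
    """Equity vested under milestone tranches; nothing vests before the cliff."""
    if month < cliff_months:
        return 0.0
    earned = sum(t.fraction for t in tranches
                 if t.achieved_month is not None and t.achieved_month <= month)
    return grant * earned


# Hypothetical numbers, for illustration only.
tranches = [
    Tranche(0.25, achieved_month=3),
    Tranche(0.25, achieved_month=14),
    Tranche(0.50, achieved_month=None),
]

for month in (4, 18):
    handshake = linear_vested(0.50, month)
    gated = milestone_vested(0.30, month, tranches)
    print(f"month {month}: handshake {handshake:.1%}, milestone-gated {gated:.1%}")
```

Under these placeholder numbers, a cofounder who leaves at month 4 keeps roughly 4% of the company in the handshake structure and nothing in the gated one, which is the difference the question in this step is meant to surface.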

The contrast in equity outcomes is shown below.

Step 7 — Stay stealth on the idea; stay loud on the capability

What to do: Don't disclose the specific product hypothesis. Do disclose the capability stack you're building (the toolchain, the customer-discovery cadence, the technical posture). Capability is recruiting fuel; the idea is the moat.

What good looks like: Senior engineers and design partners come to you because of public reputation on the capability axis, while the product hypothesis stays unpublished.

Common failure mode: Total radio silence. You signal nothing, build no audience, and emerge from stealth into the same red ocean the Reddit thread above described.

Close: What to Do This Week

The opening case — two years of stealth, a workflow finally in place, an idea the founder won't disclose — is not a story about patience. It's a story about what happens when you treat the validation loop as your real product. The build is downstream of that.

So: tomorrow morning, list every load-bearing assumption in your current roadmap and tag each with the cheapest discovery method that could invalidate it. Wednesday, schedule three 30-minute calls against the riskiest one. By Friday, you should have either killed that assumption or earned the right to start writing code against it. The list is a 30-minute artifact, and the week is a loop you can run forever.

The cheapest line of code you'll ever write in 2026 is the one your customer-discovery process tells you not to write at all. Treat discovery as engineering, not as research.

Building toward a stealth launch and want a second opinion on your validation loop?

Talk to our team about auditing your discovery cost-per-cycle before your next sprint.

Diagnostic Checklist: Run This Against Your Current Setup

Can you name the top three load-bearing assumptions in your current roadmap, and the cheapest method to invalidate each? Yes / No

In the last 30 days, did you spend more hours on customer calls than on coding? Yes / No (No = risk signal at pre-traction stage.)

If you lost your AI toolchain tomorrow, would your output drop more than 30%? Yes / No (No = you haven't compounded the toolchain enough yet.)

Do you have 50+ named potential users in a list outside your CRM today, before public launch? Yes / No

If a cofounder walked at month four, would your current equity instrument feel fair to you in retrospect? Yes / No

Have you watched a real user use your product in the last 14 days — not a recording, not analytics, but live? Yes / No

If a competitor cloned your visible capability stack tomorrow, would your distribution lead-time still give you 3+ months of advantage? Yes / No

Score: 5+ Yes = healthy. 3-4 Yes = patch the No's this quarter. 0-2 Yes = pause feature work and rebuild the loop before it costs you another year.
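The scoring rule is simple enough to encode if you want to track it quarter over quarter. This is a minimal sketch: the function name and sample answers are hypothetical, and it assumes the favorable answer to each of the seven questions is recorded as a Yes (for the calls-versus-code question, that means more hours on calls).

```python
def score_checklist(answers: list[bool]) -> str:
    """Map seven Yes/No answers (True = Yes) to the checklist's score bands."""
    if len(answers) != 7:
        raise ValueError("the checklist has seven questions")
    yes_count = sum(answers)
    if yes_count >= 5:
        return "healthy"
    if yes_count >= 3:
        return "patch the No's this quarter"
    return "pause feature work and rebuild the loop"


# Hypothetical answers, in checklist order.
answers = [True, False, True, False, True, True, False]
print(score_checklist(answers))
```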
