Enterprise adoption of generative AI is accelerating faster than the security practices that govern it. Recent research published on arXiv found that over 40% of AI-generated code solutions contain security vulnerabilities. For organizations experimenting with AI-assisted development, this finding has practical implications. It suggests that AI-generated outputs cannot be assumed to meet the security standards typically required for production software.

The issue becomes more consequential as generative AI systems begin interacting directly with operational infrastructure. Many enterprise deployments now allow AI systems to call APIs, modify records in SaaS platforms, generate scripts, or trigger automated workflows.

In these environments, AI outputs are no longer confined to text suggestions. They can influence real system behavior.

This article examines the security risks that emerge when generative AI systems are integrated into enterprise environments and outlines practical strategies organizations are using to govern those risks at scale.

How Generative AI Has Affected Security

According to recent industry surveys, 88% of organizations report using AI in at least one business function, yet only 24% of current initiatives include a defined security component.

This gap reflects how generative AI is typically introduced into organizations. Many deployments begin as productivity experiments, code assistants, document summarization tools, or internal chatbots that are often implemented outside traditional security review processes.

As these systems become integrated into daily workflows, they introduce risks that existing governance models were not designed to address.

Unlike traditional software, generative AI systems do not execute fixed logic. Their outputs depend on statistical inference and contextual input, which means behavior cannot always be predicted or constrained through conventional rule-based controls. And as adoption increases, this difference is beginning to affect the security landscape in several ways.

Social Engineering at Industrial Scale

One of the most visible effects of generative AI on the security landscape is the improvement of large-scale social engineering operations.

Previously, phishing campaigns required significant manual effort and often contained linguistic errors that made them easier to detect. Generative AI allows attackers to generate grammatically flawless messages rapidly, adapt language to specific targets, and iterate campaigns with minimal cost.

- Synthetic identity and voice impersonation.

In documented fraud incidents, attackers have used AI-generated voice recordings to impersonate senior executives and authorize urgent financial transfers during phone calls.

- Malware development acceleration.

Threat actors increasingly use generative models to assist with malware development, particularly in modifying existing code to evade signature-based detection.

- Automated reconnaissance.

Generative AI can also assist attackers in analyzing large volumes of publicly available information to map organizational structures, identify high-value targets, and prioritize attack paths.

While these capabilities do not replace skilled attackers, they significantly reduce the effort required to conduct targeted campaigns.

Why Most Enterprise Incidents Are Still “Boring”

Despite the technical sophistication of these new threats, the majority of early enterprise GenAI security incidents are not exotic exploits but rather costly workflow mistakes driven by convenience and a lack of technical controls.

- Direct Data Leakage: Employees frequently paste proprietary source code, internal strategy documents, or meeting notes into unmanaged AI tools for debugging or summarization. Several companies, including Samsung, have publicly reported incidents involving sensitive information shared with external AI services.

- Experimental Environment Leakage: Organizations often allow internal teams to experiment with AI tools using production data, without separating test environments from operational systems.

- Shadow AI Proliferation: Business units often adopt no-code agent builders and third-party plugins faster than security teams can establish terms. These tools may connect directly to internal data sources or SaaS platforms.

- Vendor Risk Management: Third-party providers may retain prompts and outputs for abuse monitoring or service improvement, which directly conflict with enterprise data governance or regulatory requirements.

In most cases, the underlying problem is not a sophisticated attack but a lack of clear governance over how AI tools are used within the organization.

Why Traditional Security Models Struggle

For leadership, the critical takeaway is that existing cybersecurity approaches are necessary but insufficient for this new era. The UK National Cyber Security Centre has explicitly warned that prompt injection is not equivalent to SQL injection. Traditional firewalls and network rules are designed for deterministic code and structured inputs like JSON; they cannot evaluate the probabilistic nature of natural language or the semantic intent behind a model's reasoning.

This mismatch creates the “Confused Deputy” problem, where a model is manipulated into using its legitimate credentials and permissions to execute actions that benefit the attacker. Because GenAI systems are non-deterministic — the same input may produce different outputs — security strategies must treat the model as an untrusted component running inside a controlled execution environment.

Generative AI systems also challenge several assumptions embedded in traditional security architecture. Firewalls, input validation, and application security testing assume that software processes structured inputs and executes predictable logic. However, generative models operate differently. They interpret natural language, combine multiple data sources, and generate responses that vary depending on context.

Because of this, attacks can target the model’s reasoning process rather than a specific software vulnerability.

The UK National Cyber Security Centre has highlighted prompt injection as a primary example. Instead of exploiting code, attackers manipulate the model through carefully crafted instructions embedded in documents or web pages that the system processes as input.

In systems where models can call external tools, this manipulation can lead to the problem that is called “confused deputy”. The model unknowingly uses its legitimate permissions to perform actions that benefit the attacker.

This means traditional vulnerability patching alone cannot mitigate these risks. Generative AI must be treated as an untrusted component operating within a controlled execution environment, with strict boundaries governing what actions it can perform.

Generative AI Security Risks in Action-Taking Systems

When an AI model is granted agency, it operates in an autonomous loop: observing the environment, reasoning about objectives, selecting tools, and executing actions. This agency often inherits the same identities and OAuth grants used by humans, creating an attribution gap that makes it difficult to determine whether an action was intended by a user or directed by a manipulated model.

The security model changes once AI systems are allowed to execute actions rather than only generate text.

In many enterprise deployments, AI agents can modify SaaS records or generate executable instructions. These systems operate with the same identities, permissions, and OAuth tokens that human users rely on.

As a result, the model is no longer just producing information. It becomes part of the operational control layer of enterprise infrastructure. This shift introduces several new categories of security risk.

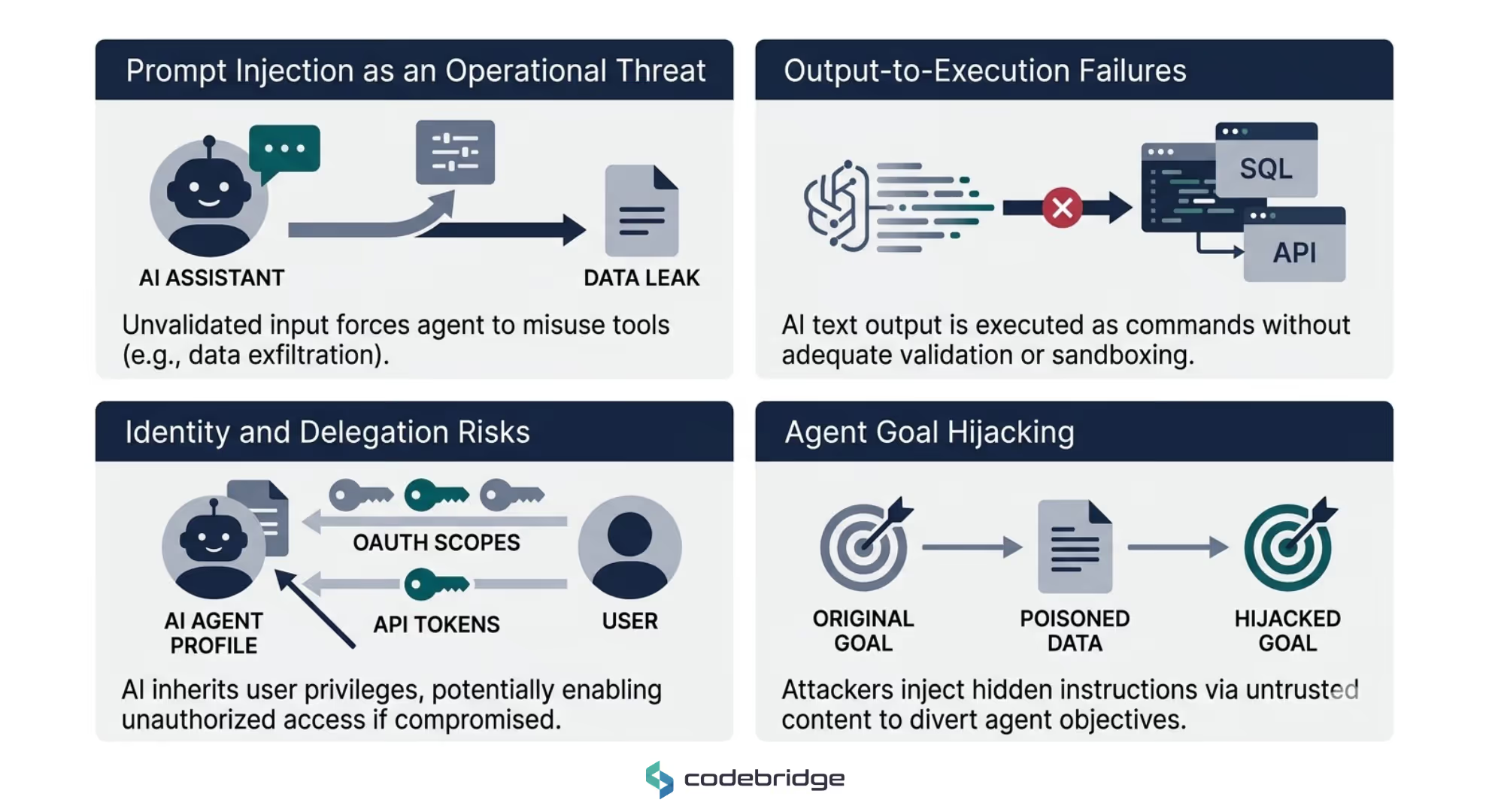

Prompt Injection as an Operational Threat

In autonomous systems, prompt injection is no longer just a method for producing bad-quality code. When the system has access to external tools, this manipulation can result in unintended actions such as sending data to external systems, modifying records, bypassing policy, or abusing internal tools.

- Direct Injection: Explicitly telling a model to ignore all previous instructions to reveal system prompts or bypass safety filters.

- Indirect Prompt Injection: Attackers hide malicious instructions in untrusted external content, emails, or PDFs that the system naturally ingests as context. Because AI treats ingested text as trusted input, a zero-click attack becomes possible.

For example, the EchoLeak exploit showed that a single crafted email could trigger an assistant to exfiltrate confidential files and chat logs without any user interaction.

Output-to-Execution Failures

Another common failure point occurs at the boundary between model output and system execution. Generative models produce text, but many systems interpret that text as executable commands — SQL queries, API parameters, or shell instructions. When outputs are executed without strict validation or sandboxing, the model effectively gains the ability to influence system behavior directly.

This creates a fragile interface between probabilistic model output and deterministic infrastructure, and without strong validation layers, unintended instructions can propagate directly into production systems.

Identity and Delegation Risks

When an AI agent acts on behalf of a user, it inherits the most vulnerable parts of modern SaaS security: delegated OAuth scopes and consent flows.

Enterprise AI agents frequently operate using delegated user permissions. When an agent acts on behalf of a user, it inherits OAuth scopes, API tokens, and access rights associated with that identity. If the model is manipulated, these privileges may be used to access sensitive systems or perform actions beyond the user’s original intent.

The CoPhish attack demonstrated that malicious agents hosted on trusted domains could wrap OAuth phishing flows to capture user access tokens, granting attackers access to emails, calendars, and OneNote data.

In complex environments with multiple agents interacting, a compromised or manipulated low-privilege agent may also trigger actions from higher-privilege systems that implicitly trust internal requests.

Agent Goal Hijacking

Models cannot reliably distinguish between system instructions and untrusted data. Attackers can use this to silently redirect an agent's main goal through poisoned content. A procurement agent might be re-prioritized from "review invoice" to "execute immediate payment" by a hidden instruction in a PDF metadata field. The agent remains obedient; it is simply obeying the wrong objective.

A more subtle risk involves the manipulation of the agent’s objective itself. Generative models cannot reliably distinguish between trusted instructions and malicious content embedded within external data sources. Attackers can exploit this limitation to redirect an agent’s main goal through poisoned content.

For example, a malicious document or email may re-prioritize an agent from "review invoice" to "execute immediate payment" by a hidden instruction in a PDF metadata field

In these situations, the model is not malfunctioning. It is following instructions that appear valid within the context it has been given. For this reason, enterprise security architectures cannot rely on the model’s ability to interpret intent correctly. Controls must be enforced at the tool and execution layer, where actions can be validated deterministically.

Risk Mitigation Strategies for High-Maturity Teams

Mature organizations assume that models cannot reliably interpret intent as generative models can be manipulated through context, prompts, or external data sources. For this reason, teams focus less on filtering model output and more on controlling what the system is allowed to do.

Therefore, security shifts toward governance of execution, permissions, and infrastructure.

Adopt Agency-Based Risk Tiering

Not all AI systems create the same level of risk. Mature organizations classify deployments based on how much authority the system has to interact with external tools and enterprise infrastructure. A common model classifies systems into four main tiers:

- Tier A (Assistive systems)

The model answers questions or summarizes information using a fixed knowledge base. It cannot access external systems or perform actions.

- Tier B (Read-only systems)

The agent can retrieve information across internal sources but cannot modify records or trigger workflows.

- Tier C (Write actions with approval)

The agent proposes actions such as drafting emails or creating CRM entries. Execution requires explicit human approval.

- Tier D (Autonomous systems)

The agent performs actions automatically. This level of autonomy is typically restricted to low-impact and reversible operations and requires strong monitoring and the ability to immediately disable the system if necessary.

This tiering model allows organizations to align security controls, governance requirements, and operational oversight with the real risk profile of each AI deployment.

By classifying systems according to their level of autonomy, organizations can apply proportionate safeguards instead of attempting to enforce the same security model across all deployments.

In practice, many organizations begin implementing this model by defining a small set of evaluation criteria that determine the appropriate tier for each system. The criteria usually include:

- Action capability: Can the system modify data, trigger workflows, or execute code?

- Data sensitivity: Does the system access regulated, financial, or proprietary information?

- Identity scope: What permissions or OAuth scopes does the system inherit?

- Reversibility of actions: Can the system’s actions be easily rolled back if an error occurs?

- Human oversight: Is a human required to review or approve actions before execution?

These criteria allow organizations to introduce AI capabilities while maintaining consistent governance over how autonomous systems interact with business infrastructure.

Deterministic Enforcement vs. LLM Filters

Many early AI security approaches rely on probabilistic defenses such as prompt scanners or secondary models evaluating outputs. These methods can reduce obvious misuse but are unreliable as a primary control layer.

Instead, mature teams place deterministic checks at the point where the model interacts with external tools. This includes using structured tool schemas, no free-form shell or SQL execution, and enforcing out-of-band policy checks that the prompt content cannot influence.

Key practices include:

- Structured tool interfaces

Agents interact with tools through predefined schemas that strictly define permitted parameters and actions.

- Execution policy checks

Every tool invocation passes through a policy layer that verifies the request against business rules before execution.

- Fail-closed behavior

If the system cannot confidently validate an instruction, the request is rejected rather than executed.

These controls ensure that even if a model is manipulated, it cannot execute arbitrary actions.

Rethinking the Framework: The IBM Framework for Securing Generative AI

The mitigation strategies discussed earlier — limiting agent autonomy, enforcing deterministic controls, isolating infrastructure, and continuously testing systems — are essential practices.

However, implementing these controls consistently across multiple AI deployments can be difficult without a structured governance model.

Many security teams rely on frameworks such as the OWASP Top 10 for LLM Applications and the emerging OWASP Top 10 for Agentic Applications. These resources are valuable for identifying specific vulnerabilities and attack patterns.

However, OWASP primarily focuses on what can go wrong at the technical level. Organizations still need a framework that explains where security controls should exist across the AI system lifecycle.

One approach that has gained attention in enterprise environments is the IBM Framework for Securing Generative AI. It organizes security around the entire AI value stream and covers systems, data flows, and operational processes that surround the model.

The framework organizes generative AI security into five layers that map to different parts of the AI lifecycle.

1. Data Security

The first layer focuses on protecting sensitive information throughout the AI lifecycle. Organizations often centralize large datasets when training, fine-tuning, or retrieving information for generative models. This concentration of data increases the impact of any potential breach.

Key safeguards include:

- Data discovery and classification to identify sensitive datasets used during training or retrieval.

- Encryption and key management to protect data both in storage and during transmission.

- Access controls and identity management to restrict who can interact with training data, embeddings, and logs.

Together, these regulations reduce the risk of exposing sensitive data during development and production.

2. Model Security

Model security addresses the integrity of model artifacts and the processes used to train and deploy them.

Most organizations rely on pretrained models obtained from open repositories or external vendors. This introduces AI supply chain risks, including the possibility of compromised model checkpoints or poisoned training data.

Key controls include:

- verifying the origin and integrity of model artifacts

- scanning model dependencies and weights before deployment

- securing API interfaces used to access hosted models

They ensure that the model itself has not been tampered with before entering production environments.

3. Usage Security

Usage security focuses on the runtime behavior of generative AI systems. At this stage, models interact with prompts, external data sources, and potentially enterprise tools. This layer addresses threats such as prompt injection or model extraction.

Organizations typically implement these controls to ensure that models operate safely when exposed to real-world inputs:

- prompt monitoring and input validation

- rate limiting and query controls to prevent model abuse

- runtime monitoring systems that detect suspicious usage patterns or potential data leakage

4. Infrastructure Security

Generative AI systems ultimately rely on traditional infrastructure, including cloud services, identity systems, and network connectivity.

Infrastructure security focuses on ensuring that the environment supporting AI workloads follows established cybersecurity principles.

Key controls include:

- network segmentation between AI services and sensitive systems

- hardened identity and access management policies

- secure containerization or virtualization for model execution

Applying traditional infrastructure controls remains one of the most effective ways to reduce the blast radius of AI-related incidents.

5. Operational Governance

The final layer addresses the ongoing management of AI systems after deployment. Unlike conventional software, AI models can degrade over time due to data drift or changing usage patterns.

This layer ensures that AI systems remain aligned with their intended purpose as environments evolve. Operational governance includes:

- monitoring model performance and behavioral changes

- auditing outputs for bias, safety, and compliance issues

- maintaining documentation and oversight aligned with regulatory frameworks such as the EU AI Act

IBM Framework vs. OWASP

Executive Interpretation

One of the practical benefits of the IBM framework is that it helps organizations structure AI security responsibilities in a way that aligns with existing enterprise governance. Even without adopting IBM-specific tooling, the model can be used as a simple operating structure.

For example:

Assign ownership per layer

Define clear responsibility for each layer — data platform teams for data security, identity teams for access control, and application teams for runtime monitoring.

Budget by control domain

Security investments can be mapped to specific control areas such as identity hardening, data protection, and monitoring infrastructure rather than vague “AI initiatives.”

Maintain a unified risk register

Mapping AI systems to these layers allows organizations to track risks, mitigations, and operational responsibilities in a single governance structure.

Important Limitation

A framework does not eliminate the underlying technical challenge of generative AI. Large language models cannot reliably distinguish between trusted instructions and untrusted data. As a result, attacks such as prompt injection cannot be completely prevented through filtering or guardrails alone.

For this reason, mature organizations treat frameworks as governance structures while relying on architectural controls and execution boundaries to limit the potential impact of model manipulation.

Conclusion

As organizations begin deploying AI systems that can take actions, security must evolve alongside. The priority is not to eliminate risk but to ensure that AI systems operate within clearly defined boundaries. This requires visibility into what agents can access, what actions they can perform, and how those actions are monitored.

Establishing explicit system boundaries, controlling the spread of unsanctioned AI tools, isolating high-risk operations, and maintaining detailed observability are foundational steps. Aligning these practices with recognized frameworks further strengthens governance and accountability.

By applying principles such as strong execution controls, enterprises can scale agentic AI responsibly while maintaining the security and reliability expected in production environments.

.avif)

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript