You spent the weekend stitching together an 8-week plan for the next product surface — sequencing, verification, dependencies, on-call coverage. You bring it to the executive review on Monday. The CEO and head of product look at you like you have lost the plot. "These timelines are not what we should expect in 2026. Why is anyone on your team still writing code? We pay for an AI agent."

If that exchange has not happened to you yet, it has happened to your peers. The tension is no longer about whether AI accelerates engineering — it does, measurably. The tension is that the velocity numbers your CEO reads about online are creation-rate numbers, and the cost numbers nobody is showing them are review-rate, verification-rate, and maintenance-rate numbers. Those costs land on you.

KEY TAKEAWAYS

AI velocity relocates the bottleneck rather than removing it. Faster PR creation produces a proportional spike in senior-engineer review load and verification time.

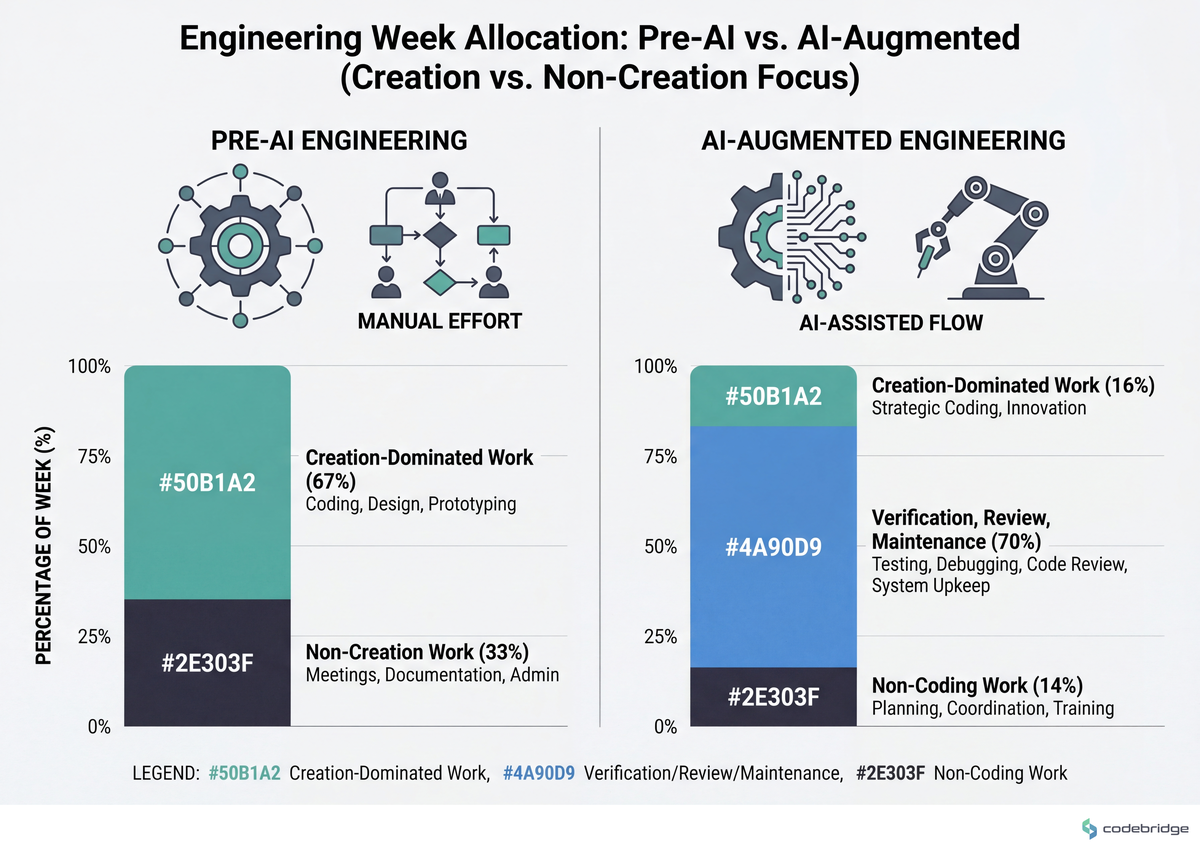

Engineers in 2026 spend the majority of their week on non-coding work. Industry surveys put the share of time on refactoring, debt cleanup, and verification well above half.

Foundations capacity is a sprint allocation, not a "when we have time" line item. Teams that lock 20-30% per sprint for debt and tooling outperform teams that schedule it reactively.

Internal developer platforms fail on adoption, not on architecture. The dominant blocker is cultural and political, not technical.

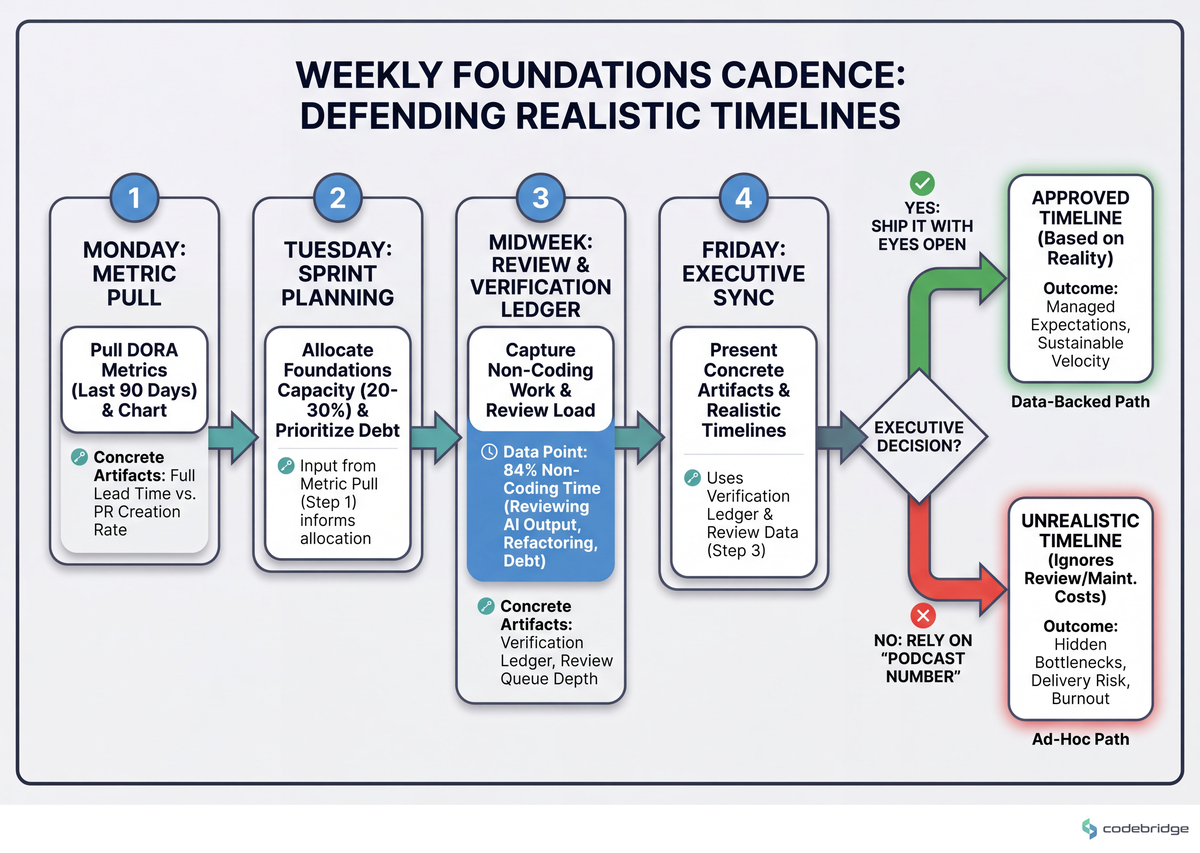

Defending realistic timelines requires concrete artifacts. Verification load, review queue depth, and debt-cleanup hours give the executive room to say "ship it" with their eyes open.

The Hidden Problem: Velocity Is Easy to Measure, Foundations Are Easy to Skip

Every CTO at an early-stage SaaS company knows the unwritten rule: foundations work is what gets cut first when a sales call moves up the pipeline. What is new in 2026 is that AI tooling has compressed the visible part of the work — code generation — by enough that the invisible work now dominates the calendar. Developers report spending up to 84% of their time on non-coding work: reviewing AI output, refactoring around its blind spots, chasing the architectural foresight it lacks, and paying down debt it generated last quarter. That figure comes from a 2026 community survey of AI-augmented teams, and it lines up with what Chainguard's 2026 Engineering Reality Report documents across 1,200 engineers and tech leaders: people want to build, but they spend their days maintaining.

The DORA program's body of work on software delivery performance has been pointing at this for years: the metric that correlates with business outcomes is full lead time for changes, not commit count or PR throughput. AI tooling moves PR creation; it does not, on its own, move lead time. The diagram below contrasts where engineering time concentrated in 2023 versus where it concentrates in 2026:

Read alongside Martin Fowler's technical debt quadrant, the 2026 picture has a specific shape: AI accelerates "deliberate, prudent" debt (we know we are taking a shortcut and we know why) but it disproportionately generates "inadvertent, reckless" debt — code that nobody on the team fully owns, written against an architecture nobody on the team articulated. That is the debt class that compounds the fastest, and it is the class your CEO has the hardest time seeing.

Real Stories from the Field

Three patterns keep surfacing in public engineering forums. They are worth reading next to each other because they describe the same underlying mechanic from three different angles.

The first pattern is bottleneck relocation. A community thread analyzing GitHub Copilot adoption inside engineering teams captured it with one line:

"The bottleneck simply shifts to your senior engineers who now have to review double the volume." Developers ship PRs roughly 58% faster, but the review queue grows in lockstep, and the people qualified to do those reviews do not multiply.

r/TopAIReviews, Reddit thread on Copilot ROI measurement

The second pattern is the slow erosion of the engineers you most want to keep. A widely shared Hacker News comment from an engineer with eight years of mentoring experience, now directing AI coding agents, framed it bluntly:

"The engineer who cares loses their job because they're not hitting the metrics." When velocity is the only KPI, the people who pause to understand what they shipped get punished for the pause. The people who do not pause keep shipping — until the system they shipped into stops working in a way nobody can debug.

Hacker News commenter, thread on AI coding agent supervision

The third pattern is the executive-pressure feedback loop. An engineering lead posted to r/ExperiencedDevs about presenting a multi-month plan to leadership and being told the timeline was embarrassing:

"They literally looked at me like I was crazy… they said these timelines are not what we should expect in 2026." The thread does not reveal how the conversation ended. The author was still negotiating when the post was written.

r/ExperiencedDevs, thread on AI-driven timeline pressure

Three different framings, one shared mechanic: velocity gains relocate work into parts of the system that are harder to see, harder to measure, and easier to deny exist.

The Pattern: Foundations as a Forecasted Cost, Not a Discretionary Expense

The successful early-stage SaaS engineering organizations we work with stop treating foundations as cleanup. They treat foundations as a forecasted operational cost, like cloud spend — visible on the same dashboards, tracked with the same rigor, defended with the same data.

The State of Platform Engineering Vol 4 report makes a related point about internal developer platforms: 45.3% of teams cite developer adoption as their top challenge — not technical complexity, but cultural resistance to the platform their colleagues built. Read against the velocity-relocation problem, this implies something specific for early-stage CTOs: building the foundations is the easier half of the job. Getting your own team to use them, and getting your CEO to fund them, is the harder half.

The Playbook: Five Steps to Run This Quarter

Each step below has a concrete trigger, a concrete artifact, and a way to tell whether you actually did it versus whether you talked about doing it. The diagram that follows the steps shows the weekly cadence they fit into.

Step 1 — Replace "PR throughput" on your dashboard with full lead time

What to do: Pull the four DORA metrics for the last 90 days — lead time for changes, deployment frequency, change failure rate, time to restore. Put lead time on the same chart as PR creation rate. The gap between the two lines is your bottleneck-relocation tax.

What good looks like: the two lines move together within a 20% band. Failure mode: PR creation accelerates by 50%+ while lead time stays flat or worsens — that means review and verification are absorbing all the gain.
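The comparison in Step 1 can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the `Window` type, the `relocation_check` function, and the choice to split the 90 days into an earlier and a later window are all assumptions made for the example. It also assumes one reading of the "20% band", namely that relative PR growth and relative lead-time improvement differ by no more than 20 percentage points.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Aggregates for one measurement window (hypothetical shape)."""
    prs_opened_per_week: float
    median_lead_time_hours: float


def relocation_check(earlier: Window, later: Window, band: float = 0.20) -> dict:
    # Relative growth in PR creation (positive = more PRs opened).
    pr_growth = later.prs_opened_per_week / earlier.prs_opened_per_week - 1.0
    # Relative improvement in lead time (positive = changes ship faster).
    lead_time_gain = 1.0 - later.median_lead_time_hours / earlier.median_lead_time_hours
    # The gap between the two lines: the bottleneck-relocation tax.
    gap = pr_growth - lead_time_gain
    return {
        "pr_growth": round(pr_growth, 3),
        "lead_time_gain": round(lead_time_gain, 3),
        "gap": round(gap, 3),
        "within_band": abs(gap) <= band,
        # Failure mode from the text: PR creation up 50%+ while
        # lead time stays flat or worsens.
        "failure_mode": pr_growth >= 0.50 and lead_time_gain <= 0.0,
    }


if __name__ == "__main__":
    # Made-up numbers, for illustration only.
    print(relocation_check(Window(40, 72), Window(62, 75)))
```

In practice "flat" probably deserves a small tolerance rather than a strict zero; the strict comparison keeps the sketch short.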

Step 2 — Lock 20-30% of every sprint for foundations work, with named owners

What to do: Reserve a fixed capacity slice on every sprint board for foundations. Each item needs a named owner, an acceptance criterion, and a measurable signal (test coverage delta, MTTR delta, on-call page count, build time). Anything that cannot meet that bar gets cut from the foundations slice and, if it still matters, funded explicitly as its own line item.

What good looks like: the foundations capacity is the same line item every sprint, defended in planning the same way feature work is defended. Failure mode: foundations work appears on the board only after an incident.
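One way to make the Step 2 rule checkable is a small script run against an export of the sprint board. The sketch below assumes a hypothetical `Item` record with story points and three optional fields; your tracker's field names will differ, and the 20-30% range is simply the one quoted above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Item:
    """One sprint board item (hypothetical export format)."""
    title: str
    points: float
    foundations: bool = False
    owner: Optional[str] = None
    acceptance: Optional[str] = None
    signal: Optional[str] = None  # e.g. "build time", "MTTR delta"


def foundations_report(items: list[Item], low: float = 0.20, high: float = 0.30) -> dict:
    total = sum(i.points for i in items)
    slice_items = [i for i in items if i.foundations]
    share = sum(i.points for i in slice_items) / total if total else 0.0
    # Foundations items missing an owner, an acceptance criterion, or a signal.
    incomplete = [
        i.title for i in slice_items
        if not (i.owner and i.acceptance and i.signal)
    ]
    return {
        "share": round(share, 3),
        "in_range": low <= share <= high,
        "incomplete": incomplete,
    }


if __name__ == "__main__":
    board = [
        Item("Checkout redesign", 32),
        Item("Usage export API", 28),
        Item("Cut CI build time", 12, True, "dana", "p50 build under 8 min", "build time"),
        Item("Flaky test cleanup", 8, True),  # no owner, criterion, or signal
    ]
    print(foundations_report(board))
```

Running it at the start and end of each sprint shows whether the slice was quietly cut mid-sprint.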

Step 3 — Pair every velocity tool with a proportional verification investment

What to do: When you adopt or expand an AI coding tool, allocate 30-50% of the projected velocity gain back into verification: static analysis tuned for the new tool's output patterns, structured prompting templates, mandatory explain-back sections in PR descriptions, contract tests at module boundaries the AI most often crosses incorrectly. The rule of thumb: if your AI tool budget grows by $X this quarter, your verification tooling budget grows by at least $0.30X.

What good looks like: trust in AI-generated PRs measurably rises (you can ask your seniors to rate it monthly). Failure mode: PR throughput rises while an r/ExperiencedDevs-style "the senior engineers do not trust any of this" thread exists inside your own Slack.
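The arithmetic behind the Step 3 rule of thumb is simple enough to write down. The function below is an illustrative sketch: `verification_plan` and its parameter names are made up for this example, and expressing the projected velocity gain in engineer-hours is an assumption, since the text does not fix a unit.

```python
def verification_plan(ai_budget_increase: float,
                      projected_gain_hours: float,
                      reinvest_share: float = 0.30) -> dict:
    """Apply the two rules of thumb from Step 3.

    ai_budget_increase: growth in AI tool spend this quarter ($X).
    projected_gain_hours: projected velocity gain, in engineer-hours (assumed unit).
    reinvest_share: share of that gain put back into verification (0.30 to 0.50).
    """
    if not 0.30 <= reinvest_share <= 0.50:
        raise ValueError("reinvest_share should sit in the 30-50% range")
    return {
        # Verification tooling budget grows by at least $0.30X.
        "min_verification_budget_increase": 0.30 * ai_budget_increase,
        # Hours of the projected gain reserved for verification work.
        "verification_hours": reinvest_share * projected_gain_hours,
    }


if __name__ == "__main__":
    # Made-up inputs: $20,000 more on AI tooling, 120 hours of projected gain.
    print(verification_plan(20_000, 120, reinvest_share=0.40))
```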

Step 4 — Treat your internal platform as a product, not infrastructure

What to do: Pick the three workflows your developers do most often (provisioning a new service, deploying to staging, requesting a feature flag). Measure the time-to-first-success for each, with a new engineer as the unit of measurement. Set a target — for early-stage SaaS, "new engineer ships a real production change inside 5 working days" is a reasonable bar — and instrument it.

What good looks like: developer adoption of internal tools is a tracked metric with a named owner. Failure mode: the platform team builds something technically beautiful that the rest of engineering routes around because nobody onboarded them to it.
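Instrumenting the "5 working days" bar from Step 4 could look like the sketch below. It assumes you can pull, for each new engineer, a start date and the date of their first production change; the function names are hypothetical, and public holidays are ignored for simplicity.

```python
from datetime import date, timedelta
from statistics import median


def working_days_between(start: date, first_success: date) -> int:
    """Count weekdays after `start`, up to and including `first_success`."""
    days = 0
    current = start
    while current < first_success:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            days += 1
    return days


def onboarding_report(records: list[tuple[date, date]], target_days: int = 5) -> dict:
    durations = [working_days_between(start, shipped) for start, shipped in records]
    return {
        "median_days": median(durations),
        # Share of new engineers who shipped inside the target.
        "within_target": sum(d <= target_days for d in durations) / len(durations),
    }


if __name__ == "__main__":
    # Made-up records: (start date, date of first production change).
    print(onboarding_report([
        (date(2026, 3, 2), date(2026, 3, 9)),
        (date(2026, 3, 2), date(2026, 3, 13)),
    ]))
```

The same shape works for the other workflows named above (provisioning a service, deploying to staging, requesting a feature flag) if you swap days for minutes.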

Step 5 — Walk into every executive review with the verification ledger

What to do: Build a one-page artifact: review queue depth (median + p90), verification hours per shipped feature, debt-cleanup hours allocated vs consumed, change failure rate. Bring it to every leadership meeting where someone might compare your timelines to a competitor's launch announcement. The artifact does the arguing for you.

What good looks like: when an executive pushes back on a timeline, you point at the ledger. Failure mode: you defend the timeline with conviction and adjectives, the executive has a number from a podcast, the number wins.
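As a rough sketch, the one-page ledger from Step 5 can be generated from a handful of numbers you already collect. Everything below is illustrative: `build_ledger`, `render`, and the input names are assumptions, and the p90 uses the default interpolation of Python's `statistics.quantiles`, which is one of several reasonable percentile definitions.

```python
from statistics import median, quantiles


def build_ledger(queue_depth_samples, verification_hours, features_shipped,
                 debt_hours_allocated, debt_hours_consumed,
                 deployments, failed_deployments):
    # quantiles(..., n=10) returns nine cut points; the last is the 90th percentile.
    p90 = quantiles(queue_depth_samples, n=10)[-1]
    return {
        "review_queue_median": median(queue_depth_samples),
        "review_queue_p90": p90,
        "verification_hours_per_feature": verification_hours / features_shipped,
        "debt_hours_allocated": debt_hours_allocated,
        "debt_hours_consumed": debt_hours_consumed,
        "change_failure_rate": failed_deployments / deployments,
    }


def render(ledger):
    """Format the ledger as aligned plain-text rows, one metric per line."""
    return "\n".join(f"{name:<32}{value:>10.2f}" for name, value in ledger.items())


if __name__ == "__main__":
    # Made-up inputs: daily review queue depth samples plus quarter totals.
    ledger = build_ledger(
        queue_depth_samples=[2, 3, 3, 4, 5, 6, 8, 9, 12, 20],
        verification_hours=96, features_shipped=8,
        debt_hours_allocated=60, debt_hours_consumed=42,
        deployments=40, failed_deployments=6,
    )
    print(render(ledger))
```

Reporting the median next to the p90 matters because a calm median can hide the handful of PRs that sit in review for a week.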

The diagram below shows how these five steps fit into a recurring weekly cadence:

What to Do This Week

You do not need a quarter to start. Tomorrow morning, pull the DORA metrics for the last 90 days and chart lead time alongside PR creation rate (Step 1). Wednesday, in your next sprint planning, name the foundations slice explicitly with an owner and an acceptance criterion (Step 2). By Friday, draft the one-page verification ledger and walk it into your next executive sync (Step 5). Steps 3 and 4 are the work of the next two quarters, but they only get traction if Steps 1, 2, and 5 are already on the wall.

The 30-minute artifact you can produce today: open a doc, list every AI coding tool your team has adopted in the last 12 months, and next to each one write the verification investment you made at the same time. If the right column is mostly blank, you have your starting point. The conversation that started this article, in which the executives looked at the engineering lead as if the lead were crazy, does not get won by arguing harder. It gets won by changing what is on the wall.

If you cannot, in numbers, tell your CEO what your team's lead time for changes was last week and how it has trended over the last 90 days, that is the actual emergency — not the timeline gap with whatever your competitor announced on LinkedIn.

Diagnostic Checklist: Where Your Foundations Stand This Quarter

Run these against your own organization. Each "yes" is a flag, not a failure. Score: 0-2 yes flags = healthy, 3-4 = at risk, 5+ = the foundations debt is already accruing interest faster than you are paying it down.

Has your PR creation rate grown by more than 30% in the last 6 months while lead time for changes has stayed flat or worsened? Yes / No

In your last sprint, was the foundations work line item either absent, unowned, or cut mid-sprint to make room for feature work? Yes / No

If a senior engineer left tomorrow, would the median PR review time on your team measurably increase? Yes / No

Has any AI coding tool been adopted in the last 12 months without a corresponding budget line for verification tooling, static analysis tuning, or review-time investment? Yes / No

Does a new engineer joining your team need more than 5 working days, with hand-holding, to ship their first production change? Yes / No

In your last executive review, did you defend a timeline with conviction and adjectives rather than with a one-page ledger of verification load and review queue depth? Yes / No

Has your on-call rotation gotten louder, longer, or harder to staff since you expanded AI tooling adoption? Yes / No
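If you want to run the checklist across several teams, the scoring rule is easy to encode. This sketch assumes the seven answers are recorded as booleans in the order the questions appear; the function name and band labels are shorthand for the bands described above.

```python
def score_checklist(answers: list[bool]) -> tuple[int, str]:
    """Count "yes" flags and map them to the bands from the checklist intro."""
    flags = sum(answers)
    if flags <= 2:
        band = "healthy"
    elif flags <= 4:
        band = "at risk"
    else:
        band = "debt accruing faster than paydown"
    return flags, band


if __name__ == "__main__":
    # Made-up answers to the seven questions, in order.
    print(score_checklist([True, False, True, True, False, False, False]))
```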

Need a sharper picture of where your foundations debt is concentrated?

Talk to our team about a one-week engineering audit that produces the verification ledger, the lead-time chart, and the foundations-capacity model your next executive review needs.
