AI agent frameworks solve real problems. They can help teams manage orchestration, state, tool use, and multi-step execution far faster than building everything from scratch. But in the wrong workflow, they can also introduce more complexity than value. A company that applies an orchestration-heavy stack to a narrow task may end up with slower delivery and a system that is harder to control than the problem ever required.

That is why choosing an AI agent framework is a system design decision tied to the business task itself: what the system is expected to do, how much variation the workflow contains, how reliable the outcome must be, and what happens when the system gets it wrong. The right stack depends more on whether the use case calls for lightweight automation, structured orchestration, human oversight, or production-grade control.

This article is designed to help decision-makers make that choice in the right order. Start with the use case, the workflow complexity, the risk profile, and the operational requirements. Then determine what kind of architecture is needed. Only after that, choose the framework and supporting tools that fit the system you are actually trying to build.

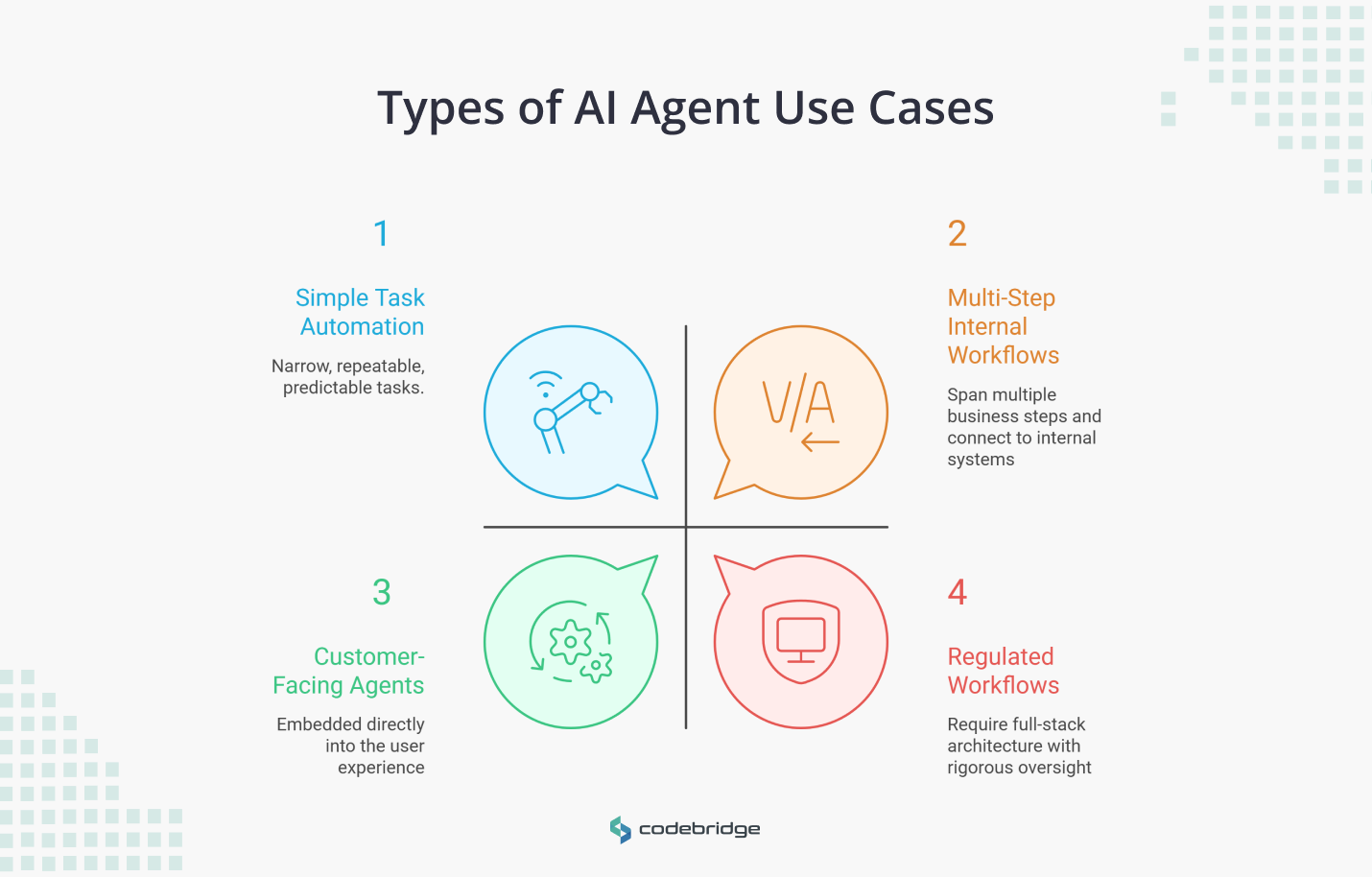

The 4 Types of AI Agent Use Cases

Before comparing frameworks, it helps to classify the type of system being built. That step is easy to skip, and it is where many framework decisions start going wrong. Teams often evaluate agent stacks as if they were interchangeable developer tools, when in practice they support very different operating models.

A narrow automation task, an internal multi-step workflow, a customer-facing assistant, and a regulated decision-support process do not create the same architectural demands. They differ in autonomy, state, orchestration, safety requirements, and the cost of failure.

Official guidance from Anthropic and OpenAI also points in this direction: start with the workflow pattern, then add complexity only where the use case actually needs it.

1. Simple Task Automation

These involve narrow, repeatable tasks such as data extraction, summarization, or structured drafting. These use cases have low autonomy requirements and follow predictable paths. In many cases, simple patterns are enough, and a heavy framework adds more complexity than value.

2. Multi-Step Internal Workflows

These are systems that span multiple business steps, maintain state across interactions, and connect to internal systems like CRMs or reporting pipelines. Examples include support triage and automated reporting. Here, orchestration starts to matter because the challenge is not just generating output, but managing process flow reliably.

3. Customer-Facing AI Agents

These systems are embedded directly into the user experience, such as copilots inside SaaS products or guided support assistants. They require high levels of predictability and sophisticated safety logic to protect brand integrity. Failures here affect product quality and brand trust, not just internal efficiency.

4. High-Risk or Regulated Workflows

Used in finance, healthcare, or legal compliance, these systems generate outputs that can affect sensitive decisions or user rights. They require full-stack architecture with rigorous oversight and auditability.

This decision map helps separate use cases that need lightweight execution from those that require orchestration or regulated system controls.

How AI Agent Systems Are Structured

Once the business use case is clear, the question becomes: what parts of the system are actually required to make it work reliably in production?

In practice, most AI agent systems are built from six core building blocks.

Not every use case activates these layers equally. A simple task automation may need only strong reasoning and basic evaluation. A high-risk or regulated workflow requires the deepest guardrails, oversight, and auditability. That is why one framework rarely solves the whole problem. The real task is to identify which layers your use case depends on, then choose a framework and supporting tools that fit that architecture.

Use Case 1: Simple Task Automation

What you are really building: A single-step task where the model receives an input, follows a clear instruction, and produces a structured output. The workflow is predictable, the scope is narrow, and there is little or no need for the system to make decisions across multiple steps.

The stack you need: You primarily need the development layer. In practice, this means a well-designed prompt, a structured output format, and — if needed — one or two tool calls through the model's native API. No orchestration, no persistent state, no multi-agent coordination. At this stage, optimizing single LLM calls with in-context examples is often sufficient.

Frameworks that fit: Anthropic's native tool-use and structured outputs, or the OpenAI Assistants SDK, are well-suited here. They provide the foundational components, such as prompt templates and tool wrappers, needed for rapid prototyping. A framework becomes worthwhile only when you find yourself rebuilding the same scaffolding repeatedly across multiple simple tasks.

Where Teams Get Stuck: The most common mistake at this level is reaching for an orchestration framework before the task needs one. A team building a document summarizer does not need a multi-agent graph — but it is easy to adopt one early because the framework's abstractions feel productive during prototyping. The cost shows up later in added latency on every call and debugging complexity that is disproportionate to what the system actually does.

The other failure pattern is skipping evaluation entirely because the task seems too simple to warrant it. Even a single-step automation benefits from a basic output quality check, especially if it runs at volume.

Practical Takeaway: Start with the model's native API and add tooling only when a clear, repeated need emerges. If the task is running at scale, invest early in a lightweight evaluation check to catch drift before it compounds.

Use Case 2: Multi-Step Internal Workflows

What you are really building: A system where an incoming request triggers a sequence of actions, such as retrieving data from one system, transforming it, making a decision, writing the result to another system, and the agent needs to track where it is in that sequence. These systems move beyond chaining prompts into true orchestration.

The stack you need: The core challenge is ensuring that context survives between steps, that failures at step three don't silently corrupt step five, and that the system can resume or retry without starting over. You need both a development layer and a robust orchestration layer to manage handoffs and state transitions between different tasks.

Frameworks that fit:

- LangGraph: Ideal for complex, long-running workflows that require persistent state management and deterministic task execution via Directed Acyclic Graphs (DAGs).

- CrewAI: Fits when the workflow is better modeled as role-based task delegation. For example, one agent gathers data, another analyzes it, and a third formats the output.

Where teams get stuck: The system works in testing but breaks unpredictably in production because edge cases were never surfaced. A support triage agent that handles the five most common ticket types flawlessly may silently misroute the sixth.

The second pattern is poor recovery — when a step fails midway through a long workflow, teams discover they have no mechanism to resume from that point and must restart the entire sequence.

Practical Takeaway: Define what happens when a step fails, when context is ambiguous, and when the agent encounters a case it was not designed for. Build retry and human escalation logic into the orchestration layer from the start, not after the first production incident.

Use Case 3: Customer-Facing AI Agents

What you are really building: A system where the end user is your customer, not your employee. The inputs are unpredictable, the tolerance for bad outputs is low, and failures are not caught internally — they are experienced directly by the people your business serves.

This changes the quality bar. A customer-facing agent who gives a wrong answer creates a support escalation or may erode trust in the product.

The stack you need: You need orchestration for flow control, but the critical layer at this tier is evaluation. You should also trace the full decision path the agent took to get to the final output. If a support copilot gives the right answer but retrieved it from the wrong source, that is a latent failure that will surface in a different conversation. Production monitoring, regression testing against known scenarios, and real-time quality scoring become essential.

Frameworks that fit:

- LangGraph: Provides the flow control necessary for predictable user interactions.

- LangSmith: Essential for production monitoring, offline/online evaluation, and regression testing (critical for catching regressions before users do).

Where teams get stuck: Companies launch without an evaluation pipeline and rely on user complaints as the quality signal. By the time a pattern of bad responses surfaces through support tickets or churn data, the damage is already done.

The second pattern is over-trusting retrieval. Teams build RAG-powered copilots, verify that retrieval works on a test set, and ship. Then, companies find that in production, the agent confidently presents information from marginally relevant documents.

The third and most subtle problem is inconsistency. The agent gives a good answer to a question on Monday and a different answer to the same question on Thursday. Without regression testing against a stable set of known inputs, this kind of drift is invisible until a customer notices.

Practical Takeaway: Treat evaluation as a product feature. Before deploying, build a baseline test set of realistic customer inputs with expected outputs, and run it on every model or prompt change. In production, log every agent decision path. Not just final responses, so that when quality degrades, you can diagnose where in the chain the failure started.

Use Case 4: High-Risk or Regulated Workflows

What you are really building: A system where errors have significant financial, legal, or ethical consequences. Your organization is accountable for those decisions, regardless of whether a human or an agent made them. These systems must recognize their own limits and proactively transfer control to human users when a workflow fails or encounters high-stakes decisions.

The stack you need: You need everything from the previous tiers — orchestration, evaluation, monitoring — plus a governance layer that most frameworks do not provide out of the box. This means granular access controls over what the agent can and cannot do, immutable logging of every decision and data access, and clearly defined escalation thresholds where the system stops and hands control to a human.

Frameworks that fit:

- Semantic Kernel (Microsoft): designed for enterprise integration, supports .NET and Python, has built-in planner/orchestration patterns, and gives teams fine-grained control over every step of the agent's execution.

- Custom Infrastructure: Organizations often build custom "supervision" layers to provide audit trails, access controls, and real-time enforcement of safety constraints that off-the-shelf frameworks may lack.

Where teams get stuck: Businesses don’t treat governance as an architectural layer. Teams add logging and access controls after the agent is already built, then discover that the execution flow was never designed to produce the data those controls need. Audit trails that capture final outputs but not intermediate reasoning steps are insufficient when a regulator asks why a specific recommendation was made.

The second pattern is assuming that a framework's built-in guardrails satisfy regulatory requirements. They rarely do. Regulatory compliance is domain-specific, jurisdiction-specific, and evolving — it requires custom policy logic that lives outside the framework.

Practical Takeaway: Design the oversight system before the agent. Define what decisions require human approval, what data must be logged, and what conditions trigger an automatic halt — then build the agent within those constraints. Treat the governance layer as the product, and the agent as a component operating inside it.

A Practical Executive Model for Selection

Everything above leads to one decision view. The matrix below maps use case complexity to the architecture, frameworks, risks, and oversight each tier demands. Start with your row. Read across.

Conclusion

There is no single best framework, but there is a wrong way to choose one. Teams that start with the tool and work backward toward the problem end up rebuilding six months later. Teams that start with the workflow, classify the risk, and map the required architecture build systems that hold up when the use case scales or the model changes.

The framework is the most replaceable part of the stack. The decisions you make about orchestration, evaluation, and oversight are not. Get those right, and the framework choice becomes straightforward.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript