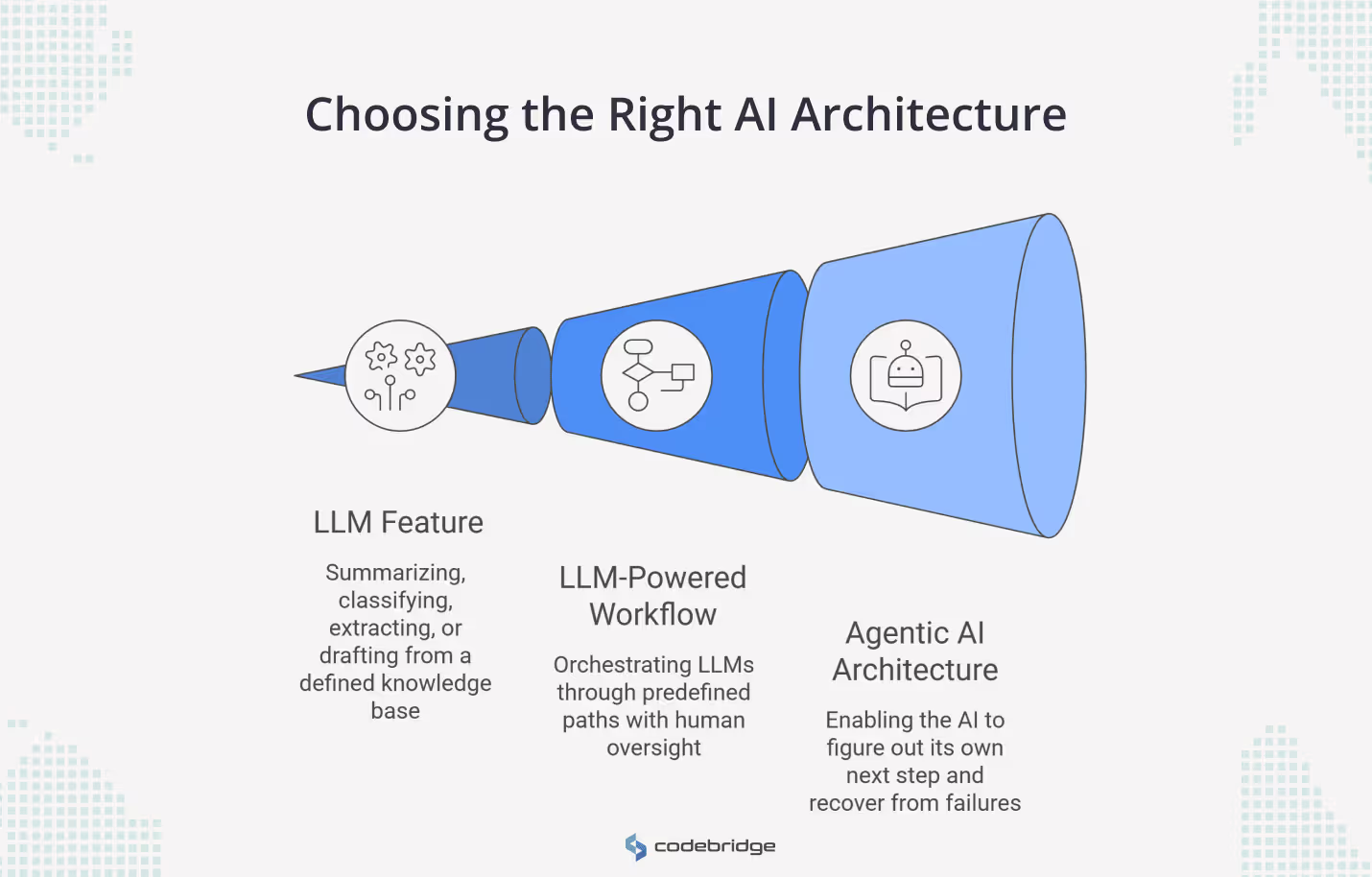

You probably see this choice as binary: add an LLM feature or build an agent. But an LLM is a model. An agent is the architecture around it. Tools, memory, orchestration, control flow, safeguards. Two different categories of things.

So the question you should ask about your roadmap is narrower than "LLM or agent." Ask whether your product needs better reasoning inside a single interaction, or whether it needs a system that can chase an outcome across multiple steps and changing conditions.

OpenAI and Anthropic both draw this line. OpenAI describes agents as systems that accomplish tasks on a user's behalf using tools and guardrails. Anthropic separates structured workflows from autonomous agents.

We work with technical founders and engineering leaders who face this decision on real product timelines with real constraints. Below, we break down when a plain LLM feature is enough, when a workflow gives you more without the overhead, and when an agent architecture is worth the operational cost.

How to Choose the Right AI Architecture for Your Product

You do not always need an agent. Sometimes, you need the simplest architecture that reliably delivers the outcome your users care about. For most teams we work with, that means a single well-prompted LLM call with retrieval, clear instructions, and structured outputs. No framework. No orchestration layer. Just a model doing one job well.

Anthropic and OpenAI both say this, by the way. Anthropic found that their most successful builder teams rely on simple, composable patterns rather than agent frameworks. OpenAI recommends starting with clear use cases and predictable boundaries before adding autonomy. These are the companies building the models, and they are telling you to slow down.

When an LLM Feature Is Enough for a Product Roadmap

If your product needs to summarize, classify, extract, or draft from a defined knowledge base, you probably need an LLM feature, not an agent. The model handles the language. Your application controls what happens before and after. That split is the whole point.

Think about what this looks like in practice. A support tool where your team reviews and sends AI-drafted responses. A document pipeline that extracts fields and feeds them into a rules engine. An internal search interface where employees ask questions against approved sources. In each case, you get real value from the model without giving it any autonomy. Your code still decides what runs, when, and in what order.

Foundation model providers call this an "augmented LLM," and the label matters. Plugging in retrieval or a tool call does not make your product an agent. It makes it an LLM feature with better inputs. Plenty of the systems we build at Codebridge sit right here, and they ship faster, cost less to operate, and break in predictable ways. That last part matters more than most teams realize.
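
The split described in this section can be sketched in a few lines, assuming a support-ticket use case. `fake_model` is a hypothetical stand-in for whatever model API you call, and the label set and field names are invented for illustration. The point is only that your code bounds the input, makes one model call, and checks the output before anything else happens.

```python
import json
from typing import Callable

# Hypothetical label set; your product would define its own.
ALLOWED_LABELS = {"billing", "technical", "other"}


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call (assumption). Returns a JSON string."""
    label = "billing" if "invoice" in prompt.lower() else "other"
    return json.dumps({"label": label, "draft": "Thanks for reaching out."})


def classify_and_draft(ticket_text: str,
                       model: Callable[[str], str] = fake_model) -> dict:
    # Before the model: the application validates and bounds the input.
    text = ticket_text.strip()
    if not text:
        raise ValueError("empty ticket")
    prompt = ("Classify this support ticket and draft a reply as JSON.\n"
              f"Ticket: {text[:2000]}")

    # The model handles the language, in exactly one call.
    raw = model(prompt)

    # After the model: the application checks the structured output.
    result = json.loads(raw)
    if result.get("label") not in ALLOWED_LABELS:
        result["label"] = "other"
    # A person still reviews the draft before it is sent.
    result["needs_human_review"] = True
    return result
```

Swapping `fake_model` for a real client changes one argument. The control flow around it stays in your code.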

When to Use an LLM-Powered Workflow Instead of an AI Agent

For many of the products we build, this is the right stopping point. Not an LLM feature. Not an agent. A workflow where the process is defined in advance, but one or more steps use an LLM to make a judgment call.

You know the path. Your code defines the sequence, the branching logic, and the handoffs. The model shows up at specific steps to do specific work: score this ticket, summarize this document, flag this clause. Then your system takes over again and moves to the next step.

Workflows orchestrate LLMs through predefined paths. Agents direct their own paths. If you can draw your process on a whiteboard before you write any code, you have a simple workflow.

The reason this matters: you keep control. Your team can add model-powered judgment to document review, onboarding, support routing, compliance queues, without handing the system permission to decide what happens next. We see technical founders reach for agent architecture when a workflow would give them 90% of the value at a fraction of the operational cost. Test your process on a whiteboard first. If the steps are stable, you need a workflow.
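
A minimal sketch of that shape, assuming a support-routing process: the sequence and the branch live in ordinary code, and the model is consulted at one step only. `fake_score_ticket`, the 0.7 threshold, and the step names are hypothetical and exist only to make the example run.

```python
from typing import Callable


def fake_score_ticket(text: str) -> float:
    """Stand-in for a model call (assumption): urgency score in [0, 1]."""
    return 0.9 if "outage" in text.lower() else 0.2


def route_ticket(text: str,
                 score: Callable[[str], float] = fake_score_ticket) -> list[str]:
    # The path is fixed at design time; this list records the steps taken.
    steps = ["received"]

    # The one step where the model makes a judgment call.
    urgency = score(text)
    steps.append("scored")

    # Branching logic stays in code, not in the model.
    if urgency >= 0.7:
        steps.append("escalated_to_on_call")
    else:
        steps.append("queued_for_support")

    steps.append("logged")
    return steps
```

Every possible path through `route_ticket` is visible in the source, which is what the whiteboard test is checking for.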

When Your Product Actually Needs an Agentic AI Architecture

You need an agent when your product has to figure out its own next step. Not retrieve a result. Not follow a sequence. Figure out what to do, pick a tool, try it, and recover when the first attempt fails.

OpenAI's practical guide puts it in concrete terms: agents fit problems that involve complex decision-making, unstructured data, or rule-based systems too brittle to maintain. The common thread is that you cannot draw the process on a whiteboard ahead of time. The steps depend on what the system finds along the way.

Picture a product that investigates operational exceptions. It pulls context from three different systems, tests a hypothesis, hits a dead end, tries a second path, and escalates to a human only when it has enough information to make that escalation useful. You cannot hard-code that sequence because the sequence changes with every exception. That is agent territory.
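
For contrast, here is a toy version of that investigation loop. Everything in it is an assumption made for illustration: the two tools, their canned results, and `fake_policy`, which stands in for the model deciding what to do next. What it shows is the structural difference: the next step is chosen at runtime from what has been observed so far, under a step budget.

```python
from typing import Callable


def check_orders(state: dict) -> str:
    return "no anomaly"  # a dead end in this toy scenario


def check_payments(state: dict) -> str:
    return "duplicate charge found"


# Hypothetical tools the agent may choose between.
TOOLS: dict[str, Callable[[dict], str]] = {
    "check_orders": check_orders,
    "check_payments": check_payments,
}


def fake_policy(state: dict) -> str:
    """Stand-in for the model's decision: a tool name or 'escalate'."""
    if any("found" in obs for _, obs in state["history"]):
        return "escalate"  # enough information to make escalation useful
    tried = {name for name, _ in state["history"]}
    for name in TOOLS:
        if name not in tried:
            return name  # try a path that has not been explored yet
    return "escalate"


def investigate(exception_id: str,
                policy: Callable[[dict], str] = fake_policy,
                max_steps: int = 5) -> dict:
    state = {"exception_id": exception_id, "history": []}
    for _ in range(max_steps):  # hard budget so the loop always ends
        action = policy(state)
        if action not in TOOLS:  # 'escalate' or anything unrecognized
            break
        observation = TOOLS[action](state)
        state["history"].append((action, observation))
    state["status"] = "escalated_to_human"
    return state
```

Nothing in `investigate` fixes the order of tool calls. The order comes from the policy and the observations, which is why it cannot be drawn in advance.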

Use a blunt test. If you can spec the happy path and the three most common failure paths in a flowchart, build a workflow. If the paths depend on runtime context that you cannot anticipate at design time, you are looking at an agent. Most teams get to this point later than they expect.

What Changes When You Move From an LLM Feature to an AI Agent

When you move from an LLM feature to an agent, you change your operating model. Most teams plan for the capability. Few plan for what comes with it.

With an LLM feature, you evaluate whether the model's output is good enough. With an agent, you are responsible for a longer list. What can this system access? Which actions need a human to approve them? How do you detect when it fails silently? What do you log, and who reviews the logs? How does the system recover when it picks the wrong path halfway through a task?

These are operations questions, not model questions. Many teams recommend human-in-the-loop checkpoints for high-stakes actions and repeated failures. NIST's AI Risk Management Framework goes further, saying that trustworthy AI depends on governance, measurement, and risk management across the full system lifecycle. Both are saying the same thing. The model is the smallest part of your problem once you give it the ability to act.

We tell clients this early because the teams that struggle with agents are rarely struggling with the model. They are struggling with permissions, observability, and failure recovery. If you are not ready to invest in those three things, you are not ready for agents.

AI Agent Risks: What Expands With More Autonomy

Every tool you give an agent is an attack surface. Every permission is a trust decision. This is not theoretical risk management. NIST's recent work on securing agent systems names the specific threats: identity spoofing, authorization failures, indirect prompt injection, and gaps in audit trails. These problems do not exist when your LLM feature drafts a summary inside a sandbox. They show up the moment your system can read a database, call an API, or send a message on a user's behalf.

So if you choose an agent architecture, you are also choosing to build the discipline around it. You need to define which tools the agent can reach and under what conditions. You need approval gates for destructive or irreversible actions. You need logging that captures the agent's reasoning chain, not just its outputs. And you need a plan for what happens when the agent encounters a prompt injection attempt or a context window that has been quietly corrupted.
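
Three of those controls (a tool allowlist, an approval gate for destructive actions, and a log that records the stated reasoning) can be sketched as a single wrapper around tool calls. The tool names, the `approver` callback, and the in-memory `AUDIT_LOG` are hypothetical. A real system would persist the log and wire approval to a person or a policy service.

```python
from typing import Callable, Optional

# In-memory audit trail for illustration only.
AUDIT_LOG: list[dict] = []


def read_record(record_id: str) -> str:
    return f"record {record_id}"


def delete_record(record_id: str) -> str:
    return f"deleted {record_id}"


# Allowlist: tool name -> (function, is_destructive).
TOOLS = {
    "read_record": (read_record, False),
    "delete_record": (delete_record, True),
}


def call_tool(name: str, arg: str, reasoning: str,
              approver: Optional[Callable[[str, str], bool]] = None) -> str:
    # Log the attempt and the agent's stated reasoning before acting.
    entry = {"tool": name, "arg": arg, "reasoning": reasoning, "outcome": None}
    AUDIT_LOG.append(entry)

    # Only allowlisted tools are reachable.
    if name not in TOOLS:
        entry["outcome"] = "denied: unknown tool"
        raise PermissionError(f"tool not allowed: {name}")

    func, destructive = TOOLS[name]

    # Destructive actions need explicit approval.
    if destructive and not (approver is not None and approver(name, arg)):
        entry["outcome"] = "denied: approval required"
        raise PermissionError(f"approval required for: {name}")

    result = func(arg)
    entry["outcome"] = "ok"
    return result
```

Denied calls are logged too, so the audit trail shows what the agent tried to do and not only what it was allowed to do.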

Teams often build impressive agent demos, then stall for months on these exact problems. The model worked. The operations around it did not. Build the guardrails into your architecture from day one, or plan to rebuild later at three times the cost.

A Simple Roadmap Test

Forget the labels. Look at what your product needs to do, then match the architecture to the behavior.

Use an LLM feature when your product needs to summarize, classify, extract, draft, or answer questions inside a single bounded interaction. Your code controls the flow. The model handles the language. Ship it, monitor output quality, iterate on prompts.

Use a workflow when your business process has defined steps, but one or more of those steps need model judgment. You can draw it on a whiteboard. You can spec the inputs and outputs for each stage. The model shows up where you tell it to, does its work, and hands control back.

Use an agent when the system must decide its own next step, choose between tools based on what it finds, maintain state across attempts, and recover from failure without a human stepping in. You cannot draw this process in advance because the path depends on the runtime context.
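
The three rules above reduce to two yes-or-no questions, which makes them easy to write down as a function. This is a deliberate simplification, and the parameter names are ours, not an established taxonomy.

```python
from enum import Enum


class Architecture(Enum):
    LLM_FEATURE = "llm_feature"
    WORKFLOW = "workflow"
    AGENT = "agent"


def choose_architecture(single_bounded_interaction: bool,
                        steps_known_at_design_time: bool) -> Architecture:
    # One bounded interaction: the model handles language, code controls flow.
    if single_bounded_interaction:
        return Architecture.LLM_FEATURE
    # Multiple steps you can draw on a whiteboard: predefined path.
    if steps_known_at_design_time:
        return Architecture.WORKFLOW
    # The path depends on runtime context: the system picks its own next step.
    return Architecture.AGENT
```

The order of the checks encodes the advice in this article: the function returns the simplest option that fits and reaches the agent branch last.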

Start simple. Add autonomy only when the use case forces your hand. At Codebridge, we pressure-test this decision with every client before a single line of architecture gets written. The cheapest agent is the one you did not build when a workflow would have worked.

Conclusion

Most products do not need an agent. They need a well-built LLM feature or a workflow that puts model judgment in the right places. The architecture that wins is the one that delivers value to users without dragging in operational complexity you have not staffed for.

Start there. Prove that the LLM feature moves a metric that your users or your business care about. When you hit a wall because the process requires decisions your code cannot anticipate, promote the system to an agent. Not before.

The three tiers are not a maturity ladder. An LLM feature is not a lesser version of an agent. A workflow is not a stepping stone. Each one is the correct architecture for a different kind of problem. The mistake is picking the tier based on what sounds ambitious instead of what the product behavior demands.

Get the behavior right first. The architecture follows.
