Most agentic AI deployments in manufacturing that survive past pilot are narrow. They sit above MES and ERP, generate recommendations and work orders for human approval, and never write to control systems. Market data shows that predictive-maintenance agents account for 38% of agentic AI deployments in manufacturing today, and supply-chain optimization is the fastest-growing application at a projected 30% CAGR through 2030. Adoption is concentrating on specific workflow types, not on broad autonomy.

That concentration isn't a transitional state on the way to autonomous factories. It is the production-grade form of the technology, and most of the work in adopting it is deciding which workflows belong inside that pattern. The decision turns on whether a specific workflow is cross-system and exception-heavy enough to justify the integration and oversight cost an agent introduces. Most workflows aren't. Putting an agent on a workflow that doesn't qualify creates fragile architecture, integration debt the operations team inherits, and a probabilistic system sitting too close to deterministic safety logic.

Identifying which workflows belong in the narrow band is most of the strategy. The rest is architecture, governance, and the operational discipline to keep the agent above the deterministic layer where it belongs.

What Agentic AI Means in Manufacturing

An agentic system in manufacturing is a context-aware AI system that monitors production signals, interprets operational conditions, decides the next appropriate step, and acts across manufacturing software systems such as MES, CMMS, ERP, quality platforms, and scheduling tools.

The key difference from a dashboard or analytics tool is the willingness to operate across systems that don't share a data model, and to handle exceptions that weren't enumerated when the workflow was designed.

For a technical buyer evaluating where agents fit, it is necessary to distinguish them from existing technologies. Agentic AI is not:

- A Dashboard: It does not merely visualize data; it initiates responses to it.

- A Point Predictive Model: While a model might predict a bearing failure, an agent coordinates the spare parts query, technician scheduling, and production re-routing.

- A Standard Chatbot: It does not simply retrieve information from manuals; it interacts with system APIs to change the state of the workflow.

- RPA (Robotic Process Automation): RPA follows deterministic "if-this-then-that" rules. Agentic AI uses reasoning to handle operational exceptions where rules are not predefined.

The value of an agent is found in its ability to coordinate action across siloed systems. In manufacturing, this typically manifests as Large Language Model (LLM)-based agents that interpret natural-language goals, query legacy databases, and generate executable work orders or schedule changes.

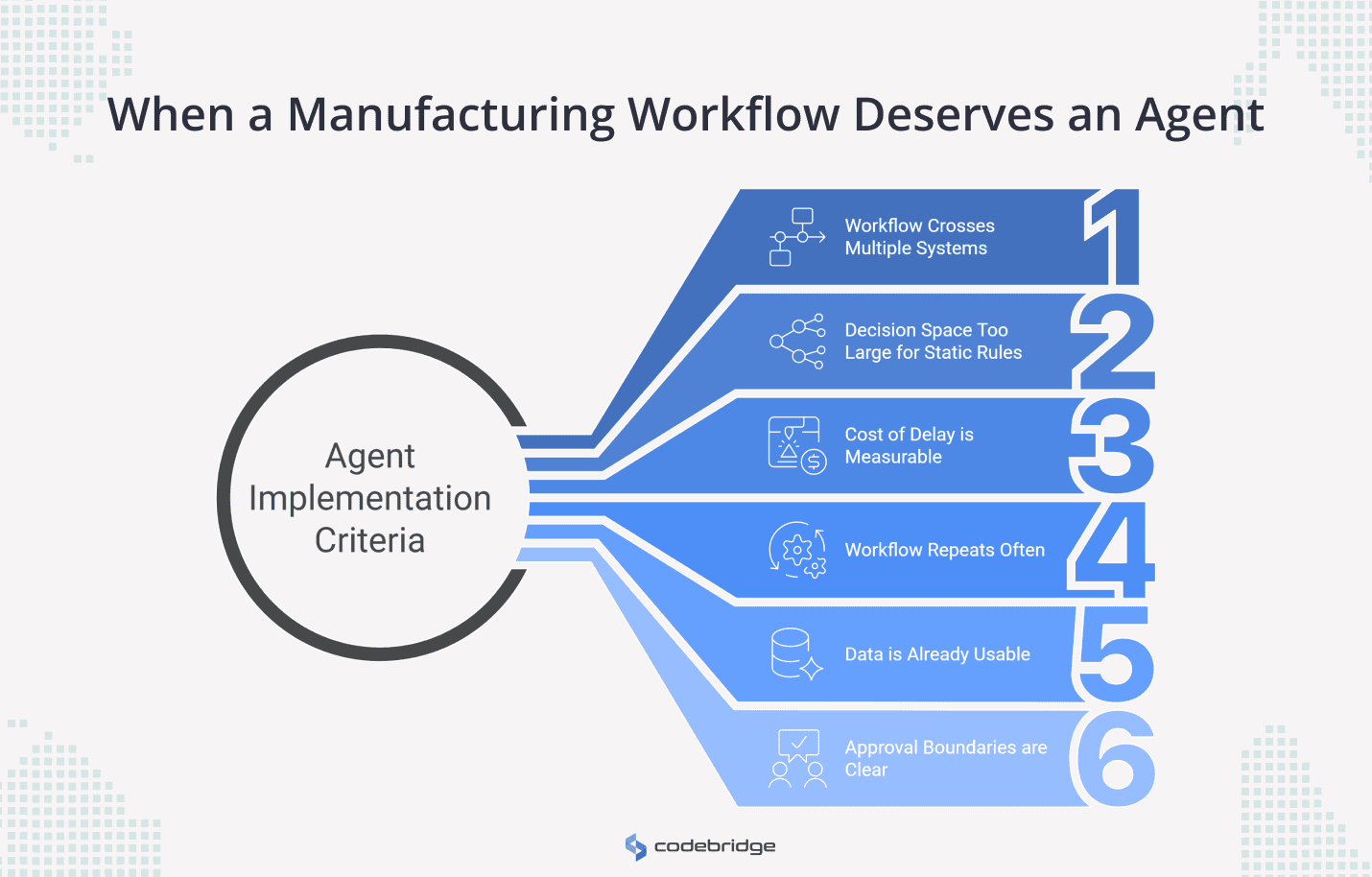

When a Manufacturing Workflow Deserves an Agent

Six conditions separate workflows that justify an agent from workflows that don't. A candidate workflow needs to pass all six. Failing any one of them usually means the engineering cost outweighs the operational return, or that a script, an RPA bot, or a dashboard does the job better.

1. The workflow crosses multiple systems

If the decision can be made by reading one system, an agent is overkill. The case for an agent starts when a single decision depends on machine status from the MES, the production schedule, current inventory in the ERP, and supplier availability at the same time. Coordination across systems that don't share a data model is what an agent is for.

2. The decision space is too large for static rules

A good outcome has to be definable: maximize OEE while meeting a customer deadline, or minimize changeover cost while clearing a backlog. The path to that outcome shouldn't be. If the constraints change with every shift and the combinations of valid actions can't be enumerated in advance, static rules will either miss cases or become unmaintainable. That's the operational gap an agent fills.

3. The cost of delay is measurable

The integration cost of an agent is justified when slow response carries a real price: unplanned downtime, scrap from quality drift, and expedited shipping when a planner reacts late. If the workflow runs on a weekly cadence and a few hours of latency don't matter, the agent isn't earning back its build cost.

4. The workflow repeats often

Agents are expensive to integrate, prompt-engineer, and govern. That cost amortizes only over recurring exceptions. One-off strategic decisions are still better handled by human analysts. Recurring operational exceptions like disruption response, maintenance triage, and quality investigation are where agents pay back.

5. The data is already usable

"People archaeology" is the practice of finding the one veteran engineer who knows where a particular dataset lives; it is the most common reason agentic projects stall. If the plant doesn't have standardized asset IDs, clean event logs, and digitized workflow systems, the first project isn't an agent. It's the data infrastructure that makes an agent possible.

6. Approval boundaries are clear

The organization has to be able to state, before the pilot starts, which actions the agent executes autonomously and which require human sign-off. Pilots stall when this isn't decided in advance: the agent generates recommendations, no one is authorized to act on them, and the system produces volume without producing decisions.

The six tests sort use cases by where agents fit cleanly, where they fit with caveats, and where the architecture argues against them.
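The six tests above can be written down as a simple all-or-nothing filter. The sketch below is illustrative only: the class and field names are assumptions made for this example, and the yes/no answers still have to come from people who know the workflow.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class WorkflowAssessment:
    """One yes/no answer per test. Field names are hypothetical."""
    crosses_multiple_systems: bool
    too_large_for_static_rules: bool
    delay_cost_measurable: bool
    repeats_often: bool
    data_already_usable: bool
    approval_boundaries_clear: bool


def failed_tests(assessment: WorkflowAssessment) -> list[str]:
    """Return the names of the tests the workflow fails, in declaration order."""
    return [f.name for f in fields(assessment) if not getattr(assessment, f.name)]


def deserves_agent(assessment: WorkflowAssessment) -> bool:
    """A workflow qualifies only if it passes all six tests."""
    return not failed_tests(assessment)


# Example: auto-filling an invoice from a purchase order (single system, clear rules).
invoice_autofill = WorkflowAssessment(
    crosses_multiple_systems=False,
    too_large_for_static_rules=False,
    delay_cost_measurable=True,
    repeats_often=True,
    data_already_usable=True,
    approval_boundaries_clear=True,
)
print(deserves_agent(invoice_autofill))
print(failed_tests(invoice_autofill))
```

Returning the list of failed tests, not just a boolean, keeps the conversation on what would have to change before the workflow qualifies.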

Four Agentic AI Use Cases in Manufacturing That Justify the Complexity

In Codebridge's agentic AI work with manufacturing clients, four workflows clear all six tests cleanly. These are the use cases where the technology is earning its place in production environments today.

1. Production Disruption Response

Disruptions are inherently cross-system and time-sensitive. A delay on a single assembly line can cascade into labor misallocation, missed delivery windows, and inventory pileups.

The most concrete production deployment in this category today is Bosch's Shopfloor Agent, running across multiple Bosch plants, including the Bamberg site in Germany. The agent ingests production and machine data, analyzes error patterns and logs during breakdowns, generates hypotheses about root causes, proposes specific corrective actions, and supports documentation of the fix.

Operators on the line execute the actions; the agent handles the reasoning, search, and write-up steps that previously took engineers hours of manual work. Bosch reports annual savings of around €850,000 per plant from reduced downtime, and has offered the technology commercially to external manufacturing customers since late 2025, a credibility signal that the internal results held up to commercial scrutiny.

The pattern generalizes beyond troubleshooting. An agent reading disruption signals from machine data and shift reports, checking WIP status, and evaluating alternative routings produces a recovery plan in minutes rather than the hours it takes a planner to assemble manually. Customer delivery commitments and any safety-related calls still go to a human.

2. Predictive Maintenance and Work-Order Orchestration

Predictive maintenance (PdM) identifies when a failure might occur, but it does not manage the response. The work between the alert and a closed-out repair is where the operational value sits: checking the ERP for the right replacement part in stock, finding a technician with the certification and an open shift slot, scheduling the line stop so it lands at a changeover rather than mid-run. That's also what tends to get dropped when the alert goes to an inbox.

Siemens' Senseye Predictive Maintenance with the Maintenance Copilot interface, running at Sachsenmilch Leppersdorf in Germany, is a production example of this pattern. The system ingests machine condition data and operations logs, learns from sensor inputs to forecast failures, and surfaces recommendations through a generative-AI interface that maintenance teams query in natural language. Sachsenmilch is now extending the deployment to integrate directly with SAP Plant Maintenance, so that maintenance notifications generated by the AI transfer automatically into the work-order system. The agent doesn't write to SAP without authorization: the integration creates draft notifications that the maintenance lead reviews and releases.

Siemens doesn't publish plant-level financial savings, but the operational pattern is well-quantified at the industry level. Deloitte Analytics Institute reports that AI-driven predictive maintenance enables up to 25% reduction in maintenance costs and 10–20% uptime improvements when the alert pipeline is connected to work-order generation rather than to dashboards. The connection is what produces the gain. Alerts without orchestration are just faster ways to discover that something is broken.
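The orchestration step between an alert and a repair can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how Senseye or SAP Plant Maintenance work: the data classes, the part number `BRG-200`, the technician names, and the in-memory stock and shift data are all hypothetical stand-ins for ERP and scheduling queries.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Technician:
    name: str
    certifications: frozenset[str]
    free_from: datetime  # earliest time the technician has an open slot


@dataclass(frozen=True)
class DraftWorkOrder:
    asset_id: str
    part_number: str
    technician: str
    line_stop_at: datetime
    status: str = "DRAFT"  # a maintenance lead releases it; this sketch never does


def draft_work_order(
    asset_id: str,
    part_number: str,
    required_cert: str,
    stock: dict[str, int],
    technicians: list[Technician],
    changeovers: list[datetime],
) -> Optional[DraftWorkOrder]:
    """Return a draft for the earliest workable changeover, or None to escalate."""
    if stock.get(part_number, 0) < 1:
        return None  # no part in stock: a human decides whether to expedite
    qualified = [t for t in technicians if required_cert in t.certifications]
    for slot in sorted(changeovers):
        for tech in qualified:
            if tech.free_from <= slot:
                return DraftWorkOrder(asset_id, part_number, tech.name, slot)
    return None  # no certified technician free at any changeover


order = draft_work_order(
    asset_id="4471",
    part_number="BRG-200",
    required_cert="hydraulics",
    stock={"BRG-200": 2},
    technicians=[
        Technician("A. Keller", frozenset({"electrical"}), datetime(2025, 3, 3, 6)),
        Technician("M. Osei", frozenset({"hydraulics"}), datetime(2025, 3, 3, 14)),
    ],
    changeovers=[datetime(2025, 3, 3, 12), datetime(2025, 3, 3, 20)],
)
print(order)
```

The function only ever produces a draft or nothing, which mirrors the draft-notification boundary described above.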

3. Quality Deviation Investigation

Quality deviations are high-cost and evidence-heavy. A single defect investigation pulls together machine parameters from the production window, operator notes from the shift logs, supplier lot histories from procurement, and inspection records from QMS. A senior quality engineer spends most of the triage time assembling that picture before they can start analyzing it.

An agent assembles the evidence package: it pulls the relevant inspection records, surfaces previous defects with similar batch parameters or supplier lots, and drafts a structured CAPA outline that the engineer can review and edit. The triage step compresses; the analysis step doesn't, which is the point.

This is the pattern with the most rigorously documented results. In pharmaceutical manufacturing, where deviation management is regulated under GMP and audited closely, AI-driven deviation workflows have been shown to reduce investigation time by 50 to 70%. The European Federation of Pharmaceutical Industries and Associations identified this category in its 2024 position on AI in deviation and CAPA management as one of the highest-leverage applications of LLM-based systems in regulated manufacturing. The gain comes from the same mechanism that works in discrete manufacturing: the agent compresses evidence assembly, not analysis or release decisions.

In Codebridge's quality investigation engagements, the architecture stays close to this pattern. The agent reads from MES, QMS, and the supplier records system, drafts a CAPA outline grounded in the historical record of similar deviations, and hands the package to the quality lead with the reasoning trail attached. Product release decisions stay with the quality lead. The agent handles the assembly work that comes before them.

4. Supplier and Production Planning Exception Management

Supplier disruptions don't show up cleanly in any one system. A delayed shipment notice arrives by email. The downstream impact lives in the production schedule. The substitute material list lives in the ERP. The decision about whether to switch grades and re-run qualifications lives with planning and quality.

IBM's cognitive supply chain, deployed across IBM's own global manufacturing operations, is the most thoroughly documented production deployment of this pattern. The system connects procurement, planning, manufacturing, and logistics data in close to real time, integrates a cloud-based supplier risk tool that scans the web for disruption signals, and reduces the time required to mitigate a part shortage from four to six hours per part number to minutes. For major disruption analysis, like a closed airport or a constrained supplier, the response time has compressed from days to minutes through what-if simulation.

IBM reports USD 160 million in cumulative savings from the deployment, attributed to reduced inventory costs, optimized shipping, time savings, and better decision-making across the supply chain.

The architecture matches the pattern this piece describes. The agents detect disruption signals, model the downstream impact, and surface mitigation options. Procurement teams approve the actions. Microsoft's Procurement Agent in Dynamics 365 Supply Chain Management follows the same shape: decode supplier communications, identify which production orders and customer commitments are at risk, recommend mitigations, keep humans in control of the decision.

The category is moving from custom builds to productized agents fast. The architectural questions stay the same.

Where Agentic AI Is Usually Overkill in Manufacturing

The same six tests sort the negative cases. Four show up consistently as the wrong fit:

- Static reporting. If the objective is visibility, conventional BI and dashboarding are cheaper, faster to build, and more reliable than an agent. Agents earn their cost when decisions need to be made, not when data needs to be displayed.

- Single-system automation. When a task runs entirely inside one system with clear rules, traditional scripts or RPA do the job better. Auto-filling an invoice from a purchase order doesn't need a planner. It needs a script.

- One-off analysis. Non-recurring decisions don't repeat often enough to amortize the integration cost. A senior analyst with the right access to the data is the right tool for a strategic question that gets asked once.

- Safety-critical control loops. Placing an LLM-based planner inside a deterministic safety loop is a category error, not a cost question. These loops stay governed by certified hardware and standards like IEC 61508. The narrow RL exception from Section 3 doesn't change the prohibition for the planner-style agents this article covers.

The Architecture and Governance Pattern That Makes Manufacturing Agents Viable

In production manufacturing, the agent is rarely the entire system. The production-grade system is the architecture around the agent, and that architecture is also where governance lives.

The pattern that holds up under operational and regulatory pressure has five components. Each does architectural work, and most also satisfy a specific governance obligation.

1. The integration layer

Controlled, secure access to the OT/IT stack through APIs or middleware. The integration layer is where the heterogeneous-stack problem from earlier in the article gets solved in code: protocol translation, authentication, rate limiting, and schema validation. This requirement is purely architectural. No regulatory hook attaches directly, but the auditability of every other component depends on this layer being clean.

2. Deterministic execution services

The agent generates intent ("schedule maintenance for asset 4471 within 72 hours, prioritize parts in stock"). A hard-coded service validates the business rules, schemas, and safety constraints before the action executes. This is the architectural answer to the determinism gap from Section 3: the LLM handles the reasoning, deterministic code handles the action. It's also the boundary that keeps probabilistic systems from drifting into IEC 61508 territory. Letting an LLM orchestrate end-to-end compounds errors and produces unstable systems. The hybrid pattern is what makes the system production-viable.
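A minimal sketch of that split, assuming the agent's intent has already been parsed into a structured object. The asset registry, the allowed-action list, and the 168-hour ceiling are hypothetical placeholders for rules a plant would define itself.

```python
from dataclasses import dataclass

# Hypothetical rule data; in practice this would come from the CMMS and plant policy.
KNOWN_ASSETS = {"4471"}
ALLOWED_ACTIONS = {"schedule_maintenance"}
MAX_WINDOW_HOURS = 168


@dataclass(frozen=True)
class Intent:
    """Structured form of what the agent proposes."""
    action: str
    asset_id: str
    within_hours: int


def validate_intent(intent: Intent) -> list[str]:
    """Deterministic checks. An empty list means the intent may proceed."""
    errors = []
    if intent.action not in ALLOWED_ACTIONS:
        errors.append(f"action not allowed: {intent.action}")
    if intent.asset_id not in KNOWN_ASSETS:
        errors.append(f"unknown asset: {intent.asset_id}")
    if not 0 < intent.within_hours <= MAX_WINDOW_HOURS:
        errors.append(f"window out of range: {intent.within_hours}h")
    return errors


def execute(intent: Intent) -> dict:
    """Only validated intents reach the action path; everything else is rejected."""
    errors = validate_intent(intent)
    if errors:
        return {"status": "rejected", "errors": errors}
    return {"status": "accepted", "action": intent.action, "asset_id": intent.asset_id}


print(execute(Intent("schedule_maintenance", "4471", 72)))
print(execute(Intent("override_interlock", "9999", 72)))
```

The LLM never calls a downstream system directly in this shape. It can only produce an `Intent`, and plain code decides whether that intent is executable.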

3. Human approval gates

Mandatory gates for any action with significant financial, safety, or quality impact. The threshold for "significant" is set by the organization, not by the agent. This requirement does double duty: architecturally, it's the failsafe that keeps a probabilistic planner from authoring decisions it shouldn't; in regulatory terms, it's how the EU AI Act's human oversight obligations for high-risk AI systems get implemented in code. A manufacturing agent that touches safety components of regulated machinery falls under the AI Act's high-risk classification, which obligates documented human oversight, not advisory oversight. Approval gates are how that obligation lands in the architecture.
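One way to express an approval gate in code is a routing function driven by a policy object the organization owns. The sketch below is an assumption-laden illustration: the cost threshold, field names, and two-way routing are hypothetical, and nothing here is a compliance implementation of the EU AI Act.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto_execute"
    HUMAN = "human_approval"


@dataclass(frozen=True)
class ApprovalPolicy:
    """Set by the organization, not by the agent. The value used below is illustrative."""
    max_auto_cost_eur: float


@dataclass(frozen=True)
class ProposedAction:
    description: str
    estimated_cost_eur: float
    touches_safety: bool
    touches_quality_release: bool


def route(action: ProposedAction, policy: ApprovalPolicy) -> Route:
    """Safety and quality-release actions always go to a human; cost is thresholded."""
    if action.touches_safety or action.touches_quality_release:
        return Route.HUMAN
    if action.estimated_cost_eur > policy.max_auto_cost_eur:
        return Route.HUMAN
    return Route.AUTO


policy = ApprovalPolicy(max_auto_cost_eur=500.0)
print(route(ProposedAction("reorder filter cartridges", 120.0, False, False), policy))
print(route(ProposedAction("release held batch", 0.0, False, True), policy))
```

Keeping the threshold in a separate policy object means changing what counts as "significant" is a configuration decision, reviewable on its own, not a prompt change.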

4. Replayable, auditable logs

Every recommendation, every reasoning step, every tool call, every approval, every final action — logged in a form that supports forensic reconstruction when a failure happens. Architecturally, this is what allows root-cause analysis when an agent's recommendation leads to a bad outcome. In regulatory terms, this maps to the NIST AI RMF "Measure" function and to the EU AI Act's technical documentation and post-market monitoring requirements. It's also what allows the organization to demonstrate, after an incident, that the agent's behavior was within its authorized boundaries.
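A replayable log can be as simple as an append-only sequence of structured entries. The sketch below additionally chains entries by hash so that after-the-fact edits are detectable; that chaining is an assumption added for illustration, not something the frameworks cited above prescribe, and the entry fields are hypothetical.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    """Stable SHA-256 over a JSON-serializable entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, append-only log; a real system would persist entries."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, kind: str, payload: dict) -> dict:
        """Record one event (recommendation, tool call, approval, action)."""
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        body = {"seq": len(self._entries), "kind": kind,
                "payload": payload, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def replay(self) -> list[tuple[str, dict]]:
        """Return events in the order they happened, for forensic reconstruction."""
        return [(e["kind"], e["payload"]) for e in self._entries]

    def verify(self) -> bool:
        """Check that no entry was altered or reordered after it was written."""
        prev_hash = ""
        for e in self._entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev_hash"] != prev_hash or e["hash"] != _digest(body):
                return False
            prev_hash = e["hash"]
        return True


log = AuditLog()
log.append("recommendation", {"asset_id": "4471", "text": "schedule maintenance"})
log.append("approval", {"approved_by": "maintenance_lead"})
print(log.replay())
print(log.verify())
```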

5. Fail-closed behavior

When the agent lacks sufficient context, when its confidence falls below the threshold, or when an upstream system is unreachable, the agent stops and escalates to a human. It does not improvise. Fail-closed is the architectural counterpart to the NIST AI RMF "Manage" function: the system has a defined response to its own uncertainty, and that response is to hand control back, not to guess.
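The three stop conditions above translate into a small guard in front of every proposal. This is a sketch under assumptions: the 0.8 threshold, the `AgentOutput` shape, and the idea that the agent reports a usable confidence score are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_THRESHOLD = 0.8  # hypothetical; set by the organization


@dataclass(frozen=True)
class AgentOutput:
    recommendation: Optional[str]
    confidence: float
    missing_context: tuple[str, ...] = ()


def decide(output: AgentOutput, upstream_reachable: bool) -> dict:
    """Escalate on any uncertainty; only a fully qualified output is proposed."""
    if not upstream_reachable:
        return {"outcome": "escalate", "reason": "upstream system unreachable"}
    if output.missing_context:
        missing = ", ".join(output.missing_context)
        return {"outcome": "escalate", "reason": f"missing context: {missing}"}
    if output.recommendation is None or output.confidence < CONFIDENCE_THRESHOLD:
        return {"outcome": "escalate", "reason": "confidence below threshold"}
    return {"outcome": "propose", "recommendation": output.recommendation}


print(decide(AgentOutput("reroute order to line 2", 0.91), upstream_reachable=True))
print(decide(AgentOutput("reroute order to line 2", 0.91), upstream_reachable=False))
print(decide(AgentOutput("swap material grade", 0.55), upstream_reachable=True))
```

Note that the default path is escalation. A proposal is the only outcome that has to satisfy every check, which is what distinguishes fail-closed from fail-open.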

How to Start Without Overbuilding

The first agentic AI deployment in a manufacturing organization should be narrow, observable, and reversible. Read-only mode for the first weeks, low-criticality workflow, two systems integrated at most, mandatory human-in-the-loop for any execution. The goal of the pilot isn't to demonstrate the technology – it's to find out which of the architectural and governance assumptions from the previous section actually survive contact with the plant.

Concretely, a workable first pilot looks like this:

- Operates in read-only or monitor-only mode for the first weeks. The agent observes, reasons, and produces recommendations. It doesn't write to any system. This is fail-closed behavior applied at the pilot stage, before the team trusts the agent enough to give it write access.

- Targets a low-criticality workflow - maintenance triage, quality evidence gathering, supplier disruption monitoring. Disruption response and PdM orchestration are the right end states, but they're not the right starting points.

- Integrates two systems at most. MES and CMMS for a maintenance pilot. ERP and supplier email for an exception-management pilot. Each additional system multiplies integration debt; pilot scope discipline is what keeps the architecture clean.

- Keeps a human in the loop on every execution path. No exceptions, no edge-case auto-approvals. The pilot's job is to surface where the agent's reasoning is sound and where it isn't, which only happens if a human is reviewing every action it would have taken.

Track operational metrics, not AI metrics. The four that matter most:

- Mean time to diagnose (MTTD) — how long it takes to identify the root cause of a quality deviation or maintenance failure. The agent compresses the triage step; this metric measures whether the compression is real.

- Planner workload reduction — what percentage of recurring exceptions the agent handles to first-draft quality before a human reviews. If the planner is rewriting every recommendation, the agent isn't producing leverage.

- First-attempt work-order quality — how often a maintenance work order generated by the agent is approved without modification. This is the closest metric to "is the agent useful in production."

- Schedule recovery time — how fast the system produces a recovery plan after a disruption signal. The Bosch Shopfloor Agent and the disruption response use case from Section 5 both live here.

AI accuracy as a top-level metric is a category error in this context. An agent that's 95% accurate at producing recommendations no one acts on is worse than an agent that's 85% accurate at producing recommendations that ship. Track what reaches production.
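Two of the metrics above are straightforward to compute from records a pilot already produces. The sketch below assumes hypothetical record shapes (a `decision` field on each agent-generated work order, `detected_at` and `diagnosed_at` timestamps on each incident); real field names will depend on the CMMS and QMS in use.

```python
from datetime import datetime, timedelta
from typing import Optional


def first_attempt_quality(work_orders: list[dict]) -> Optional[float]:
    """Share of reviewed agent work orders approved without modification."""
    reviewed = [w for w in work_orders
                if w["decision"] in ("approved", "modified", "rejected")]
    if not reviewed:
        return None  # nothing reviewed yet, so the metric is undefined
    approved = sum(1 for w in reviewed if w["decision"] == "approved")
    return approved / len(reviewed)


def mean_time_to_diagnose(incidents: list[dict]) -> Optional[timedelta]:
    """Average gap between detection and root-cause identification."""
    durations = [i["diagnosed_at"] - i["detected_at"] for i in incidents]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)


work_orders = [
    {"id": "WO-1", "decision": "approved"},
    {"id": "WO-2", "decision": "modified"},
    {"id": "WO-3", "decision": "approved"},
    {"id": "WO-4", "decision": "pending"},  # not yet reviewed, excluded
]
incidents = [
    {"detected_at": datetime(2025, 3, 3, 8), "diagnosed_at": datetime(2025, 3, 3, 10)},
    {"detected_at": datetime(2025, 3, 4, 8), "diagnosed_at": datetime(2025, 3, 4, 12)},
]
print(first_attempt_quality(work_orders))
print(mean_time_to_diagnose(incidents))
```

Both functions return `None` when there is nothing to measure, so a quiet week does not show up as a perfect or a zero score.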

Conclusion

Agentic AI in manufacturing is a narrow technology with a wide failure surface. The narrow part is what works: planner-style agents above MES and ERP, coordinating across systems that don't share a data model, handling exceptions that static rules can't enumerate. Everything else is where the failures live.

The framework in this article isn't a guide to which agentic AI is best. It's a filter for which workflows should have an agent at all. Six tests, applied honestly, eliminate most candidate workflows. The four that pass: disruption response, predictive maintenance orchestration, quality investigation, and supplier exception management. Pick one, treat the pilot as a falsifiability test of the architecture, and measure the result in operational metrics.

In Codebridge's manufacturing engagements, the pattern is consistent: planner above the control layer, deterministic execution underneath, human approval at every consequential gate, full audit trail of every decision. The use case changes. The pattern doesn't.
