Recent forecasts suggest that task-specific AI agents will soon become a common part of enterprise software. Gartner predicts that by 2026, 40% of enterprise applications will incorporate task-specific AI agents, up from less than 5% in 2025. For many organizations, this creates both opportunity and pressure: agentic systems promise deeper automation, but implementing them is significantly more complex than earlier AI deployments.

Many early enterprise AI initiatives relied on Retrieval-Augmented Generation. RAG remains useful for grounding model responses in internal knowledge. However, as models improve at reasoning and tool use, simply retrieving documents is often insufficient for workflows that require planning, coordination, and execution.

As a result, organizations experimenting with agentic systems increasingly face architectural questions about how agents should interact with tools, services, and each other.

This article examines five design patterns organizations should evaluate when designing agentic AI systems.

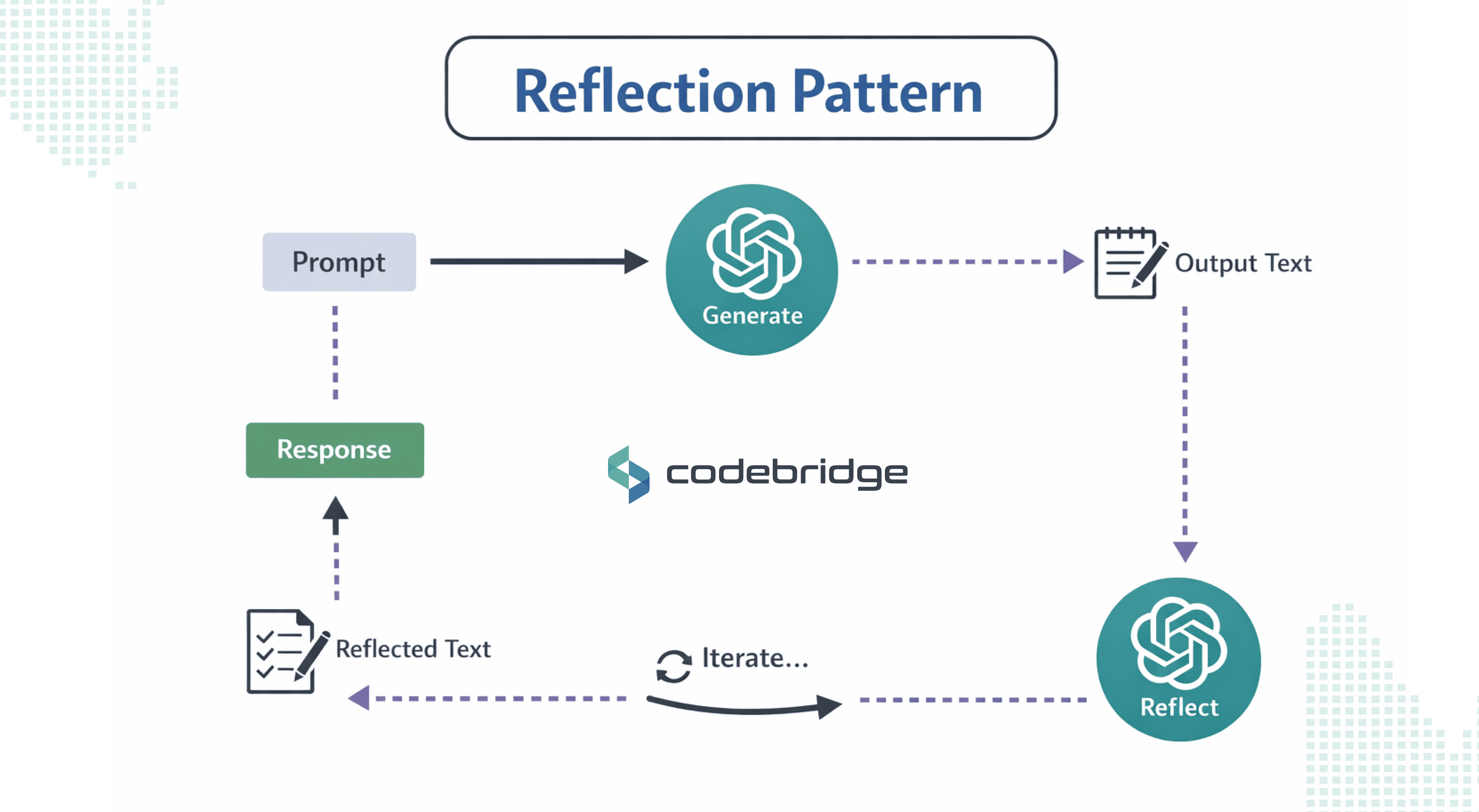

Pattern 1: Reflection (The Self-Correcting Agent)

Reflection is used when a single-pass output is not reliable enough for the task. In many business settings, the issue is not whether a model can produce an answer, but whether that answer can be trusted without an additional review step. This becomes especially important when mistakes are costly, difficult to detect, or expensive to correct later.

In this pattern, one agent generates an initial output, and a second step reviews it against defined criteria. That review may check factual accuracy, internal consistency, policy compliance, or alignment with task-specific requirements. The point is not to make the system endlessly self-improving. It is to introduce a controlled verification layer before the result is used downstream.

The main benefit of Reflection is higher output quality on tasks where first-pass accuracy is too inconsistent.

Complexity and Failure Modes

Reflection introduces significant operational overhead. Every reflection cycle requires additional model calls, which increases both latency and token consumption. Teams should treat this as a thinking budget that has to be justified by the business value of the task.

Without clear stopping rules, the pattern can also create loops that consume resources without improving the result in a meaningful way.
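
The generate-review cycle and its stopping rule can be sketched as follows. This is a minimal illustration, assuming the generator and reviewer are plain callables standing in for model calls; `reflect`, `Review`, `stub_generate`, and `stub_review` are hypothetical names, not part of any framework.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Review:
    passed: bool
    feedback: str


def reflect(
    task: str,
    generate: Callable[[str, str], str],
    review: Callable[[str], Review],
    max_cycles: int = 3,
) -> Tuple[str, int, bool]:
    """Generate, review, and revise until the review passes or the budget is spent.

    Returns (last_draft, cycles_used, passed).
    """
    if max_cycles < 1:
        raise ValueError("max_cycles must be at least 1")
    feedback = ""
    draft = ""
    for cycle in range(1, max_cycles + 1):
        draft = generate(task, feedback)   # first model call (stand-in)
        result = review(draft)             # review call against defined criteria
        if result.passed:
            return draft, cycle, True
        feedback = result.feedback         # critique feeds the next draft
    return draft, max_cycles, False        # budget exhausted: caller decides


# Hypothetical stand-ins for real model calls, used only to exercise the loop.
def stub_generate(task: str, feedback: str) -> str:
    draft = f"Answer to: {task}"
    if "citation" in feedback:
        draft += " [source: internal-doc]"
    return draft


def stub_review(draft: str) -> Review:
    if "[source:" in draft:
        return Review(passed=True, feedback="")
    return Review(passed=False, feedback="Add a citation.")


if __name__ == "__main__":
    print(reflect("summarize the refund policy", stub_generate, stub_review))
```

Returning the `passed` flag alongside the draft lets the caller decide what happens when the budget runs out, for example discarding the result or routing it to a person.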

Best Fit by Stage and Use Case

Reflection fits best in mature companies operating in regulated or high-stakes domains where mistakes are expensive. Typical use cases include:

- Legal Tech: Reviewing contracts for hidden indemnity risks.

- Healthcare: Validating medical reasoning against clinical guidelines.

- Software Engineering: Performing security audits on generated code before it enters a CI/CD pipeline.

In these scenarios, the requirement for quality heavily outweighs the need for sub-second processing speed. Reflection is less appropriate for low-risk tasks where speed matters more than precision.

A practical rule is to introduce Reflection only when failure data shows that single-pass generation is not meeting the required standard. In those cases, the pattern can improve reliability, but only if the review criteria and termination conditions are explicit.

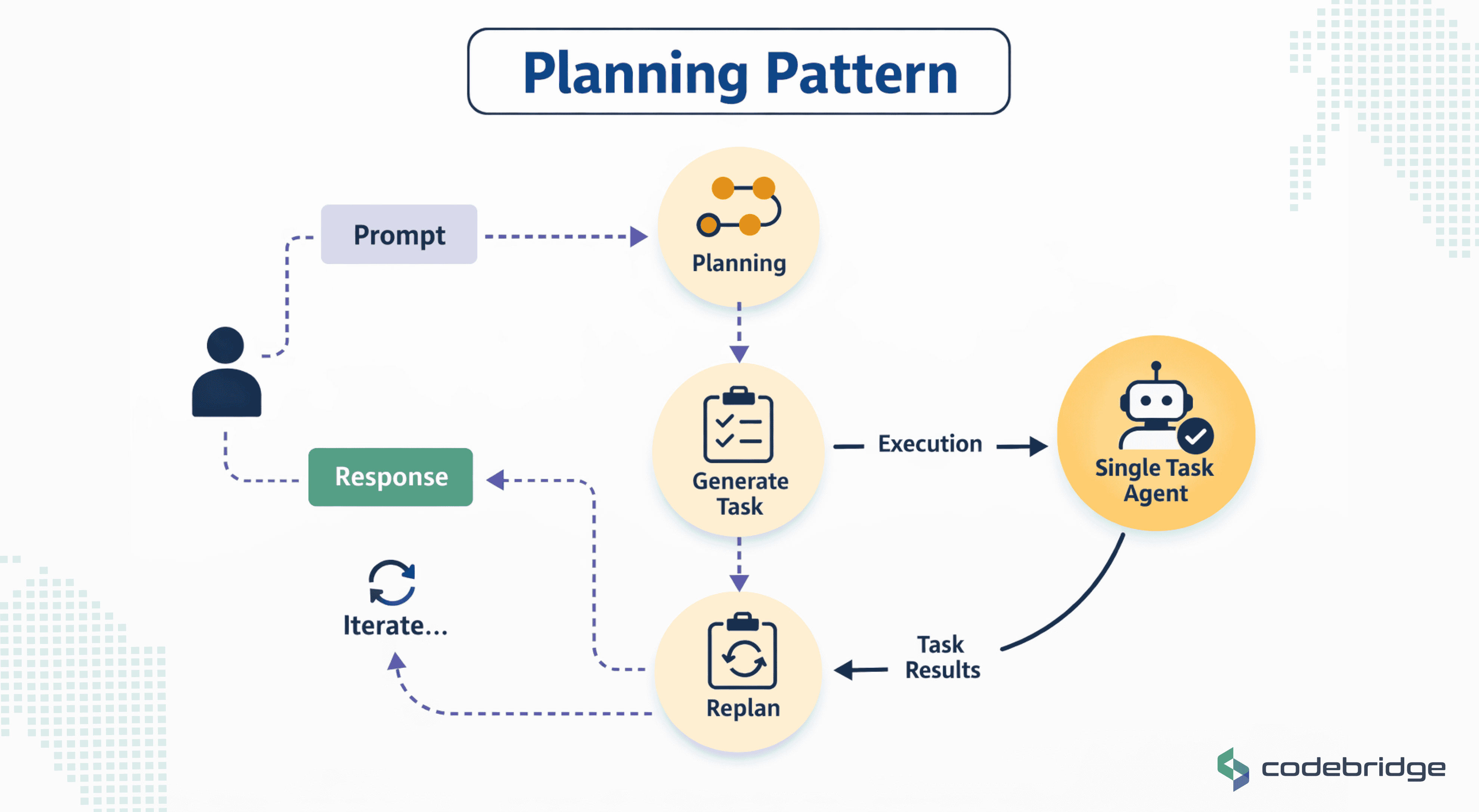

Pattern 2: Plan and Solve (Task to Agent Pattern)

Reflection improves output quality, but it does not address a different source of failure: many tasks break down because the work itself is not structured clearly before execution begins. When an agent is asked to handle a multi-step objective without an explicit plan, it may choose the wrong order of operations, miss dependencies, or use tools before the task has been properly framed.

The Plan and Solve pattern addresses this by separating task design from task execution. Instead of acting immediately, the system first breaks the objective into a sequence of steps and defines how those steps relate to one another. Execution begins only after that structure is in place.
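
One way to picture the separation is a plan expressed as steps with declared dependencies, validated and ordered before anything runs. The sketch below assumes the plan has already been produced (in practice by a model) and uses Python's standard `graphlib` module for ordering; the step names and the `order_plan` and `execute_plan` helpers are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

Plan = Dict[str, List[str]]  # step name -> names of steps it depends on


def order_plan(plan: Plan) -> List[str]:
    """Validate the plan and return an execution order that respects dependencies."""
    known = set(plan)
    for step, deps in plan.items():
        missing = [d for d in deps if d not in known]
        if missing:
            raise ValueError(f"step {step!r} depends on undefined steps: {missing}")
    # static_order() raises graphlib.CycleError (a ValueError) on circular plans.
    return list(TopologicalSorter(plan).static_order())


def execute_plan(plan: Plan, handlers: Dict[str, Callable[[], None]]) -> List[str]:
    """Run each step only after the whole plan has been checked."""
    order = order_plan(plan)
    unhandled = [s for s in order if s not in handlers]
    if unhandled:
        raise ValueError(f"no handler for steps: {unhandled}")
    completed: List[str] = []
    for step in order:
        handlers[step]()
        completed.append(step)
    return completed


# Hypothetical plan for a small data migration.
MIGRATION_PLAN: Plan = {
    "export_source": [],
    "create_target_schema": [],
    "transform_records": ["export_source"],
    "load_target": ["transform_records", "create_target_schema"],
    "verify_counts": ["load_target"],
}

if __name__ == "__main__":
    print(order_plan(MIGRATION_PLAN))
```

Because validation happens before the first handler is called, a missing dependency or a circular plan is reported up front rather than in the middle of a tool call.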

What It Improves

By forcing the agent to externalize its strategy upfront, this pattern brings a necessary layer of transparency and order to long-running workflows. It improves system reliability by surfacing potential conflicts or missing information early in the process rather than during the execution of a critical tool call.

For organizations, this pattern provides a clearer audit trail as stakeholders can review the agent's generated plan to verify that it aligns with business logic before authorizing the system to proceed.

Complexity Introduced: The Planning Tax

The primary architectural cost of this pattern is a mandatory upfront computational overhead. Because the model must perform a comprehensive reasoning pass to generate the initial roadmap, latency is increased before the first tangible output is produced.

The core engineering challenge is accurately assessing task complexity: adding a planning step to simple, straightforward requests incurs the planning tax without a corresponding increase in value.

Likely Failure Modes

- Over-Decomposition: The agent may break a task into an excessive number of trivial steps, causing cumulative latency to compound rapidly as the system manages the overhead for each minor sub-task.

- Plan Staleness and Excess Rigidity: In dynamic environments where data or system states change mid-run, an agent following a fixed roadmap may stubbornly continue with an irrelevant or broken plan. This leads to "silent failures" where the agent executes its sub-tasks flawlessly but fails to achieve the high-level goal because it lacks the adaptive recovery mechanisms inherent in more interactive patterns.

Best Fit by Stage and Use Case

The Plan and Solve pattern is best suited for enterprise-grade automation and scaling startups managing multi-layered technical operations. Typical use cases include:

- Multi-System Integrations: Sequencing API calls across disparate platforms where the order of operations is critical.

- Data Migration Projects: Handling complex transformations with strict dependencies between steps.

- Deep Research Synthesis: Orchestrating long-running information gathering across diverse sources before generating a final report.

Architecture note: In production, this task-to-agent pattern is a foundational AI automation design approach: a planning step produces the task sequence, and a separate execution layer carries it out. More mature systems also include re-planning checkpoints so the workflow can adapt when a dependency fails or the environment changes.
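
A re-planning checkpoint can be as simple as catching a step failure and asking the planner for a revised remainder of the plan, within a fixed budget. The following is a rough sketch under that assumption; `run_with_replanning`, `flaky_execute`, and `fallback_replan` are hypothetical names, and the step strings are placeholders.

```python
from typing import Callable, List


def run_with_replanning(
    steps: List[str],
    execute: Callable[[str], None],
    replan: Callable[[str, List[str], str], List[str]],
    max_replans: int = 1,
) -> List[str]:
    """Execute steps in order; on failure, request a new remaining plan."""
    queue = list(steps)
    completed: List[str] = []
    replans = 0
    while queue:
        step = queue.pop(0)
        try:
            execute(step)
        except RuntimeError as err:
            if replans >= max_replans:
                raise                      # re-planning budget spent: surface the failure
            replans += 1
            # The planner sees what failed, what already succeeded, and why.
            queue = replan(step, list(completed), str(err))
            continue
        completed.append(step)
    return completed


# Hypothetical executor and planner used only to exercise the loop.
def flaky_execute(step: str) -> None:
    if step == "call_primary_api":
        raise RuntimeError("primary API timed out")


def fallback_replan(failed: str, completed: List[str], error: str) -> List[str]:
    return ["call_fallback_api", "write_report"]


if __name__ == "__main__":
    plan = ["fetch_data", "call_primary_api", "write_report"]
    print(run_with_replanning(plan, flaky_execute, fallback_replan))
```

The `max_replans` limit plays the same role as a stopping rule in Reflection: it keeps a broken environment from turning into an unbounded planning loop.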

Pattern 3: Tool Use

Planning helps structure a task, but structure alone does not create outcomes. Once a workflow depends on live data, external computation, or action inside business systems, the architecture needs a way for the agent to operate beyond the model itself. That is the role of the Tool Use pattern.

In this pattern, the model does not interact with external systems directly. It works through defined tools, each exposed through a controlled interface with a declared purpose, expected parameters, and a known output shape. The model selects a tool, generates the call, receives the result, and uses that result in the next step of the workflow. Microsoft’s tool-use guidance describes this pattern in terms of tool schemas, execution logic, message handling, error handling, and state management, which is a useful way to think about the architecture behind the model.
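
To make the idea of a declared purpose, expected parameters, and a known output shape concrete, here is a small sketch of a tool registry with call validation. It is an illustration only: the `Tool` and `invoke` names, the dictionary shape of the model's call, and the `lookup_order_status` tool are all assumptions, not the interface of any particular framework.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class Tool:
    name: str
    description: str              # declared purpose, shown to the model
    parameters: Dict[str, type]   # expected parameter names and types
    run: Callable[..., Any]       # the controlled implementation


def invoke(registry: Dict[str, Tool], call: Dict[str, Any]) -> Dict[str, Any]:
    """Validate a model-generated call against the tool schema, then execute it."""
    tool = registry.get(call.get("tool", ""))
    if tool is None:
        return {"ok": False, "error": f"unknown tool: {call.get('tool')!r}"}
    args = call.get("arguments", {})
    missing = sorted(set(tool.parameters) - set(args))
    unexpected = sorted(set(args) - set(tool.parameters))
    if missing or unexpected:
        return {"ok": False, "error": f"missing={missing} unexpected={unexpected}"}
    for name, expected in tool.parameters.items():
        if not isinstance(args[name], expected):
            return {"ok": False, "error": f"{name} must be {expected.__name__}"}
    return {"ok": True, "result": tool.run(**args)}


# Hypothetical tool backed by an in-memory dict instead of a real order system.
_ORDERS = {"A-100": "shipped"}


def lookup_order_status(order_id: str) -> str:
    return _ORDERS.get(order_id, "not_found")


REGISTRY = {
    "lookup_order_status": Tool(
        name="lookup_order_status",
        description="Return the current status of an order.",
        parameters={"order_id": str},
        run=lookup_order_status,
    )
}

if __name__ == "__main__":
    call = {"tool": "lookup_order_status", "arguments": {"order_id": "A-100"}}
    print(invoke(REGISTRY, call))
```

Every outcome, success or failure, comes back in the same `{"ok": ...}` shape, so the model can read an error and try again instead of the workflow crashing on an invalid argument.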

The main benefit is operational reach. Tool use allows an agent to query databases, call APIs, execute code, and interact with enterprise platforms using current information rather than relying only on static model knowledge. That makes it possible to move from answer generation to task execution.

The trade-off is that reliability now depends on the interface layer around those tools. A weak schema can lead to poor tool selection or invalid arguments. An unstable execution layer can turn API failures, timeouts, and inconsistent outputs into workflow failures. As the tool library grows, routing, validation, and permission control become harder to manage.

Tool Use is most valuable when tasks depend on current data or require interaction with external systems. It is less useful when the task can be completed entirely within the model’s context.

Architecture note: In production systems, tool use is usually implemented as a bounded invocation layer between the model and external systems. That layer defines available tool schemas, validates parameters, executes calls, manages state across the interaction, and logs results for traceability. In higher-risk environments, permissions are also constrained at the tool level rather than delegated broadly to the model. Microsoft’s example of read-only database access is a good illustration of this principle.
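
As a rough sketch of permissions constrained at the tool level, the gateway below grants a fixed set of scopes, refuses any tool whose scope was not granted, and logs every attempt. The `ToolGateway` class, the scope strings, and the in-memory customer table are hypothetical and stand in for a real invocation layer and database.

```python
from typing import Any, Callable, Dict, Iterable, List, Tuple


class ToolGateway:
    """Bounded invocation layer: the model reaches systems only through call()."""

    def __init__(self, granted_scopes: Iterable[str]) -> None:
        self._granted = frozenset(granted_scopes)
        self._tools: Dict[str, Tuple[str, Callable[..., Any]]] = {}
        self.audit_log: List[Dict[str, Any]] = []

    def register(self, name: str, scope: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = (scope, fn)

    def call(self, name: str, **kwargs: Any) -> Dict[str, Any]:
        entry: Dict[str, Any] = {"tool": name, "args": kwargs}
        self.audit_log.append(entry)          # every attempt is logged
        if name not in self._tools:
            entry["status"] = "unknown_tool"
            return {"ok": False, "error": "unknown tool"}
        scope, fn = self._tools[name]
        if scope not in self._granted:
            entry["status"] = "denied"
            return {"ok": False, "error": f"scope {scope!r} not granted"}
        try:
            result = fn(**kwargs)
        except Exception as exc:              # tool failures become structured errors
            entry["status"] = "failed"
            return {"ok": False, "error": str(exc)}
        entry["status"] = "ok"
        return {"ok": True, "result": result}


# Hypothetical in-memory "database" and tools.
CUSTOMERS = {"c1": {"name": "Ada"}}

gateway = ToolGateway(granted_scopes={"db:read"})   # read-only by construction
gateway.register("read_customer", "db:read", lambda customer_id: CUSTOMERS[customer_id])
gateway.register("delete_customer", "db:write", lambda customer_id: CUSTOMERS.pop(customer_id))

if __name__ == "__main__":
    print(gateway.call("read_customer", customer_id="c1"))
    print(gateway.call("delete_customer", customer_id="c1"))
    print([e["status"] for e in gateway.audit_log])
```

The write tool exists in the registry, but the gateway instance was never granted `db:write`, so the restriction does not depend on the model choosing to behave.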

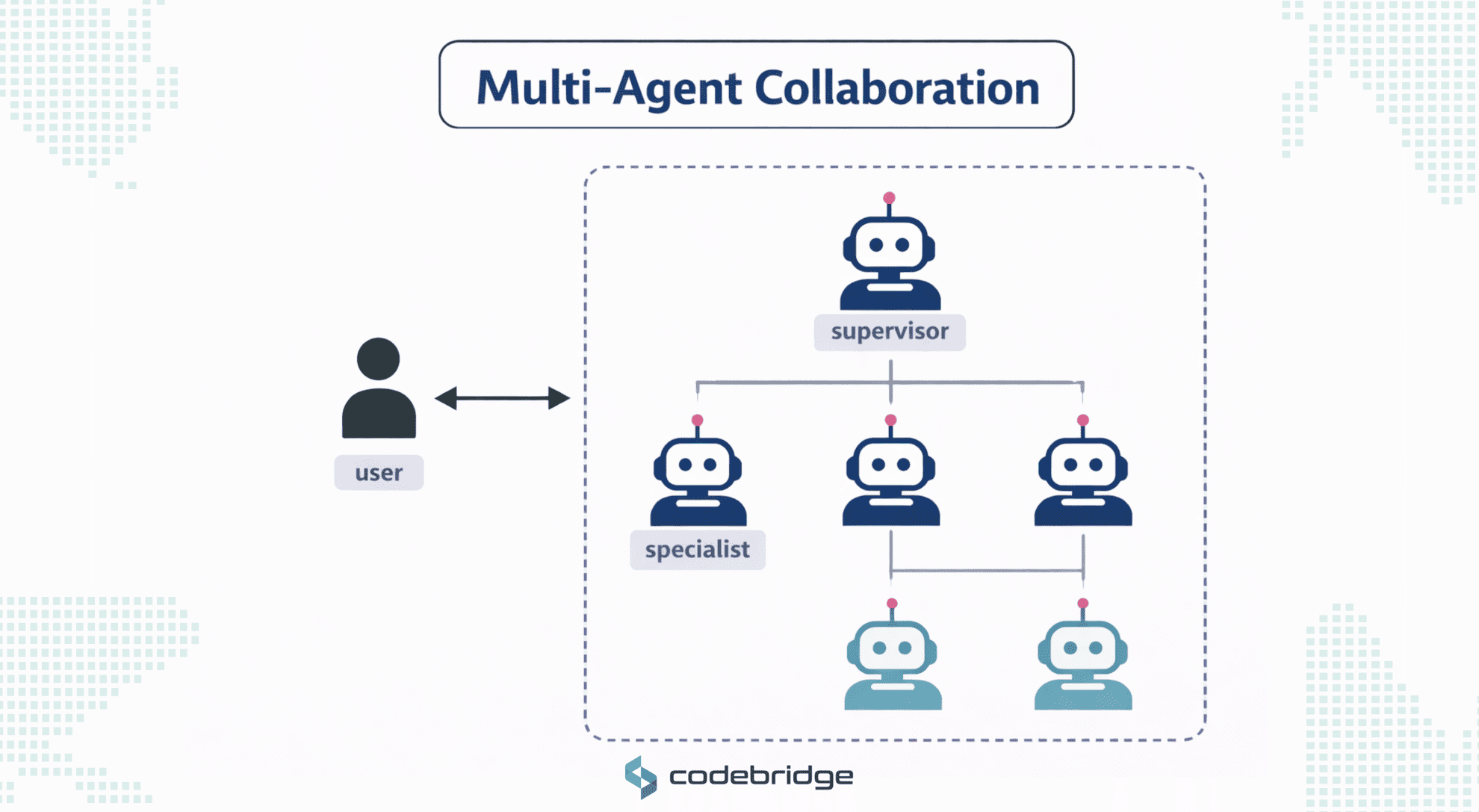

Pattern 4: Multi-Agent Collaboration (The Specialized Team)

Tool use extends what a single agent can do, but it does not remove a different limitation. One agent may still be responsible for too many kinds of work at once. As workflows grow, the same agent may be expected to retrieve information, make decisions, use tools, validate outputs, and communicate results across different domains. At that point, the issue is no longer access to capability, but concentration of responsibility.

How It Works: Orchestrated Specialization

The Multi-Agent Collaboration pattern mirrors the structure of a human organization by distributing work across a network of specialized agents. The process generally involves:

- Task Decomposition: A central orchestrator or manager agent receives a high-level objective and decomposes it into discrete sub-tasks.

- Specialized Delegation: Each sub-task is assigned to a dedicated agent optimized for a narrow domain – equipped with targeted prompts, specific tools, and the most appropriate model for that specific function.

- Collaborative Interaction: Agents interact through structured workflows, which can be sequential (one agent's output is another's input), parallel (agents work simultaneously), or hierarchical (a manager oversees workers).

- Synthesis: The orchestrator collects the outputs from these specialized minds and synthesizes them into a unified, coherent response or final outcome.
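
The four steps above can be sketched with an orchestrator that decomposes an objective, runs specialized agents in parallel, and joins their outputs. This is a simplified illustration: each agent is a plain function standing in for a model with its own prompt and tools, and `orchestrate`, `decompose`, and the role names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Tuple

Agent = Callable[[str], str]
SubTask = Tuple[str, str]  # (agent role, instruction)


def orchestrate(
    objective: str,
    decompose: Callable[[str], List[SubTask]],
    agents: Dict[str, Agent],
) -> str:
    """Decompose, delegate in parallel, and synthesize one combined result."""
    subtasks = decompose(objective)
    unknown = [role for role, _ in subtasks if role not in agents]
    if unknown:
        raise ValueError(f"no agent registered for roles: {unknown}")
    if not subtasks:
        return ""
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        # map() returns results in the same order as the sub-tasks.
        outputs = list(pool.map(lambda st: agents[st[0]](st[1]), subtasks))
    return "\n".join(f"[{role}] {out}" for (role, _), out in zip(subtasks, outputs))


# Hypothetical decomposition and agents for a software delivery workflow.
def decompose(objective: str) -> List[SubTask]:
    return [
        ("requirements", f"List requirements for: {objective}"),
        ("security", f"Identify security risks in: {objective}"),
        ("docs", f"Draft documentation for: {objective}"),
    ]


AGENTS: Dict[str, Agent] = {
    "requirements": lambda task: f"done ({task})",
    "security": lambda task: f"done ({task})",
    "docs": lambda task: f"done ({task})",
}

if __name__ == "__main__":
    print(orchestrate("password reset feature", decompose, AGENTS))
```

This shows the parallel variant; a sequential workflow would instead pass each agent's output into the next agent's instruction.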

The main benefit is clearer specialization as each agent can operate with a narrower objective, more appropriate tools, and a more constrained decision space. This often improves consistency in complex workflows, especially when the work spans different types of reasoning or operational steps.

Architectural Complexity

A multi-agent system is substantially harder to design, debug, and maintain than a single-agent system. It requires robust orchestration layers and standardized communication interfaces to ensure interoperability. Technical leaders must implement:

- Routing Protocols: Using open standards such as the Agent2Agent (A2A) protocol for inter-agent coordination or the Model Context Protocol (MCP) for standardized tool and resource access.

- Conflict Arbitration Logic: Explicit rules to resolve instances where two specialized agents disagree or stall, preventing the system from hanging.
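
Arbitration rules of this kind can be written down explicitly. The sketch below assumes each agent returns a verdict string, or `None` if it stalled past its deadline, and that disagreements are resolved by a fixed priority order; the `arbitrate` function and the agent names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def arbitrate(
    verdicts: Dict[str, Optional[str]],
    priority: List[str],
) -> Tuple[str, str]:
    """Resolve agent verdicts into (decision, reason).

    A verdict of None means the agent stalled and did not answer in time.
    """
    answered = {agent: v for agent, v in verdicts.items() if v is not None}
    if not answered:
        return "escalate", "no agent responded before the deadline"
    distinct = set(answered.values())
    if len(distinct) == 1:
        return distinct.pop(), "all responding agents agree"
    for agent in priority:                    # highest-priority responder wins
        if agent in answered:
            return answered[agent], f"disagreement resolved in favor of {agent}"
    return "escalate", "disagreement with no ranked agent available"


if __name__ == "__main__":
    verdicts = {"codegen": "approve", "security": "reject", "docs": None}
    print(arbitrate(verdicts, priority=["security", "codegen"]))
```

Every path returns a decision, including the stalled and unranked cases, which is what keeps the system from hanging.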

Best Fit by Stage and Use Case

Multi-agent systems are ideal for mature technical organizations and enterprise-scale R&D initiatives where workflows naturally span multiple domains. Typical use cases include:

- Software Development Lifecycles: Integrating specialized agents for requirements analysis, code generation, security auditing, and documentation.

- Enterprise IT Operations: Orchestrating complex pipelines that require simultaneous research, data analysis, and professional presentation.

- Supply Chain Optimization: Coordinating independent agents representing different nodes (suppliers, manufacturers, distributors) to respond to real-time disruptions.

Architecture note: In production, this pattern usually depends on an orchestration layer that manages task assignment, message passing, and result collection across agents. More mature implementations also define explicit boundaries for what each agent is allowed to do, which helps reduce overlap, prevent redundant work, and make failures easier to isolate.

Multi-agent collaboration improves specialization, but once several agents must work together reliably, coordination itself becomes a separate architectural concern.

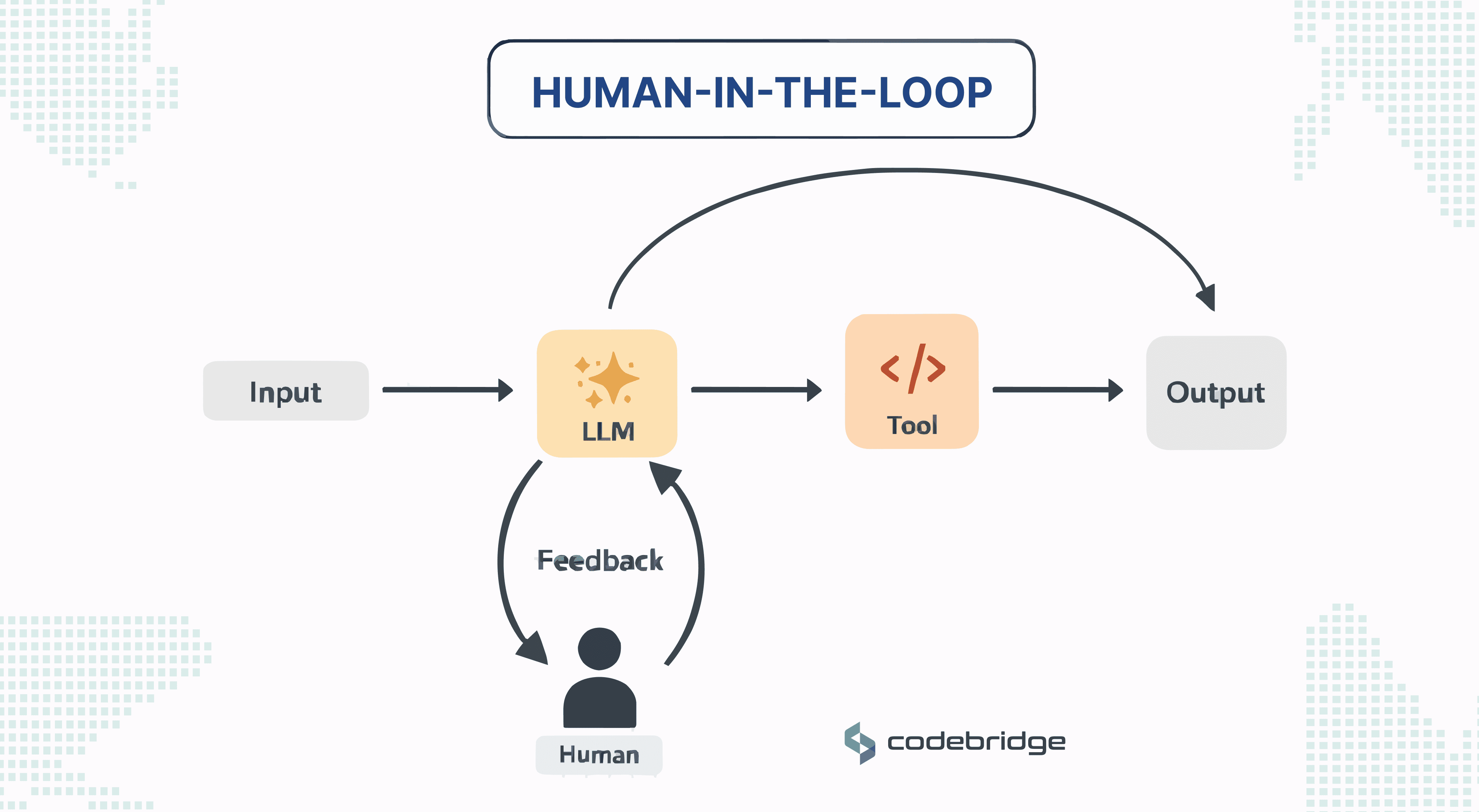

Pattern 5: Human-in-the-Loop (HITL)

As agentic systems become more capable, the architectural challenge shifts from capability to control. Reflection improves reliability, planning structures complex work, tools enable interaction with real systems, and multi-agent designs distribute responsibilities. At this stage, the remaining questions are how critical decisions are supervised and who is accountable for them.

The Human-in-the-Loop (HITL) pattern introduces explicit points in the workflow where a human reviews or authorizes actions before the system continues. Instead of allowing the agent to execute every step autonomously, the architecture defines escalation thresholds where human judgment becomes part of the decision process.

How It Works

The HITL pattern functions by integrating human intervention points directly into the agent’s execution path. The workflow follows a structured sequence:

- Predefined Checkpoints: Developers model specific steps in the workflow with explicit guards or approval gates.

- Execution Pause: When an agent reaches a critical juncture - such as a request to execute a large financial transaction or release a sensitive report - it pauses its autonomous loop.

- Human Contextualization: The system calls an external interface to notify a human operator, often through familiar enterprise channels like Microsoft Teams or Outlook. The agent provides the human with the proposed action, the reasoning behind it, and the necessary context.

- Action/Resume: The operator can approve the decision, correct an error, or provide missing input. Once the operator responds, the agent resumes execution.
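
The sequence above can be sketched as an approval gate around a payment workflow. This is a minimal in-memory illustration: the threshold, the `PaymentWorkflow` class, and the `notify` callback (a stand-in for a message sent through a channel such as Teams or email) are assumptions, not a real integration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ApprovalRequest:
    action: str
    amount: float
    reasoning: str


class PaymentWorkflow:
    def __init__(self, approval_threshold: float, notify: Callable[[ApprovalRequest], None]) -> None:
        self.approval_threshold = approval_threshold   # predefined checkpoint
        self.notify = notify                           # stand-in for a real channel
        self.pending: Optional[ApprovalRequest] = None
        self.executed: List[str] = []

    def propose(self, action: str, amount: float, reasoning: str) -> str:
        if amount <= self.approval_threshold:
            self.executed.append(action)               # below threshold: autonomous
            return "executed"
        self.pending = ApprovalRequest(action, amount, reasoning)
        self.notify(self.pending)                      # hand context to the operator
        return "awaiting_approval"                     # execution pauses here

    def resume(self, decision: str) -> str:
        if self.pending is None:
            raise RuntimeError("nothing is awaiting approval")
        if decision not in ("approved", "rejected"):
            raise ValueError("decision must be 'approved' or 'rejected'")
        request, self.pending = self.pending, None
        if decision == "approved":
            self.executed.append(request.action)
            return "executed"
        return "cancelled"


if __name__ == "__main__":
    inbox: List[ApprovalRequest] = []
    wf = PaymentWorkflow(approval_threshold=1000.0, notify=inbox.append)
    print(wf.propose("pay invoice 17", 250.0, "matches purchase order"))
    print(wf.propose("pay invoice 18", 25000.0, "matches purchase order"))
    print(wf.resume("approved"))
```

The request carries the proposed action and the reasoning behind it, so the operator decides with the same context the agent had.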

The benefit is accountability. Certain decisions require contextual judgment that automated systems cannot reliably provide, particularly when financial, legal, or safety consequences are involved. HITL allows organizations to combine automated execution with human oversight at critical points in the workflow.

Complexity Introduced

The primary drawback of HITL is significant architectural and infrastructure overhead. Engineering teams must build systems capable of:

- Securely pausing and persisting the state of active workflows for potentially long durations.

- Managing asynchronous waiting states without exhausting system resources.

- Developing robust notification and response-handling logic to resume the agentic loop once the external input is received.
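
One common way to meet these requirements is to write the paused workflow's state to durable storage and let a separate handler resume it when the response arrives, so no process waits in the meantime. The sketch below uses a JSON file for simplicity; the state fields, file layout, and function names are hypothetical, and a production system would more likely use a database or a workflow engine.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict


def save_checkpoint(path: Path, state: Dict[str, Any]) -> None:
    """Persist state via a temporary file so a crash cannot leave a half-written file."""
    tmp = path.with_suffix(".tmp")
    tmp.write_text(json.dumps(state))
    tmp.replace(path)


def load_checkpoint(path: Path) -> Dict[str, Any]:
    return json.loads(path.read_text())


def pause_for_human(path: Path, state: Dict[str, Any], question: str) -> None:
    """Record that the workflow is waiting, then return; nothing blocks."""
    save_checkpoint(path, {**state, "status": "waiting_for_human", "question": question})


def resume_with_input(path: Path, human_input: str) -> Dict[str, Any]:
    """Called by the response handler, possibly hours later and in another process."""
    state = load_checkpoint(path)
    if state.get("status") != "waiting_for_human":
        raise RuntimeError("workflow is not waiting for input")
    state.update(status="running", human_input=human_input)
    save_checkpoint(path, state)
    return state


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        checkpoint = Path(folder) / "workflow-42.json"
        pause_for_human(checkpoint, {"step": 3}, "Release the report?")
        print(resume_with_input(checkpoint, "approved"))
```

The status check in `resume_with_input` guards against a duplicate or late response resuming a workflow that has already moved on.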

Best Fit by Stage and Use Case

HITL is mandatory for fintechs, healthcare organizations, and legal tech firms where regulatory compliance is non-negotiable. Typical use cases include:

- Financial Services: Approving transactions that exceed specific authorization thresholds.

- Content Moderation: Handling edge cases that require nuanced cultural or political judgment.

- Healthcare: Validating data anonymization before releasing patient datasets for research.

Agentic AI Design Patterns and When to Use Them

Conclusion

The five patterns in this article reflect different ways of managing complexity in agentic systems. Reflection improves reliability within a single task. Planning adds structure to multi-step work. Tool Use allows the system to operate through external capabilities. Multi-Agent Collaboration distributes responsibilities across specialized agents. Human-in-the-Loop adds oversight where decisions cannot be delegated fully to automation.

Architecture selection is about choosing the minimum level of complexity needed to achieve a reliable outcome. Systems that are under-structured tend to become fragile. Systems that are over-structured become expensive, hard to maintain, and difficult to justify.

For businesses adopting agentic AI, the practical question is not how many agent patterns can be combined. It is which architectural pattern gives the organization enough control to make automation useful, governable, and sustainable in production.
