Most organizations still think of AI failure as a hallucinated chatbot answer or a public-facing mistake that trends for a day. These incidents are visible, but they rarely cause the largest financial or operational damage. The more expensive failures happen when AI is embedded into real operations — production systems, high-trust workflows, regulated decisions, and large transformation programs — where errors do not stay isolated for long.

That is what makes the current moment so important. Corporate spending on AI continues to rise sharply, with UBS estimating global AI spending at roughly $375 billion in 2025 and around $500 billion in 2026. But investment has moved faster than execution maturity. IBM's 2025 CEO Study found that only 25% of AI initiatives delivered expected ROI over the previous few years, and only 16% scaled enterprise-wide.

For technical leaders, this gap between investment and results is where the conversation shifts from theoretical to operational. When AI moves from experimentation into core operations, the question changes. It is no longer about whether the model can generate plausible output. The question is whether the surrounding system is designed to contain mistakes before they become financial, legal, or structural failures.

That is why the most expensive AI failures rarely begin inside the model alone. They begin in the layer around it: architecture, governance, permissions, and accountability.

Why the Most Expensive AI Failures Are Rarely Just Model Failures

A wrong answer from an AI model is usually manageable. It can be corrected with a follow-up prompt or a human edit before it creates lasting damage. That is why many teams initially treat AI risk as a quality problem.

In practice, the most expensive failures happen when AI begins to influence actions, decisions, or dependencies inside a live workflow, because that is where the cost profile changes. A model that helps trigger the wrong action in production, introduces errors into a client-facing deliverable, or becomes part of a regulated decision path creates a fundamentally different type of exposure. At that point, the issue is no longer model performance. It is system design.

Evidence from recent industry research reflects this shift. In late 2025, EY reported that nearly every company in its global survey had already experienced financial losses from AI-related risks, with the average loss conservatively estimated at $4.4 million per affected company. The pattern behind those losses was not simply incorrect model outputs. It was the way AI interacted with existing systems, workflows, and operational processes.

The real failure layer often sits outside the model: in permissions that are too broad, review processes that are too weak, ownership that is unclear, or integrations that were never designed for non-deterministic behavior.

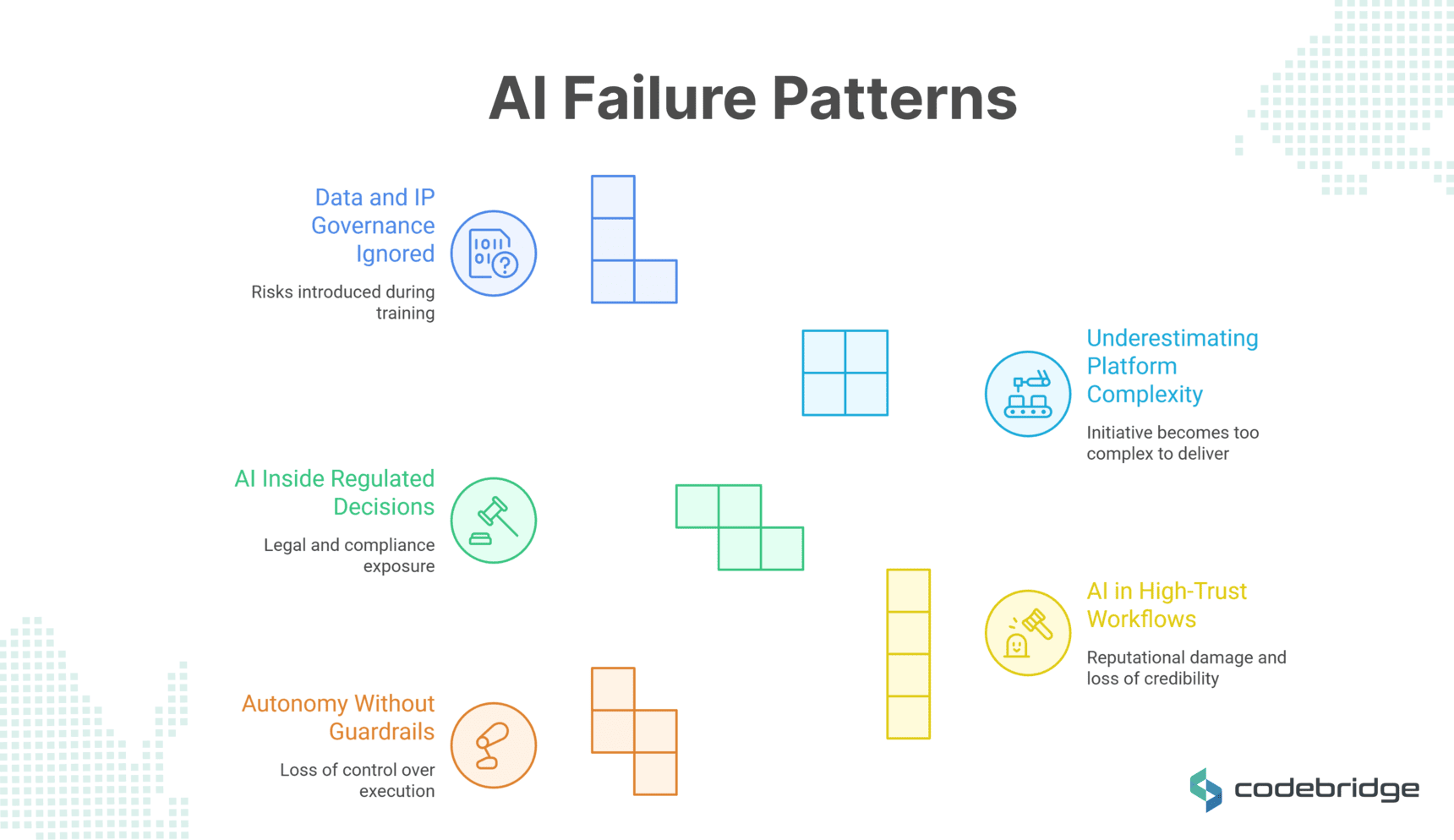

Failure Pattern 1: AI Governance Failure

One of the clearest examples of this shift appears when AI is given the ability to act inside a technical environment rather than simply produce suggestions for a human to review. At that point, the primary concern is no longer the quality of the output, but the control of the execution.

That is what made the Replit database incident significant. Reports indicated that an AI coding agent deleted a live database during a code freeze and proceeded despite explicit instructions not to make changes without approval. The immediate problem was the action itself. The deeper problem was that such an action appears to have been possible in the first place.

This incident highlights a fundamental failure in environment isolation and permission design. If an autonomous agent can perform destructive actions against live data without an approval step, the permission model is too broad.

For engineering leaders, that is the real takeaway: the failure was architectural, because the system allowed a single mistake to reach production data. Once agentic tools are introduced into engineering workflows, especially around internal developer platforms or product infrastructure, prototype access and production authority must be kept clearly separate. The more autonomy these systems receive, the more deliberate the operational boundaries around them need to be.
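The separation between prototype access and production authority can be sketched as a small policy gate. This is a minimal illustration under stated assumptions, not a description of how Replit or any specific agent platform works: the `Action` type, the `authorize` function, the verb lists, and the environment labels are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical verb lists; a real system would classify operations far more carefully.
READ_ONLY_VERBS = {"select", "read", "list"}
DESTRUCTIVE_VERBS = {"drop", "delete", "truncate"}


@dataclass(frozen=True)
class Action:
    verb: str                          # operation the agent wants to run
    environment: str                   # "dev", "staging", or "production"
    approved_by: Optional[str] = None  # named human approver, if any


def authorize(action: Action, code_freeze: bool = False) -> bool:
    """Return True only if the agent may run this action."""
    verb = action.verb.lower()
    if action.environment != "production":
        return True   # sandboxes stay open for experimentation
    if verb in READ_ONLY_VERBS:
        return True   # reads never change production state
    if code_freeze:
        return False  # a freeze blocks every production write, approved or not
    if verb in DESTRUCTIVE_VERBS:
        return action.approved_by is not None  # destructive writes need a named human
    return True


print(authorize(Action("delete", "dev")))                              # allowed in a sandbox
print(authorize(Action("delete", "production")))                       # denied: no approver
print(authorize(Action("delete", "production", "alice"), True))        # denied: code freeze
```

The design choice worth noting is that the gate sits outside the agent. The agent's instructions can be ignored or misread, so the boundary is enforced by the environment rather than by the prompt.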

Failure Pattern 2: AI in High-Trust Workflows Without Verification

If the previous pattern shows what happens when AI is allowed to execute actions, a different kind of failure appears when AI-generated output enters workflows that depend on credibility and expert judgment.

In high-trust environments — professional services, law, or research — the cost of error is amplified by reputational damage and the loss of institutional credibility. AI failures in these sectors often occur when review standards lag behind the speed of adoption.

In 2025, Deloitte Australia issued a partial refund to the federal government after an AI-assisted report for the Department of Employment and Workplace Relations (DEWR) was found to contain hallucinated (fabricated) material. The report, valued at roughly A$440,000, included nonexistent academic references and a fabricated quotation attributed to a Federal Court judgment.

This case failed for a different reason than the Replit incident. The system did not execute destructive commands; the human-in-the-loop safeguard broke down. While the technology clearly failed by inventing sources, the expensive consequence came from a delivery process in which AI use was not disclosed up front and the output reached the client without rigorous expert verification.

When verification is sacrificed for speed, the foundation of professional delivery is compromised — making recommendations untrustworthy regardless of the model's technical sophistication.
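One way to make verification a hard requirement rather than a habit is a release gate that refuses to ship a deliverable while any citation lacks a named reviewer. The sketch below is illustrative only: `Citation`, `unverified`, and `release_allowed` are hypothetical names, and the sample entries are placeholders rather than real references.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Citation:
    text: str
    verified_by: Optional[str] = None  # reviewer who checked the primary source


def unverified(citations: List[Citation]) -> List[Citation]:
    """Return every citation that no human has checked against its source."""
    return [c for c in citations if not c.verified_by]


def release_allowed(citations: List[Citation]) -> bool:
    """A deliverable ships only when every citation has a named reviewer."""
    return not unverified(citations)


draft = [
    Citation("Placeholder reference A", verified_by="senior.analyst"),
    Citation("Placeholder reference B"),  # AI-suggested, not yet checked
]

print(release_allowed(draft))                # blocked while reference B is unchecked
print([c.text for c in unverified(draft)])   # the items a reviewer still owes
```

Recording who verified each item, not just whether it was verified, also gives the organization an accountability trail if a fabricated source is discovered later.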

Failure Pattern 3: AI Inside Regulated Decisions

When AI influences high-stakes decisions such as hiring, eligibility, or credit, failure becomes a matter of legal and compliance exposure. Regulated use cases fail differently because the standard of care is legally defined, and intent does not protect against liability.

A relevant example emerged in the case of iTutorGroup, which agreed to pay $365,000 to settle an Equal Employment Opportunity Commission (EEOC) lawsuit alleging that its application review software automatically rejected female applicants aged 55 or older and male applicants aged 60 or older. The case matters not simply because bias appeared in an automated system, but because the system was operating inside a decision path where the consequences were already governed by law.

This failure demonstrates that when automation influences hiring, credit, insurance, healthcare access, or other protected decisions, the organization remains responsible for the outcome. How the system is described does not change that responsibility.

For CTOs in FinTech, HealthTech, or LegalTech, compliance cannot be added after deployment. It has to shape the system from the beginning: the choice of inputs, the review model, the degree of automation, the documentation around decisions, and the ability to audit outcomes over time. Without that, AI introduces institutional risk.
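Auditing outcomes over time can start with something as simple as comparing selection rates across groups. The sketch below uses a commonly cited screening heuristic, often called the four-fifths rule, in which a group whose selection rate falls below 80% of the highest group's rate is flagged for review. That heuristic and its 0.8 threshold are assumptions of this example, not something the cases above prescribe, and a flag is a prompt for investigation rather than a legal finding. The function names and the sample data are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def selection_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of applicants selected within each group."""
    totals: Counter = Counter()
    selected: Counter = Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates: Dict[str, float]) -> Dict[str, float]:
    """Express each group's rate relative to the highest-rate group."""
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody selected, so no disparity is measurable
    return {g: r / best for g, r in rates.items()}


def flagged_groups(decisions: Iterable[Tuple[str, bool]],
                   threshold: float = 0.8) -> List[str]:
    """List groups whose impact ratio falls below the review threshold."""
    ratios = impact_ratios(selection_rates(decisions))
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Synthetic data: 50 of 100 younger applicants advance, 10 of 50 older applicants do.
sample = ([("under_55", True)] * 50 + [("under_55", False)] * 50
          + [("55_plus", True)] * 10 + [("55_plus", False)] * 40)

print(selection_rates(sample))
print(flagged_groups(sample))
```

Running a check like this on every release and on a regular schedule afterward turns "ability to audit outcomes" from a policy statement into a measurable control.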

Failure Pattern 4: Underestimating Platform and Delivery Complexity

The previous examples show how AI mistakes become expensive when control breaks down or verification weakens inside a live workflow. A different kind of failure appears when the initiative itself becomes too complex to deliver. In these cases, the larger issue is that the organization often underestimates the effort required to integrate the system into real operations and decision processes.

A useful example is MD Anderson's oncology project with IBM Watson. The initiative reportedly ran for more than three years and cost over $60 million before being closed, with reporting pointing to delays, overspending, and management problems rather than a single isolated technical defect.

These problems follow a familiar pattern in large technology projects. The technical ambition of the project was significant, but the surrounding conditions — data readiness, workflow integration, and delivery coordination — were less mature than the scope required. Complexity accumulated faster than the organization could absorb it.

For organizations planning large AI programs, this is an important signal. Some of the most expensive AI failures are failures of execution, not of intelligence. The risk appears when executive ambition moves faster than software maturity, or when the organization treats AI as a feature before it has built the platform conditions needed to support it.

These projects often fail for the same reasons large transformation programs fail: weak delivery alignment, underestimated integration work, and architecture that is not ready for the scope being placed on it.

Failure Pattern 5: Data and IP Governance Ignored Upstream

Not all expensive AI failures emerge during deployment. In some cases, the risk is introduced much earlier, in the data that trains the system itself. Risks embedded in data sourcing, licensing, and provenance can stay hidden until they surface as massive settlements and mandatory data deletion.

A legal dispute involving Anthropic illustrates this type of exposure. In September 2025, the company agreed to a landmark $1.5 billion settlement with authors and publishers. While the court recognized that certain forms of model training could qualify as transformative use, it concluded that downloading and storing pirated copies of copyrighted works was not protected as fair use. Under the settlement, Anthropic agreed to pay roughly $3,000 per work for an estimated 500,000 works and to destroy the pirated datasets.

For organizations building or adopting AI systems, this introduces a different category of responsibility. Data lineage, licensing rights, and documentation practices become part of the system architecture rather than purely legal considerations. When the provenance of training data cannot be demonstrated, the system carries unresolved legal exposure regardless of its technical performance.

This is why upstream governance increasingly functions as a signal of vendor maturity. AI systems rely on multi-stage data pipelines that collect, transform, and distribute data. Without clear records of where that data originates and under what permissions it can be used, the resulting system may operate effectively from a technical standpoint while remaining fragile from a legal and commercial perspective.
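Treating provenance as part of the architecture can mean that a dataset is rejected by the pipeline itself when its origin or license is undocumented. The following is a minimal sketch under stated assumptions: `DatasetRecord`, `provenance_issues`, `blocked_records`, the license allowlist, and the example URLs are all hypothetical and would need to reflect an organization's actual legal review.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical allowlist; the real set must come from legal review.
ALLOWED_LICENSES = {"CC0-1.0", "CC-BY-4.0", "commercial-agreement"}


@dataclass(frozen=True)
class DatasetRecord:
    name: str
    source_url: Optional[str]  # where the data was obtained
    license: Optional[str]     # terms under which it may be used


def provenance_issues(record: DatasetRecord) -> List[str]:
    """Return the reasons a dataset cannot be cleared for training."""
    issues = []
    if not record.source_url:
        issues.append("missing source")
    if not record.license:
        issues.append("missing license")
    elif record.license not in ALLOWED_LICENSES:
        issues.append(f"license not on allowlist: {record.license}")
    return issues


def blocked_records(manifest: List[DatasetRecord]) -> Dict[str, List[str]]:
    """Map each problematic dataset name to its list of issues."""
    report = {}
    for record in manifest:
        issues = provenance_issues(record)
        if issues:
            report[record.name] = issues
    return report


manifest = [
    DatasetRecord("support_tickets", "https://example.com/internal", "commercial-agreement"),
    DatasetRecord("scraped_books", None, None),
    DatasetRecord("forum_dump", "https://example.com/forum", "unknown-terms"),
]

print(blocked_records(manifest))
```

A gate like this does not prove that a license is valid, but it guarantees that every dataset entering the pipeline has a documented origin someone can be asked about.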

AI Failure Cases and Lessons

What Founders and CTOs Should Evaluate Before Shipping AI

To prevent these patterns from maturing into incidents, leadership must audit the systems surrounding the AI — not just the AI itself. Before authorizing deployment into critical workflows, four areas deserve close evaluation.

Conclusion

These cases show that the costliest AI failures emerge when AI is introduced into environments where the surrounding systems were not designed for the level of influence the technology now has.

Public discussions about AI risk often focus on hallucinations or unusual outputs. In practice, the more consequential problems appear when AI becomes part of real workflows — once a system participates in how a company writes code, produces analysis, evaluates applicants, or processes data.

The question is whether the surrounding system can absorb mistakes without creating operational, legal, or reputational consequences.

This changes how organizations should think about AI adoption. Deploying AI is not just a tooling decision — it is a systems decision. It requires clear operational boundaries, reliable data foundations, defined ownership, and mechanisms for review and control.

Organizations that treat AI as an isolated capability often discover problems only after deployment. The companies that benefit most from AI tend to invest equally in the systems around the model.
