Gartner projects that by 2026, 40% of enterprise software applications will include agentic AI. In 2025, that number sat below 5%. The adoption curve is steep. The governance curve is flat.

Fewer than one in ten organizations have a governance framework built for AI agents, according to Gartner's research. Most companies that deploy agents in production rely on general AI usage policies, the same documents they wrote for chatbot features and recommendation engines. Those policies assume a human initiates every action and reviews every output. Agents break that assumption. They act, chain decisions together, and call external services on their own.

AI agent governance belongs in your system architecture. If you treat it as a compliance document, you will retrofit it later at several times the cost.

Why Governance Defaults to Policy

McKinsey's 2025 State of AI report found that 62% of respondents say their organizations are experimenting with AI agents. Governance and risk management lagged far behind. The pattern is familiar: engineering ships the feature, legal drafts a policy, and nobody connects the two at the system level.

There are practical reasons for this gap. Governance feels non-functional. No product manager writes a ticket for it. The agent works in staging, passes QA, and goes to production. The policy PDF lives in a shared drive.

NIST's AI Risk Management Framework draws a useful distinction here. Its "Govern" function covers organizational intent: roles, accountability, and risk appetite. Its "Manage" function covers operational enforcement: monitoring, incident response, and runtime controls. Most teams stop at "Govern" and skip "Manage." They define what the agent should do. They do not build the systems that constrain what it can do.

A policy that states the agent must not access patient records without authorization carries no weight if the agent's service account holds broad database permissions. The constraint has to live in the system, or it does not exist.

What Agentic AI Governance Looks Like As Architecture

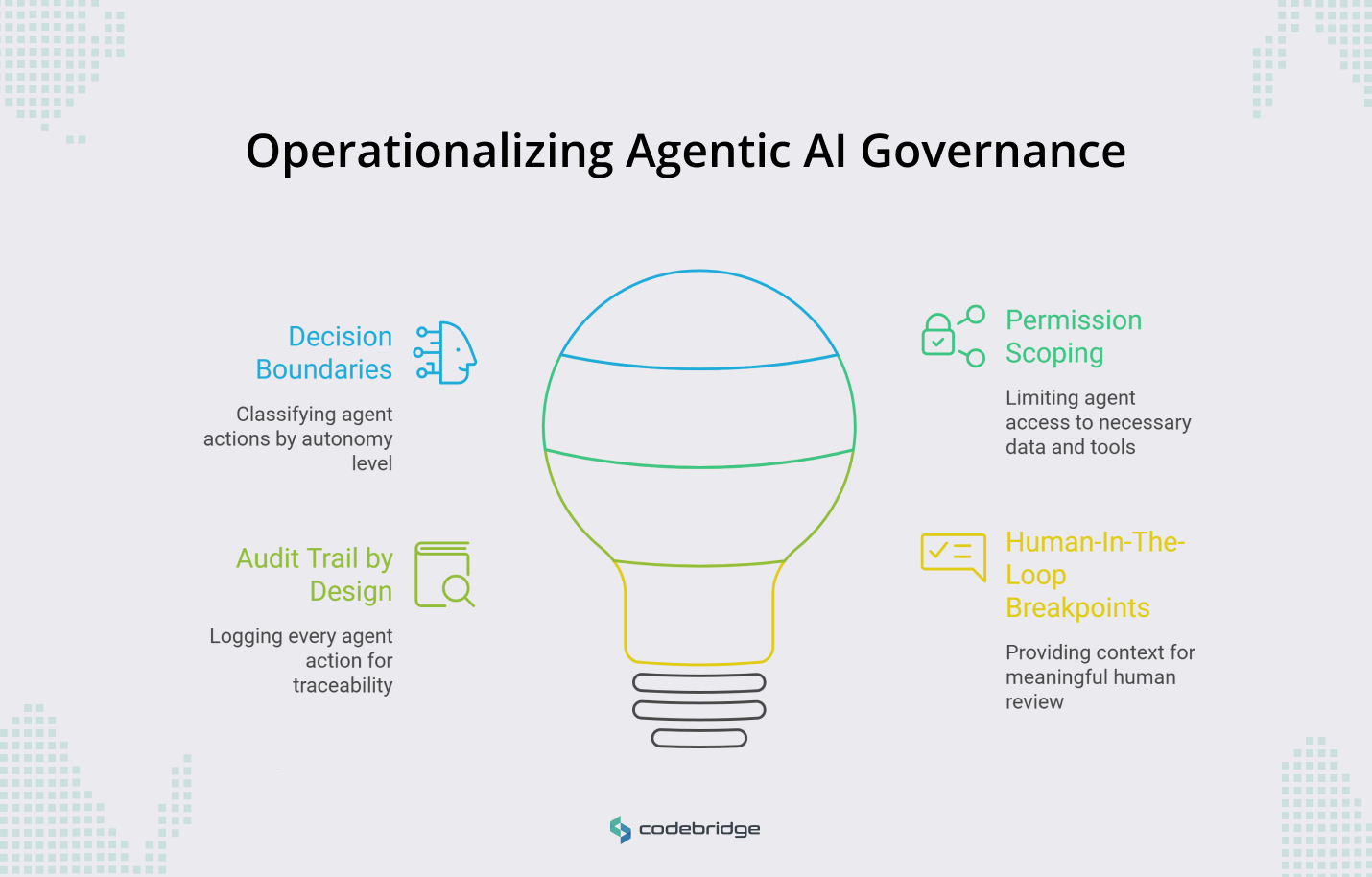

Four design patterns make governance operational. Each maps to a function in NIST's AI RMF. None requires a dedicated governance team. They require engineering decisions during system design.

Decision Boundaries

NIST calls this "human-AI teaming." You classify agent actions by autonomy level before you write any agent code. Some actions the agent executes on its own: formatting a report, summarizing a dataset. Some it only recommends: flagging a transaction for review, suggesting a treatment adjustment. Some it escalates to a human: any action above a dollar threshold, any decision in a regulated workflow.

The EU AI Act, which entered into force in August 2024 and phases in through 2026, requires human oversight mechanisms for high-risk AI systems. If you sell into European markets, these boundaries are a legal requirement. If you do not, they are still an engineering safeguard. Without them, you discover the agent's actual decision scope during an incident, not during design.
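
The three autonomy tiers can be written down as data before any agent logic exists. The following is a minimal sketch, not a prescribed implementation: the action names, the 500-dollar threshold, and the `patient_triage` workflow label are all hypothetical, and unknown actions escalate by default so that new capabilities start under human control.

```python
from enum import Enum


class Autonomy(Enum):
    EXECUTE = "execute"      # agent acts on its own
    RECOMMEND = "recommend"  # agent proposes, a human decides
    ESCALATE = "escalate"    # agent hands the decision to a human


# Hypothetical action names, threshold, and workflow labels (illustration only).
ACTION_TIERS = {
    "format_report": Autonomy.EXECUTE,
    "summarize_dataset": Autonomy.EXECUTE,
    "flag_transaction": Autonomy.RECOMMEND,
    "suggest_treatment_adjustment": Autonomy.RECOMMEND,
}
ESCALATION_THRESHOLD_USD = 500.0
REGULATED_WORKFLOWS = {"patient_triage"}


def classify(action: str, amount_usd: float = 0.0, workflow: str = "") -> Autonomy:
    """Return the autonomy tier for an action; unknown actions escalate."""
    # Dollar threshold and regulated workflows override the per-action tier.
    if amount_usd > ESCALATION_THRESHOLD_USD or workflow in REGULATED_WORKFLOWS:
        return Autonomy.ESCALATE
    return ACTION_TIERS.get(action, Autonomy.ESCALATE)
```

Keeping the tiers in one table means the answer to "where does the threshold live" is a single file that can be reviewed and versioned.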

Permission Scoping

OWASP lists "excessive agency" in the 2025 update of its Top 10 for LLM Applications. The risk: an agent with access to tools and data it does not need for the current task. The fix is the same principle you apply to any microservice. Scope the agent's data access and API permissions per session, per task. If the agent handles customer support queries, it should not hold write access to billing records. This is least-privilege, applied to a new kind of service.
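
Per-task scoping might look like the sketch below. It is an illustration under stated assumptions: the task names, the permission strings such as `billing:read`, and the `ScopedSession` type are hypothetical and do not correspond to any particular framework. In a real system the check would sit in front of the actual tool or database call.

```python
from dataclasses import dataclass


# Hypothetical task-to-permission map; names are illustrative only.
TASK_SCOPES = {
    "customer_support": frozenset({"tickets:read", "tickets:write", "billing:read"}),
    "report_generation": frozenset({"analytics:read"}),
}


@dataclass(frozen=True)
class ScopedSession:
    task: str
    permissions: frozenset

    def require(self, permission: str) -> None:
        """Raise PermissionError if this session was not granted the permission."""
        if permission not in self.permissions:
            raise PermissionError(f"{self.task!r} session lacks {permission!r}")


def open_session(task: str) -> ScopedSession:
    """Grant only the permissions listed for the task; unknown tasks get none."""
    return ScopedSession(task=task, permissions=TASK_SCOPES.get(task, frozenset()))
```

A support session opened this way can read billing data but fails on `require("billing:write")`, which is the constraint the policy document could only describe.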

Audit Trail by Design

Gartner's AI TRiSM framework emphasizes traceability and explainability as operational requirements. Every agent action needs a log entry that captures the input, the reasoning chain, the output, and the confidence signal. These logs should be queryable in production, structured for both debugging and compliance review.
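
One way to make those four elements queryable is to emit a structured record per action. The sketch below is an assumption about what such a schema could contain, not a schema defined by Gartner or any standard; the field names and the `AuditRecord` type are hypothetical.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    # Hypothetical schema: one record per agent action.
    agent_id: str
    action: str
    input_summary: str
    reasoning_steps: list
    output_summary: str
    confidence: float
    record_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize to one JSON line, suitable for a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)
```

Writing one JSON line per action keeps the records searchable with ordinary log tooling, so incident responders and compliance reviewers query the same data.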

When a regulator, a customer, or your own incident response team asks "why did the agent do that," you need an answer within hours, not a three-week forensic reconstruction. Teams that skip audit logging at build time end up reconstructing it from application logs and database snapshots, a slow and unreliable process.

Human-In-The-Loop Breakpoints

Codebridge's approach to human verification distinguishes between meaningful human control and rubber-stamp approval. A breakpoint works only if the reviewer receives enough context to make a real decision in under thirty seconds: the agent's proposed action, the data it used, the alternatives it considered. If you design the breakpoint as a modal dialog with a yes/no button and no context, reviewers will click "yes" on autopilot. You will have the compliance checkbox without the actual oversight.

Build the review interface alongside the agent, not after the agent ships.
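
A breakpoint can refuse to open at all when the context is missing. The sketch below assumes a hypothetical `ReviewRequest` type and a `decide` callback standing in for the real review interface; it illustrates the idea rather than any specific product's design.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ReviewRequest:
    # The three pieces of context a reviewer needs (hypothetical field names).
    proposed_action: str
    data_used: list
    alternatives: list


def missing_context(req: ReviewRequest) -> list:
    """Return the names of context fields that are empty."""
    fields = {
        "proposed_action": req.proposed_action,
        "data_used": req.data_used,
        "alternatives": req.alternatives,
    }
    return [name for name, value in fields.items() if not value]


def submit_for_review(req: ReviewRequest, decide: Callable[[ReviewRequest], bool]) -> bool:
    """Ask a human to approve; reject requests that lack reviewer context."""
    gaps = missing_context(req)
    if gaps:
        raise ValueError(f"review request missing context: {gaps}")
    return decide(req)
```

Failing loudly on an incomplete request pushes the agent team to supply the context, instead of leaving the reviewer to approve without it.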

The Cost of Retrofitting

McKinsey's data shows that organizations with mature AI governance are 1.5 times more likely to report positive ROI from AI initiatives. The inverse tells you what retrofitting costs.

Consider a HealthTech product that shipped an agent handling patient triage without structured audit logging. The agent worked. Six months later, regulators asked for decision records. The team spent three months rebuilding the agent's execution layer to produce the logs that should have existed from day one. Feature work stopped. The compliance deadline drove the roadmap.

A FinTech parallel: an agent that auto-approved low-value transactions without explicit decision boundaries. A false positive triggered a compliance review. The team discovered they could not explain which transactions the agent had approved, on what basis, or where the threshold lived in the code. They redesigned the entire workflow.

Both teams had policies. Neither team had systems that enforced those policies at runtime. The cost was engineering time, delayed launches, and eroded trust with customers and regulators. For any company operating in the EU market or targeting regulated domains like HealthTech and FinTech, the EU AI Act's phased deadlines make this a time-boxed problem. You either build compliance into the architecture now or pause feature delivery later to add it.

Practices For Governing Agentic AI Systems: A Starting Point

Before your team builds any agent feature, answer four questions during sprint planning.

- What can this agent decide without a human? Define the autonomy tiers and write them into the agent's execution logic. Review the tiers quarterly as the agent's scope expands.

- What is the minimum data and API access this agent needs? Scope permissions per task. Treat the agent as an untrusted service in your architecture, because from a security perspective, it is one.

- What do you log, and who can query it? Design the audit schema before you design the agent's output format. Your future incident responders and compliance reviewers are the users of this data.

- Where does a human review, and what context do they need? Build the review interface as a first-class component, not a bolted-on approval screen.

Codebridge offers a deeper structure for teams that want to formalize this further. But these four questions cover the architectural surface area that most teams miss.

Conclusion

Teams that treat governance as architecture from the start spend their engineering hours on new capabilities. Teams that defer it spend those hours rebuilding existing systems under regulatory or operational pressure. McKinsey's finding that organizations with mature AI governance are 1.5 times more likely to report positive ROI reflects this divergence.

AI agent governance is a system design practice. You can run it with the team you have, in the sprint cadence you already use. The cost of starting now is a few hours of planning per feature. The cost of starting later is months of rework on systems that are already in production.

You do not need a governance committee. You need four answered questions per agent feature, starting with the one your team is building now.
