Three weeks into his new IT Director role, Marcus discovered he'd inherited accountability for a major project with an external consulting firm, one he'd never met, never vetted, and whose contract he'd never seen. His boss wanted AI solutions that would "WOW the CEO" within a month. When Marcus explained his nine-person team was already at capacity, the response was dismissive: "You have 9 people, don't tell me you don't have enough resources." No onboarding. No role definitions. No knowledge transfer. Just expectations and a ticking clock.

This scenario plays out in technology organizations every day: pressure to ship faster, talent gaps that won't close, and external teams brought in to fill them, often with vetting processes that amount to little more than checking references and hoping for the best.

KEY TAKEAWAYS

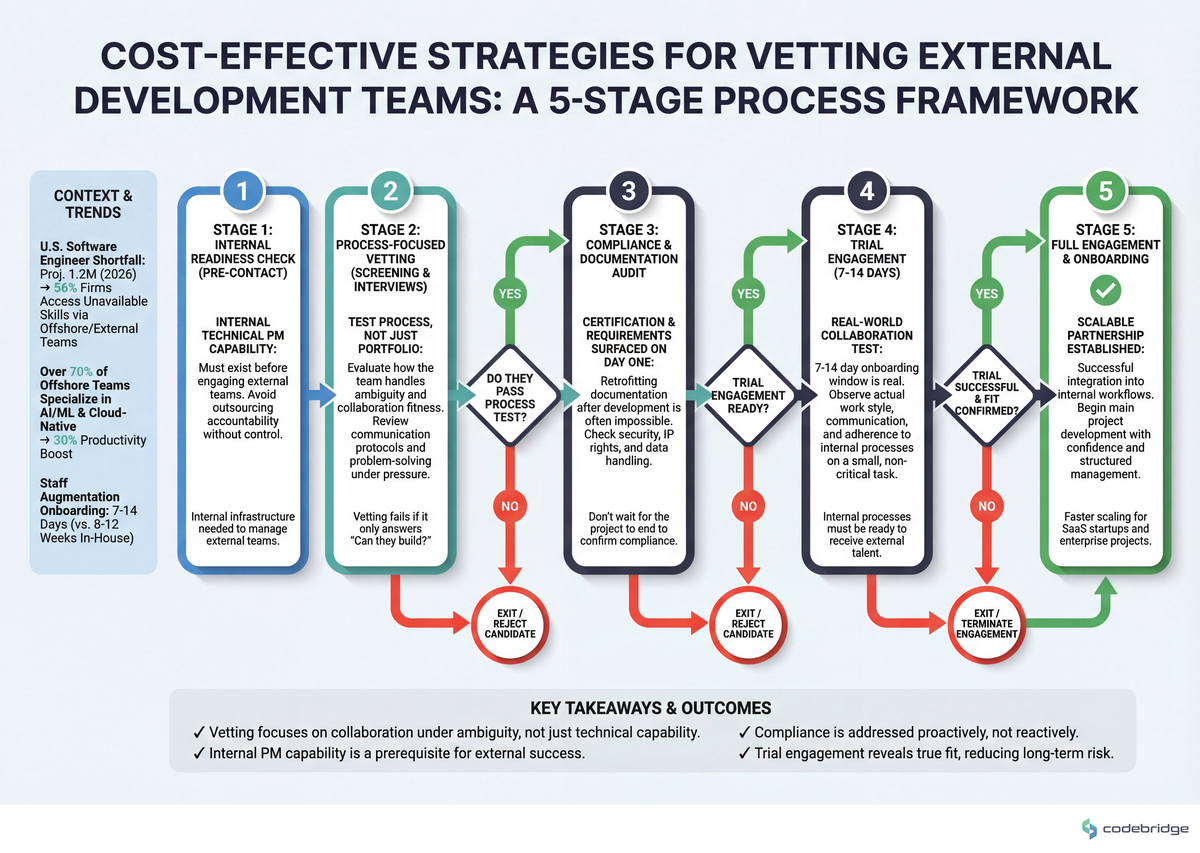

Internal technical PM capability must exist before engaging external teams. Without it, you're outsourcing accountability without control.

Vetting should test process, not just portfolio: how a team handles ambiguity reveals more than its best case studies.

Certification and compliance requirements must be surfaced on day one, because retrofitting documentation after development is often impossible.

The 7-14 day onboarding window is real, but only if your internal processes are ready to receive external talent.

The Hidden Problem: We're Outsourcing Without Infrastructure

The U.S. software engineer shortfall is projected to reach 1.2 million in 2026, according to KineticStaff's 2025 analysis. This isn't a temporary blip; it's a structural reality driven by AI, blockchain, and cloud-native demand that's outpacing domestic talent pipelines. The result: 56% of firms now use offshore and external teams to access specialized skills that simply aren't available in-house.

But here's what the outsourcing pitch decks don't show you: the failure mode isn't usually the external team's technical capability. It's the gap between your organization's readiness and the external team's need for clarity. When a financial services broker recently explored bringing in external developers for AI implementation, the community's advice was unanimous: "The initial solution is to create an in-house Project Manager/Tech team to engage with an external developer." Before you can effectively vet external teams, you need internal infrastructure to manage them.

The vetting process most organizations use (portfolio review, reference calls, maybe a technical assessment) evaluates the wrong things. It answers "Can they build?" when the real question is "Can we work together effectively under ambiguity?"

Where Vetting Goes Wrong: Three Patterns

Pattern 1: Vetting Skills, Not Process

Over 70% of offshore teams now specialize in AI/ML and cloud-native development, per Vrinsofts' 2025 trends analysis. Technical capability is increasingly table stakes. What separates effective external partnerships from expensive failures is process alignment: how teams handle scope changes, communicate blockers, and escalate decisions.

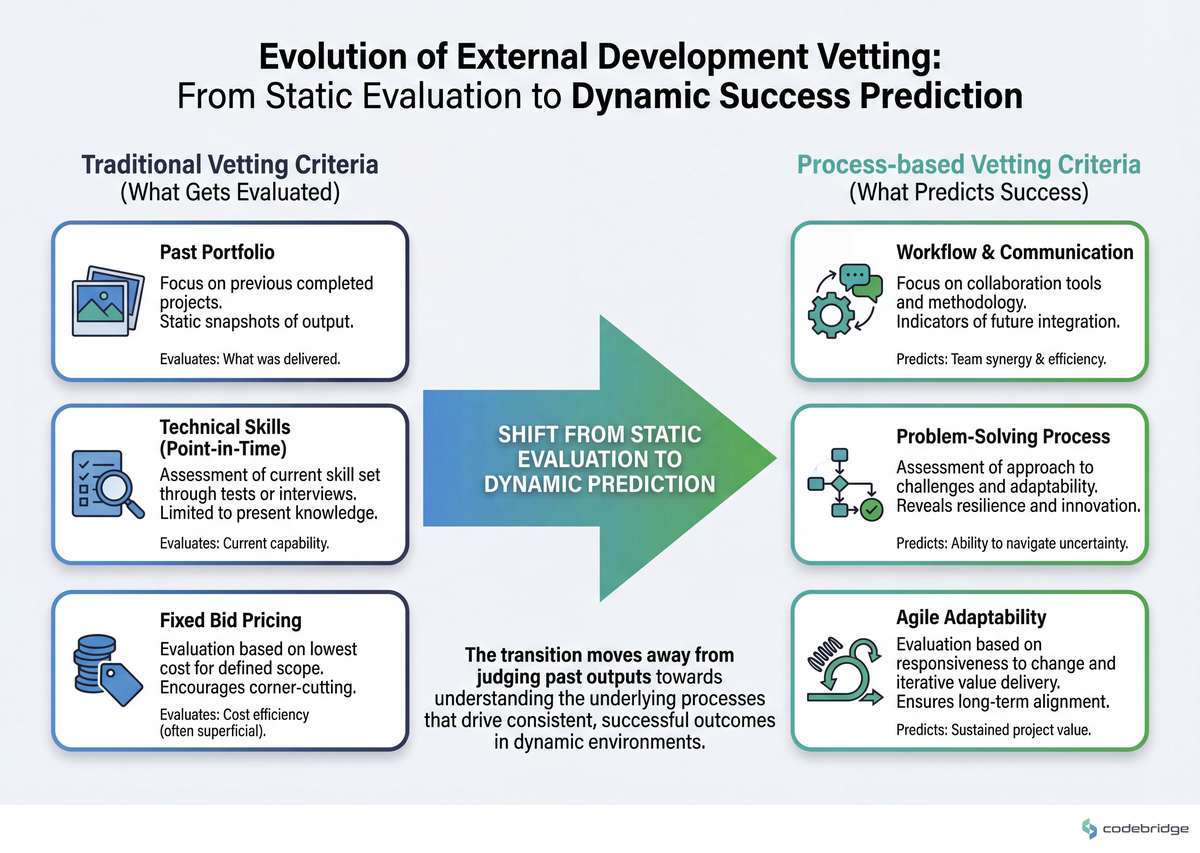

The comparison below illustrates what traditional vetting misses versus what actually predicts partnership success:

Most vetting checklists focus heavily on the left column: past projects, tech stack familiarity, team size. But the right column (communication cadence preferences, escalation protocols, how they've handled past scope disputes) rarely gets explored until you're already in contract.

Pattern 2: No Internal Receiving Capacity

Staff augmentation can onboard in 7-14 days versus 8-12 weeks for in-house hires. That speed is real: 54% of U.S. tech firms now prefer outsourcing to India specifically for this flexibility. But the 7-14 day window assumes your organization is ready to receive external talent. Most aren't.

"When I explain they're already at capacity, he says 'You have 9 people, don't tell me you don't have enough resources.'"

IT Director, Reddit r/ITManagers

This IT Director's situation reveals a common failure: leadership treats external teams as additive capacity without accounting for the internal coordination overhead. Every external developer needs context, code review, architecture decisions, and access management. Without dedicated internal capacity to provide these, you're not augmenting; you're creating a coordination tax on your existing team.

The cost of external teams isn't just their rate. It's their rate plus the internal hours required to make them productive. Most vetting processes ignore this entirely.
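The arithmetic behind that claim can be sketched in a few lines. This is a minimal illustration, not a costing model: the class name, field names, and every figure below are hypothetical placeholders, so substitute your own vendor rate and an honest estimate of weekly internal support hours.

```python
from dataclasses import dataclass


@dataclass
class AugmentationCost:
    """Weekly cost inputs for one external developer (all figures hypothetical)."""
    external_rate: float           # hourly rate billed by the vendor
    external_hours: float          # billed hours per week
    internal_rate: float           # loaded hourly cost of internal staff
    internal_support_hours: float  # weekly internal hours for context, review, access

    def weekly_total(self) -> float:
        # Vendor invoice plus the internal time needed to keep the developer productive.
        return (self.external_rate * self.external_hours
                + self.internal_rate * self.internal_support_hours)

    def effective_hourly_rate(self) -> float:
        # Total weekly cost spread over the external hours actually delivered.
        return self.weekly_total() / self.external_hours


# Hypothetical example: a $60/hour developer who needs 8 hours of internal support a week.
cost = AugmentationCost(external_rate=60.0, external_hours=40.0,
                        internal_rate=90.0, internal_support_hours=8.0)
print(cost.weekly_total())           # 3120.0
print(cost.effective_hourly_rate())  # 78.0
```

With these made-up inputs, the quoted $60 rate works out to $78 per delivered hour once internal support time is counted. The gap widens as support hours grow, which is the number most vetting processes never estimate.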

Pattern 3: Compliance as Afterthought

One development team learned this lesson catastrophically: their entire product failed certification because of mistakes made in the earliest development phases. As the post-mortem noted, "Adding missing requirements, processes, or documentation to a product after it has been constructed can be virtually impossible."

This wasn't a technical failure. It was a vetting failure. The external team was capable, but no one established certification requirements upfront. No one brought the certification body into early conversations. The product worked perfectly. It just couldn't ship.

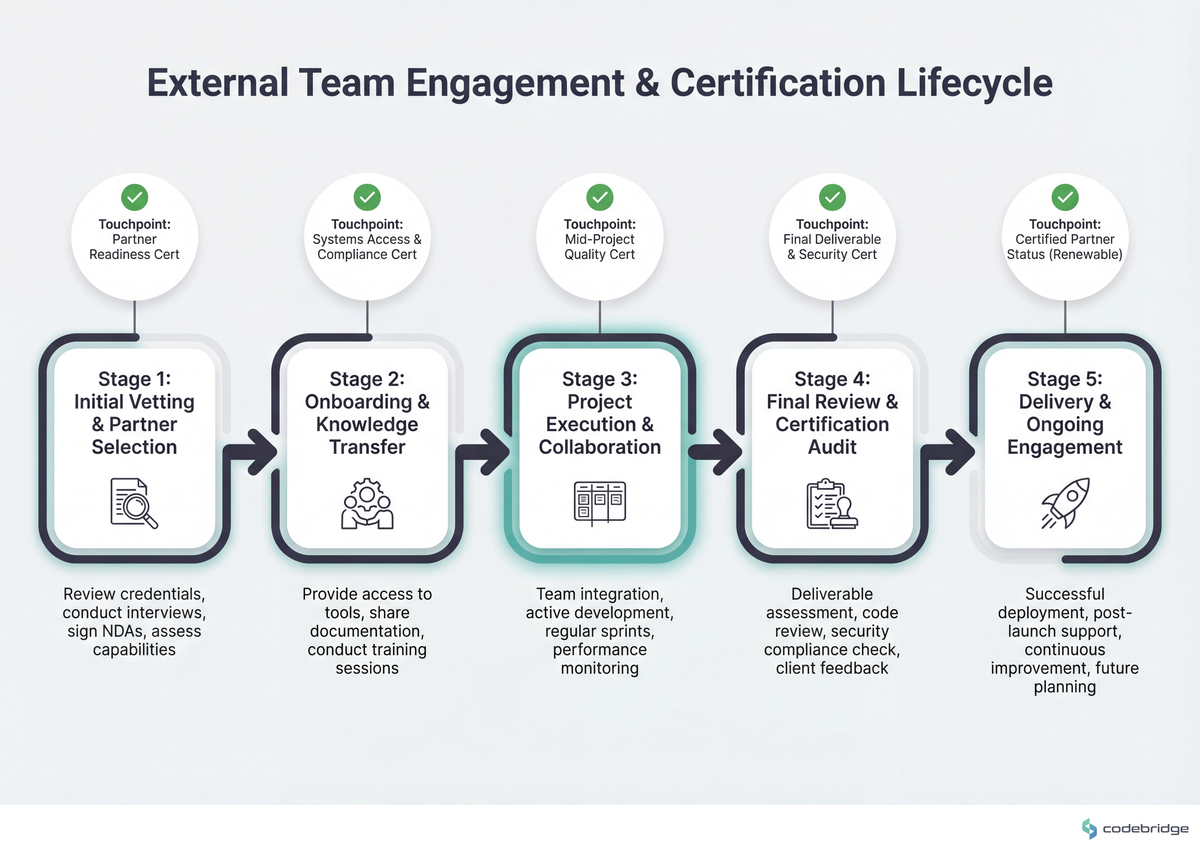

The timeline below shows how certification requirements should integrate with external team engagement:

For regulated industries (healthcare, financial services, safety-critical systems), vetting must include explicit questions about the external team's experience with your specific compliance requirements. Not "have you done regulated work" but "walk me through how you've structured documentation for [specific certification body]."

The Pattern: What Effective Organizations Do Differently

Organizations that consistently succeed with external development teams share a common approach: they vet for collaboration fitness, not just technical capability. This manifests in three specific practices.

They Build Internal Receiving Capacity First

Before issuing an RFP or scheduling vendor calls, effective organizations establish internal technical project management capability. This doesn't mean hiring a full PMO; it means designating someone accountable for scope definition, documentation standards, security and data handling requirements, and communication protocols.

The financial services broker exploring AI implementation got this advice from the community: establish internal PM capability to handle "planning, scope definition, privacy/data security, and documentation" before engaging external developers. This isn't bureaucracy; it's the infrastructure that makes external partnerships work.

They Test Process Under Pressure

Instead of relying solely on portfolio reviews, effective vetting includes scenario-based discussions:

- "Walk me through a time when requirements changed mid-project." Listen for how they communicated, who made decisions, and how they handled the commercial implications.

- "Describe a situation where you disagreed with a client's technical decision." You want teams who push back thoughtfully, not yes-people who'll build whatever you ask regardless of consequences.

- "How do you handle a situation where a team member is underperforming?" External teams often rotate staff. Understanding their internal quality management matters.

They Front-Load Compliance and Documentation

For any project with regulatory, certification, or audit requirements, effective organizations make these explicit in the vetting process, not as a checkbox but as a detailed discussion:

- What documentation formats does the external team use by default?

- Have they worked with your specific certification bodies before?

- How do they handle traceability between requirements and implementation?

- What's their process for design reviews and sign-offs?

The team whose product failed certification learned that these questions can't wait until development is underway. By then, the architecture decisions that make compliance possible (or impossible) have already been made.

A Cost-Effective Vetting Framework

Effective vetting doesn't require expensive consultants or months of evaluation. It requires asking better questions and structuring the process to reveal collaboration fitness. The following framework illustrates this approach:

Stage 1: Internal Readiness Check (Before Any External Contact)

Before evaluating a single external team, answer these questions internally:

- Who will be the internal technical point of contact with decision-making authority?

- What documentation standards must external work meet?

- What security, compliance, or certification requirements apply?

- How many hours per week can internal team members dedicate to external coordination?

- What's the escalation path when scope or timeline conflicts arise?

If you can't answer these questions, you're not ready to engage external teams, regardless of how urgent the project feels.
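One way to make the Stage 1 check hard to skip is to treat it as a simple gate. The sketch below is an assumption about how you might encode it: the dictionary keys and function names are hypothetical, the question text is the list above, and "answered" here only means "non-blank", which is a low bar you may want to raise.

```python
# Keys are hypothetical labels; the questions are the Stage 1 list above.
READINESS_QUESTIONS = {
    "technical_contact": "Who will be the internal technical point of contact with decision-making authority?",
    "documentation_standards": "What documentation standards must external work meet?",
    "compliance_requirements": "What security, compliance, or certification requirements apply?",
    "coordination_hours": "How many hours per week can internal team members dedicate to external coordination?",
    "escalation_path": "What's the escalation path when scope or timeline conflicts arise?",
}


def unanswered(answers: dict[str, str]) -> list[str]:
    """Return the keys of questions with no answer or a blank answer."""
    return [key for key in READINESS_QUESTIONS
            if not answers.get(key, "").strip()]


def ready_to_engage(answers: dict[str, str]) -> bool:
    """Ready only when every readiness question has a non-blank answer."""
    return not unanswered(answers)


# Hypothetical draft: compliance is blank and the escalation path is missing entirely.
draft_answers = {
    "technical_contact": "Lead engineer, with sign-off on architecture",
    "documentation_standards": "Internal spec template",
    "compliance_requirements": "",
    "coordination_hours": "6 hours per week",
}
print(unanswered(draft_answers))       # ['compliance_requirements', 'escalation_path']
print(ready_to_engage(draft_answers))  # False
```

The point of the gate is the list it returns: the unanswered questions are the work to finish before the first vendor call.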

Stage 2: Process-Focused Screening (Not Just Portfolio)

Request specific artifacts beyond case studies:

- Sample project documentation: not marketing materials, but actual technical specs, architecture decisions, or sprint retrospectives (redacted as needed)

- Communication samples: how do they report progress? What does a typical status update look like?

- Change request process: how do they handle scope changes commercially and operationally?

These artifacts reveal more about day-to-day collaboration than any portfolio of completed projects.

Stage 3: Scenario-Based Evaluation

Instead of technical tests that evaluate individual contributors, run scenario discussions that evaluate team dynamics:

- Present a deliberately ambiguous requirement and observe how they seek clarification

- Describe a hypothetical conflict (timeline vs. quality, for example) and discuss how they'd approach it

- Ask them to critique something in your existing approach; teams who only agree are teams who won't push back when it matters

Stage 4: Reference Conversations (Not Calls)

Standard reference calls yield predictable positive responses. Instead:

- Ask references to describe a specific challenge that arose and how it was resolved

- Request to speak with someone who managed the day-to-day relationship, not just the executive sponsor

- Ask what they would do differently if starting the engagement over

Stage 5: Paid Trial Engagement

For significant engagements, a paid trial project (2-4 weeks) reveals more than any evaluation process. Structure it to test:

- How quickly they become productive with your codebase and tools

- How they communicate blockers and questions

- How their work integrates with your existing team's workflow

- Whether their documentation meets your standards without extensive revision

The trial cost is a fraction of the cost of a failed six-month engagement. Treat it as insurance, not expense.
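The insurance framing can be checked with rough arithmetic. The sketch below is a simplification under stated assumptions: both dollar figures are hypothetical, the trial is assumed to expose a bad fit when there is one, and the trial work is treated as having no residual value (which overstates the trial's true cost).

```python
def break_even_probability(trial_cost: float, failed_engagement_cost: float) -> float:
    """Chance of a bad fit above which the trial costs less than the expected loss."""
    if failed_engagement_cost <= 0:
        raise ValueError("failed_engagement_cost must be positive")
    return trial_cost / failed_engagement_cost


# Hypothetical figures: a short paid trial versus a failed six-month engagement.
trial_cost = 15_000.0
failed_engagement_cost = 150_000.0

threshold = break_even_probability(trial_cost, failed_engagement_cost)
print(f"{threshold:.0%}")  # 10%
```

Under these made-up numbers, the trial pays for itself if you believe there is more than a 10% chance the engagement would otherwise fail.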

The Economics of Better Vetting

Cost-effective vetting isn't about spending less on evaluation; it's about spending evaluation effort on the right things. The comparison below shows where vetting investment yields returns:

| Vetting Activity | Cost | What It Reveals | Failure Prevention Value |
|---|---|---|---|
| Portfolio review | Low | Past capability | Low (doesn't predict collaboration) |
| Reference calls | Low | Curated opinions | Low-Medium |
| Technical assessment | Medium | Individual skill | Medium (doesn't predict team dynamics) |
| Process artifact review | Low | Working style | High |
| Scenario discussions | Medium | Problem-solving approach | High |
| Paid trial engagement | Higher | Actual collaboration | Very High |

Most organizations over-invest in the top three rows and under-invest in the bottom three. Shifting this balance doesn't increase vetting cost; it redirects it toward activities with higher predictive value.

Closing the Loop

Remember Marcus, the IT Director who inherited accountability for an external team he'd never vetted? His situation wasn't unusual; it was the predictable result of organizational patterns that treat external engagement as a procurement exercise rather than a collaboration design challenge.

The talent shortage isn't going away. The 1.2 million engineer gap means external teams will remain essential for most technology organizations. The question isn't whether to use them; it's whether you'll vet them in ways that predict partnership success or just technical capability.

Start with internal readiness. Evaluate process, not just portfolio. Front-load compliance requirements. And when the stakes justify it, invest in paid trials that reveal collaboration fitness before you're committed.

The cost of better vetting is measured in hours. The cost of poor vetting is measured in failed projects, missed deadlines, and products that work perfectly but can't ship.

Building your external team vetting process?

Download our internal readiness checklist to ensure your organization is prepared before the first vendor call.

Diagnostic Checklist: Signs Your Vetting Process Needs Work

- You've engaged external teams without a designated internal technical point of contact with decision-making authority

- Your vetting process doesn't include reviewing actual project artifacts (documentation, status reports, change requests)

- Reference conversations focus on "were you satisfied?" rather than "describe a specific challenge and how it was resolved"

- Compliance, certification, or regulatory requirements aren't discussed until after contract signing

- You've never calculated the internal coordination hours required to support external team members

- Your last external engagement had scope disputes that weren't covered by the original change request process

- Internal team members describe external coordination as "taking time away from real work"

- You've never run a paid trial engagement before committing to a multi-month contract
