Choosing an AI vendor for a complex SaaS product isn’t just about picking the right model. It’s a broader decision about the system you’re building and the long-term capabilities you need.

While much of the market remains fixated on the raw capabilities of underlying large language models (LLMs), engineering leaders at technology-driven companies recognize that the model is only one component in a probabilistic system.

In a production environment, success is determined by how that system handles real-world constraints, such as multi-tenancy, complex integrations, regulated data, and strict latency service-level objectives (SLOs).

Current market realities show that nearly 78% of organizations have integrated AI into their operations, yet about 95% of generative AI pilots never move beyond the pilot stage.

AI initiatives often stall not because of model quality, but because of weak architecture, unclear governance, and systems that aren’t designed for real-world use.

At the same time, the market is expanding rapidly. New AI vendors appear every day, many offering impressive features and polished demos. On the surface, the options look strong. In practice, it’s difficult to identify a partner who will do more than deliver tools — a vendor who understands your business context, builds with production in mind, and takes responsibility for long-term results.

That’s why this article provides a practical framework for evaluating AI vendors. It’s designed to help you move past surface-level comparisons and choose a partner capable of delivering real, stable, and scalable outcomes.

What You’re Actually Buying When You “Buy AI”

Adding generative AI to a traditional software stack changes how the system behaves. Instead of producing fully predictable results based on fixed logic, now the system generates outputs that can vary.

This shift affects how teams think about reliability and operations in general. This requires companies to adopt new standards for monitoring, quality assurance, and control.

- Probabilistic outputs vs. predictable logic:

When you invest in AI, you’re not just paying for the model. You’re also paying for the system around it – the safeguards, controls, and processes that keep those variable outputs reliable and usable.

Traditional software behaves in a consistent, rule-based way — the same input produces the same output. However, AI systems work differently. They generate responses based on patterns in data, which means the same input can lead to slightly different results. And sometimes those results can be incorrect, even if they sound confident.

- The limits of a strong demo:

Many organizations are still experimenting with AI, and two-thirds of them say that they haven’t moved beyond early pilots.

A successful demo, where a model delivers an impressive result in a controlled setting, rarely guarantees success in production. Demos highlight what looks good in the moment. Production depends on solid engineering.

Although 88% of organizations report using AI regularly, only 39% see a measurable impact at the enterprise level. In many cases, pilots overlook practical challenges such as changing data, system integration issues, and accumulated technical debt, problems that only may surface at scale.

- The Core Purchase: Containment and Governance:

When your company is choosing a vendor, the priority should be their ability to manage risk and maintain control. That includes clear visibility into how the model works, tools to detect unusual behavior, and protections against misuse or attacks.

Sometimes, AI systems can be sensitive to changes in context and don’t always perform well outside the environments they were trained in. That’s why you’re not just buying a model — you’re investing in the processes and safeguards that allow you to test and adapt the system safely.

Common failure patterns in complex SaaS AI deployments

In SaaS, the stakes are elevated by multi-tenancy and the need for seamless integration. Vendors often fail because they treat AI as a standalone feature rather than a deeply embedded system component.

- Latency issues and system slowdowns:

Google’s Accelerate State of DevOps report indicates that AI adoption can actually harm software delivery stability and throughput.

In a multi-tenant SaaS, a single tenant’s high-context query can trigger a latency cascade that slows the entire system. Without proper limits, usage controls, and load management, response times increase and the overall experience suffers.

- Tool failures that spread through the workflow:

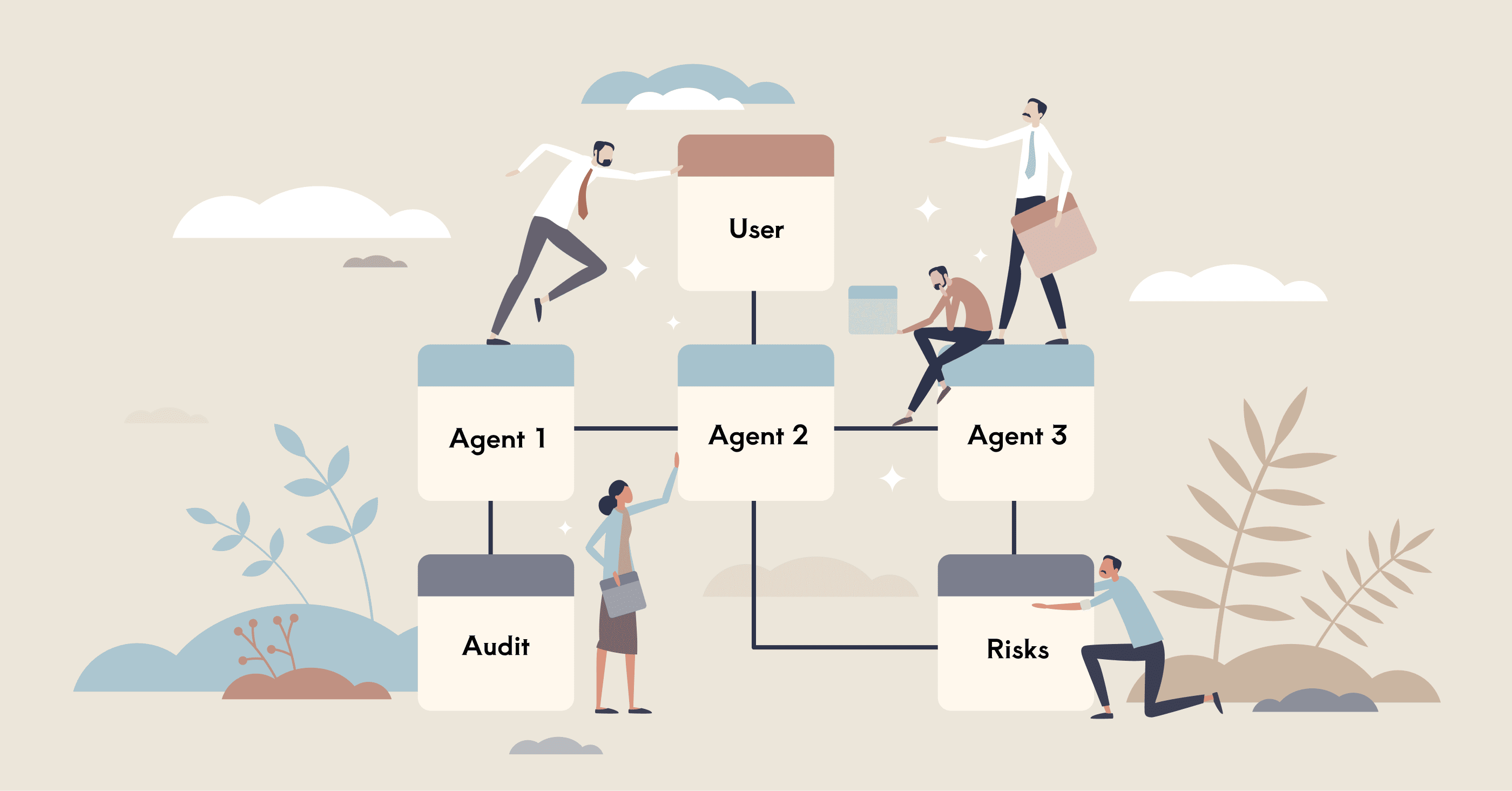

Modern agentic AI does not just generate text. Now it plans and executes multi-step work by calling external tools and APIs.

If a vendor’s orchestration layer is poorly designed, a single broken integration or a "hallucination" in the reasoning loop can propagate through the system, leading to unstable agents that crash entire workflows.

- Cost overruns and runaway usage:

AI pricing is becoming more outcome-based and Agentic Enterprise License Agreements (AELAs), which makes spending harder to predict. If agents aren’t properly controlled, they can repeat actions, call the same APIs over and over, or expand tasks beyond the original scope.

Without clear limits and monitoring in place, this kind of behavior can quickly drive up API costs. When choosing a vendor, it’s important to understand how they prevent runaway usage and keep spending under control.

- Financial models for AI are shifting toward outcome-based pricing and Agentic Enterprise License Agreements (AELAs) to mitigate unpredictable spending. However, without strict execution discipline, autonomous agents can repeat actions, call the same APIs over and over, or expand tasks beyond the original scope.

Without clear limits and monitoring in place, this kind of behavior can quickly drive up API costs. It’s important to understand how AI vendors prevent runaway usage and keep spending under control.

How to Choose a Reliable AI Solution Provider for SaaS

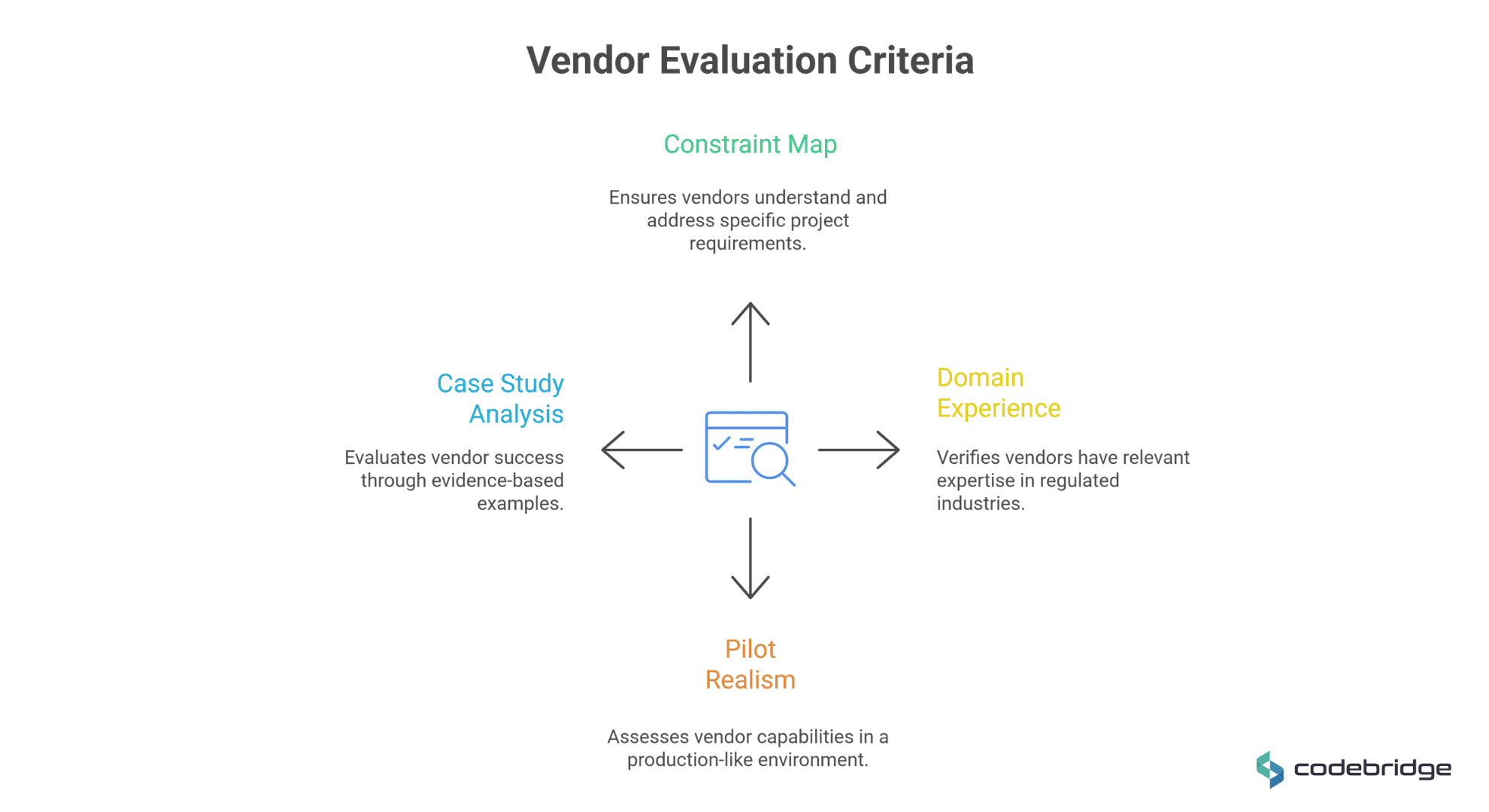

1. Define Your Constraint Map

Most teams skip the critical step of defining their own technical and regulatory constraints before evaluating vendors.

A "Constraint Map" is a buyer-owned artifact that establishes the boundaries within which the AI must operate.

The one-page constraint map: A buyer-owned artifact

A mature Constraint Map serves as the source of truth for the operating environment. It must explicitly define five key dimensions:

- Integration Surface Area:

Buyers must inventory which internal and external APIs, databases, and legacy services the AI will orchestrate.

Failure to define this leads to tool failure propagation, where one broken integration in a chain crashes the entire reasoning loop.

Vendors must demonstrate how they handle integration patterns, whether synchronous or event-driven, and where the state and memory boundaries lie.

- Multi-tenancy and Isolation Requirements:

In SaaS, tenant boundary violations are one of the biggest risks. The map must define how data is siloed between customers.

This is not merely a database question but a model security question: how does the system prevent data leakage through shared model memory or improperly scoped retrieval systems?

A mature response involves enforcing the principle of least privilege across the entire pipeline.

- Latency and Reliability Budgets:

AI components don’t always respond at the same speed. Processing times can vary, which affects the user experience. That’s why it’s important to clearly define which actions must happen instantly (synchronous) and which can run in the background (asynchronous).

Traditional software metrics focus on release speed and system stability. With AI, you also need clear service level objectives for response times and system behavior under load. One slow model call shouldn’t be able to slow down the entire application.

Ask a vendor, how they prevent performance issues from spreading across the system.

- Data Classification and Audit Requirements:

Domain-specific regulations, such as HIPAA, SOC 2, and ISO 27001, must be mapped to the data flow. The map should define the required depth of the audit trail. It should track the full path from User → Model → Tools → Outputs so that every action taken by an autonomous agent is traceable and explainable.

- Change Rate and Ownership Model:

High-performing AI systems are not static; they suffer from "hidden technical debt," boundary erosion, and data drift over time.

The constraint map must define how often prompts and evaluation sets will be updated and who owns the maintenance of those artifacts post-launch. Without clear ownership, systems fail to generalize beyond their initial training environment.

How Vendors Should Respond

A mature vendor should respond to this map with more than vague assurances. Look for an Architectural Decision Record (ADR) and a tailored reference architecture that explicitly shows how they will meet your constraints.

Red flags include generic agent proposals, "tool-first" thinking that ignores your specific environment, and a lack of detail on failure modes.

2. Regulated and Sensitive Domain Fit

In sensitive industries, such as healthcare, fintech, or manufacturing, vendor quality is defined by risk translation. This is the ability to turn legal and compliance requirements into concrete architecture, workflows, and audit trails.

Prior Domain Experience as a Risk Multiplier

Teams without deep exposure to regulated environments typically underestimate the complexity of data classification and access boundaries. They may assume that "adding logging later" is acceptable, which in a regulated setting can lead to reportable security incidents or regulatory failures.

Domain experience helps an AI vendor anticipate these requirements early. Teams that have solved similar problems before know what to plan for, which reduces the risk of major architectural changes and costly rework later in the project.

What to Verify

Verify a vendor's capabilities across four layers:

- Layer A: Architecture Decisions. Ask how they enforce the principle of least privilege and where exactly sensitive data is stored, transformed, and logged.

- Layer B: Workflow Correctness. How do they prevent a hallucination from turning into a system action, and what is the approval path for edge cases?

- Layer C: Compliance-Aware Delivery. Can they produce a threat model or a risk register?

- Layer D: Production Operations. How do they support an audit with logs and a clear change history of prompts and configurations?

Signals of Domain Experience

Use "Tell me about a time..." questions to force a signal:

- "Describe a situation where compliance requirements changed mid-project. What did you redesign?"

- "What is the most common audit mistake teams make in this industry?"

- "Show us an example of an approval workflow you implemented and explain the logic."

Red flags include a lack of a clear stance on data minimization, treating model outputs as authoritative rather than advisory, and having no documented process for incident handling in regulated settings.

3. The Only Pilot That Matters: Production-Like Spec

In SaaS environments, pilots often fail to scale beyond the experimentation phase, often because they are optimized to impress stakeholders rather than to meet real engineering standards.

To evaluate a vendor effectively, leaders must move beyond controlled demo environments and require a pilot that reflects the real conditions of production — including imperfect data, edge cases, performance pressure, and operational constraints.

Demo vs Production Reality

Pilot requirements: Minimum viable realism

To understand whether a vendor is truly ready for production, the pilot should be designed to expose real risks, not hide them. It needs to show how the system performs under realistic conditions and constraints.

At a minimum, a meaningful pilot should meet at least three non-negotiable requirements:

- One Real Integration (No Mocked Data):

The system must integrate at least one real internal or external API to expose potential failures. If a secondary API timeout causes the reasoning loop to break, the architecture is not production-ready.

- Forced Failure-Mode Testing:

Mature engineering requires knowing how a system fails. Vendors should be forced to demonstrate how the architecture handles a manually triggered timeout, a bad input, or a model hallucination.

The goal is to verify that the system has failure containment protocols and a clear path for safe deactivation.

- One Audit/Compliance Requirement:

The pilot should clearly show how each request moves through the system: from the user, to the model, to any connected tools, and finally to the output.

This level of traceability is important for compliance and accountability. Regulations such as the EU AI Act require organizations to keep records of how systems are used and what data sources are accessed.

A vendor should be able to demonstrate that this tracking is built into the solution from the start.

Acceptance criteria written like an engineering contract

Acceptance criteria should be written as Service Level Objectives (SLOs) that define the boundaries of the system’s performance and reliability.

- Responsiveness under stress

The system should stay responsive even if model calls slow down. In multi-tenant SaaS environments, one complex request shouldn’t be able to slow down the experience for everyone else. A strong vendor will show how they isolate performance issues and prevent them from spreading across the platform.

- Auditable traceability

Every action taken by an autonomous agent should be traceable. That means recording when a task started and ended, what tools or data sources were used, and who reviewed or approved the results when required. This is especially important for high-risk use cases where accountability is mandatory.

- Economic predictability

Cost control must be built into the system. Define a clear upper boundary for cost per successfully completed task, even during peak usage. Vendors should demonstrate how they prevent runaway behavior — such as repeated loops or unnecessary API calls — that can quickly drive up expenses.

By establishing these production-like specs, production discipline becomes visible, creating a foundation for a system that can ship safely and scale predictably.

4. Proof of Production Readiness

In enterprise SaaS systems, case studies must serve as evidence of delivery discipline and the ability to manage system-level constraints, rather than just marketing validation. Senior technology leaders should evaluate results based on how a vendor handles the messy transition from a controlled pilot to a high-stakes production environment.

Case Study Example: Regulated Workflow Accuracy Under Constraints (HealthTech)

The RadFlow AI implementation for a diagnostic imaging network demonstrates the rigor required to deploy AI in a domain where error costs are extreme and regulatory compliance is non-negotiable.

- What this project proved:

Success was defined by the vendor's ability to move beyond a "black-box algorithm" and engineer a HIPAA-compliant, cloud-native workspace integrated directly into existing clinical workflows without disruption.

It proved that engineering discipline, specifically a 90% reduction in false positives through a dedicated post-processing classifier, can recover clinician trust that was eroded by previous failed AI pilots.

- Context & Constraints:

The client faced scan volumes increasing by 22% annually with a flat radiologist headcount, leading to turnaround times exceeding SLAs by 15%.

Technical constraints included high "data gravity" with DICOM datasets exceeding several hundred megabytes and the need for sub-second image rendering over low-bandwidth rural satellite connections.

- Measurable Outcomes:

The system reduced average CT reading time by 38% (from 15.2 to 9.4 minutes) across over 4,800 cases. It maintained 96% detection sensitivity for sub-4mm lesions while lowering false positives from 4.1 to 0.4 per scan.

This resulted in an estimated $2.1M annual operational impact and a radiologist trust score increase from 27% to 89%.

- Regulatory Rigor:

The architecture aligned with FDA Software as a Medical Device (SaMD) Class II pathways, incorporating IEC 62304 traceability in CI/CD and immutable audit logs of every AI-assisted decision.

How to read any vendor’s case study

When evaluating vendor-demonstrated results, CTOs should determine who owned the architecture decisions, risk controls, and long-term maintenance responsibilities and use the following checklist to assess implementation depth and operational maturity:

- What constraints existed? Did the vendor address specific technical, regulatory, or multi-tenant boundaries, or was it an isolated test environment implementation?

- What failed initially? Mature vendors can describe the failure modes, such as latency cascades or boundary erosion, that occurred during the pilot and how the architecture was redesigned to contain them.

- How was risk contained? Look for evidence of "failure containment" protocols, such as human-in-the-loop checkpoints or automated deactivation when model performance drifts.

- What metrics prove adoption? Success isn’t just about model accuracy. What matters more is whether people actually rely on the system in their daily work. That could mean tracking user trust levels or how often outputs are accepted without rework,

- Who owns the artifacts? Verify if the client retained full intellectual property rights over trained model weights, adjudicated datasets, and the codebase.

5. Vendor Interview Kit: Forcing Signal Fast

During interviews, focus on the operational realities that vendors often gloss over in sales pitches:

- Architecture: "What are the top five failure modes you expect in this deployment, and how will you contain them?"

- Multi-tenancy: "Show us exactly how you prevent tenant data leakage and enforce least privilege."

- Testing: "How do you run AI regression tests? What specifically breaks when you change an underlying prompt?"

- Exit Strategy: "If we replace you in six months, what artifacts, such as prompts, evaluation sets, and infrastructure-as-code, will allow another team to continue the work?"

Vendor Evaluation Framework: The 5-Dimension Scorecard

To ensure an objective comparison, senior technology leaders should evaluate vendors using a structured scorecard. This framework compares potential partners across the five core dimensions that determine success in production-grade SaaS systems, helping teams assess real capabilities rather than presentation quality.

Conclusion

In the era of autonomous agents and generative AI, the difference between a vendor and a partner matters. A vendor provides a license and a set of tools, while a real partner understands your production constraints and works with you to solve them.

Scaling AI is not just a technical step — it requires stronger engineering practices, team upskilling, and clear risk management processes. Organizations that succeed embed AI into their release processes, cost controls, and risk governance structures.

That means moving away from adopting technology for its own sake and focusing instead on deployments tied to measurable business outcomes. When technical depth, operational discipline, and structured governance are in place, AI becomes a long-term strategic asset rather than a source of instability.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript