The first wave of generative AI adoption followed a predictable pattern: organizations integrated a single large language model (LLM), which was tasked with broad requirements to handle everything.

For controlled pilots, FAQ bots, or internal productivity experiments, this approach was sufficient. But as enterprises move beyond experimentation, this centralized intelligence model often collapses under the weight of real-world enterprise constraints, such as domain expertise spanning multiple business lines and strict data-sovereignty policies.

A recent MIT Report indicates that 95% of AI initiatives fail to reach production, not because models lack capability, but because systems lack architectural robustness, governance structure, and integration depth. At the same time, global enterprise AI spending is projected to exceed $3 trillion by 2027, with AI becoming one of the fastest-growing categories in enterprise IT budgets.

In many production deployments, system design limitations, such as data isolation and orchestration complexity, now constrain performance more than model capability.

When a single generalized LLM is tasked with handling cross-domain enterprise workflows, it results in a domain overload, where finance logic, clinical compliance, and customer support require fundamentally different reasoning boundaries. It can also lead to context degradation, as task complexity increases, response consistency declines.

In small pilots, these constraints are tolerable. However, in production systems serving thousands or millions of users, they become systemic risk.

This is particularly relevant for regulated domains, such as FinTech, HealthTech, LegalTech, or SaaS platforms operating at scale, where architectural fragility translates directly into revenue, compliance, and operational exposure.

What Is a Multi-Agent System?

A multi-agent system (MAS) is made up of several independent AI agents that operate in the same environment and work together, or sometimes compete, to address complex and large-scale challenges.

These agents are autonomous computational entities situated within a shared environment where they collaborate, coordinate, or negotiate. Unlike single-agent systems, where one model performs tasks across various domains, a MAS leverages distributed control and decision-making among specialized entities.

From Single-Agent AI to Multi-Agent AI

The shift from single-agent to multi-agent architectures happens because centralized intelligence has clear limitations. Single-agent systems often suffer from over-generalization, where a single model attempting to serve multiple business lines leads to brittle prompts and degraded performance.

Furthermore, a monolithic agent can create performance bottlenecks. Multi-step reasoning increases latency, which undermines real-time reliability. At the same time, enterprises require permission isolation, audit trails, deterministic fallbacks, and cost controls, adding governance complexity.

These issues increase security exposure by requiring centralized access to diverse, sensitive datasets.

In contrast, multi-agent systems distribute workloads across specialized agents, each hyper-optimized for a specific domain or function. This role specialization ensures deeper accuracy and allows for the integration of unique toolsets, knowledge graphs, and memory modules tailored to the agent's specific task.

By breaking down complex problems into manageable sub-tasks, MAS can outperform single agents through parallel reasoning and higher-order planning.

Multi-Agent Systems Examples

Practical applications of multi-agent systems demonstrate their utility in handling high-stakes, real-world scenarios across industries:

- Finance Risk Analysis Agents: In financial operations, agents can collaborate across risk assessment, fraud detection, and portfolio optimization, dynamically sharing insights to enhance decision quality and regulatory compliance.

- DevOps Incident Response Agents: Multi-agent orchestration has been shown to transform incident response, achieving a 100% actionable recommendation rate in trials compared to 1.7% for single-agent approaches.

- Automated QA and Bug-Management Agents: In software development, a team of agents can respond to bug requests, analyze past issues for similarity matching, and generate code suggestions or test cases for human verification.

- Healthcare Decision Support: Frameworks like the "AI Tumor Board" bring together agents dedicated to medical imaging analysis, patient history retrieval, and treatment planning to support multidisciplinary medical reasoning.

From AI Orchestration to Multi-Agent Orchestration

As AI systems become more widespread, simple automation is no longer enough. Instead of relying on a single system to handle everything, organizations now need coordinated control over multiple components. This includes not only AI models, but also data pipelines, infrastructure, and internal policies.

What Is AI Orchestration?

AI orchestration is the coordination and management of AI models, systems, and integrations within a greater workflow or application. It encompasses the entire lifecycle of an AI solution, from deployment and resource allocation to ongoing maintenance and failure management.

Unlike workflow automation, which focuses on automating specific, repeatable tasks like data extraction or query routing, orchestration manages the broader dependencies and resource limits of the entire system.

It acts as the "control framework" that ensures all components, including computational resources and data stores, work in sync to meet performance targets and business logic.

What Is Multi-Agent Orchestration?

Multi-agent orchestration refers to the process of enabling multiple autonomous agents to work together toward a common, shared goal. It involves breaking down a complex task into a structured, agentic workflow where agents are assigned specific roles and responsibilities.

This orchestration layer serves as the "brain" of the system, managing dependency graphs to ensure agents are called in the correct sequence and that critical information flows between them.

Multi-agent orchestration must handle state synchronization and conflict resolution to prevent agents from competing for resources or overriding each other's outputs. It also governs the agent lifecycle, monitoring health and implementing fallback mechanisms if an individual agent fails.

By integrating these components, multi-agent orchestration provides the structure, visibility, and control necessary to move from experimental AI to reliable enterprise ecosystems.

Multi-Agent Architecture — The Real Scaling Challenge

This is particularly relevant for regulated environments. The real challenge lies in managing how they interact. As more agents are added, the number of possible interactions increases rapidly, making coordination harder and slowing down learning and decision-making.

To address this challenge, teams are designing new architectures that structure and manage how agents work with each other. These frameworks help control complexity and keep the system stable as it scales.

Core Components of a Multi-Agent Architecture

A robust multi-agent architecture is built on four foundational components that ensure the system remains stable as tasks increase in difficulty:

- Task Decomposition and Role Assignment: The system must break high-level objectives into sub-goals, assigning them to specialized agents such as retrievers, planners, executors, or evaluators. This separation of concerns prevents a single agent from being overwhelmed by broad requirements, which often leads to "endless execution loops" or hallucinations in monolithic models.

- Shared Memory and State Management: Agents require mechanisms to maintain context across turns and multiple executions. This involves both short-term "scratchpad" memory — where agents collaborate in a shared workspace — and long-term persistent storage of agent operational status, configuration, and history to ensure continuity across sessions.

- Tool and API Integration: A standardized interface layer is necessary to connect agents to external tools, databases, and services. Using protocols like the Model Context Protocol (MCP) or the Agent2Agent (A2A) protocol allows for dynamic capability discovery, enabling agents to access external data without requiring custom, hard-coded integrations for every new task.

- Agent Communication Protocols: Standardized languages, such as FIPA-ACL or KQML, govern how agents exchange information, negotiate for tasks, and resolve conflicts. Communication may be direct (message passing) or indirect (modifying a shared environment), but it must be structured to avoid communication overload, where excessive messaging inflates tail latency and saturates control channels.

Centralized vs. Decentralized Coordination

Decision-makers must choose between three primary coordination patterns based on their tolerance for latency and system fragility:

- Centralized (Supervisor) Pattern: A single manager agent coordinates tasks, data flow, and decision-making. While this provides clear control and simplified management, it creates a potential bottleneck and a single point of failure where the entire system collapses if the central unit fails.

- Decentralized Pattern: Agents operate autonomously and share information directly with neighboring agents or via a publish-subscribe message bus. This is more robust and modular, but coordinating behaviors to benefit the entire system becomes significantly more complex.

- Hierarchical Pattern: This tiered structure balances flexibility and oversight by having higher-level agents supervise teams of lower-level worker agents. Responsibilities are divided so that higher levels focus on coordination and planning while lower levels focus on task execution, a pattern that scales effectively for complex enterprise-level automation.

Multi-Agent Frameworks and Orchestration Layers

To manage architectural complexity, developers rely on multi-agent frameworks that provide standardized tools for designing and deploying these systems.

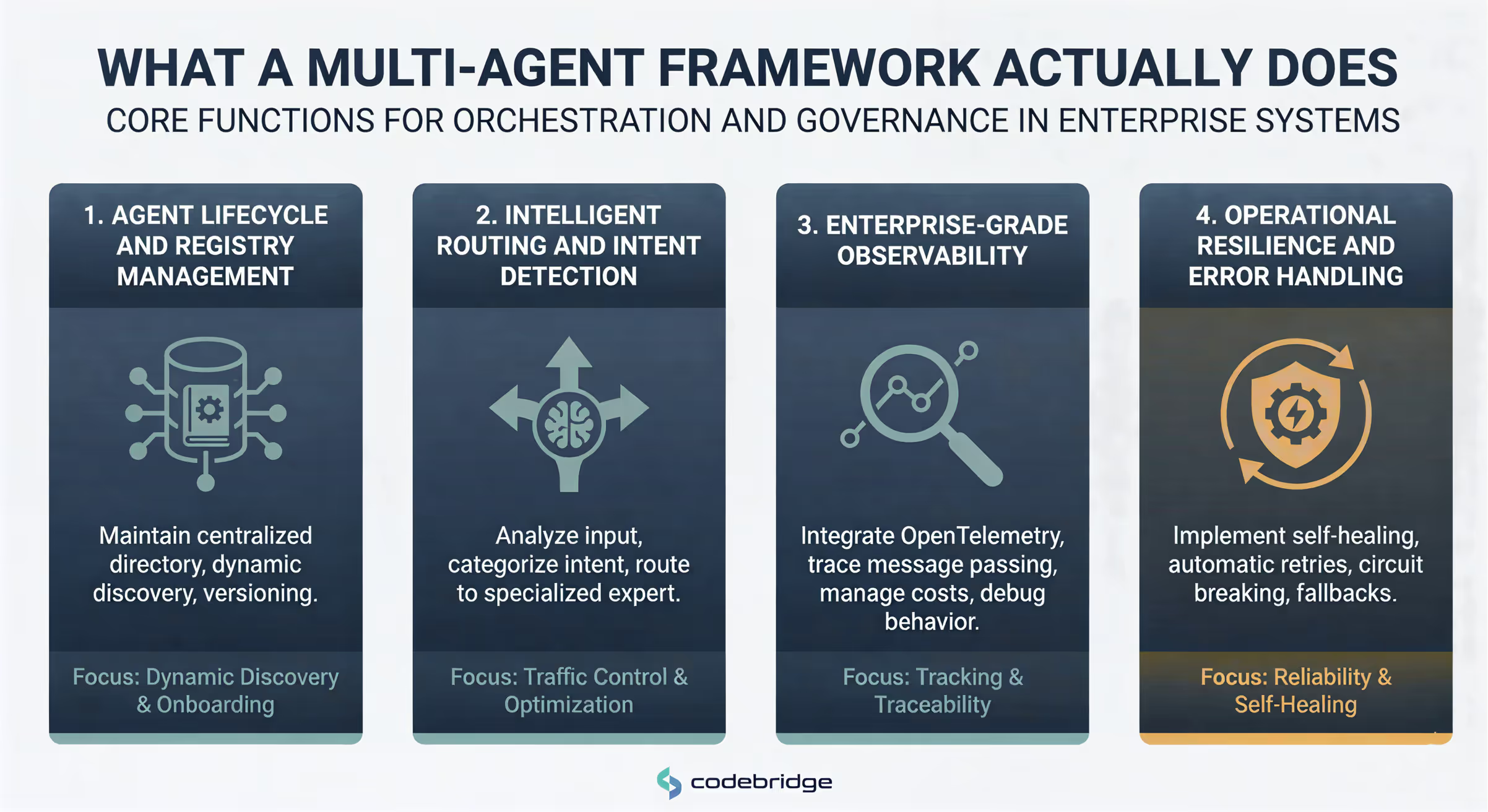

What a Multi-Agent Framework Actually Does

For a CTO, a multi-agent framework is not merely a library of templates; it is the system that manages the distributed lifecycle of autonomous components.

While individual agents provide the reasoning, the framework provides the infrastructure, handling everything from discovery to error recovery, to ensure these agents function as a reliable enterprise ecosystem rather than a collection of isolated scripts.

“A framework typically performs four core functions:

- Agent Lifecycle and Registry Management: Frameworks maintain a centralized "Agent Registry". This directory service stores metadata about each agent’s capabilities, endpoints, and versions. This allows the system to dynamically discover and onboard new agents without hard-coding dependencies into the application.

Example: In Microsoft’s AutoGen v0.4, the framework manages the registration of human-proxy agents and LLM-based assistants, enforcing type checks at build time to ensure they can interact safely across different programming languages.

- Intelligent Routing and Intent Detection: The framework acts as a traffic controller, utilizing a "Classifier" component. It analyzes user input to categorize intent and determine which specialized expert is best suited for the task.

Example: Semantic Kernel serves as an orchestration engine that receives a request, decomposes it into sub-tasks, and routes them to specific agents based on a pre-defined "Intent Plane".

- Enterprise-Grade Observability: Production systems require rigorous tracking of agent interactions to manage costs and debug non-deterministic behavior. Frameworks often integrate industry standards like OpenTelemetry to provide traces of message passing and tool execution.

Example: LangGraph provides a visual roadmap of agent connections, allowing developers to monitor state transitions and identify exactly where an agentic loop might be stalling or hallucinating.

- Operational Resilience and Error Handling: Without a framework, an individual agent failure can cascade into a system-wide outage. Orchestration layers implement "self-healing" properties, such as automatic retries, anomaly-gated circuit breaking, and fallback mechanisms.

Example: In high-stakes environments like financial fraud detection, a framework can detect if a primary analysis agent is failing and automatically reroute the task to a conservative fallback model or a human compliance officer to maintain business continuity.

By handling communication and persistence internally, frameworks like CrewAI or SALLMA let teams focus on agent logic instead of infrastructure.

Multi-Agent Workflows and Agent Collaboration

Designing reliable workflows is the final step in turning a collection of autonomous agents into a functioning enterprise system. These workflows structure agent interactions so outputs remain predictable in real-world production. Technical teams should move beyond simple model chaining and design workflows that explicitly prevent coordination failures.

Designing Reliable Multi-Agent Workflows

In production, agents generally collaborate through one of four established workflow patterns, chosen based on the task's difficulty and the required level of oversight:

- Sequential Workflows (The Assembly Line): Agents execute tasks in a fixed, predetermined order. Each agent completes its specific sub-task before passing the state to the next. This is ideal for structured business processes like document approval pipelines or multi-step regulatory reporting, where the order of operations is non-negotiable.

- Parallel Task Execution (The Force Multiplier): Multiple agents work simultaneously on independent parts of a complex task. This dramatically reduces completion time and token bloat compared to a single agent attempting to process everything sequentially.

Performance Case Study: Anthropic’s research architecture uses a lead agent to plan a strategy while sub-agents gather data in parallel. This multi-agent approach outperformed single-agent Claude Opus benchmarks by 90.2% in internal evaluations.

- Supervisor Agents (The Managerial Pattern): A central "manager" agent decomposes a high-level intent, routes sub-tasks to specialized "worker" agents, and synthesizes the final results.

This pattern is highly scalable for complex enterprise automation, where the manager can decide which specialist to invoke based on real-time data.

- Feedback Loops (Self-Correction): This pattern incorporates internal verification steps where one agent reviews the output of another. By implementing a "reviewer" agent to check code for security flaws or a "fact-checker" to verify research data, MAS can significantly reduce hallucinations and improve overall reliability.

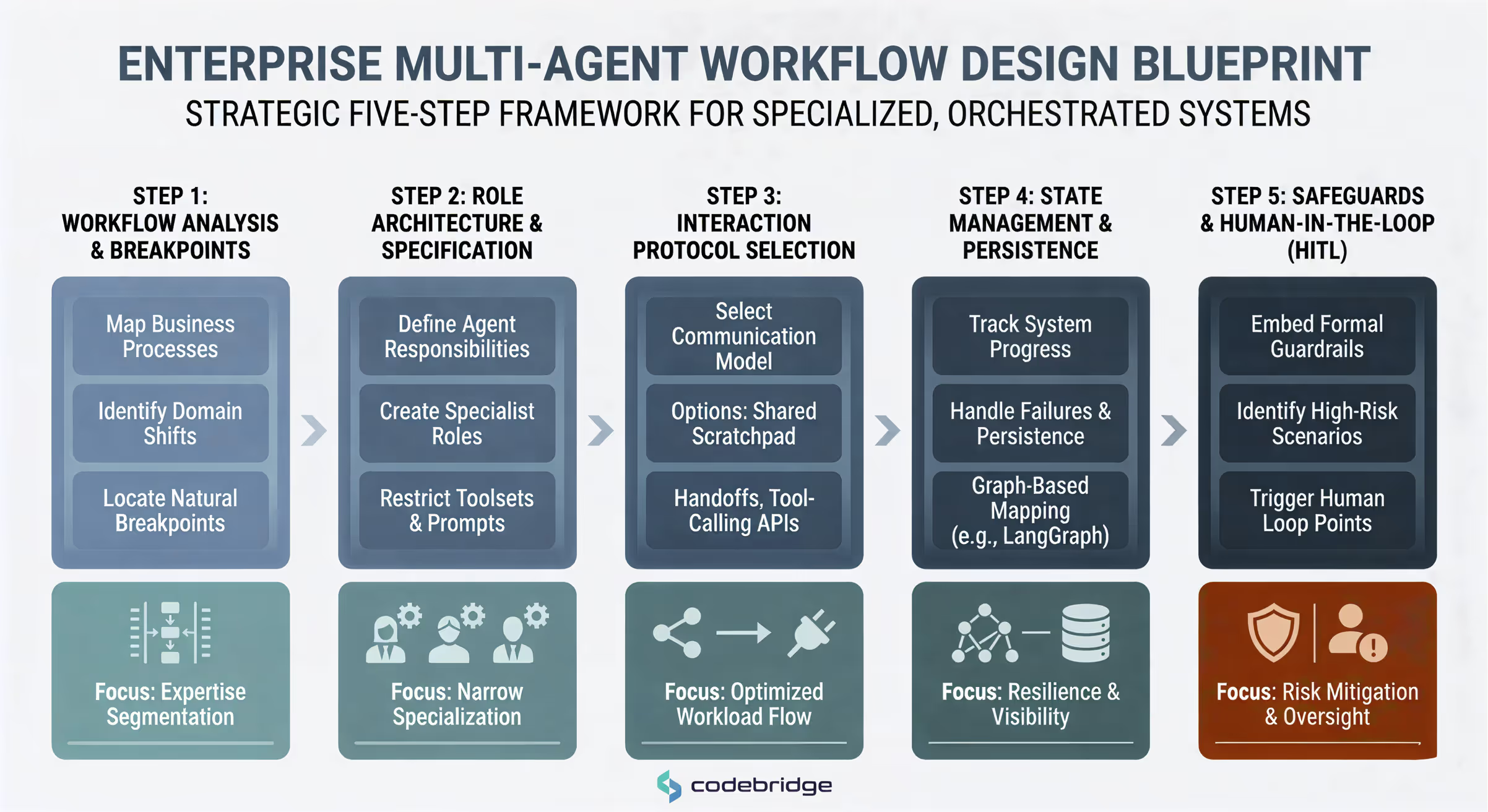

The Engineering Blueprint: Step-by-Step Workflow Design

Building an orchestrated system requires a disciplined, iterative approach. Founders and CTOs should ensure their engineering teams follow this execution-focused roadmap:

Step 1: Workflow Analysis and Natural Breakpoints

Begin by mapping existing business processes to identify where specialized expertise is needed. Identify "natural breakpoints" where a task requires a shift in domain (e.g., from legal compliance to financial calculation).

- Final Output: A comprehensive Dependency Graph showing the sequence and relationship between all sub-tasks.

Step 2: Role Architecture and Agent Specification

Define the specific responsibilities for each agent. Instead of generalist assistants, create specialists with narrow prompts and restricted toolsets.

- Final Output: An Agent Specification Manifest detailing the role, goal, required tools, and prompt constraints for every entity in the network.

Step 3: Interaction Protocol Selection

Select the communication model that best fits the workload. Options include a Shared Scratchpad (where all agents see all history), Handoffs (where agents pass only relevant data to the next destination), or Tool-Calling (where agents treat each other as callable APIs).

- Final Output: A documented Interaction Protocol that minimizes "communication overload" and prevents saturation of control channels.

Step 4: State Management and Persistence Strategy

Determine how the system will track progress and handle failures. Using graph-based representations (like LangGraph) allows for visual mapping of interactions and built-in state persistence.

- Final Output: A Continuity Blueprint defining how the system recovers state after interruptions or crashes.

Step 5: Safeguards and Human-in-the-Loop (HITL) Triggers

Embed formal guardrails and escalation points. Identify "high-risk" or "ambiguous" scenarios where the system must halt and loop in a human expert.

- Final Output: An Escalation Policy that ensures zero-trust security and provides an empathy backstop for sensitive tasks.

Conclusion

AI is shifting from a race for bigger models to a race for better coordination. And competitive advantage now comes from how effectively organizations orchestrate multiple agents into a reliable, well-governed system.

Organizations deploying multi-agent systems at scale treat the architecture as core infrastructure. They design for modularity, clear role separation, observability, and built-in controls. This allows them to replace models without rebuilding the stack, enforce least-privilege access in regulated environments, and contain risk through structured oversight and fail-safes.

This approach reduces iteration time, improves auditability, and lowers operational risk by isolating responsibilities and enforcing structured oversight. Multi-agent systems are not just a technical upgrade — they enable scalable, secure, and production-scale AI operations.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript