While building a Large Language Model (LLM) prototype is relatively straightforward, scaling that system in production is where most initiatives collapse under the weight of latency spikes and unpredictable costs.

Gartner reports that by the end of last year, at least 50% of generative AI projects were abandoned after proof of concept, primarily due to poor grounding, inadequate data architectures, and a lack of structured workflows for prompt-driven systems. Scaling LLMs requires treating them as infrastructure components, not just API endpoints. This shift is what defines LLMOps.

This article clarifies the distinction between MLOps and LLMOps as operational disciplines rather than tool categories. We will define both in practical terms, compare their architectural assumptions, and examine how deterministic training-centric systems differ from inference-centric probabilistic ones.

From there, we analyze common scaling failures observed in production LLM environments and outline the full lifecycle of large language models. The goal of this piece is to provide a decision framework that helps technical leaders understand when LLMOps becomes necessary and what architectural maturity looks like in practice.

What Is MLOps?

Machine Learning Operations (MLOps) is an engineering discipline that connects machine learning development with operations and data engineering so that models run as stable, scalable production systems. It addresses the common problem where models perform well in isolated experiments but break down under real production traffic or changing data conditions.

In practice, MLOps replaces manual, script-based handoffs with automated CI/CD and Continuous Training (CT) pipelines. It covers the full lifecycle: data collection, feature engineering, model training, optimization, deployment, and ongoing monitoring.

Unlike traditional software, machine learning systems degrade over time when real-world data changes – a phenomenon known as concept drift. MLOps reduces this risk through monitoring, feedback loops, and retraining mechanisms that help maintain performance.

For the business, MLOps is what turns AI from experimentation into operational capability. While up to 88% of corporate ML projects never move beyond the lab, organizations that successfully operationalize models report profit margin increases of 3–15%. MLOps gives founders and CTOs governance, visibility, and cost control, allowing them to manage large model portfolios without accumulating hidden technical debt.

In deployment, it ensures that predictive models remain reliable business assets rather than short-lived experiments.

What Is LLMOps?

Large Language Model Operations (LLMOps) is the structured practice of managing the full lifecycle of LLM systems, from development and deployment to monitoring and ongoing improvement by adapting traditional MLOps principles to the unique demands of probabilistic AI.

LLMOps manages systems that respond to dynamic user inputs and unstructured data. It focuses heavily on inference-time behavior. In practice, this means introducing structured workflows for prompt versioning, retrieval orchestration (RAG), and continuous evaluation of model outputs. The goal is to turn early AI experiments into stable, production-ready systems.

For founders and CTOs, LLMOps is what allows a company to move beyond isolated pilots and build scalable, AI-native products. Generative systems operate under an inference-first cost model, where token usage and context size directly affect spending. Without structured oversight, costs can escalate quickly. LLMOps introduces visibility and control over these variables, helping teams manage performance and budget in parallel.

Beyond capital preservation, it acts as a critical governance layer, ensuring compliance with regulations like GDPR or HIPAA by providing the audit trails and architectural guardrails, such as session tainting, necessary to mitigate hallucinations and security threats.

Operationally, LLMOps becomes the architectural layer that makes generative systems reliable and trusted. By aligning prompt management, evaluation processes, monitoring, and cost controls, organizations can iterate quickly without sacrificing reliability. The result is not just faster experimentation, but infrastructure that can support mission-critical use cases with confidence.

MLOps vs. LLMOps: Key Differences

While both share the goal of delivering reliable AI at scale, their operational optimizations differ across every core dimension. For an executive audience, the distinction lies in how these systems handle uncertainty and capital.

1. From Calculator to Creative Partner

MLOps is built for repeatability. It supports systems that are expected to produce consistent, measurable outputs from structured data, such as fraud scores, churn predictions, or demand forecasts. Once trained and deployed, these models are optimized to return stable results for the same type of input. The operational goal is accuracy and controlled retraining when performance declines.

By contrast, LLMOps manages systems that generate new outputs each time they are used. Large Language Models do not simply classify or score data; they produce text, summaries, recommendations, or code based on dynamic user prompts and changing context. Their behavior is probabilistic, meaning the same input can lead to different outputs. As a result, the operational focus shifts from pure accuracy to managing variability, guiding behavior, and continuously evaluating output quality.

In simple terms, MLOps runs systems designed to calculate, while LLMOps runs systems designed to generate. That difference changes how monitoring, evaluation, cost control, and governance must be designed at scale.

2. The Inversion of Cost

Traditional ML systems are expensive to build and train. Most of the cost sits upfront – collecting data, training models from scratch, and tuning them before deployment. Once the model is in production, inference costs are usually predictable and relatively low.

However, with LLM-based systems, the cost structure changes. Instead of training large models internally, companies typically rely on existing foundation models. This shifts spending from training to paying every time the model processes input and generates output. In this case, operational costs are directly affected by token usage and context size.

3. Quality Control: From Intuition to Measurement

In MLOps, performance is measured with clear metrics such as accuracy, precision, or recall. The output is structured, and the evaluation is objective. You can easily determine whether a prediction is correct or incorrect.

In LLM operations, quality is more contextual. Outputs are written in natural language, and their value depends on clarity, relevance, tone, and correctness. The same response can be technically accurate but still inappropriate or misleading due to system hallucinations. This makes informal review or occasional manual testing insufficient.

As a result, instead of relying on intuition, teams implement automated evaluation frameworks that continuously assess outputs. Approaches such as using one model to evaluate another (“LLM-as-a-judge”) help detect hallucinations, unsafe responses, and brand risks in real time.

4. Operational Agility vs. Fixed Pipelines

ML operations are built around structured, predictable pipelines. Data flows through defined stages, including preprocessing, training, validation, and deployment. The system produces consistent outputs for known tasks. For this reason, these workflows are stable and rarely change in real time.

LLMOps operates differently. Each request may combine a user prompt, retrieved internal knowledge through RAG, system instructions, and contextual data. All these factors are assembled dynamically within milliseconds, and the output is generated on demand, not pulled from a fixed pipeline.

This requires more flexible infrastructure. Systems must handle fluctuating request volumes, external API rate limits, and latency variability without degrading the user experience. In practice, LLMOps environments must absorb spikes, manage timeouts, and maintain responsiveness even when the underlying model behavior is less predictable than traditional ML systems.

Understanding the difference between LLMOps vs MLOps is critical because they address fundamentally different risks. MLOps ensures reliability and stability for predictive systems, while LLMOps manages cost volatility, output variability, and governance in generative systems.

Misclassifying one as the other increases the likelihood that AI initiatives remain expensive experiments instead of becoming dependable, scalable infrastructure.

Common Architecture Mistakes When Scaling LLM Systems

The distinction between MLOps and LLMOps becomes most visible in the real-world production. When LLM systems are designed with ML assumptions, architectural weaknesses emerge quickly at scale.

Scaling an LLM application from a proof-of-concept (PoC) to a production system is rarely a matter of better prompts. Instead, it is a challenge of managing systemic unpredictability, where model outputs, latency, and costs all fluctuate. Most failures at scale stem from treating these engines as if they were deterministic software components.

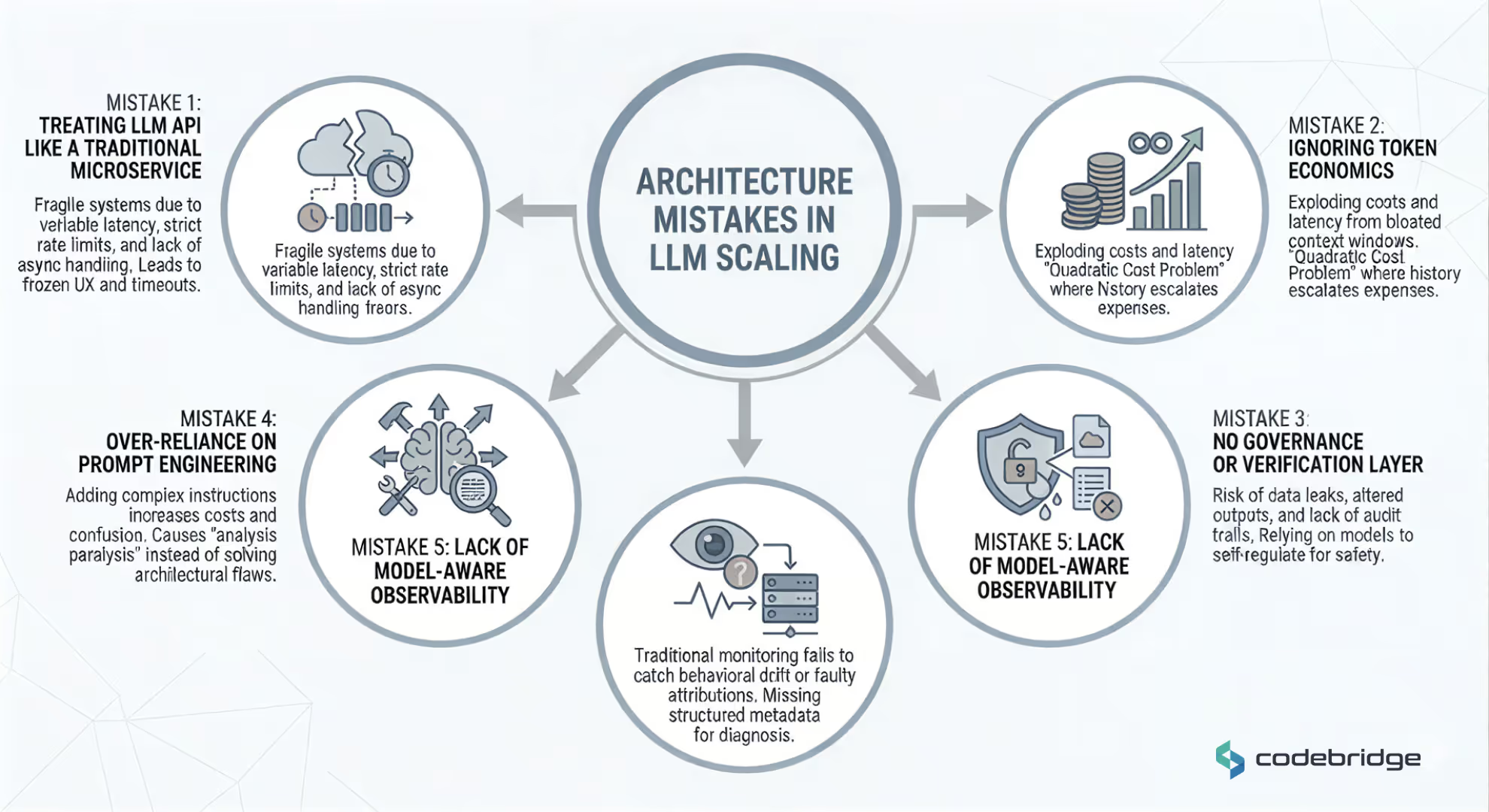

Below are the five critical architecture mistakes that frequently collapse LLM initiatives in production, detailed with execution realities and real-world examples.

Mistake 1: Treating the LLM API Like a Traditional Microservice

Many engineering teams design their AI systems assuming response times similar to standard REST services. However, an LLM is an external probabilistic system with variable response times, strict rate limits, and cost-per-call implications. When the architecture blocks on LLM responses without asynchronous handling or queuing, the entire system becomes fragile.

- The Failure Mode: Under load, a single slow response can block the main execution thread, causing the entire user experience (UX) to freeze or triggering widespread timeouts across the microservices stack.

- Real Example: Cox Automotive, which operates autonomous dealership conversation systems, encountered runaway interaction loops where conversations exceeded reasonable bounds. Without circuit breakers, hard limits on conversation turns (approximately 20), and P95 cost ceilings, sessions could continue indefinitely, locking resources and generating uncontrolled expenses. Their solution was not better prompting, but classic distributed system safeguards such as bounded execution and cost thresholds.

Mistake 2: Ignoring Token Economics and the "Quadratic Cost Problem"

At a prototype scale, token usage feels negligible. However, in production token consumption is the largest cost center. A common mistake is pushing entire chat histories into every request to maintain "memory," which leads to what some call the quadratic cost problem, where every new turn in a conversation makes the next turn significantly more expensive and higher latency.

- The Failure Mode: Mismanaging the context window results in exploding token bills and performance degradation as the model struggles to attend to relevant data in a bloated prompt.

- Real Example: A multi-agent system for market data research at GetOnStack saw its weekly costs escalate from $127 to $47,000 over just four weeks. This was caused by an undetected infinite loop between two agents. Agent A asked Agent B for help, and Agent B requested clarification, creating a recursive cycle that ran for 11 days because the architecture lacked cost-monitoring guardrails.

Mistake 3: No Governance or Verification Layer

Enterprises often deploy LLMs without a centralized governance layer that handles audit logs, prompt access controls, or output verification. Without this layer, the organization cannot prove how a specific output was generated or ensure that sensitive data isn't leaking into the model's training or prompt streams.

- The Failure Mode: Relying on the model to self-regulate for legal or safety constraints. This is a fragile strategy because LLMs can easily bypass their own system instructions through adversarial prompts.

- Real Example: Toyota’s vehicle information platform faced the challenge of ensuring legal disclaimers were never altered by the model's generative process. Recognizing that they couldn't trust the model to accurately repeat legally binding text, they implemented "stream splitting". Their architecture forces the model to output ID codes for legal disclaimers rather than the text itself; the application layer then injects the immutable, vetted disclaimer based on that code, removing the risk of hallucination from the compliance process.

Mistake 4: Over-Reliance on Prompt Engineering to Fix Systemic Flaws

Teams often attempt to fix reasoning issues or hallucinations by adding paragraphs of complex instructions to the prompt. While this may slightly improve quality in a few cases, it increases token costs and adds complexity without solving the underlying architectural flaw.

- The Failure Mode: The "analysis paralysis" occurs when agents are given too many tools or instructions in a single prompt. The model spends more time deciding which instruction to follow than executing the task.

- Real Example: Cubic, while building an AI code review agent, initially gave the agent more tools and broader instructions to increase its "intelligence". Instead of better performance, they saw significant degradation as the agent became confused, generating excessive false positives that eroded developer trust. They had to streamline the architecture by removing tools and forcing the agent to output explicit reasoning logs before acting, proving that fewer capabilities often yield more reliable results.

Mistake 5: Lack of Model-Aware Observability

Traditional monitoring tracks infrastructure metrics like CPU usage and memory, but LLM systems require behavioral observability. Many teams fail because they do not log structured metadata, such as prompt versions, completion sizes, latency, and reasoning traces, making it impossible to diagnose why a model's behavior has drifted.

- The Failure Mode: "Surface Attribution Errors" remain invisible without deep tracing. This occurs when a model incorrectly blames a technology or fact simply because it was mentioned in the retrieved context, not because it was the actual root cause.

- Real Example: Zalando’s postmortem analysis pipeline encountered this failure mode when an LLM would blame a specific service (like S3) for an outage merely because it appeared in the text of an incident report. Without a model-aware monitoring stack that could track causality, they couldn't identify that the model was performing "lazy attributions". They had to re-engineer the pipeline into multiple stages where specific, smaller models were solely responsible for classifying causality.

Scaling an LLM application is less about smarter prompts and more about throughput, rate limits, and concurrency management. Most failures result from applying traditional software assumptions to AI logic.

When Do Companies Actually Need LLMOps?

Investing in LLMOps marks the change from experimentation to production reliance. While the informal manual testing during early development suffices for a prototype, it inevitably collapses under real-world traffic, cost pressure, and unpredictable model behavior.

For leadership, the shift to LLMOps is driven by three specific organizational realities: the "LinkedIn Rule" of quality, the transition across maturity phases, and the emergence of systemic risks that traditional software engineering cannot mitigate.

The 95% Wall: The "LinkedIn Observation."

A consistent pattern in production deployments is that reaching 80% output quality happens rapidly, but pushing past 95% consumes the vast majority of development time. Organizations require LLMOps when they hit this wall.

In this final stretch, manual spot-checking of responses is no longer viable. Success here requires transitioning to systematic, automated evaluation frameworks, often using "LLM-as-a-judge" patterns, to quantitatively track whether architectural mitigations are actually effective./

Organizational Triggers: From Startup to Enterprise

The need for LLMOps typically manifests through distinct growing pains as a company scales its AI initiatives:

- The Observability Phase (Seed to Series A): A company needs LLMOps when its engineering team spends more time debugging failures than building new features. At this stage, without full-stack visibility into reasoning traces and token-level cost attribution, quality drops remain unexplainable and prompt changes are rolled back manually in a cycle of trial and error.

- The Collaboration Phase (Scaleup / Series B+): LLMOps becomes mandatory when multiple teams ship AI features, but prompts live in unversioned documents like Notion or Slack. This fragmentation leads to cross-team prompt conflicts and an inability to compare model performance systematically. A centralized LLMOps platform is required to treat prompts as version-controlled code and prevent quality regressions through automated regression detection.

- The Governance Phase (Enterprise): For organizations in regulated domains (e.g., healthcare, finance), LLMOps is the prerequisite for production. Governance is required when security reviews block deployments because the organization cannot prove how sensitive data is handled or trace an AI decision back to a specific prompt version to meet HIPAA or SOC2 standards.

Architectural Triggers: Managing Systemic Unpredictability

Beyond organizational size, the technical architecture itself may demand LLMOps through several specific failure modes:

- Exploding Token Economics: Organizations need LLMOps when token usage becomes a major cost center rather than a negligible line item. Without a sophisticated assembling context through RAG or memory summarization, unoptimized context windows lead to exponential budget leaks that burn infrastructure funds at an unsustainable rate.

- Agentic Complexity: If a system evolves from "drafting assistance" to autonomous, multi-step workflows, it requires the "durable execution" frameworks and circuit breakers found in LLMOps.

Without these, agents can enter recursive loops, such as Agent A and Agent B requesting clarification from each other indefinitely, resulting in undetected cost spikes like the $47,000 incident seen at GetOnStack.

LLMOps becomes necessary when AI-generated errors create material operational or compliance risk. The experimentation phase has ended when cost control and architectural ownership become the primary drivers of the product roadmap.

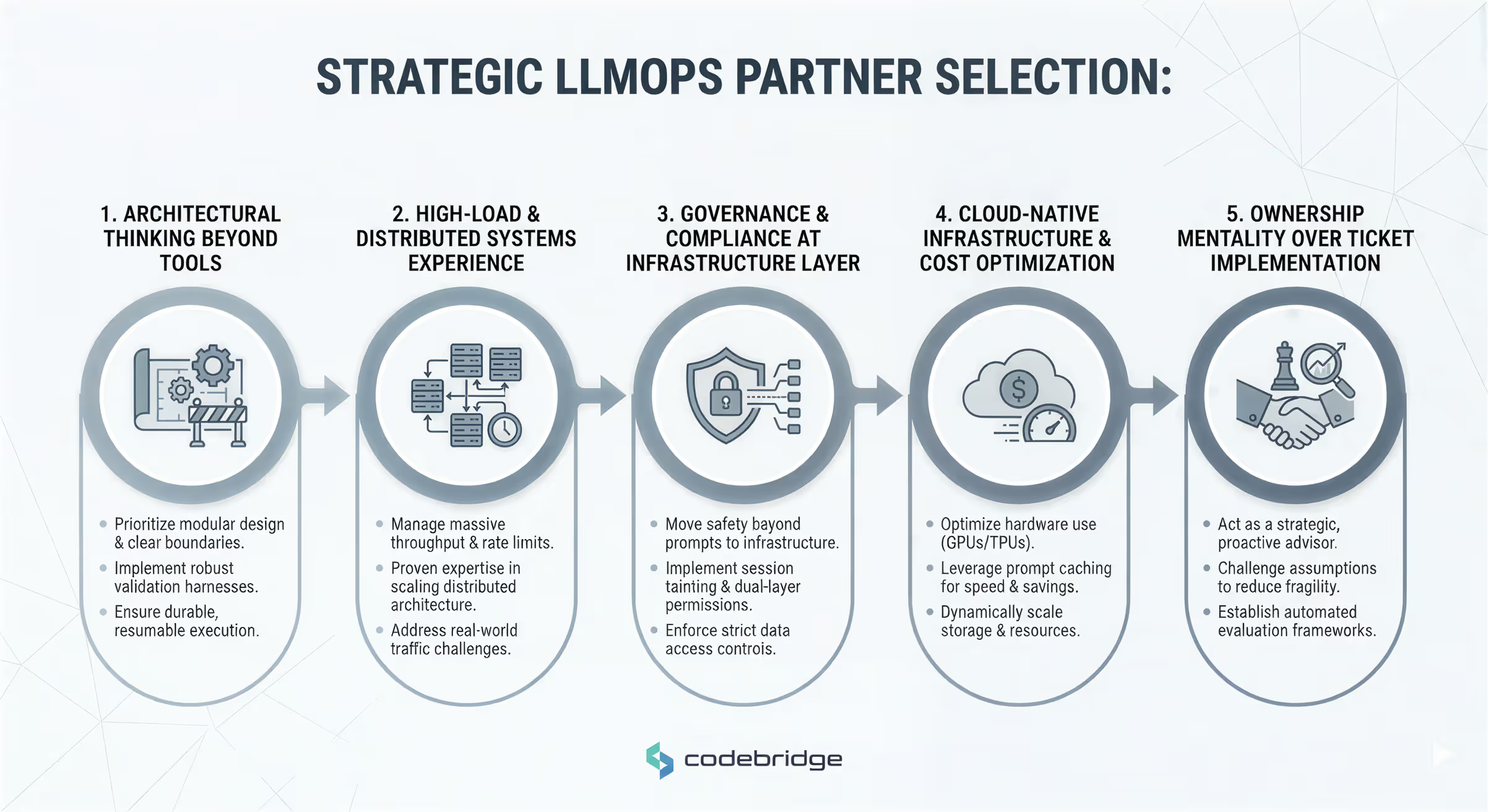

What to Look for in an LLMOps Partner

Selecting a development partner for LLMOps requires looking beyond AI enthusiasm to the core systems engineering discipline. Because raw model capability is increasingly commoditized, the real value lies in the infrastructure that makes those models reliable and cost-effective.

Founders and CTOs should evaluate partners based on their ability to contain model unpredictability with solid engineering controls.

1. Architectural Thinking Beyond Tools

A mature partner does not view LLMs as magic endpoints but as chaotic components that must be contained. They should prioritize designing clear system boundaries, separating the untrusted, non-deterministic AI layer from the deterministic application logic.

A reliable partner will focus on:

- Modularity: Designing a stack where model providers can be swapped without rewriting the entire backend.

- Harness Engineering: Building the "harness" around the model, including validation layers and circuit breakers, rather than just refining prompts.

- Durable Execution: Implementing frameworks that allow long-running agentic tasks to maintain state and resume after network failures rather than restarting from scratch.

2. Experience with High-Load and Distributed Systems

The hardest part of scaling is not generating text, but managing throughput and rate limits under real-world traffic. A partner must demonstrate a deep understanding of distributed systems, as the skills required for production LLMOps are often closer to networking and platform engineering than AI research.

3. Governance and Compliance at the Infrastructure Layer

In regulated domains (healthcare, finance, legal), "safety by prompt" is insufficient. Large Language Models can often bypass their own system instructions through adversarial attacks.

A sophisticated partner moves safety out of the prompt and into the infrastructure. Look for expertise in:

- Session Tainting: Architectural patterns where a session is "tainted" once it touches untrusted data, automatically blocking it from secure sinks or external communication.

- Dual-Layer Permissions: Ensuring that an agent cannot access any data that the human user themselves is not authorized to see.

- Stream Splitting: Forcing the model to output immutable ID codes for regulated text (like legal disclaimers) rather than generating the text itself, which eliminates the risk of hallucinated compliance data.

Compliance implication: Prompt-level safety is insufficient

Regulated environments require infrastructure-layer governance such as session tainting and stream splitting.

4. Cloud-Native Infrastructure and Cost Optimization

Efficient LLM deployment is a hardware optimization challenge. A partner must understand the trade-offs between GPUs and TPUs and when to use smaller, specialized models (e.g., 8B parameters) that match frontier model quality for specific tasks at 50x lower latency.

Key performance levers they should manage include:

- Prompt Caching: Implementing caching for static context (like large medical records or system prompts), which can cut token costs by up to 86% and improve speed by 3x.

- Speculative Execution: Techniques to improve throughput and decrease latency in real-time user-facing applications.

- Storage Management: Dynamically scaling storage volumes based on actual usage to prevent the 4x overprovisioning costs common in ML operations.

5. Ownership Mentality over Ticket Implementation

Finally, a partner should act as a strategic advisor who challenges architectural assumptions rather than a vendor that merely implements tickets. Over-engineering increases system fragility, while simplified architectures are easier to monitor and control.

A partner with an "ownership mentality" treats your infrastructure budget as their own, identifies the "95% wall" where manual spot-checks fail, and implements the systematic, automated evaluation frameworks required to bridge the gap between a prototype and a trusted enterprise product.

The Future of LLMs and LLMOps

The field is moving toward multi-model systems where the "frontier model" is just one component.

The industry is moving into a phase of disciplined AI systems engineering. For founders and CTOs, the future of LLMs is no longer defined by who has the largest context window or the highest benchmark score, but by who builds the most resilient, cost-effective infrastructure around those models.

The following three trends represent the next stage of operational maturity for LLMOps.

1. From Prompt Hacks to Context Engineering

As the novelty of prompt engineering fades, "Context Engineering" is emerging as the primary performance lever. Stuffing a million tokens into a context window leads to "context rot" and "analysis paralysis," where model performance degrades even if the tokens fit.

Future systems will rely on "Just-in-Time" context injection, dynamically assembling only the relevant tool definitions and data for a specific turn, then purging them immediately after use. Techniques where agents only see the specific API fields required for a task will become standard to reduce choice entropy and improve reliability.

2. Autonomous Agentic Workflows and Durable Execution

Agents are evolving from "drafting assistants" to autonomous entities completing multi-step, mission-critical tasks without human intervention. To support this, the industry is adopting "durable execution" frameworks.

Because long-running agentic tasks are prone to network timeouts and service interruptions, future LLMOps stacks will treat agents as stateful microservices. If a research agent fails mid-task, it will resume from the exact step it left off rather than restarting from scratch, ensuring both reliability and token cost preservation.

3. Mandatory Infrastructure-Layer Governance

Future governance will live in the infrastructure layer through architectural guardrails like "session tainting" and "dual-layer permissions".

Architects will design systems to automatically block an agent from secure sinks (like external communication) once it has touched untrusted data, regardless of what its prompt says. Furthermore, "circuit breakers" will be mandatory, hard architectural limits that kill recursive loops or stop agents once they reach a P95 cost or turn threshold.

Conclusion: LLMOps Is Not a Toolset – It’s an Architectural Shift

LLMOps is not merely about choosing among tools; it is a fundamental change in how organizations manage risk at scale. Success in the next generation of machine learning will not be determined by who has the smartest model, but by who has the most disciplined infrastructure.

The experimentation phase of generative AI has ended. The engineering phase, defined by reliability, cost control, and architectural ownership, has begun.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript