The hardest part of deploying AI agents is not generating outputs. It is deciding what happens when an agent acts with the wrong context or the wrong permissions.

Once an agent moves beyond text generation and starts interacting with tools, workflow state, and live business processes, the risk changes. At that point, the key question for technology leaders is whether the surrounding system can keep that usefulness under control.

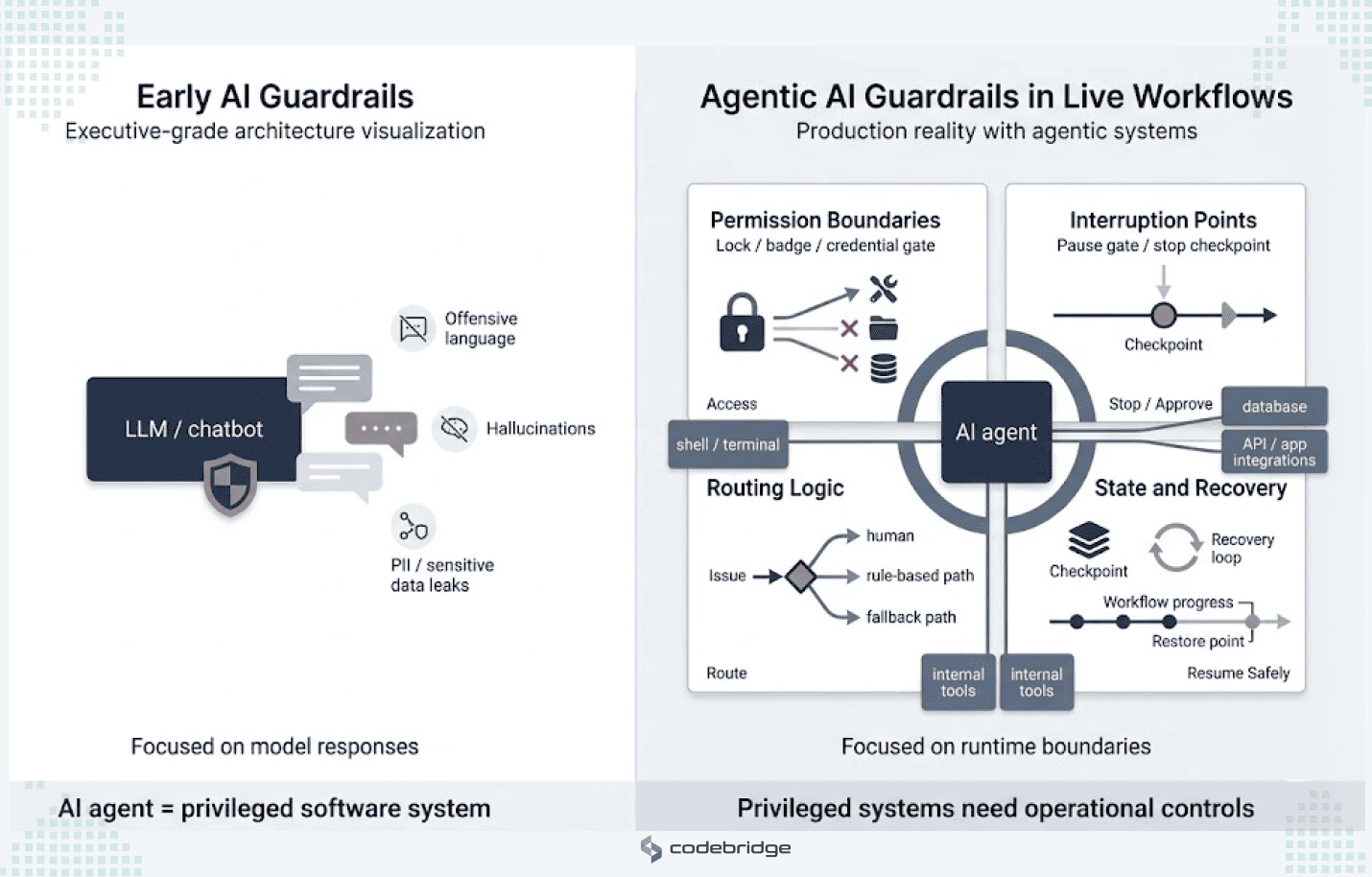

This is where most guardrail discussions fall short. Prompt constraints and output filters still matter, but they do not solve the larger production problem. In live workflows, control means deciding who can authorize an action, what can interrupt execution without breaking state, where exceptions should be routed, and how the workflow resumes after failure.

A production-ready agent is defined by how much control the business retains when the workflow becomes risky, ambiguous, or starts to go wrong.

What AI Agent Guardrails Mean Once Agents Touch Live Workflows

In early AI implementations, guardrails usually referred to model-safety measures: mechanisms intended to prevent offensive language, hallucinations, or the disclosure of PII. Those measures are still necessary, but they are not enough for agentic systems that can invoke shells, modify databases, or interact with third-party APIs.

In production systems, guardrails need to be understood as a runtime architecture that defines the operational boundaries of an autonomous system. They are the mechanisms that determine:

- Permission boundaries: which tools, data, and credentials the agent can access.

- Interruption points: the moments when execution must stop for approval, validation, or policy checks.

- Routing logic: whether an issue should go to a human, a deterministic rule, or a narrower fallback path.

- State and recovery: how the system tracks progress, avoids duplicate actions, and resumes safely after failure.

That is the real shift. An AI agent becomes a privileged software system, and privileged software systems require real operational controls around them.

Why Agent Failures Are Not Just Model Failures

A bad action inside a live workflow is fundamentally different from a hallucinated answer in a chatbot. Once an agent can query internal systems, trigger tools, or move a process forward, failure becomes operational.

Agents fail in ways that affect the workflow itself, not only the text they produce. They can act on stale context, call the wrong tool, misread a system response, or continue executing after conditions have changed. A retrieval step may surface the right document, yet the agent may still apply the wrong rule. A retry loop may repeat an action that should only happen once.

These are not phrasing errors. They are system behaviors with downstream consequences: incorrect updates, broken process state, duplicate actions, or false confidence in a completed task.

Production control has to sit between reasoning and execution. The risk is not only that the model says something wrong. It is that the workflow proceeds as though that mistake were true.

Kill Switches: How to Interrupt an Agent Without Collapsing the Workflow

In enterprise discussions, a kill switch is often reduced to the idea of a single emergency shutdown button. In a real production environment, that view is too narrow. A full shutdown can interrupt healthy workflows, leave actions half-finished, or create new failures in the surrounding system.

The first design question is what stop actually means inside the workflow.

For some AI systems, stopping should mean disabling write actions, blocking access to a specific tool, freezing automation, or forcing the agent into read-only mode. For others, it may mean pausing a single session for review while the rest of the platform continues running. The objective is to define multiple stop states based on risk rather than relying on one binary control.

Those controls must also sit outside the agent’s own reasoning path. A real kill switch should be enforced through the orchestration layer, access controls, or infrastructure policy, not through prompts or model instructions that the system may work around.

Kill switches are only useful when they are protected and tested. Access should be limited, every activation should be logged, and teams should rehearse shutdown scenarios before they are needed in production.

A kill switch is not a panic button. It is a layered interruption design that stops the right part of the system. For these mechanisms to satisfy governance requirements, every intervention must generate immutable, attributed logs.

Sources: NIST AI Risk Management Framework

Escalation Paths: Where Uncertainty Becomes a Routing Decision

Escalation is a routing system that determines when system autonomy gives way to supervised decision-making. It is not simply a matter of sending outputs to a human for review. It is a logic layer designed to handle high-risk actions, policy conflicts, and abnormal execution states.

A strong escalation architecture is triggered when an agentic system encounters specific thresholds:

- Authority Thresholds: the agent attempts a tool call or write operation beyond its pre-assigned privilege level.

- Contextual Ambiguity: the system encounters novel incidents or conflicting instructions that do not match known failure patterns.

- Missing Data: the agent lacks the specific parameters or environmental context required for safe execution.

- High-Stakes Policy Conflicts: the cost of a mistake, such as in financial trading, healthcare data access, or public-facing communication, outweighs the efficiency benefit of automation.

A useful escalation path requires a named owner, a defined response window, and concise context. Humans should not be given raw logs alone. Instead, agents should provide a summary that includes the timeline, the scope of the issue, and attempted remediations. That allows the expert to focus on judgment, negotiation, and strategy.

One of the main risks in escalation design is review fatigue. When humans are required to approve hundreds of low-risk actions, oversight turns into routine clicking rather than real judgment. Mature systems reduce that risk by routing only the most sensitive exceptions to people while using deterministic fallbacks or alternate business rules for lower-stakes uncertainty.

This layer is also essential for meeting regulatory expectations. Article 14 of the EU AI Act stresses that human oversight must be operational. That requires a demonstrable ability for humans to intervene in real time, supported by immutable, attributed logs that record who reviewed an action, what context they received, and the final resolution path.

Sources: EU AI Act Article 14

Recovery Design: How the Workflow Resumes After the Stop

Recovery design is the layer that separates a fragile prototype from a production system capable of surviving real-world incidents. Stopping an agent is only the first half of the operational problem. The business must also restore the interrupted state without losing data or duplicating actions.

That requires the integration of forensics and execution traces: effectively replayable logs of agent decisions and tool calls that provide the visibility needed for post-incident analysis.

Senior technology leaders should design for three distinct recovery modes:

- Resume from Checkpoints: using state persistence and versioned environments to restore a workflow to its last known good configuration.

- Idempotent Retries: ensuring that individual steps, especially those involving state-changing writes or API calls, can be repeated without side effects or duplicate transactions.

- Compensation Patterns: implementing undo or offsetting workflows for distributed systems where a direct rollback is impossible, such as a sent email or a processed payment.

Idempotency is a critical requirement because AI agents are inherently non-deterministic. Even at a temperature setting of zero, hardware-level variations can produce slight execution differences, which means exact-match testing cannot be relied on for recovery. Systems therefore need to intercept generated code before it reaches an execution sandbox so they can evaluate functional safety and prevent destructive commands from being repeated.

Regulators and auditors increasingly expect organizations to provide evidence, such as replaying an abuse path to confirm that a fix works, before a high-risk system resumes live operation. In practice, the maturity test is not whether the agent fails. It is whether the system can stop, route, and recover the workflow without the business losing control.

Stop, Escalate, Recover

A Practical Control Architecture for Agentic Systems

A useful control architecture sits around the workflow. It defines how far the agent can go, when it must stop, and how the business remains in control when something begins to drift. For businesses, that is the real test of production readiness.

A practical control layer usually comes down to five parts.

1. Permission Boundaries

Start with authority. An agent should never inherit broad access by default. It should operate with a narrow identity, limited tool access, and only the permissions required for a specific workflow or task.

High-risk actions should sit behind additional approval or policy checks. This matters because the safest way to reduce agent risk is to reduce what the model is allowed to touch when something goes wrong.

2. Runtime Monitoring

Production monitoring cannot stop at uptime, latency, or successful API responses. It needs to track how the agent behaves inside the workflow.

That includes repeated retries, unusual tool sequences, stalled execution, rising cost, or actions that fall outside the expected path. The goal is to detect when the workflow starts behaving in ways that appear unsafe, wasteful, or misaligned with the task.

3. Escalation Logic

Not every exception should go directly to a human, and not every human review step is useful. Escalation works best when it is tied to clear conditions: missing data, authority limits, policy-sensitive actions, or uncertainty the system cannot safely resolve on its own.

Some cases should route to a person. Others should fall back to deterministic rules or a narrower workflow. What matters is that escalation is designed as routing logic rather than left as a vague review step at the edge of the system.

4. State and Recovery Controls

A production workflow should not become fragile the moment execution is interrupted. The system needs to know what has already happened, what can be retried safely, and where the workflow should resume.

That means preserving state, keeping execution history, and designing writes so that a retry does not create duplicate transactions or corrupt data. Recovery is about continuing the workflow without losing track of what is already true.

5. Governance Visibility

The final layer is evidence. After deployment, the business needs to be able to review what happened, why it happened, and who had control at each step.

That requires logs, decision records, policy traces, and enough context to determine whether a failure came from infrastructure, workflow design, permissions, or an incorrect operational decision.

Without that visibility, teams may have controls in theory but very little proof that those controls worked in practice.

This is what a real control architecture does. It makes uncertainty governable by bounding authority, monitoring execution, routing exceptions, preserving recoverable state, and leaving behind a usable record of what happened.

Conclusion: The Control Layer Is Part of the Product Architecture

AI agents become production systems the moment they start taking actions inside real workflows. At that point, control is no longer something that can be added later through policy or review. It becomes part of the architecture.

The teams that scale agentic AI effectively will be the ones that decide in advance how the system can be stopped, where it must escalate, and how the workflow recovers when something goes wrong.

In production, that is the real test: whether the business can contain failure, maintain control, and continue operating when the system is under pressure.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript