The dominant story around artificial intelligence has long been shaped by a web interface where a user enters a prompt and receives a response. The rapid emergence and adoption of OpenClaw point to something more significant. OpenClaw is an open-source, self-hosted gateway that connects Large Language Models (LLMs) to tools, messaging channels, and persistent memory. Its relevance for technology leaders lies less in its individual popularity than in the architectural shift it signals: AI moving from a SaaS feature to persistent, user-controlled infrastructure.

For Founders and CTOs, the “lobster” framework, as the OpenClaw community describes it, is a clear signal of where agentic systems are heading. The next phase of deployment will be shaped by who controls the runtime, how state is managed across channels, and whether governance can keep pace with autonomy.

What the Rise of OpenClaw Signals to the Business World

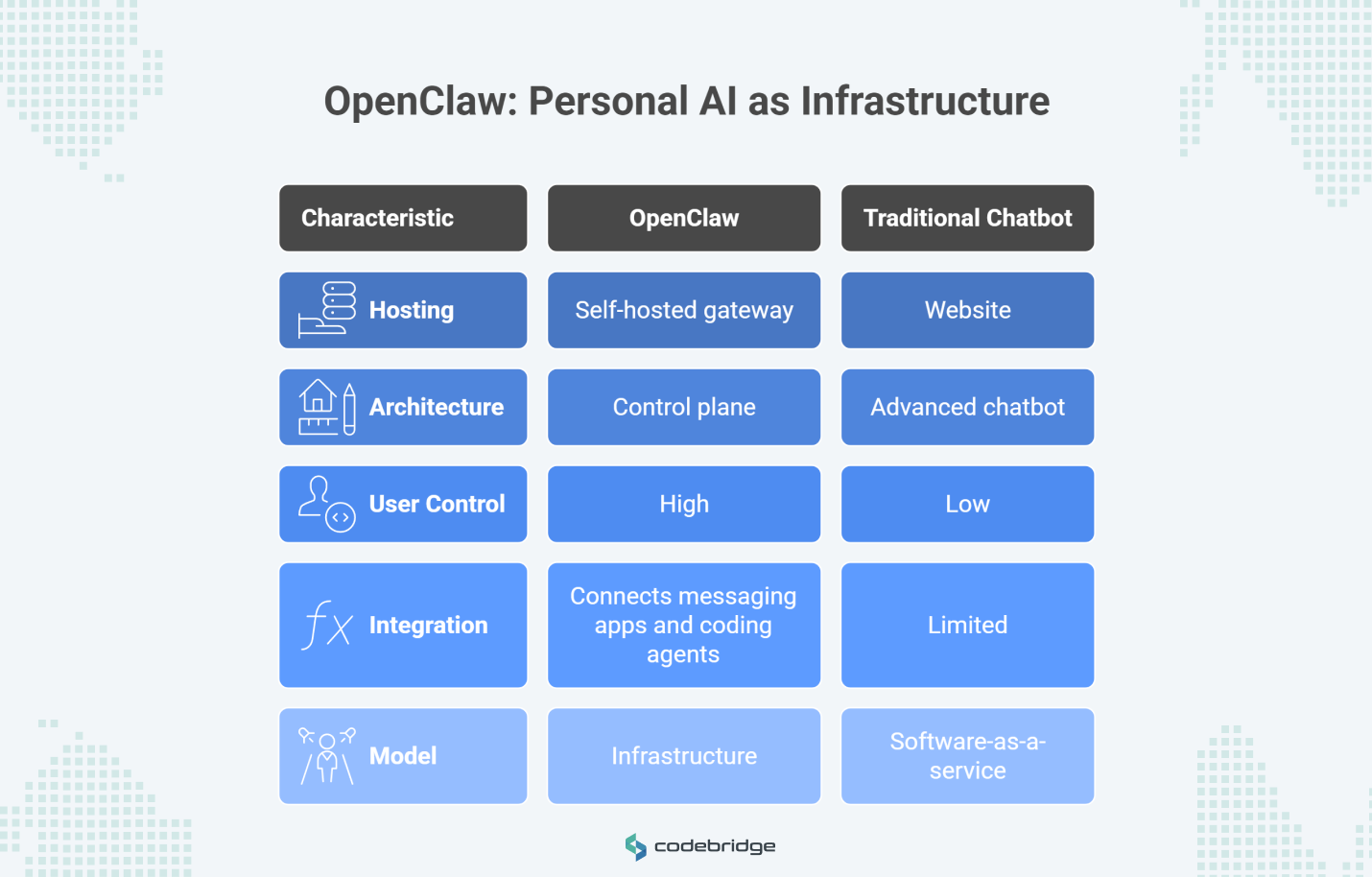

OpenClaw presents itself as a personal AI assistant running on a user’s own hardware, connecting messaging apps such as WhatsApp or Telegram with coding agents like Pi. On the surface, that may resemble a more advanced chatbot. In production, the architecture is materially different: it is a self-hosted gateway process that functions as a control plane.

That distinction matters for long-term system decisions. In this model, the assistant is no longer a website a user visits. It becomes a runtime the user controls. The OpenClaw gateway handles message ingress and egress, session transcripts, tool execution policies, and device pairing.
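A minimal sketch makes the control-plane idea more concrete. It assumes a single in-process gateway that receives a message from a channel, appends it to a per-conversation transcript, calls a model, and queues the reply for the originating channel. `Gateway`, `InboundMessage`, and `handle` are hypothetical names, not OpenClaw's actual code or API. The model is a stub, and tool execution policies and device pairing are omitted.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class InboundMessage:
    channel: str  # illustrative channel name, e.g. "telegram" or "whatsapp"
    sender: str
    text: str


@dataclass
class Gateway:
    # Stub standing in for an LLM call: receives the transcript, returns a reply.
    model: Callable[[list[str]], str]
    # One transcript per (channel, sender) pair, owned by the gateway, not the model.
    transcripts: dict[str, list[str]] = field(default_factory=dict)
    # Egress queue of (channel, recipient, text) tuples for channel adapters to send.
    outbox: list[tuple[str, str, str]] = field(default_factory=list)

    def handle(self, msg: InboundMessage) -> str:
        # Ingress: record the user's message in the matching transcript.
        key = f"{msg.channel}:{msg.sender}"
        transcript = self.transcripts.setdefault(key, [])
        transcript.append(f"user: {msg.text}")
        # The model sees gateway-managed state rather than a one-off prompt.
        reply = self.model(transcript)
        transcript.append(f"assistant: {reply}")
        # Egress: route the reply back to the channel it came from.
        self.outbox.append((msg.channel, msg.sender, reply))
        return reply
```

Because state lives in the gateway, swapping the chat surface or the model leaves the transcript intact, which is the property the paragraph above attributes to a runtime the user controls.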

By separating the interface from the execution layer, OpenClaw points toward a model of personal AI that sits closer to the user’s local environment, including files, communication channels, and execution context. For decision-makers, the more important development is that personal AI is beginning to look more like infrastructure than software-as-a-service.

Why Local-First AI Agents Are Resurging

The return of local-first and self-hosted models is a practical response to the friction built into today’s cloud-based AI ecosystems. Cloud platforms offer convenience, but they also create recurring problems around fragmented toolsets, limited control over local execution, and persistent privacy concerns when sensitive business data is involved.

OpenClaw is attractive to power users and developers who need continuity and control. Its architecture is built around multi-channel access, sessions, and memory, which supports a persistent context that one-off web prompts often lose. From an execution perspective, local-first models also address the "Edge AI Paradox": the tension between the need for frontier-level reasoning and the hardware limits of localized devices. Techniques such as 4-bit quantization, which shrinks model weights, and Grouped-Query Attention (GQA), which shrinks the attention cache, have made it possible for sub-10-billion-parameter models such as Llama-3-8B to approach the reasoning of much larger models on many tasks while running on consumer-grade hardware.
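A back-of-envelope calculation shows why these two techniques matter on consumer hardware. The sketch assumes roughly 8.0 billion parameters and the published Llama-3-8B configuration (32 layers, 32 query heads, 8 key/value heads, head dimension 128) with an 8,192-token context. It ignores activations, runtime overhead, and the per-group scale factors that real 4-bit formats store, so actual footprints are somewhat larger.

```python
def weight_bytes(n_params: float, bits: int) -> float:
    """Memory for model weights at a given precision (bits per parameter)."""
    return n_params * bits / 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_elem: int = 2) -> int:
    """KV-cache size: one key and one value tensor per layer (hence the factor 2)."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


N_PARAMS = 8.0e9  # rounded parameter count
GB = 1e9
GiB = 2**30

print(weight_bytes(N_PARAMS, 16) / GB)  # 16.0 (fp16 weights)
print(weight_bytes(N_PARAMS, 4) / GB)   # 4.0 (4-bit weights)

# GQA shares each key/value head across several query heads.
print(kv_cache_bytes(32, 8, 128, 8192) / GiB)   # 1.0 with 8 KV heads (GQA)
print(kv_cache_bytes(32, 32, 128, 8192) / GiB)  # 4.0 if every query head had its own KV head
```

Under these assumptions, quantization cuts weight memory fourfold and GQA cuts the fp16 attention cache fourfold, which together bring the model within reach of a laptop-class GPU or unified-memory machine.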

As agents gain workflow authority, with the ability to act across files and tools, the core question for a CTO shifts to who controls the runtime. Local-first models are resurging because agents that can run shell commands or browse the web require a trust boundary inside the corporate or personal network, not outside it.

How Personal AI Is Becoming an Infrastructure Layer

The OpenClaw gateway acts as the single source of truth for routing, session management, and channel connections. It supports isolated sessions by agent or workspace, which enables multi-agent routing and reduces the risk of context leakage across tasks or senders. That is a meaningful change. It moves the assistant from an application a user opens to an operating layer that can be accessed through multiple surfaces, including CLI, browser UI, and mobile nodes.
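Session isolation can be illustrated by keying every context on the agent, workspace, and sender together, so that routing a message to a different agent or workspace never exposes another session's history. This is a minimal sketch under that assumption; `SessionKey`, `SessionStore`, `route`, and the channel-to-agent table are hypothetical and do not describe OpenClaw's actual session format.

```python
from collections import defaultdict
from typing import NamedTuple


class SessionKey(NamedTuple):
    agent: str
    workspace: str
    sender: str


class SessionStore:
    """Hypothetical store in which each key owns a separate context list."""

    def __init__(self) -> None:
        self._contexts: defaultdict[SessionKey, list[str]] = defaultdict(list)

    def append(self, key: SessionKey, entry: str) -> None:
        self._contexts[key].append(entry)

    def context(self, key: SessionKey) -> list[str]:
        # Return a copy so callers cannot mutate a session's stored state.
        return list(self._contexts.get(key, []))


# Hypothetical routing table: which agent handles which channel.
ROUTES = {"telegram": "personal", "slack": "work"}


def route(channel: str, workspace: str, sender: str) -> SessionKey:
    # Multi-agent routing: the channel selects the agent; unknown channels fall back.
    agent = ROUTES.get(channel, "default")
    return SessionKey(agent, workspace, sender)
```

The same person messaging through two channels lands in two distinct sessions, which is how per-agent or per-workspace isolation limits context leakage across tasks.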

Seen this way, the chat interface becomes interchangeable. The control plane is what matters: the layer responsible for state, event routing, and permissions. Where product evaluation once focused on chat quality, the next stage will focus more on the resilience and interoperability of the runtime itself. That is why “personal AI infrastructure” is a useful description for this category. It refers to a system that orchestrates work above communication channels and below the business workflows themselves.

What the Shift to Personal AI Means for SaaS Vendors

This architectural shift has implications for traditional SaaS vendors. Investors have already raised concerns that new AI tools could absorb functions that specialized corporate software was originally built to handle, including customer data organization and guided business workflows.

Major vendors are already repositioning in response. Oracle is reworking its cloud-based financial and procurement software into “agentic apps” built to work with AI agents, with the aim of allowing human users to ask business questions while agents retrieve data across fragmented applications. Salesforce is also repositioning itself as an enterprise platform for building, deploying, and governing AI agents on top of its proprietary customer data.

The competitive issue for SaaS vendors is not necessarily total replacement. It is the possibility that they stop being the primary work surface and become, instead, a governed system of record and execution backend. In that scenario, workflow logic moves up a layer toward user-controlled agent platforms. SaaS vendors will increasingly compete on how effectively they expose their data and actions to external agent layers.

Why Open Standards Will Shape the Agent Stack

As the agent ecosystem matures, enterprises are unlikely to rebuild core agent plumbing for every model vendor or application. That need for interoperability helped drive the creation of the Agentic AI Foundation (AAIF) under the Linux Foundation in December 2025. Backed by Anthropic, OpenAI, Google, and Microsoft, the AAIF is intended to support more transparent and collaborative development of agentic AI.

Within that context, the Model Context Protocol (MCP) is emerging as an important standard. Originally introduced by Anthropic, MCP provides a universal protocol for connecting AI models to data, tools, and applications. The ecosystem now counts more than 10,000 active public MCP servers, and the protocol has been adopted across platforms such as ChatGPT, Cursor, and VS Code. In practice, MCP is becoming part of the connective layer that allows agents to work together.
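At the wire level, MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The sketch below only builds and parses messages; it assumes a text-only tool result, omits the transport and the initialization handshake, and uses a hypothetical tool name (`search_tickets`) and a hand-written sample response for illustration.

```python
import itertools
import json
from typing import Optional

_ids = itertools.count(1)  # JSON-RPC requests need an id unique per session


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


def call_tool(name: str, arguments: dict) -> str:
    # MCP tool invocation: method "tools/call" with the tool name and its arguments.
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


def text_from_result(raw: str) -> list[str]:
    """Extract text content items from a tools/call response (text-only assumption)."""
    resp = json.loads(raw)
    if "error" in resp:
        raise RuntimeError(resp["error"].get("message", "JSON-RPC error"))
    return [c["text"] for c in resp["result"].get("content", []) if c.get("type") == "text"]
```

Because the envelope is the same for every server, an agent runtime can discover tools with `tools/list` and call them without vendor-specific glue, which is the interoperability argument made above.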

For technical leaders, the most durable agent layer is unlikely to be the one with the strongest demo. It is more likely to be the one built on the most interoperable and portable control architecture.

Why Governance Matters More Than Autonomy for AI Agents

In production environments, raw autonomy is a liability. As agents gain access across tools and sensitive data, architectural boundaries have to be enforced outside the model itself. A study from Northeastern University showed that OpenClaw agents, despite being trained for "good behavior," could still be manipulated by humans, through guilt-tripping and gaslighting, into sabotaging themselves or disclosing secrets.

At the same time, regulation is becoming more demanding. The European Union’s AI Act, which entered into force in August 2024, establishes a legal framework based on four levels of risk. By August 2026, most of its rules will be fully applicable, requiring providers of high-risk AI systems to maintain strict documentation, human oversight, and strong cybersecurity and robustness practices.

For enterprise adoption, the decisive question is not simply whether an agent can complete a task. It is whether an organization can prove what that agent can access and how it can be audited when something goes wrong. Under that standard, governance mechanisms become core architectural components. One example of this shift is the "dangerous three" separation, which keeps external sources, implementation plans, and execution apart. OpenClaw's own documentation already reflects this emphasis through tokens, allowlists, and workspace isolation.
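Enforcing boundaries outside the model means the runtime, not the prompt, decides whether a tool call proceeds. The sketch assumes a tool allowlist plus a rule that file paths must resolve inside a single workspace directory. `ToolPolicy` and `PolicyError` are hypothetical names, and this is not how OpenClaw implements its allowlists. It also does not defend against symlink races between the check and the actual file access.

```python
from pathlib import Path
from typing import Optional


class PolicyError(PermissionError):
    """Raised when a requested action falls outside the configured policy."""


class ToolPolicy:
    def __init__(self, allowed_tools: set[str], workspace: Path) -> None:
        self.allowed_tools = frozenset(allowed_tools)
        self.workspace = workspace.resolve()

    def check(self, tool: str, path: Optional[str] = None) -> None:
        # Allowlist: anything not explicitly permitted is denied.
        if tool not in self.allowed_tools:
            raise PolicyError(f"tool not allowlisted: {tool}")
        if path is not None:
            # Resolve ".." segments and absolute paths before comparing.
            target = (self.workspace / path).resolve()
            # Path.is_relative_to requires Python 3.9+.
            if not target.is_relative_to(self.workspace):
                raise PolicyError(f"path escapes workspace: {path}")
```

However persuasive a manipulated prompt may be, a denied call raises before any side effect occurs, and each decision is easy to log for later audit.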

What Enterprises Will Standardize in Personal AI Infrastructure

Individuals and technical teams will continue experimenting with local-first, self-hosted assistants to preserve privacy and control in their own creative processes. At the enterprise level, however, organizations are more likely to converge around governed agent platforms.

Such platforms are likely to share four characteristics:

- Clear identity and permissions, with standardized models for agent identity similar to those being developed within the AAIF.

- Interoperable routing, supported by protocols such as MCP for connecting models to enterprise systems.

- Rigorous auditability, including hash-chained audit logging and tamper-evident tracing.

- Deterministic execution, moving toward a “code before prompts” model in which deterministic orchestration layers wrap probabilistic models for consistency.
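Hash-chained audit logging can be sketched in a few lines: each entry stores the hash of the previous entry, so altering any earlier record breaks every later link. This minimal version assumes JSON-serializable events and SHA-256; `AuditLog` is a hypothetical name. The chain is tamper-evident rather than tamper-proof, since anyone able to rewrite the whole log can recompute every hash, so in practice the latest hash would also be anchored somewhere the agent cannot write.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys, no whitespace) so the same event always hashes the same.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, event: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        h = _digest(prev, event)
        # Store a shallow copy so later changes to the caller's dict are not silently logged.
        self.entries.append({"prev": prev, "event": dict(event), "hash": h})
        return h

    def verify(self) -> bool:
        """Recompute the chain from the start; any edited or reordered entry fails."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True
```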

In that framing, OpenClaw appears not as a final answer, but as an early and visible prototype of the direction the market is taking. It shows that reliable agentic AI depends on specialized runtimes that enterprises can monitor, control, and recover.

Conclusion

OpenClaw marks an important step in the maturation of artificial intelligence. The category is moving away from chatbot UX and toward agent runtime design. In that environment, success will be defined less by model cleverness alone and more by the quality of the control plane: the infrastructure that governs permissions, interoperability, and operational trust. For technology leaders, the strategic objective is no longer only choosing the right AI tool. It is building the right AI architecture.
