One agent drafting pull requests or triaging support tickets is a solved problem. The organizational question arises when you deploy five, ten, or twenty agents across a delivery pipeline. Someone has to assign goals, own outputs, and handle exceptions when escalation paths are unclear.

Most teams skip this step. They connect agents to tools, give them channel access, and treat coordination as something they'll figure out later. That works until two agents duplicate the same task or a customer-facing action fires without approval.

This article walks through a specific architecture for hybrid agent-human organizations using OpenClaw as the execution layer and Paperclip as the organizational layer. The focus is on where to draw boundaries, how to structure oversight without creating bottlenecks, and what typically breaks when teams get this wrong.

Why Agent Runtime Alone Doesn't Solve Multi-Agent Coordination

When you run one agent, you are the coordinator. You feed it context, review its output, and decide what happens next. That works because the management overhead is yours, and it scales with your attention.

Add a second agent and the overhead changes shape. Now you need to answer: Does Agent B have access to what Agent A produced? If both agents touch the same ticket, who owns the final state? If Agent B fails mid-task, does Agent A know to stop waiting?

Google Cloud's multi-agent architecture guidance makes the distinction clearly. Single-agent systems can handle multi-step work with external data. Multi-agent systems need explicit coordination patterns because human-in-the-loop coordination does not scale.

OpenClaw addresses the execution side of the problem. It provides a self-hosted gateway connecting agents to messaging platforms (WhatsApp, Telegram, Discord), with control over tool access, automation hooks, and session persistence. Your agents can read channels, call APIs, and maintain state across conversations. OpenClaw gives each agent a stable runtime environment.

But execution surface and organizational control are not the same thing. OpenClaw doesn't define who sets an agent's goals, who reviews its output, what budget ceiling applies to a given role, or what should happen when an agent hits an exception it can't resolve.

Paperclip handles that layer. It models your organization through role definitions, goal hierarchies, per-agent budgets, and heartbeat-driven coordination cycles. Where OpenClaw gives agents the ability to act, Paperclip defines the boundaries and reporting structure within which they act.

Without that structure, you have a collection of capable but unmanaged processes, each operating on its own assumptions about what matters.

The Real Opportunity Is Hybrid Organizations

Full-automation narratives make for good conference talks. In production, they collapse under delivery risk. When an agent cancels a subscription it shouldn't have, pushes a deployment that breaks a downstream service, or commits budget against the wrong project, someone has to own the consequence. That someone is always a human, whether you designed for it or not.

Anthropic and OpenAI both address this directly in their agent design frameworks. Anthropic's guidance centers on a specific principle that humans must retain control over how goals are pursued, not just whether they're achieved. Their framework calls for explicit checkpoints before high-stakes actions like financial commitments or account modifications, and treats the ability to pause or redirect an agent mid-task as a design requirement, not an optional feature.

OpenAI's safety guidance takes a complementary angle, recommending that tool approvals stay active so end users confirm destructive operations before they execute. Their architecture assumes that any agent with write access to production systems needs a human confirmation layer, particularly for actions that can't be reversed. Both frameworks treat human oversight as load-bearing infrastructure, not a compliance checkbox.

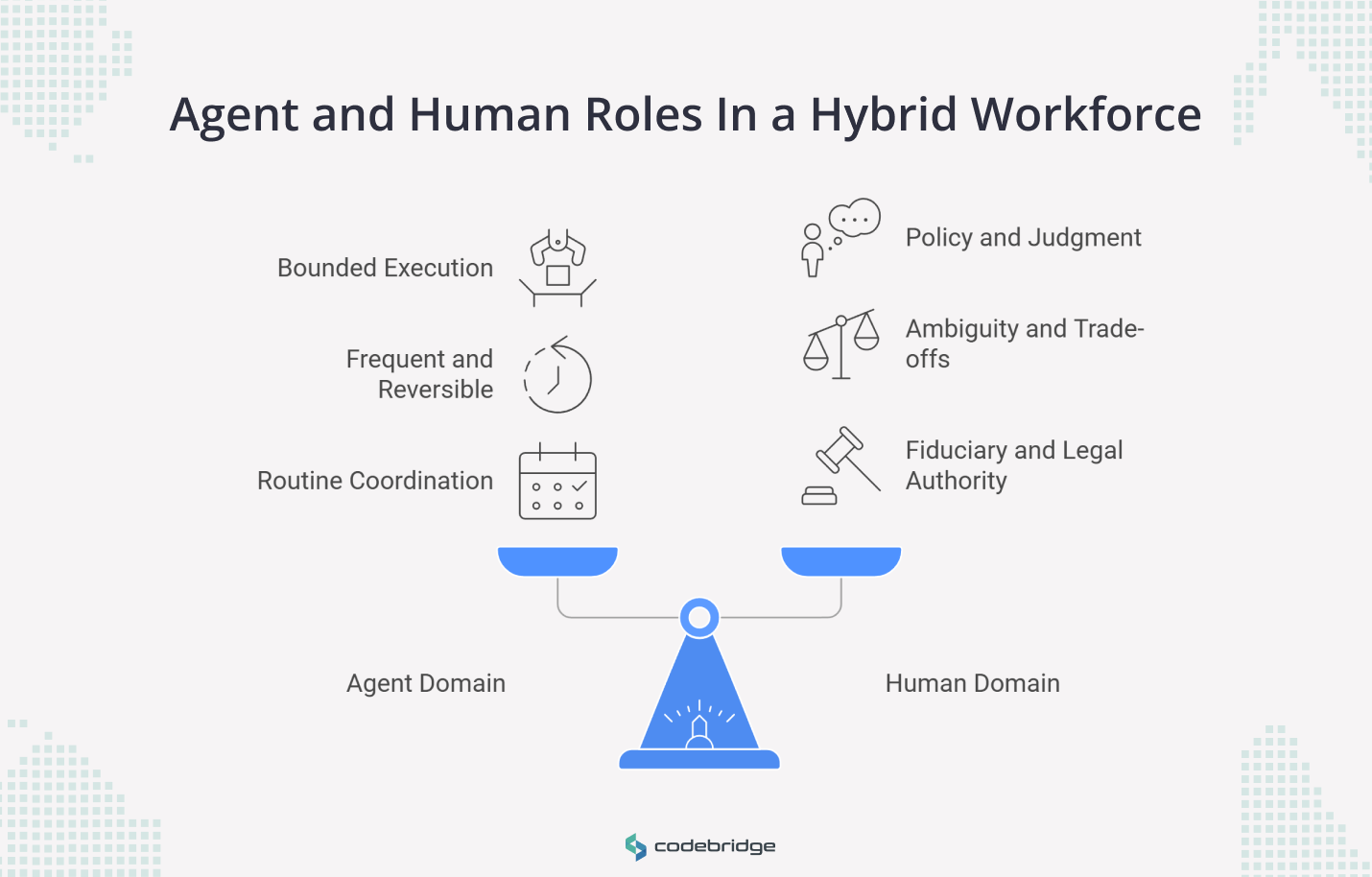

The practical implication for your org structure is that the human role shifts from execution to system design. You stop reviewing every pull request and start defining which categories of action require approval, what budget ceilings apply per agent role, and which exceptions trigger escalation. Day-to-day, that means monitoring agent output asynchronously and intervening when the system hits a policy boundary or an unresolvable exception, not approving each individual task.

Dividing Work Between Agents and Humans in the OpenClaw/Paperclip Stack

The division comes down to three questions about any given task: how structured is it, how often does it repeat, and what happens if the output is wrong?

What Agents Handle Through OpenClaw

Agents connected through OpenClaw can operate with direct access to messaging channels, APIs, file systems, and session history. Each agent runs inside a sandboxed environment (Docker containers), which means it can execute code and modify files without risking the host system. That sandboxing is what makes the following delegation patterns viable rather than reckless.

An OpenClaw agent can watch a Telegram channel or a support queue continuously, classify incoming requests, pull relevant context from connected systems, and route items to the right owner. The output is a structured triage, so a wrong classification costs minutes of rework rather than a production incident.

Bug reproduction and patch drafting follow the same logic. An agent can pull a reported issue, attempt to reproduce it in a sandboxed environment, draft a fix, and run the existing test suite against it. The work product is a proposed patch with test results, not a merged commit.

Paperclip adds a scheduling layer on top of this. Through its heartbeat model, each agent wakes on a defined cycle, checks its current assignments against the goal hierarchy, executes the next task in its queue, reports the result, and returns to idle. This creates a natural audit boundary: every heartbeat produces a discrete record of what the agent attempted, what tools it called, and what it returned.

What Stays With Your Team

Four categories of work should stay with humans, and Paperclip's organizational model is designed to enforce that boundary.

- Goal-setting and prioritization

The Board layer in Paperclip is where the human operator defines the company's mission, approves the CEO agent's proposed strategy, and sets priorities that cascade through the org chart. Agents can surface data and propose plans. The approval to pursue a direction remains human.

- Trade-off decisions

Conflicts between ship date and test coverage, cost and quality, or scope and timeline require a business context that agents don't carry. When a Paperclip agent encounters competing objectives, the system escalates rather than resolves.

- Budget authority

Paperclip enforces budget ceilings at the role level. Each agent role has a monthly limit set by the human operator, preventing a single agent from consuming disproportionate resources on exploratory work while higher-priority tasks wait. NIST's AI Risk Management Framework supports this pattern, assigning governance and budget authority to actors with management and fiduciary responsibility.

- Policy exceptions

Any action outside pre-defined guardrails (a refund above a set threshold, a deployment touching production infrastructure, a communication sent to an external party) routes to the human lead. The agent pauses, the trace is available for inspection, and the human decides whether to approve, modify, or stop the workflow.

How the Three-Layer Operating Model Makes Agents and People Work Together

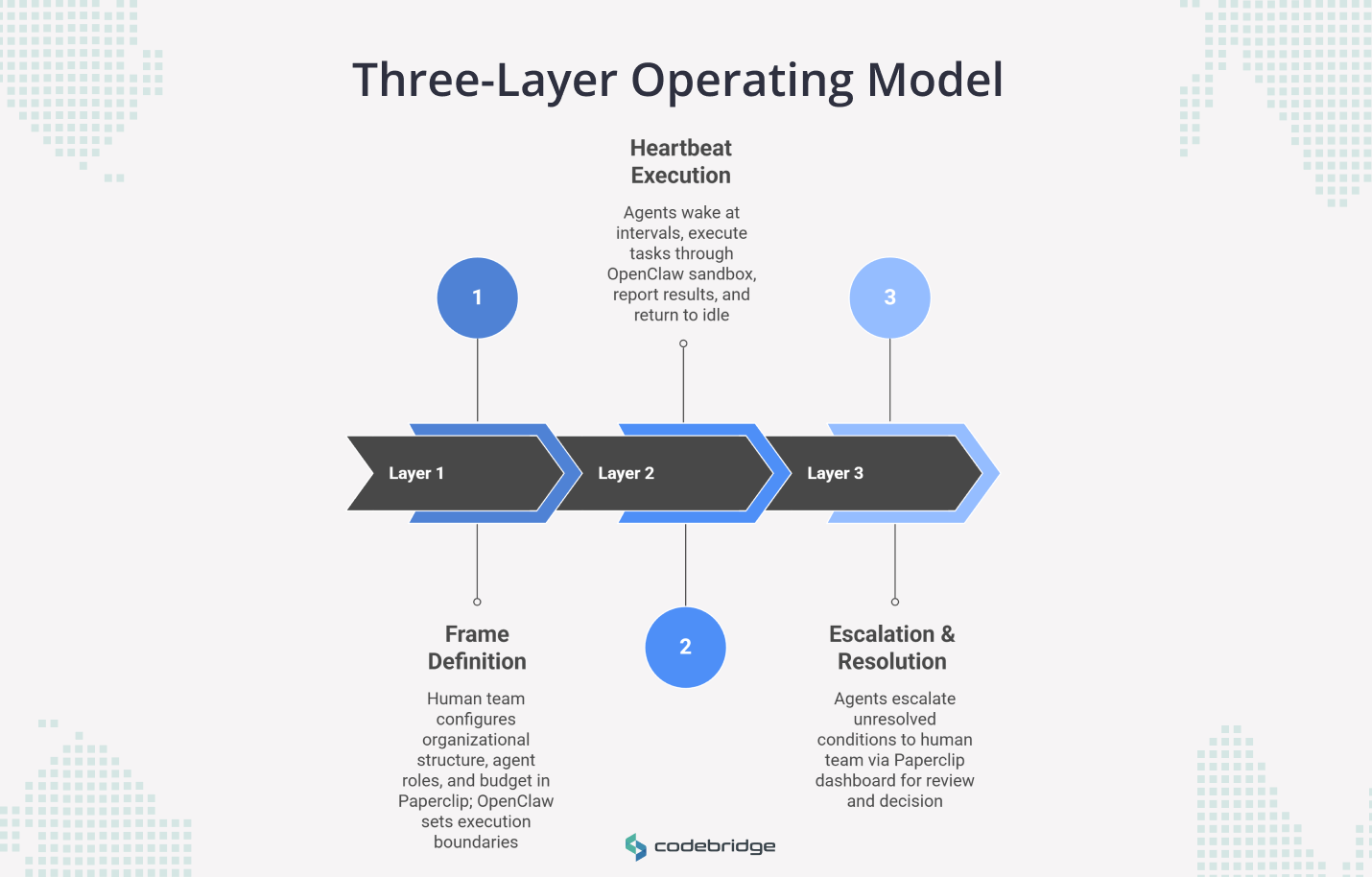

The OpenClaw/Paperclip architecture splits into three layers: governance, execution cycles, and escalation. Each layer has a clear owner and a defined interface between the two systems.

Layer 1: Your team defines the frame in Paperclip

Paperclip's dashboard is where you configure the organizational structure your agents operate within. As the Board (Paperclip's term for the human operator), you set the company mission, define agent roles in the org chart, approve or reject the CEO agent's proposed strategy, and assign monthly budget ceilings per role. Adding a new agent to the organization means defining its job description, its reporting line, and the scope of actions it can take.

OpenClaw's configuration handles the corresponding execution boundaries. When you add an engineering agent in Paperclip, you configure its OpenClaw gateway to grant access to specific channels (a Telegram dev group, a Discord support channel), specific tools (GitHub API, CI pipeline triggers), and specific sandbox permissions. The frame layer is where these two systems align: Paperclip defines what an agent is responsible for, OpenClaw defines what it can touch.

Layer 2: Agents execute in heartbeat cycles through OpenClaw

Agents don't run continuously. Paperclip's heartbeat model schedules each agent to wake at a defined interval, check its current assignments against the goal hierarchy, execute the next task in its queue, report the result, and return to idle.

During the active phase of a heartbeat, OpenClaw manages the execution session. The agent connects through OpenClaw's gateway to its assigned tools and channels, operates inside a sandboxed environment, and produces a discrete output (a drafted patch, a triage summary, a status update). OpenClaw maintains session history so the agent can reference prior cycles when context is relevant, but each heartbeat is a bounded unit with a clear start, execution, and report.

This cycle structure gives you two control surfaces. On the Paperclip side, you control what the agent works on, how often it wakes, and what budget it can consume per cycle. On the OpenClaw side, you control what tools and channels the agent accesses during execution. Cost exposure is bounded by cycle frequency and per-role budget limits rather than by hoping an agent will self-regulate.

Layer 3: Escalation Routes, Exceptions To Your Team

When an agent encounters a condition it can't resolve within its guardrails, the system escalates. Specific triggers include: a test suite failure on a proposed patch, a task that would exceed the role's budget ceiling, an action that falls outside the agent's defined policy boundaries, or an unresolvable conflict between competing objectives.

The escalation surfaces a trace of the agent's heartbeat cycle: every tool call, every decision point, every intermediate output. Your team reviews the trace through Paperclip's dashboard and decides whether to approve the pending action, redirect the agent to a different approach, or stop the workflow. The agent remains paused until the human lead resolves the escalation, which prevents cascading failures where downstream agents act on an unreviewed output.

What Usually Fails in Agent-Human Organizations

When these systems break, the LLM is almost never the problem. The failures come from how you configured the organization around it.

- Unclear escalation of ownership. If you don't assign a specific human to each agent role's escalation path in Paperclip, a blocked agent stays blocked. Its heartbeat cycle pauses at the escalation step, downstream agents that depend on its output stall, and no one receives a notification because no one was designated to receive one.

- Absent Policy Boundaries: Giving agents execution capability (e.g., full shell access via OpenClaw) without defining policy boundaries creates immense risk. An agent might helpfully delete what it considers duplicate files, only to restructure a user's entire system in a way that was never intended.

- Performative Oversight: Inserting a human approval step into every heartbeat cycle without giving that human the trace data to make an informed decision creates a bottleneck that adds latency without adding control. If your lead is clicking "approve" on 40 agent outputs a day without reviewing the tool calls and decision points behind them, you have approval theater rather than meaningful oversight. Paperclip's trace records exist for this reason, but they only work if the review process is designed around reading them.

- Missing Budget Controls: Without per-role budget ceilings in Paperclip, an agent running exploratory research can consume the same resources as your critical delivery pipeline. One engineering agent spinning up extended test cycles or making repeated API calls can crowd out agents handling time-sensitive triage.

- Role Ambiguity: When multiple agents have overlapping job descriptions but no clear owner for a specific phase of a workflow, the result is duplicated work or fragmented results.

Conclusion

The value of combining OpenClaw and Paperclip is the ability to move from isolated execution to a structured operating model. OpenClaw serves as the execution layer, providing the necessary gateway, tools, and runtime control. Paperclip adds the organizational layer, providing the roles, goals, budgets, and bounded cycles required for corporate oversight.

However, the ultimate authority remains human. Governance, approval policies, and accountability for consequences cannot be delegated away. By designing a system where authority is explicit and agents operate within supervised cycles, companies can build organizations that move with the speed of AI while maintaining the control of a professional enterprise.

Heading 1

Heading 2

Heading 3

Heading 4

Heading 5

Heading 6

Lorem ipsum dolor sit amet, consectetur adipiscing elit, sed do eiusmod tempor incididunt ut labore et dolore magna aliqua. Ut enim ad minim veniam, quis nostrud exercitation ullamco laboris nisi ut aliquip ex ea commodo consequat. Duis aute irure dolor in reprehenderit in voluptate velit esse cillum dolore eu fugiat nulla pariatur.

Block quote

Ordered list

- Item 1

- Item 2

- Item 3

Unordered list

- Item A

- Item B

- Item C

Bold text

Emphasis

Superscript

Subscript