Last month, an aerospace hiring manager posted a frustrated confession that stopped me cold. After reviewing over 1,000 applications for a senior developer role, they found maybe 20 real candidates. The rest? A flood of AI-generated resumes claiming impossible credentials. One applicant had apparently "managed satellite systems at PayPal." The cover letters looked perfect. The coding challenges came back with the right answers. But when it came time for live technical discussions, the facade crumbled instantly.

If you're a founder or CEO trying to build an AI product team in 2026, this story probably hits uncomfortably close to home. The hiring landscape has broken, and the traditional playbook (post a job, screen resumes, run coding tests) no longer separates genuine talent from sophisticated fabrication.

KEY TAKEAWAYS

AI-generated applications have overwhelmed traditional screening, making resume review nearly worthless for identifying genuine candidates.

Demand for AI developers has grown 143% year-over-year while overall tech job openings are down 36% from early 2020 levels, creating brutal competition for a small pool of verified talent.

Human-centered skills now outrank coding ability in AI job requirements, changing what "qualified" means.

Milestone-based verification and live technical assessment have become non-negotiable for any serious AI hiring process.

The Systemic Collapse of Developer Screening

Here's what the data reveals about the hiring environment you're navigating: AI-related job postings roughly doubled, from 66,000 to nearly 139,000, between January and April 2025 alone. AI Engineer roles specifically grew 143.2% year-over-year, the fastest growth of any technical position. Yet over the same period, overall US tech job openings sat 36% below early 2020 levels.
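For readers who want to sanity-check the posting figures, here is a minimal sketch of the percent-change arithmetic. It treats "nearly 139,000" as exactly 139,000, which is an approximation, and `pct_change` is a hypothetical helper name used only for illustration.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from `old` to `new` (positive means growth)."""
    return (new - old) / old * 100


# Assumption: "nearly 139,000" is rounded up to 139,000 for this check.
postings_growth = pct_change(66_000, 139_000)
print(round(postings_growth, 1))  # 110.6, i.e. slightly more than a doubling
```

In other words, the January-to-April jump in AI-related postings is about a 110% increase, which is consistent with describing it as a doubling.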

This creates a paradox that's crushing hiring managers: explosive demand for AI talent combined with a broader hiring freeze means every qualified AI developer receives dozens of competing offers. Meanwhile, the ease of using LLMs to generate convincing applications has flooded every posting with fabricated credentials that surface-level screening can't detect.

One hiring manager described the new reality bluntly: applicants are "straight up lying about their qualifications, which bumps them to the top of the list, but then the screening comes and they're very obviously just plugging questions into an LLM." Traditional resume screening fails when AI-generated applications look identical to genuine ones.

The Phantom Job Problem

But the dysfunction runs deeper than fraudulent applications. An experienced web developer recently observed a pattern that should concern every founder: many of those AI developer job postings you're competing against don't represent real hiring intent at all.

"Most of these roles are never meant to fill, but again, just for outward/inward optics. Instead, they ask their existing devs to pick up the slack, use AI."

u/[webdev user], Reddit r/webdev

Companies post phantom roles for investor optics while expecting existing teams to absorb the workload using AI tools. This means your genuine job posting is competing for attention against thousands of fake ones, while real candidates have learned to be skeptical of any posting's legitimacy.

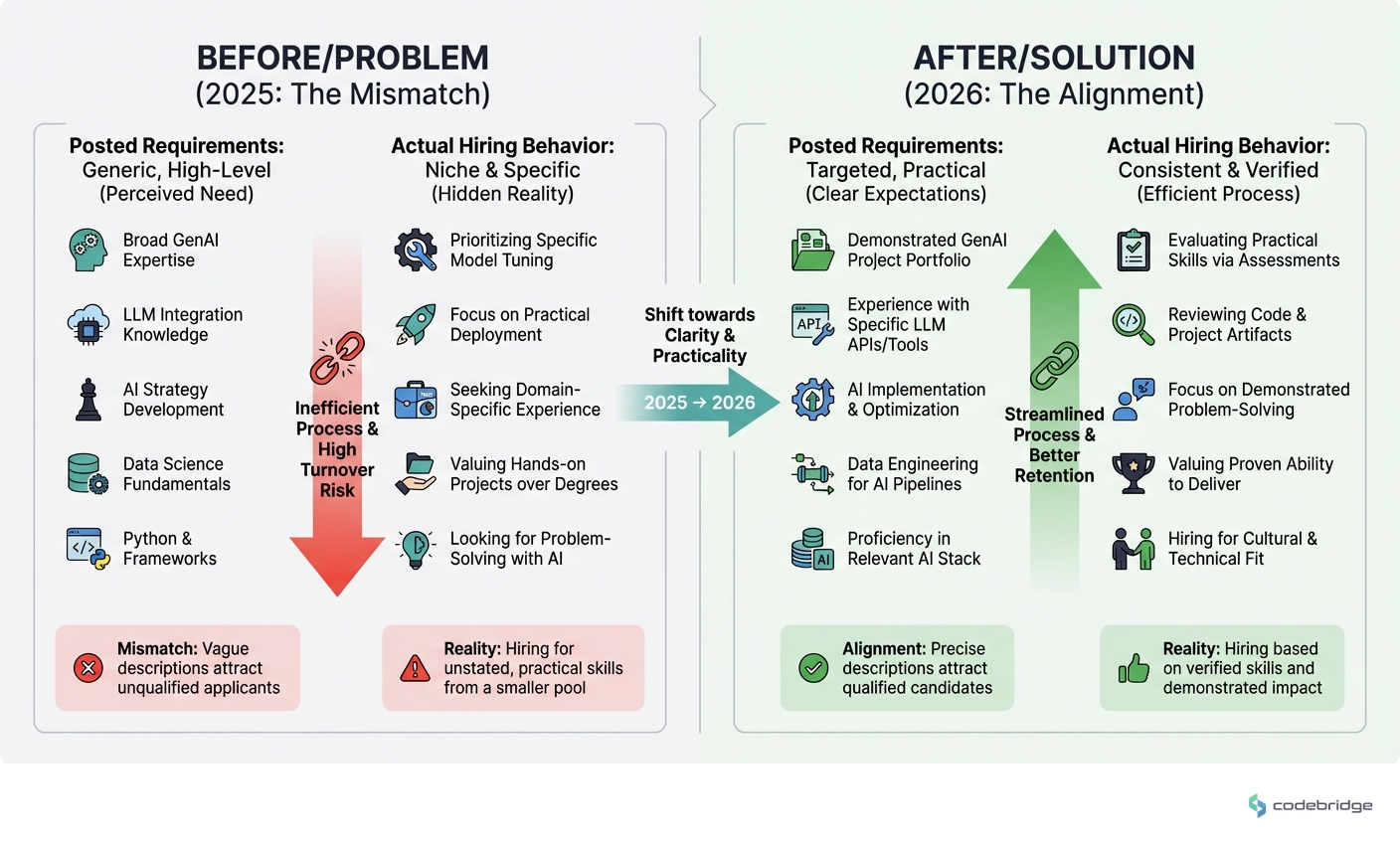

The following comparison illustrates how the hiring landscape has fractured between what companies advertise and what they actually need:

For candidates, this creates exhaustion and cynicism. For founders genuinely trying to build teams, it means the best developers have developed sophisticated filters to ignore most opportunities, potentially including yours.

What Successful AI Teams Do Differently

The companies winning the AI talent war have abandoned the post-and-pray approach entirely. Look at how the leaders are adapting:

Google rebuilt its engineering organization after 2023 layoffs by focusing specifically on AI engineers in concentrated geographic hubs. The result: engineering headcount up 16% from 2022 levels. They didn't try to hire everyone everywhere; they went deep in specific talent pools.

Anthropic faced the same bidding war as everyone else, with competitors offering multimillion-dollar packages. Their response wasn't to match the highest offers. Instead, they implemented retention strategies emphasizing culture and mission alignment, achieving an 80% retention rate that leads their peers. They understood that keeping talent matters more than acquiring it.

Meta took the aggressive approach, offering packages up to $10-20 million for their superintelligence lab. Even with that firepower, some top researchers still rejected their offers. Money alone doesn't close AI talent.

The pattern is clear: successful AI hiring requires verification systems, not just compensation. You can't outbid Meta, but you can out-verify and out-retain.

The Skills Paradox: Why Your Technical Screen Is Filtering Out Your Best Candidates

Here's something that surprised me in the 2025 data: design and human-centered skills like communication have surpassed coding as the top demands in AI job listings. This contradicts everything we assumed about technical hiring.

The reason becomes clear when you examine what AI product development actually requires. Building with LLMs isn't primarily about writing algorithms; it's about understanding user intent, designing appropriate interfaces for probabilistic outputs, and communicating complex tradeoffs to non-technical stakeholders.

A growing startup planning to double their engineering team from 25 to 50 developers captured this tension perfectly. Despite offering 80th percentile compensation, they found that money alone wasn't sufficient. Their concern: "We're all worried about the prospect of keeping our internal culture strong while simultaneously not lowering our hiring standards."

Their solution involved a multi-channel sourcing strategy including a developer blog, conference recruiting, and referral incentives. They recognized that the best AI developers aren't actively applying to job postings,they're being recruited through trusted networks and demonstrated expertise.

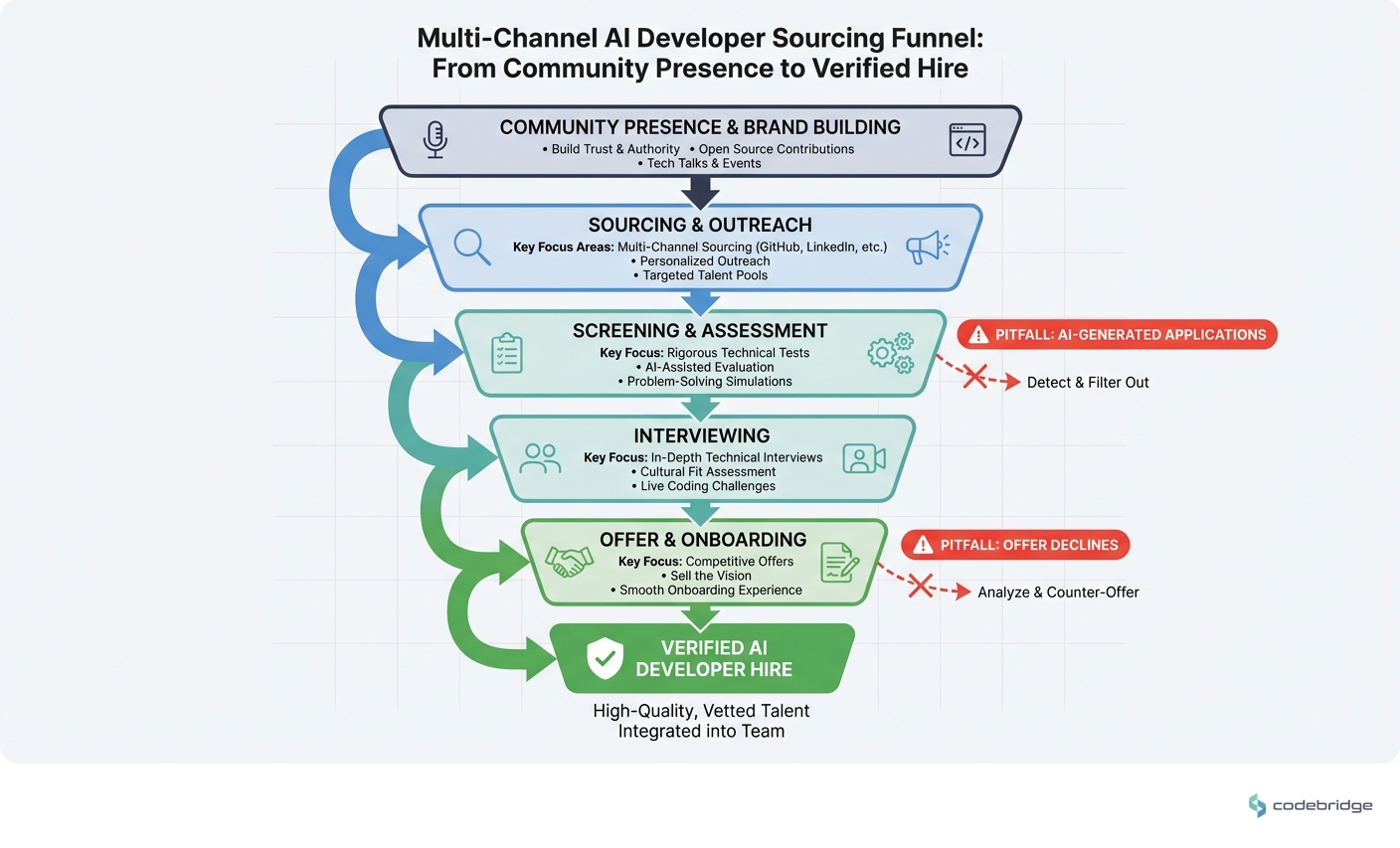

The process flow below shows how effective AI developer sourcing differs from traditional job posting approaches:

A Vetting Framework That Actually Works

Based on what's working for companies successfully building AI teams, here's a framework that addresses the current dysfunction:

1. Verify Before You Screen

Move live technical assessment to the earliest possible stage. A small business owner hiring LLM engineers put it directly: "Since there is a lot of fraud in this department, I would prefer the early payments to start small." The same principle applies to time investment. Don't spend hours reviewing resumes that may be entirely fabricated. A 15-minute live technical conversation can eliminate 80% of fraudulent applications.

2. Source Through Demonstrated Work, Not Claims

Referrals-only hiring is tempting but disadvantages real applicants who lack network connections. Instead, verify through community channels: GitHub contribution history, technical blog posts, conference talks, or active participation in relevant Discord/Slack communities. These artifacts are much harder to fabricate than resumes.

3. Test for Communication, Not Just Code

Given that non-IT roles requiring generative AI skills grew 9x from 2022 to 2024, your AI developers will increasingly work alongside non-technical team members. Include a component where candidates explain technical concepts to a non-engineer. This reveals both communication ability and depth of understanding.

4. Use Milestone-Based Engagement

For contractors or early hires, structure compensation around deliverables rather than time. Start with a small paid project (a real problem from your backlog) before committing to full engagement. This verifies capability while limiting exposure to fraud.

5. Verify Hiring Intent From the Other Side

When competing for candidates, remember they're screening you too. Experienced candidates increasingly verify a company's hiring intent through insider contacts before investing time in a process. Be prepared to demonstrate that your role is real: share specific project details, introduce team members, discuss concrete timelines. Transparency about your actual hiring process differentiates you from phantom postings.
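The milestone-based engagement in step 4, combined with the advice that early payments should start small, can be sketched as a simple payment schedule. This is one possible approach, not a prescribed method: the linear weighting (1, 2, 3, ...) and the function name `milestone_schedule` are assumptions chosen for illustration.

```python
from decimal import Decimal, ROUND_DOWN


def milestone_schedule(total: Decimal, milestones: int) -> list[Decimal]:
    """Split `total` into payments that start small and grow linearly.

    Assumption: weights 1, 2, 3, ... are an illustrative choice; any
    increasing weighting would serve the same purpose.
    """
    if milestones < 1:
        raise ValueError("need at least one milestone")
    weights = range(1, milestones + 1)
    weight_sum = sum(weights)
    cent = Decimal("0.01")
    # Round each payment down to the cent so we never overpay early.
    payments = [
        (total * w / weight_sum).quantize(cent, rounding=ROUND_DOWN)
        for w in weights
    ]
    # Put any rounding leftover on the final payment so the sum is exact.
    payments[-1] += total - sum(payments)
    return payments


# Hypothetical example: a 6,000 budget split over three deliverables.
schedule = milestone_schedule(Decimal("6000"), 3)
print(schedule)  # payments of 1000.00, 2000.00 and 3000.00
```

The design choice here is that the smallest payment is exposed first: if the first deliverable reveals a fabricated skill set, only a fraction of the budget has been spent.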

The Regional Arbitrage Opportunity

One trend that deserves attention: Asia's AI hiring grew 94.2% year-over-year compared to North America's 88.9%. This widening gap creates both competition and opportunity.

If you're building an AI product team, the talent pool is increasingly global. But this also means your verification challenges multiply. Time zone differences make live technical assessment harder. Cultural context affects communication evaluation. Reference checks become more complex across borders.

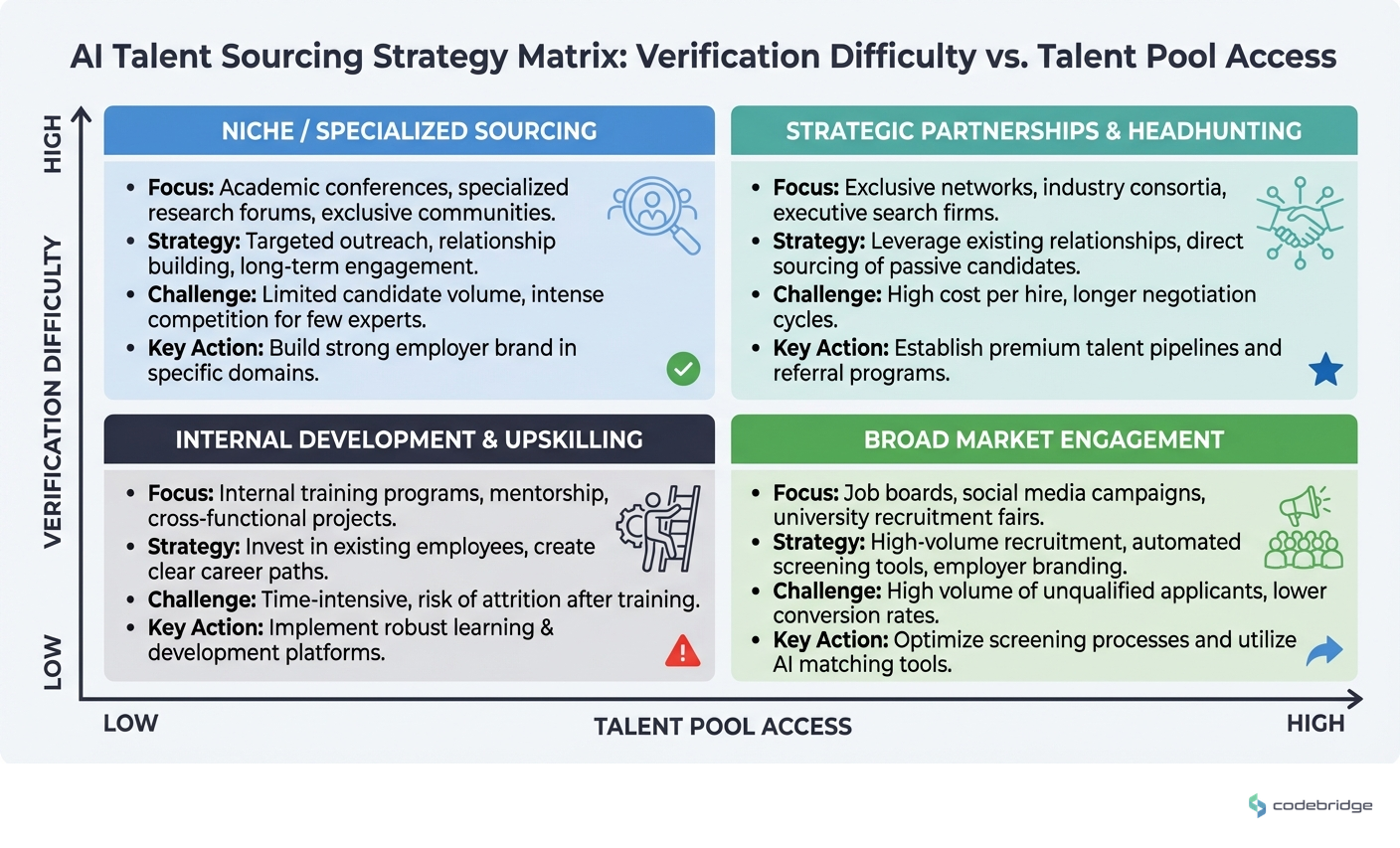

The quadrant below maps different hiring approaches against their verification difficulty and talent pool access:

Companies successfully hiring globally have invested in asynchronous verification methods: recorded technical explanations, collaborative document reviews, and extended trial projects rather than single high-stakes interviews.

The Entry-Level Collapse and What It Means for Your Pipeline

One statistic should shape your long-term hiring strategy: the share of new computer science graduates landing roles at the Magnificent Seven has dropped by more than half since 2022. Big Tech is prioritizing experienced AI talent over entry-level hires.

This creates a talent development vacuum. If no one is training junior AI developers, where will your senior talent come from in three years? Forward-thinking founders are building internal development programs now, hiring promising candidates with adjacent skills and investing in their AI-specific training.

It's a contrarian move, since most companies are chasing the same pool of experienced candidates, but it may be the only sustainable approach as the verified senior talent pool remains constrained.

Returning to the Hiring Manager's Dilemma

Remember the aerospace hiring manager drowning in 1,000+ applications to find 20 real candidates? Their instinct was to move to referrals-only hiring. It's understandable, but it creates its own problems: it disadvantages qualified candidates without network access and limits diversity.

The better answer isn't to close the door. It's to change what the door looks like. When your first interaction with candidates is a live technical conversation rather than resume review, the fabricators self-select out. When you source through demonstrated work rather than claimed credentials, the signal-to-noise ratio inverts.

The AI developer hiring crisis is real, but it's not unsolvable. It requires abandoning assumptions about how hiring should work and building verification systems appropriate for an era where credentials can be fabricated in seconds.

Your AI product's success depends on the team you build. In 2026, that means becoming excellent at verification, not just recruitment.

Building an AI product team and struggling with verification?

Download our technical assessment template designed for the current hiring environment.

Diagnostic Checklist: Signs Your AI Hiring Process Is Broken

More than 50% of candidates who pass resume screening fail basic live technical questions

Your time-to-hire for AI roles exceeds 90 days despite high application volume

Candidates frequently ghost after receiving your offer, suggesting competing offers you didn't know about

Your coding challenge completion rate is high but interview pass rate is low (suggests AI-assisted cheating)

You can't articulate what differentiates your opportunity from phantom job postings

Your AI hires consistently struggle with cross-functional communication despite strong technical credentials

You have no verification mechanism for claimed GitHub contributions or portfolio projects

Your referral pipeline produces less than 30% of your AI developer hires

Candidates ask about your hiring intent more than your product vision
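The three checklist items with explicit numeric thresholds (50% live-screen failure, 90-day time-to-hire, 30% referral share) can be checked against your own funnel data. The following is a minimal sketch under stated assumptions: the `HiringMetrics` fields and the `warning_signs` function are hypothetical names, and the remaining checklist items are qualitative and deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class HiringMetrics:
    """Hypothetical funnel counts for one AI hiring cycle."""
    resume_passers: int           # candidates who passed resume screening
    live_screen_failures: int     # of those, how many failed live questions
    time_to_hire_days: int        # posting date to accepted offer
    ai_hires: int                 # total AI developer hires
    ai_hires_from_referrals: int  # of those, how many came via referral


def warning_signs(m: HiringMetrics) -> list[str]:
    """Return the numeric checklist items that this cycle triggers."""
    signs = []
    if m.resume_passers and m.live_screen_failures / m.resume_passers > 0.50:
        signs.append("over 50% of resume-screen passers fail live questions")
    if m.time_to_hire_days > 90:
        signs.append("time-to-hire exceeds 90 days")
    if m.ai_hires and m.ai_hires_from_referrals / m.ai_hires < 0.30:
        signs.append("referrals produce under 30% of AI hires")
    return signs


# Hypothetical numbers, for illustration only.
cycle = HiringMetrics(
    resume_passers=40,
    live_screen_failures=26,
    time_to_hire_days=120,
    ai_hires=10,
    ai_hires_from_referrals=2,
)
for sign in warning_signs(cycle):
    print("-", sign)
```

With these made-up inputs all three checks fire; a cycle with fewer failures, a shorter time-to-hire, and a stronger referral share would return an empty list.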
