AI agents become valuable the moment they stop behaving like passive assistants and start interacting with real systems. However, this shift from outputs to actions introduces a governance dilemma: the same autonomy and adaptability that drive efficiency also create new security risks.

At that point, access control becomes a core design decision. Traditional permission models were built for human users and fixed applications. They are much less reliable when an agent can combine retrieval, reasoning, and action across multiple systems in a single flow.

For founders and CTOs, the key question is how much authority an agent should have in production. What can it see, which tools can it use, what actions can it take, and when does it need human approval? Those boundaries determine whether an agent behaves like a controlled system or an over-permissioned liability.

What AI Agent Access Control Actually Means

In the context of agentic systems, access control is the comprehensive framework that determines what an agent can access, which systems it can use, and exactly what operations it is permitted to perform under specific runtime conditions. It is a discipline that extends far beyond simple user logins or static API permissions. Once agents can call tools and move workflows forward, access control becomes a question of authority.

NIST’s National Cybersecurity Center of Excellence (NCCoE) explicitly frames this challenge as a need to identify, manage, and authorize both the access and the actions taken by AI agents. This requires a clear distinction between the agent’s identity and the human identity on whose behalf it may be acting.

In practice, effective agent access control sets boundaries around four things:

- Resource Visibility: What data the agent can retrieve.

- Tool Functionality: Which tools and functions it can use.

- Decision Rights: Which decisions or actions it can complete without human approval.

- Auditability: How its actions are logged and reviewed afterward.

That is the difference between giving an agent access and giving it controlled authority.

At Codebridge, our AI agent practice focuses on embedding these boundaries into real-world systems, from identity and tools to runtime policy and audit.

Why Agentic Systems Expose the Limits of Traditional Permissions

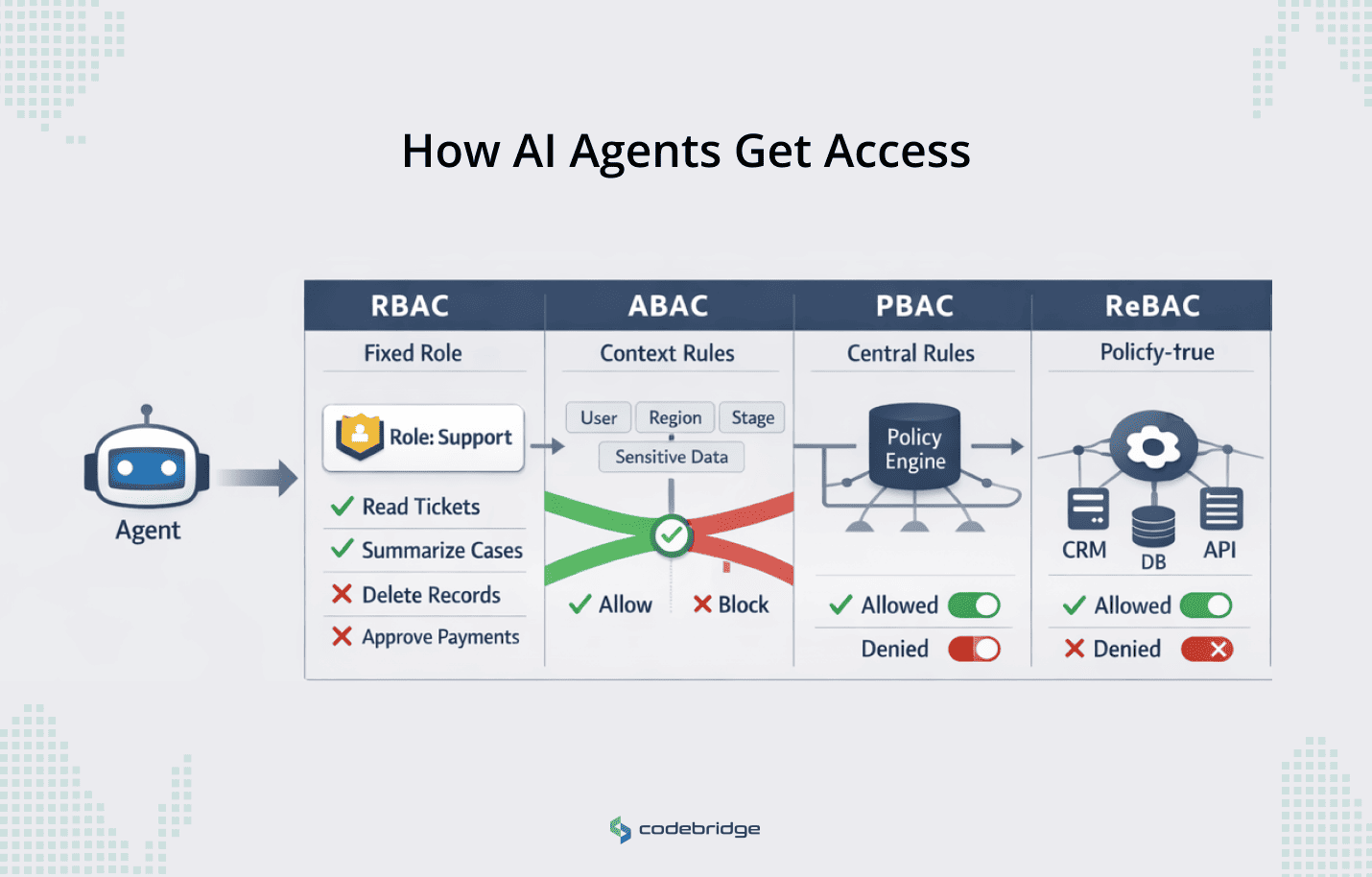

Most enterprises already rely on models like RBAC and ABAC to manage access across users, applications, and services. Those models still matter in agentic systems. The problem is that they are often implemented too coarsely for software that can retrieve data and take multi-step actions across systems with limited human supervision.

AI agents do not make traditional access models irrelevant. They expose where those models become incomplete on their own. A role may define broad responsibility. An attribute may narrow access by context. But neither is enough if the system cannot clearly control delegated authority, restrict tool use, separate read access from action rights, or enforce approvals at the point of execution.

That is why the core issue is not whether RBAC or ABAC still applies. It is whether the surrounding permission architecture is detailed enough for agent behavior in production.

Practical Control Models: Choosing the Right Approach

No single access model is sufficient for agent control in production. The real design question is which framework matches the way the agent actually operates. Some agents have stable responsibilities. Others need permissions that change by record, workflow stage, tenant, or relationship.

When we build AI agents with Codebridge, we combine RBAC, ABAC, PBAC, and ReBAC in a way that matches the agent’s real responsibilities, data domains, and risk profile.

RBAC for Stable, Role-Based Agents

Role-Based Access Control (RBAC) still works when the agent has a clear, durable role. If an internal reporting agent or support assistant performs a narrow set of tasks, role-based permissions are often the simplest way to keep scope understandable. The weakness appears when the role is too broad for the work. An agent may have the right role in general, but still should not be able to access every record, call every tool, or take every action within that role.
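
As a rough illustration, a role-based check can be as small as a lookup table with default deny. The sketch below is a minimal example under stated assumptions: the role names, permission strings, and the `rbac_allows` helper are hypothetical and do not come from any particular product.

```python
from dataclasses import dataclass

# Hypothetical roles and permission strings, for illustration only.
ROLE_PERMISSIONS = {
    "reporting_agent": frozenset({"reports:read", "dashboards:read"}),
    "support_assistant": frozenset({"tickets:read", "tickets:draft_reply"}),
}


@dataclass(frozen=True)
class Agent:
    agent_id: str
    role: str


def rbac_allows(agent: Agent, permission: str) -> bool:
    """Allow only permissions explicitly granted to the agent's role (default deny)."""
    return permission in ROLE_PERMISSIONS.get(agent.role, frozenset())
```

The weakness described above is visible here: the check knows the role, but nothing about which record, tool call, or workflow stage is involved.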

ABAC for Context-Sensitive Decisions

Attribute-Based Access Control (ABAC) becomes more useful when access depends on context. It lets teams define permissions using conditions such as user, record type, geography, workflow stage, or data sensitivity. That makes it a stronger fit for agents working across regulated or multi-step workflows. An agent may be allowed to summarize a record during review, for a specific user, in a specific region, but not export it or modify it.
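
The summarize-but-not-export example can be sketched as a single attribute check. This is an illustrative sketch only: the attribute names (`workflow_stage`, `region`, `acting_for`, `assigned_reviewer`) are assumptions chosen to mirror the example, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    action: str          # e.g. "summarize", "export", "modify"
    workflow_stage: str  # e.g. "review"
    region: str          # region the request originates from
    acting_for: str      # user the agent is working for


@dataclass(frozen=True)
class RecordAttributes:
    region: str
    assigned_reviewer: str


def abac_allows(request: AccessRequest, record: RecordAttributes) -> bool:
    """Permit only summarization, during review, for the assigned reviewer, in-region."""
    return (
        request.action == "summarize"
        and request.workflow_stage == "review"
        and request.region == record.region
        and request.acting_for == record.assigned_reviewer
    )
```

Changing any single attribute, such as the action from `"summarize"` to `"export"`, flips the decision to deny.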

PBAC for Centralized Policy Enforcement

Policy-Based Access Control (PBAC) matters when those rules need to be enforced centrally and updated without rewriting application logic. This is often the practical answer when teams need policy to change at runtime based on risk, environment, or approval state. In agent systems, that flexibility matters because access decisions often depend on more than identity alone.
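
One way to picture this is a small central decision point that application code queries instead of hard-coding rules. The `PolicyEngine` class, the `refund.issue` action name, and the amount threshold below are all hypothetical; production systems would more likely use a dedicated policy engine, so treat this purely as a sketch of the idea.

```python
from typing import Any, Callable, Dict

Context = Dict[str, Any]
Rule = Callable[[Context], bool]


class PolicyEngine:
    """Central decision point: application code asks, policy decides."""

    def __init__(self) -> None:
        self._rules: Dict[str, Rule] = {}

    def set_rule(self, action: str, rule: Rule) -> None:
        # Replacing a rule changes behavior at runtime without touching callers.
        self._rules[action] = rule

    def is_allowed(self, action: str, context: Context) -> bool:
        rule = self._rules.get(action)
        if rule is None:
            return False  # default deny: no policy means no access
        return bool(rule(context))


engine = PolicyEngine()
# Hypothetical rule: small refunds are allowed, anything else is denied.
engine.set_rule("refund.issue", lambda ctx: "amount" in ctx and ctx["amount"] <= 100)
```

Tightening the policy later is a single `set_rule` call; the code paths that call `is_allowed` stay unchanged.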

ReBAC for Record- and Relationship-Driven Systems

Relationship-Based Access Control (ReBAC) defines permissions based on the graph of relationships between subjects and resources. This is essential for B2B SaaS and regulated workflows where authorization depends on specific associations: "This agent can access this file because it is assigned to this specific matter."

Google’s Zanzibar is the most notable implementation of this model at scale. While ReBAC is highly flexible, it can introduce performance overhead due to the need for multiple relationship lookups per request.
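
The matter-assignment example can be sketched with relationship tuples. This is a toy in-memory model, not Zanzibar's API: the tuple layout, relation names, and identifiers are assumptions made for illustration.

```python
from typing import Set, Tuple

# Each tuple reads "<subject> has <relation> to <object>".
RelationTuple = Tuple[str, str, str]  # (subject, relation, object)

# Hypothetical relationship data, for illustration only.
TUPLES: Set[RelationTuple] = {
    ("agent:intake-bot", "assigned_to", "matter:42"),
    ("file:contract.pdf", "belongs_to", "matter:42"),
    ("file:payroll.xlsx", "belongs_to", "matter:77"),
}


def can_access_file(agent: str, file: str, tuples: Set[RelationTuple]) -> bool:
    """An agent may access a file only if it is assigned to the file's matter."""
    # Lookup 1: which matters does the file belong to?
    matters = {obj for (subj, rel, obj) in tuples if subj == file and rel == "belongs_to"}
    # Lookup 2: is the agent assigned to any of those matters?
    return any((agent, "assigned_to", matter) in tuples for matter in matters)
```

Even this small check needs two lookups, which hints at where the overhead comes from as relationship chains get longer.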

The important point is that these models are not mutually exclusive. Most production agent systems need a combination. RBAC can define broad responsibility. ABAC can narrow scope by context. PBAC can centralize and enforce policy. ReBAC can handle record- and relationship-level access.

The right model is one part of a control stack that matches how the agent actually behaves.

The Boundary Stack: An Architectural Framework

RBAC, ABAC, PBAC, and ReBAC help model access, but they do not by themselves govern agent behavior in production across identity, delegation, tools, actions, approvals, and auditability.

In practice, production-grade agent control is a chain of boundaries that limits what the agent can do, under whose authority, in which systems, and with what evidence afterward. The access model gives you the logic. The boundary stack turns that logic into an operating control layer.

A practical boundary stack for AI agents should include eight layers:

1. Agent Identity Boundary

Every production agent must have its own distinct identity. Without this, teams cannot reliably assign responsibility or investigate misuse later. If the system cannot clearly tell which agent acted, every downstream control becomes weaker.

2. Delegation Boundary

The system must define whether the agent acts as itself, on behalf of a user, or under some other delegated authority. This is one of the most important boundaries in agent design. Agents should never silently inherit broad human access by default.
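
One common way to avoid silent inheritance is to compute effective permissions as an intersection rather than a union. The sketch below assumes hypothetical scope strings and a hypothetical `Delegation` record; it illustrates the principle and is not a reference to any specific identity standard.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Delegation:
    user_id: str
    user_scopes: FrozenSet[str]       # what the human is allowed to do
    delegated_scopes: FrozenSet[str]  # what the human explicitly handed to the agent


def effective_scopes(
    agent_scopes: FrozenSet[str], delegation: Optional[Delegation]
) -> FrozenSet[str]:
    """Compute the scopes an agent may use for one request."""
    if delegation is None:
        # Acting as itself: only the agent's own scopes apply.
        return agent_scopes
    # Acting for a user: never more than the agent, the user,
    # or the explicit grant allows.
    return agent_scopes & delegation.user_scopes & delegation.delegated_scopes
```

With this shape, a user who holds a broad scope such as a refund permission does not pass it to the agent unless both the agent's own scopes and the explicit grant include it.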

3. Resource Access Boundary

An agent should only be able to retrieve the data, records, tenants, and systems that are in scope for the task. This is where fine-grained authorization matters most. Broad access at the data layer quickly turns a useful agent into an overexposed one.

4. Tool Boundary

The agent should only be able to call the tools and functions it really needs. Tool access should be narrowed as aggressively as possible. For example, a mail-reading tool should not have the ability to send messages or modify settings.
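
A tool boundary is often implemented as an allowlist in front of the tool registry. The sketch below is a minimal example with assumed names: `ToolGateway`, the `mail.read` and `mail.send` tool names, and the `inbox-summarizer` agent are all hypothetical.

```python
from typing import Any, Callable, Dict, FrozenSet


class ToolNotAllowed(PermissionError):
    """Raised when an agent calls a tool outside its allowlist."""


class ToolGateway:
    def __init__(
        self,
        tools: Dict[str, Callable[..., Any]],
        allowlists: Dict[str, FrozenSet[str]],
    ) -> None:
        self._tools = tools
        self._allowlists = allowlists

    def is_allowed(self, agent_id: str, tool_name: str) -> bool:
        allowed = self._allowlists.get(agent_id, frozenset())
        return tool_name in allowed and tool_name in self._tools

    def call(self, agent_id: str, tool_name: str, **kwargs: Any) -> Any:
        if not self.is_allowed(agent_id, tool_name):
            raise ToolNotAllowed(f"{agent_id} may not call {tool_name}")
        return self._tools[tool_name](**kwargs)


# Hypothetical tools: reading and sending are registered as separate functions.
def mail_read(folder: str) -> list:
    return [f"message in {folder}"]


def mail_send(to: str, body: str) -> str:
    return f"sent to {to}"


gateway = ToolGateway(
    tools={"mail.read": mail_read, "mail.send": mail_send},
    allowlists={"inbox-summarizer": frozenset({"mail.read"})},
)
```

Registering read and send as separate tools is the design choice that matters: the allowlist can then grant one without the other.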

5. Action Boundary

Access to a system is not the same as permission to act within it. Teams should separate rights for reading, summarizing, drafting, recommending, updating, approving, deleting, or executing. Generic “system access” is too coarse for agent behavior in production.
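
One way to make this separation concrete is to model each action type as its own flag and grant them per system. The agent name, system names, and grants below are hypothetical; the sketch uses Python's standard `enum.Flag`.

```python
from enum import Flag, auto


class Action(Flag):
    READ = auto()
    SUMMARIZE = auto()
    DRAFT = auto()
    UPDATE = auto()
    APPROVE = auto()
    DELETE = auto()
    EXECUTE = auto()


# Hypothetical grants keyed by (agent, system); anything not listed is denied.
GRANTS = {
    ("support-assistant", "ticketing"): Action.READ | Action.SUMMARIZE | Action.DRAFT,
    ("support-assistant", "billing"): Action.READ,
}


def may_perform(agent_id: str, system: str, action: Action) -> bool:
    """Check a single action against the agent's grant for that system."""
    granted = GRANTS.get((agent_id, system), Action(0))
    return action in granted
```

Here the same agent can draft replies in ticketing but only read in billing, which a single "system access" switch could not express.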

6. Approval Boundary

High-impact actions, such as financial transactions or code deployments, should require explicit human-in-the-loop confirmation.
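
A simple approval gate can be sketched as a wrapper that refuses to run high-impact operations until an approver is recorded. The action names, the `Outcome` type, and the `approved_by` parameter are assumptions for illustration; a real system would also verify who the approver is.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical set of action names treated as high impact.
HIGH_IMPACT_ACTIONS = frozenset({"payment.execute", "deploy.production", "record.delete"})


@dataclass(frozen=True)
class Outcome:
    status: str                   # "executed" or "pending_approval"
    result: Optional[str] = None


def run_action(
    action: str,
    operation: Callable[[], str],
    approved_by: Optional[str] = None,
) -> Outcome:
    """Execute low-impact actions directly; hold high-impact ones until a human approves."""
    if action in HIGH_IMPACT_ACTIONS and not approved_by:
        # The operation is never invoked without an approver.
        return Outcome(status="pending_approval")
    return Outcome(status="executed", result=operation())
```

The key property is that the gate sits at the point of execution: the operation itself is not called until approval exists.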

7. Runtime Policy Boundary

Agent permissions should not remain static if the surrounding conditions change. Authorization may need to be tightened based on workflow state, sensitivity level, environment, anomaly signals, or the source of the instruction.
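
As a sketch of what runtime tightening might look like, the function below downgrades an otherwise-permitted action based on context. The field names, the three-way result, and the numeric thresholds are all hypothetical; real thresholds would need tuning against actual signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuntimeContext:
    environment: str         # e.g. "staging" or "production"
    sensitivity: str         # e.g. "low" or "high"
    anomaly_score: float     # 0.0 (normal) to 1.0 (highly unusual); hypothetical signal
    instruction_source: str  # e.g. "user", "system", "retrieved_content"


def runtime_decision(ctx: RuntimeContext) -> str:
    """Return "allow", "require_approval", or "deny" for an otherwise-permitted action."""
    # Instructions found inside retrieved documents are not treated as authority.
    if ctx.instruction_source == "retrieved_content":
        return "deny"
    if ctx.anomaly_score >= 0.8:
        return "deny"
    if ctx.environment == "production" and ctx.sensitivity == "high":
        return "require_approval"
    if ctx.anomaly_score >= 0.5:
        return "require_approval"
    return "allow"
```

The same agent with the same static permissions gets three different answers depending on where the instruction came from and what it touches.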

8. Audit Boundary

Every meaningful access or action should leave a trace that shows which agent acted, what authority it used, what resource or tool it touched, what policy allowed it, and whether approval was required. If that chain cannot be reconstructed later, the system is not truly governable.
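
The fields listed above map naturally onto a structured audit record. The following is a minimal sketch with an assumed schema: the field names and the `audit_line` helper are hypothetical, and a production log would also need tamper resistance and retention controls.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    agent_id: str            # which agent acted
    authority: str           # e.g. "self" or "delegated:user-17"
    target: str              # resource or tool that was touched
    action: str
    policy_id: str           # which policy or rule allowed the action
    approval_required: bool
    approved_by: Optional[str]


def audit_line(
    agent_id: str,
    authority: str,
    target: str,
    action: str,
    policy_id: str,
    approval_required: bool,
    approved_by: Optional[str] = None,
) -> str:
    """Serialize one audit record as a single JSON line."""
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        agent_id=agent_id,
        authority=authority,
        target=target,
        action=action,
        policy_id=policy_id,
        approval_required=approval_required,
        approved_by=approved_by,
    )
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one self-contained line per action keeps the chain reconstructable even if the surrounding application state is gone.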

AI agent access control is a layered control system. RBAC, ABAC, PBAC, and ReBAC help shape the permission logic, but the boundary stack is what turns that logic into operating constraints.

Where Agent Access Control Fails in Real Deployments

Broad delegated authority

In March 2026, Meta disclosed a SEV1 security incident after an internal AI agent gave flawed technical guidance that led to unauthorized employee access to sensitive company and user data for more than two hours.

Meta said no user data was mishandled, but the incident is a strong example of what happens when agent advice or action paths operate too close to privileged workflows without clear authority boundaries.

Missing approval boundaries

In Australia, Deloitte partially refunded a government report worth about A$440,000 after the document was found to contain apparent AI-generated errors and fabricated legal citations. The problem was not only model accuracy. It was that weak review and approval controls allowed low-confidence output into a high-trust deliverable.

Unchecked automated decision rights

In the US, iTutorGroup agreed to pay $365,000 to settle an EEOC lawsuit alleging its hiring software automatically rejected older applicants, screening out women over 55 and men over 60. That is a boundary failure too. A high-impact decision was effectively delegated to automation without adequate control over what the system was allowed to decide.

Despite different contexts, all three cases show the same failure pattern: unclear authority turned automation into operational risk.

Executive Checklist: Is Your Agent Actually Governable?

Before an agent goes live, leadership should be able to answer these questions without hesitation:

- Does every production agent have its own distinct identity?

- Can we explain whether the agent is acting for itself or under delegated human authority?

- Are its permissions limited to only the tools and functions needed for the task?

- Are read, write, approve, and execute rights separated clearly?

- Are high-impact actions such as payments, deletions, or deployments blocked until a human reviews them?

- Can authorization change based on workflow stage, data sensitivity, or operating context?

- Can we see which policy or rule allowed a specific action?

- Can we reconstruct which agent accessed which tool, system, or record?

- Have unused tools, test plugins, and excess permissions been removed from production?

- Would this design hold up in an internal audit or a customer security review?

If those answers are unclear or depend on assumptions, the system is probably not ready for production. Governable agents are defined by how clearly their authority can be limited and explained.

Conclusion

AI agent access control is about deciding where agent authority starts and where it stops. The safest and most valuable agentic systems are not the ones with the most advanced reasoning, but the ones with well-defined identities, narrow permissions, controlled actions, and visible approval paths.
