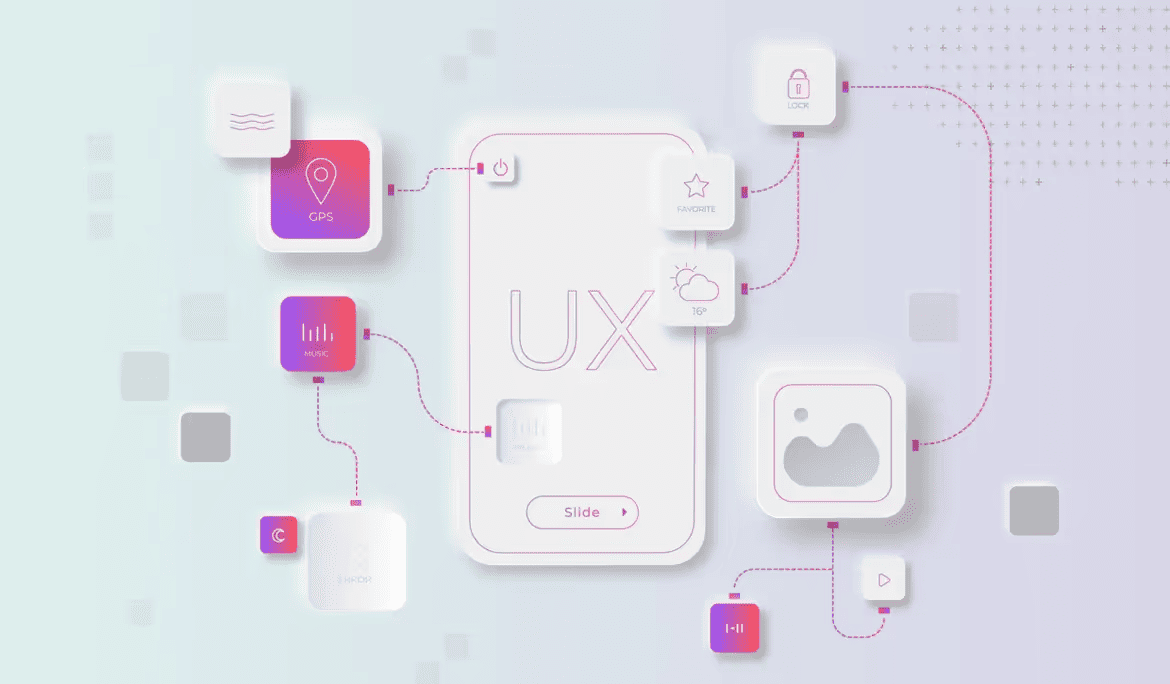

Testing the design and its usability is a delicate process. To ensure you don’t miss any crucial tasks or information, follow these six simple steps for a smooth and effective usability testing session.

1. Welcome the Participant

When the participant arrives at an in-person or remote session, start with a warm welcome. Introduce yourself and express your gratitude for their participation. Be mindful of your language; avoid using the word “test,” as it can make participants feel like they are being evaluated. Remember, the goal is to test the design, not the user. A welcoming atmosphere sets the stage for a productive session.

2. Inform the Participant About Observers and Recordings

Transparency is key. Tell participants about any observers and the recording process during the recruitment stage, so they can make an informed decision about whether to take part. Repeat this information at the beginning of the session and confirm they are comfortable with the setup.

3. Ask the Participant to Sign the Consent Form

Consent is crucial. For remote sessions, provide a link to an online consent form via the chat feature. For in-person sessions, participants typically sign a paper version, but an electronic version can also be used if preferred. Encourage participants to ask questions before they sign, and make sure they don’t feel rushed during this process.

4. Give Tasks One at a Time

Whether the session is remote or in-person, deliver tasks one at a time, for example through the chat in a remote session or on printed slips of paper in person. A written version of each task, especially one that involves a complex scenario, lets participants refer back to the details as needed while they work.

5. Ask Follow-up Questions

After the participant attempts each task, ask prepared follow-up questions to gather additional insights. Consider questions like:

- “What did you think about doing this activity on the website you just used?”

- “Was there anything easy or difficult about doing this activity?”

Start with broad, open-ended questions to encourage detailed responses, then move to more specific questions to pinpoint particular issues or successes within the interface.

6. Thank the Participant and End the Session

Conclude the session by thanking the participant for their time and effort. Acknowledge their contributions and explain how their feedback will help improve the design. This positive reinforcement leaves participants feeling valued and appreciated, encouraging their future participation.

Throughout the session, remember that you are testing the design, not the user. By following these steps, you can moderate usability tests effectively, gathering comprehensive feedback on your design while maintaining a positive experience for your participants.

FAQ

What is usability test moderation?

Usability test moderation is the process of guiding participants through a test session, observing their interactions, and collecting insights without influencing their behavior. The goal is to understand how real users experience a product.

What preparation is needed before moderating a usability test?

Preparation includes defining test objectives, selecting participants, creating test scenarios, preparing tasks, and ensuring the testing environment and tools are ready.

How should a moderator interact with participants during a test?

Moderators should remain neutral, ask open-ended questions, and avoid leading participants. Encouraging users to think aloud helps reveal thought processes and pain points.

What common mistakes should moderators avoid?

Common mistakes include giving hints, interrupting users, explaining the interface, or reacting to participant actions. These behaviors can bias results.

How should observations and feedback be captured during testing?

Notes, recordings, and observation templates help capture user behavior, comments, and issues. Documenting insights immediately ensures accuracy.

What happens after the usability test is complete?

After testing, findings are analyzed, patterns are identified, and insights are translated into actionable recommendations. Sharing results with stakeholders supports informed design decisions.
